Is NVIDIA 4090 24GB x2 a Good Investment for AI Startups?

Introduction

The world of AI is abuzz with excitement about Large Language Models (LLMs) and their capabilities. LLM-powered assistants like ChatGPT and Bard can generate text, translate languages, write creative content, and answer questions informatively. But running these models locally is computationally demanding. This is where a powerful GPU setup like the NVIDIA 4090 24GB x2 (two RTX 4090 cards with 24 GB of VRAM each) comes in!

This article looks at how the NVIDIA 4090 24GB x2 handles different LLMs, specifically the Llama3 series. We'll see how it performs in terms of token generation and prompt processing speed, and whether it's a smart investment for AI startups looking to build and deploy their own LLM applications.

NVIDIA 4090 24GB x2: A Powerhouse for AI

The NVIDIA 4090 24GB x2 is a beast of a setup: two RTX 4090 GPUs with 24 GB of VRAM each, for 48 GB in total, backed by plenty of computational power. It's built to handle demanding tasks, including AI model training and inference, which makes it a strong contender for AI startups. But before you invest, let's dive into the numbers and see how it performs with specific LLM models.

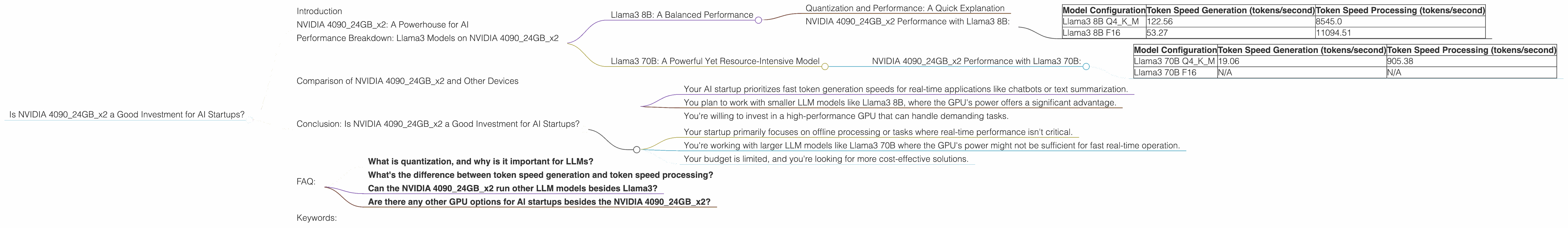

Performance Breakdown: Llama3 Models on NVIDIA 4090 24GB x2

We'll focus on the Llama3 family of LLM models, a popular choice for researchers and developers. These models are known for their impressive performance and ability to be fine-tuned for specific use cases. We'll look at two key metrics:

- Token Speed Generation: This measures how quickly the model generates text (measured in tokens per second), effectively reflecting the model's speed in completing tasks like text translation or question answering.

- Token Speed Processing: This metric measures how quickly the model ingests input (prompt) tokens, also in tokens per second, which is crucial for tasks like understanding and analyzing large chunks of text.

Important Note: We're only analyzing the performance of 8B and 70B Llama3 models, as data for other model sizes isn't available at this time.

Llama3 8B: A Balanced Performance

Quantization and Performance: A Quick Explanation

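Quantization is a technique that reduces the size of a model's weights by storing them in lower-precision data types, for example converting 32-bit or 16-bit floating-point values to 8-bit or 4-bit integers. This saves valuable memory and, because less data has to be read for every generated token, usually speeds up generation. Think of it like compressing images to save space: with quantization, you sacrifice a small amount of accuracy for a significant performance boost. In the results below, F16 refers to 16-bit floating-point weights and Q4 to a 4-bit quantized format.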
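To make the memory savings concrete, here is a rough back-of-the-envelope sketch. It estimates weight size only (the KV cache and activations need additional memory), and the 4.8 bits per weight used for Q4 is an assumed approximation, since 4-bit formats store scaling metadata alongside the weights. The function and constant names are illustrative, not part of any library.

```python
# Rough estimate of weight memory at different precisions.
# Weights only: KV cache and activations are NOT included.

TOTAL_VRAM_GB = 2 * 24  # two cards with 24 GB each

# Bits per weight: 16 for F16 is exact; 4.8 for Q4 is an ASSUMED
# approximation (4-bit formats also store per-block scales).
BITS_PER_WEIGHT = {"F16": 16.0, "Q4": 4.8}


def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight size in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


def fits_in_vram(params_billion: float, fmt: str) -> bool:
    """True if the weights alone fit in the combined VRAM."""
    return weight_memory_gb(params_billion, BITS_PER_WEIGHT[fmt]) <= TOTAL_VRAM_GB


for params in (8, 70):
    for fmt, bits in BITS_PER_WEIGHT.items():
        size = weight_memory_gb(params, bits)
        verdict = "fits" if fits_in_vram(params, fmt) else "does not fit"
        print(f"{params}B {fmt}: ~{size:.1f} GB of weights, {verdict} in {TOTAL_VRAM_GB} GB")
```

Under these assumptions, a 70B model at F16 needs about 140 GB for weights alone, while a 4-bit version lands in the low 40s of GB, which is why quantization matters so much on a 48 GB setup.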

NVIDIA 4090 24GB x2 Performance with Llama3 8B:

| Model Configuration | Token Speed Generation (tokens/second) | Token Speed Processing (tokens/second) |
|---|---|---|
| Llama3 8B Q4_K_M | 122.56 | 8545.0 |
| Llama3 8B F16 | 53.27 | 11094.51 |

Key Observations:

- Higher Generation Speed with Q4: The 8B Llama3 model achieved significantly higher token generation speeds with Q4 quantization (122.56 tokens/second) compared to F16 (53.27 tokens/second). This suggests that Q4, despite sacrificing some accuracy, offers a significant advantage in terms of generating text quickly, which is crucial for real-time applications.

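- Faster Processing with F16: While F16 was slower at generation, it processed input faster (11094.51 tokens/second) than Q4 (8545.0 tokens/second). A likely explanation is that the two phases have different bottlenecks: generation is typically limited by how quickly the weights can be read from memory, so the smaller Q4 weights help, whereas prompt processing is typically compute-bound, where quantized weights add dequantization overhead. In short, F16 favors processing throughput, whereas Q4 favors text generation speed.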
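The size of these gaps is easy to quantify. The short sketch below computes the relative speedups directly from the table figures; the dictionary layout and the `speedup` helper are illustrative choices, not part of any benchmark tool.

```python
# Figures copied from the Llama3 8B table above (tokens/second).
results = {
    "Q4": {"generation": 122.56, "processing": 8545.0},
    "F16": {"generation": 53.27, "processing": 11094.51},
}


def speedup(metric: str, faster: str, slower: str) -> float:
    """How many times faster `faster` is than `slower` on `metric`."""
    return results[faster][metric] / results[slower][metric]


gen_gain = speedup("generation", "Q4", "F16")
proc_gain = speedup("processing", "F16", "Q4")

print(f"Q4 generates {gen_gain:.2f}x faster than F16")
print(f"F16 processes prompts {proc_gain:.2f}x faster than Q4")
```

Q4's generation advantage (about 2.3x) is considerably larger than F16's processing advantage (about 1.3x), which is worth keeping in mind for workloads dominated by output length.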

The Bottom Line: The NVIDIA 4090 24GB x2 provides solid performance for the Llama3 8B model, offering a good balance between generation and processing speeds. The choice between Q4 and F16 depends on your application's specific needs. If rapid text generation is paramount, Q4 is the way to go. If prompt processing throughput or full-precision weights are the primary concern, F16 might be the better option.

Llama3 70B: A Powerful Yet Resource-Intensive Model

NVIDIA 4090 24GB x2 Performance with Llama3 70B:

| Model Configuration | Token Speed Generation (tokens/second) | Token Speed Processing (tokens/second) |
|---|---|---|
| Llama3 70B Q4_K_M | 19.06 | 905.38 |
| Llama3 70B F16 | N/A | N/A |

Key Observations:

- Limited Data Availability: The available data has no F16 results for the Llama3 70B model. A likely reason is memory: at 2 bytes per parameter, 70 billion F16 weights need roughly 140 GB, far more than the 48 GB of VRAM across the two cards. That leaves Q4 as the only 70B configuration we can evaluate.

- Significantly Slower Speed: The Llama3 70B model, while powerful, is much slower than the 8B model. Its Q4 token generation speed is 19.06 tokens/second, roughly 6.4 times slower than the 8B model's 122.56 tokens/second, and its processing speed (905.38 tokens/second) is roughly 9.4 times slower than the 8B model's 8545.0. This is primarily due to the 70B model's larger size: every token requires reading and computing over far more weights.

The Bottom Line: The NVIDIA 4090 24GB x2 is capable of running the Llama3 70B model with Q4 quantization, but its performance might not be ideal for real-time applications requiring fast responses. This model might be better suited to offline processing tasks or scenarios where real-time performance isn't a critical requirement.
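To see what these speeds mean for a user waiting on a response, the sketch below estimates end-to-end response time from the measured rates. It is a simplification: it assumes a single request with no batching and speeds that stay constant regardless of context length, and the 1000-token prompt and 300-token reply are a hypothetical workload chosen for illustration.

```python
def response_time_s(prompt_tokens: int, output_tokens: int,
                    processing_tps: float, generation_tps: float) -> float:
    """Estimated seconds to process the prompt and generate the reply."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Measured Q4 rates from the tables in this article (tokens/second).
models = {
    "Llama3 8B Q4": {"processing_tps": 8545.0, "generation_tps": 122.56},
    "Llama3 70B Q4": {"processing_tps": 905.38, "generation_tps": 19.06},
}

# Hypothetical workload: 1000-token prompt, 300-token reply.
PROMPT_TOKENS, OUTPUT_TOKENS = 1000, 300

estimates = {
    name: response_time_s(PROMPT_TOKENS, OUTPUT_TOKENS, **rates)
    for name, rates in models.items()
}

for name, seconds in estimates.items():
    print(f"{name}: ~{seconds:.1f} s per response")
```

For this workload the estimate comes out at a few seconds for the 8B model and well over ten seconds for the 70B model. Streaming tokens to the user as they are generated can soften the perceived wait, but the total time stays the same.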

Comparison of NVIDIA 4090 24GB x2 and Other Devices

While the NVIDIA 4090 24GB x2 handles LLMs well, it would be helpful to compare its performance with other popular options. However, because this article focuses solely on this specific GPU configuration, we can't provide comparisons with other devices here.

Conclusion: Is NVIDIA 4090 24GB x2 a Good Investment for AI Startups?

The NVIDIA 4090 24GB x2 offers strong performance for running Llama3 models, particularly the 8B model with Q4 quantization. If your startup focuses on applications like real-time chatbots or text generation, it could be a smart investment. However, remember that two RTX 4090 cards come at a high price, so you need to carefully consider whether the benefits outweigh the cost.

Here's a quick breakdown to help you decide:

Consider the NVIDIA 4090 24GB x2 if:

- Your AI startup prioritizes fast token generation speeds for real-time applications like chatbots or text summarization.

- You plan to work with smaller LLM models like Llama3 8B, where the GPU's power offers a significant advantage.

- You're willing to invest in a high-performance GPU that can handle demanding tasks.

Consider alternatives if:

- Your startup primarily focuses on offline processing or tasks where real-time performance isn't critical.

- You're working with larger LLM models like Llama3 70B where the GPU's power might not be sufficient for fast real-time operation.

- Your budget is limited, and you're looking for more cost-effective solutions.

FAQ:

- What is quantization, and why is it important for LLMs?

Quantization is a technique that takes a model's weights, which are typically represented as 32-bit floating-point numbers, and converts them to smaller data types like 8-bit integers. This reduces the model's size and memory footprint, leading to faster processing and inference speeds. Think of it like compressing an image to save disk space – you sacrifice a small amount of quality for a significant size reduction.
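As a toy illustration of the idea, the sketch below applies simple symmetric 8-bit quantization to a handful of made-up weights and measures the rounding error. This is a deliberately minimal scheme with one scale for the whole list; the 4-bit formats used in practice for LLMs are more elaborate, and the function names here are illustrative.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the integers."""
    return [q * scale for q in quantized]


# Made-up example weights, for illustration only.
weights = [0.12, -0.5, 0.33, 0.9, -0.07]

quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("quantized:", quantized)
print("restored: ", [round(r, 4) for r in restored])
print(f"largest rounding error: {max_error:.5f}")
```

Each weight now needs 1 byte instead of 4, and the largest rounding error is at most half of the scale. That small loss of precision is the "quality" given up in exchange for the smaller footprint.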

- What's the difference between token speed generation and token speed processing?

Token speed generation refers to the model's ability to produce text quickly, measured in tokens per second. This is crucial for real-time applications like chatbots or text translation.

Token speed processing measures how quickly the model can process the input tokens, essential for understanding and analyzing large amounts of data. This is important for tasks like document summarization or sentiment analysis.

- Can the NVIDIA 4090 24GB x2 run other LLM models besides Llama3?

Yes, but it's essential to check the performance data for your desired model. The NVIDIA 4090 24GB x2 is capable of running a wide range of LLMs, but performance varies with the model's size and quantization, and the weights must fit within its 48 GB of total VRAM.

- Are there any other GPU options for AI startups besides the NVIDIA 4090 24GB x2?

Yes, there are several other GPUs to consider, including the NVIDIA RTX 4080, RTX 3090, and AMD Radeon VII, as well as data-center cards such as the NVIDIA A100 and H100, which offer more memory per card at a much higher price. Among consumer-grade options, the NVIDIA 4090 24GB x2 is one of the most capable, with 48 GB of total VRAM and strong computational performance.

Keywords:

NVIDIA 4090 24GB x2, Llama3, AI startups, LLM, performance, token speed generation, token speed processing, quantization, 8B, 70B, F16, Q4, GPU, AI, deep learning, machine learning, natural language processing, conversational AI, chatbot, text generation, text translation, model inference, model training, performance comparison, cost-effective, real-time, offline processing.