Is NVIDIA 4090 24GB Powerful Enough for Llama3 8B?

Introduction

The world of large language models (LLMs) is exploding, with new models and applications emerging daily. While cloud-based LLMs like ChatGPT and Bard are widely popular, running LLMs locally offers greater control, privacy, and flexibility. But the question remains: can your hardware handle the beast?

This article examines how the NVIDIA 4090_24GB GPU performs when running Meta's Llama3 8B model, a language model known for its strong text generation capabilities. We'll look at its token generation speed in two quantization formats, analyze the results, and provide practical recommendations to help you decide whether this pairing is the right fit for your local LLM work.

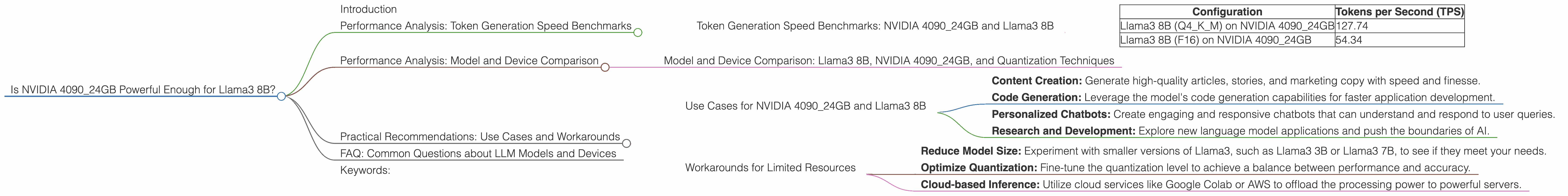

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: NVIDIA 4090_24GB and Llama3 8B

Our analysis focuses on one crucial metric: tokens per second (TPS), the number of tokens the LLM generates each second. A token is a word or a piece of a word, so TPS is related to words per second but not identical to it. Higher TPS means faster response times and a smoother user experience. We tested the NVIDIA 4090_24GB with Llama3 8B in two popular formats:

- Q4_K_M: This format quantizes the model's weights to roughly 4 bits each, achieving significant memory savings with minimal accuracy loss.

- F16: This format uses half-precision floating-point (16-bit) numbers, striking a balance between accuracy and performance.

| Configuration | Tokens per Second (TPS) |
|---|---|
| Llama3 8B (Q4_K_M) on NVIDIA 4090_24GB | 127.74 |
| Llama3 8B (F16) on NVIDIA 4090_24GB | 54.34 |

As you can see, the NVIDIA 4090_24GB delivers impressive performance with Llama3 8B, particularly in the Q4_K_M format, which generates tokens about 2.35 times faster than F16 (127.74 vs. 54.34 TPS). F16 is slower largely because each weight takes about four times as many bits, so more data has to be read from GPU memory for every generated token. In exchange, F16 avoids the small accuracy loss that quantization introduces.
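To make these numbers more tangible, here is a minimal sketch that turns the two TPS figures from the table into a speedup ratio and a rough wait time. The 500-token response length is an illustrative assumption, not part of the benchmark, and the estimate ignores the time spent processing the prompt.

```python
# Throughput figures copied from the benchmark table (tokens per second).
TPS = {"Q4_K_M": 127.74, "F16": 54.34}


def seconds_for(n_tokens: int, tps: float) -> float:
    """Time to generate n_tokens at a steady rate, ignoring prompt processing."""
    return n_tokens / tps


speedup = TPS["Q4_K_M"] / TPS["F16"]
print(f"Q4_K_M is {speedup:.2f}x faster than F16")  # 2.35x

# 500 tokens is an assumed response length chosen for illustration only.
for name, tps in TPS.items():
    print(f"{name}: {seconds_for(500, tps):.1f} s for 500 tokens")
```

Under these assumptions, a 500-token answer takes roughly 3.9 seconds with Q4_K_M and roughly 9.2 seconds with F16.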

Performance Analysis: Model and Device Comparison

Model and Device Comparison: Llama3 8B, NVIDIA 4090_24GB, and Quantization Techniques

While the performance with Llama3 8B is impressive, comparing this pairing with other models and devices would give you a better understanding of how it stacks up in the world of LLM inference.

It's important to note, however, that data for Llama3 70B or for other devices is not available for this particular study, so the only comparison we can draw is between the two formats benchmarked here.

Practical Recommendations: Use Cases and Workarounds

Use Cases for NVIDIA 4090_24GB and Llama3 8B

Given its performance, the NVIDIA 4090_24GB and Llama3 8B combination is suitable for a wide range of use cases, including:

- Content Creation: Generate high-quality articles, stories, and marketing copy with speed and finesse.

- Code Generation: Leverage the model's code generation capabilities for faster application development.

- Personalized Chatbots: Create engaging and responsive chatbots that can understand and respond to user queries.

- Research and Development: Explore new language model applications and push the boundaries of AI.

Workarounds for Limited Resources

Not everyone has the luxury of an NVIDIA 4090_24GB. If your budget or hardware limitations prevent you from running Llama3 8B at full speed, consider alternative solutions like:

- Reduce Model Size: Experiment with smaller models, such as Llama 3.2 1B or Llama 3.2 3B, to see if they meet your needs.

- Optimize Quantization: Try different quantization levels to find a balance between performance and accuracy.

- Cloud-based Inference: Use cloud services like Google Colab or AWS to offload the computation to remote servers.

FAQ: Common Questions about LLM Models and Devices

What is Quantization?

Quantization is a technique used to reduce the size of LLMs by storing their weights (and, in some schemes, their activations) at lower precision. It maps the original numbers (typically 16-bit or 32-bit floating-point) to a smaller set of values that can be represented in, for example, 4 or 8 bits. This significantly reduces the model's memory footprint, making local deployment more practical.
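The idea can be sketched in a few lines of Python. This is a deliberately simplified symmetric "absmax" scheme for a single block of weights, not the actual Q4_K_M layout; the function names and the sample weights are made up for illustration.

```python
def quantize_block(values, bits=4):
    """Symmetric absmax quantization of one block of floats to signed integers."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit signed codes
    # One shared scale per block; fall back to 1.0 if the block is all zeros.
    scale = max(abs(v) for v in values) / qmax or 1.0
    codes = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return codes, scale


def dequantize_block(codes, scale):
    """Recover approximate floats from the integer codes and the block scale."""
    return [c * scale for c in codes]


# Hypothetical weights, chosen only to show the round trip.
weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.44, 0.02]
codes, scale = quantize_block(weights)
restored = dequantize_block(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print(codes)
print(f"scale={scale:.4f}, max error={max_error:.4f}")
```

Each weight is now a small integer plus a scale shared by the whole block, which is where the memory savings come from. The rounding error per weight is at most half the scale, which is the accuracy cost of the compression.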

Why is Token Generation Speed Important?

Imagine you're having a conversation with a chatbot. The text you type and the text the chatbot writes back are both broken into tokens, each one a word or a piece of a word. The faster the chatbot can process and generate these tokens, the more responsive and engaging the conversation will be. Token generation speed determines how quickly an LLM can deliver its response once it has read your input.
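If you want to measure TPS on your own setup, the principle is simply to count tokens and divide by elapsed time. The sketch below assumes your inference library can stream tokens one at a time; `fake_generate` is a hypothetical stand-in for that call, not a real model API.

```python
import time


def measure_tps(generate, n_tokens: int) -> float:
    """Return tokens per second for a callable that yields tokens one at a time."""
    start = time.perf_counter()
    produced = sum(1 for _ in generate(n_tokens))
    elapsed = time.perf_counter() - start
    return produced / elapsed


def fake_generate(n_tokens: int, delay_s: float = 0.001):
    """Hypothetical stand-in for a model: emits a dummy token every delay_s seconds."""
    for i in range(n_tokens):
        time.sleep(delay_s)
        yield f"tok{i}"


print(f"{measure_tps(fake_generate, 50):.0f} tokens/s")
```

To benchmark a real model, you would replace `fake_generate` with a wrapper around your library's streaming call and average over several runs.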

How does GPU Memory Affect LLM Performance?

LLMs require a significant amount of memory to store their weights and activations during inference. Llama3 8B fits within the 24 GB of the NVIDIA 4090_24GB in both formats tested here. Larger models like Llama3 70B demand more memory than a typical single GPU offers, including this one. If your GPU memory is insufficient, the model might run much more slowly or fail to load at all.
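A back-of-the-envelope estimate shows why. The sketch below multiplies parameter count by bits per weight. It assumes nominal parameter counts (8 billion and 70 billion) and an idealized 4 bits per weight for the quantized case; real quantized files carry some extra overhead, and the context cache and runtime buffers need memory on top of the weights.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


VRAM_GB = 24  # memory on the NVIDIA 4090_24GB

# Assumptions: nominal parameter counts and idealized bits per weight.
models = {"Llama3 8B": 8e9, "Llama3 70B": 70e9}
formats = {"F16": 16, "4-bit": 4}

for model, n_params in models.items():
    for fmt, bits in formats.items():
        size = weight_memory_gb(n_params, bits)
        verdict = "fits" if size < VRAM_GB else "does not fit"
        print(f"{model} {fmt}: {size:.0f} GB of weights, {verdict} in {VRAM_GB} GB")
```

With these assumptions, Llama3 8B needs about 16 GB at F16 and about 4 GB at 4 bits, while Llama3 70B needs about 140 GB and 35 GB respectively, both beyond a single 24 GB card.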

Can I Upgrade My Existing GPU for Better LLM Performance?

Yes, upgrading your GPU is a good way to improve LLM performance. Modern GPUs like the NVIDIA 4090_24GB are designed to handle the demands of LLM inference. You can choose a GPU based on your budget and performance requirements. However, keep in mind that the availability and cost of GPUs can vary depending on market conditions.

Keywords:

NVIDIA 4090_24GB, Llama3 8B, LLM, Large Language Model, Token Generation Speed, Quantization, Q4_K_M, F16, Inference, Performance, GPU, Memory, Content Creation, Code Generation, Chatbot, Research, Development, Use Cases, Workarounds, Local Deployment, Cloud-based Inference, Google Colab, AWS