Is NVIDIA 4090 24GB a Good Investment for AI Startups?

Introduction

The world of artificial intelligence (AI) is buzzing with excitement, driven by the incredible progress of large language models (LLMs). These models, capable of generating human-like text, translating languages, writing different kinds of creative content, and answering your questions in an informative way, are revolutionizing various industries.

For AI startups, developing and deploying these models is crucial for gaining a competitive edge. But with the complexity of LLMs comes the need for powerful hardware to handle the heavy computations. One of the most sought-after GPUs for this task is the NVIDIA 4090 24GB.

This article will delve into the performance of the NVIDIA 4090 24GB for running LLMs, specifically focusing on the Llama 3 model. We'll explore its strengths and limitations, helping you decide if it's the right investment for your AI startup.

NVIDIA 4090 24GB: A Powerhouse for AI

The NVIDIA 4090 24GB (GeForce RTX 4090) is NVIDIA's top-of-the-line consumer graphics card. It pairs 24GB of GDDR6X memory with the Ada Lovelace architecture, which makes it a strong contender for AI workloads. But how well does it hold up for running LLMs? Let's look at the data.

Performance Comparison of NVIDIA 4090 24GB for Llama 3

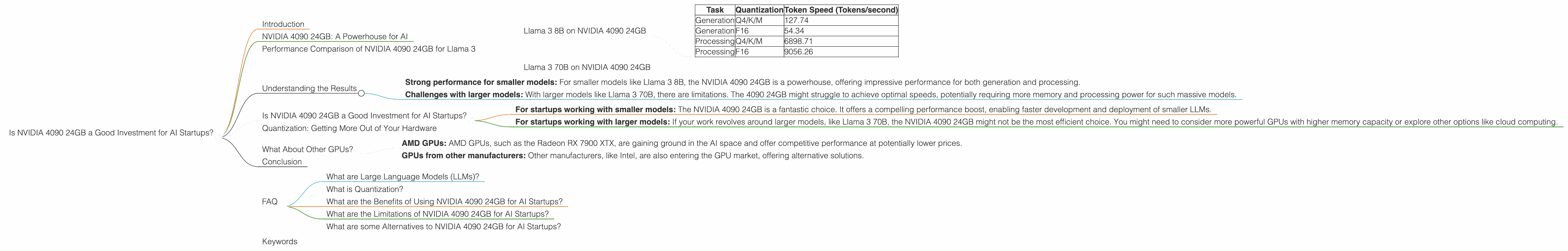

To gauge the performance of the NVIDIA 4090 24GB, we'll compare its token speeds for two different Llama 3 models: Llama 3 8B and Llama 3 70B. These models represent different sizes and complexities, offering a broader understanding of the GPU's capabilities.

These comparisons will highlight the NVIDIA 4090 24GB's strengths and weaknesses for AI startups working with Llama 3.

Llama 3 8B on NVIDIA 4090 24GB

Let's first examine how the NVIDIA 4090 24GB performs with the Llama 3 8B model. We'll look at two speeds, token generation (producing new output tokens) and prompt processing (reading in the input prompt), at two precision levels: Q4_K_M (a 4-bit quantization format used by llama.cpp) and F16 (16-bit floating-point weights without quantization).

| Task | Quantization | Speed (tokens/second) |
|---|---|---|
| Generation | Q4_K_M | 127.74 |
| Generation | F16 | 54.34 |
| Prompt processing | Q4_K_M | 6898.71 |
| Prompt processing | F16 | 9056.26 |

The NVIDIA 4090 24GB handles Llama 3 8B well in both formats. Even the unquantized F16 weights, at roughly 16GB, fit within its 24GB of memory.

- Generation: With the model quantized to Q4_K_M, the NVIDIA 4090 24GB generates 127.74 tokens per second, far faster than anyone can read.

- Processing: Prompt processing is much faster still, at 6898.71 tokens per second with Q4_K_M. At that rate, a prompt of a few thousand tokens is read in under a second.
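To see what these two figures mean for a single request, the sketch below combines them into a rough latency estimate. It is a back-of-the-envelope model, not a benchmark: the 2,000-token prompt and 500-token reply are hypothetical, and it assumes the measured Q4_K_M throughputs hold at every context length while ignoring batching, model loading and sampling overhead.

```python
# Llama 3 8B Q4_K_M throughput on the RTX 4090, taken from the table above.
PROMPT_TOK_S = 6898.71  # prompt processing, tokens/second
GEN_TOK_S = 127.74      # token generation, tokens/second


def estimate_latency_s(prompt_tokens: int, output_tokens: int) -> float:
    """Rough time for one request: read the prompt, then generate the reply.

    Assumes the measured throughputs hold at every context length and ignores
    batching, model loading and sampling overhead.
    """
    return prompt_tokens / PROMPT_TOK_S + output_tokens / GEN_TOK_S


if __name__ == "__main__":
    # Hypothetical request: a 2,000-token prompt and a 500-token reply.
    prompt_s = 2000 / PROMPT_TOK_S
    total_s = estimate_latency_s(2000, 500)
    print(f"total {total_s:.2f} s, of which prompt processing {prompt_s:.2f} s")
```

For this hypothetical request the estimate comes to about 4.2 seconds, of which only about 0.3 seconds is spent reading the prompt, so generation speed dominates the wait.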

F16 Performance: The picture for F16 is mixed. Generation drops to 54.34 tokens per second, less than half the Q4_K_M speed. Generating each token requires reading essentially all of the model's weights from GPU memory, and F16 weights are roughly three times larger than Q4_K_M weights, so memory bandwidth becomes the bottleneck. Prompt processing, on the other hand, is faster in F16 (9056.26 vs. 6898.71 tokens per second). A plausible explanation is that processing a prompt handles many tokens at once, so it is limited more by compute than by memory bandwidth, and F16 weights can be used directly without first being dequantized from 4 bits.
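The bandwidth argument can be checked with simple arithmetic: if every generated token reads all weights once, the card's memory bandwidth divided by the weight size gives an upper bound on generation speed. This sketch assumes NVIDIA's published 1008 GB/s bandwidth for the RTX 4090, about 8.03 billion parameters for Llama 3 8B, and an average of roughly 4.85 bits per weight for Q4_K_M (an approximation, since the format stores per-block scales). It ignores KV-cache reads and kernel overhead.

```python
BANDWIDTH_GB_S = 1008.0  # RTX 4090 peak memory bandwidth (NVIDIA spec)
PARAMS_8B = 8.03e9       # Llama 3 8B parameter count (approximate)

# Average bits per weight. F16 is exact; the Q4_K_M value is an assumed
# approximation, since the format stores per-block scales and mixes block types.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}

# Measured generation speeds from the table above (tokens/second).
MEASURED = {"F16": 54.34, "Q4_K_M": 127.74}


def weight_gb(bits_per_weight: float, params: float = PARAMS_8B) -> float:
    """Size of the model weights in GB (10**9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


def generation_ceiling_tok_s(bits_per_weight: float) -> float:
    """Upper bound on tokens/s if generating each token reads every weight once."""
    return BANDWIDTH_GB_S / weight_gb(bits_per_weight)


if __name__ == "__main__":
    for fmt, bits in BITS_PER_WEIGHT.items():
        ceiling = generation_ceiling_tok_s(bits)
        share = MEASURED[fmt] / ceiling
        print(f"{fmt}: {weight_gb(bits):.1f} GB, ceiling ~{ceiling:.0f} tok/s, "
              f"measured {MEASURED[fmt]} tok/s ({share:.0%} of ceiling)")
```

Under these assumptions, F16 reaches roughly 87% of its ceiling of about 63 tokens per second, which fits the bandwidth-bound picture. Q4_K_M reaches only about 62% of its ceiling of roughly 207 tokens per second, suggesting that other costs, such as dequantization, weigh more once the weights are small.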

Llama 3 70B on NVIDIA 4090 24GB

The picture changes with the larger Llama 3 70B model. Unfortunately, no data is available for the NVIDIA 4090 24GB running Llama 3 70B.

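The absence of data doesn't necessarily mean the NVIDIA 4090 24GB cannot run this model, but it points to the main obstacle: memory. Even with 4-bit quantization, the 70B model's weights need roughly 40GB, well beyond the card's 24GB, and at F16 they need about 140GB. The model would therefore have to be split across several GPUs or partly offloaded to system RAM, which slows it down considerably.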
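The memory arithmetic behind this can be sketched as follows. It counts only the weights (no KV cache or runtime buffers), assumes about 70.6 billion parameters for Llama 3 70B, and uses 8.5 bits per weight for llama.cpp's Q8_0 format and an approximate 4.85 bits per weight for Q4_K_M. The helper names are illustrative.

```python
VRAM_GB = 24.0       # RTX 4090 memory capacity
PARAMS_70B = 70.6e9  # Llama 3 70B parameter count (approximate)

# Average bits per weight. F16 and Q8_0 are exact for their formats;
# the Q4_K_M value is an approximation.
FORMATS = {"F16": 16.0, "Q8_0": 8.5, "Q4_K_M": 4.85}


def weight_gb(params: float, bits_per_weight: float) -> float:
    """Size of the weights alone in GB (10**9 bytes); ignores KV cache and buffers."""
    return params * bits_per_weight / 8 / 1e9


def max_bits_that_fit(params: float, vram_gb: float = VRAM_GB) -> float:
    """Highest average bits per weight whose weights would fit in vram_gb."""
    return vram_gb * 1e9 * 8 / params


if __name__ == "__main__":
    for fmt, bits in FORMATS.items():
        need = weight_gb(PARAMS_70B, bits)
        verdict = "fits" if need <= VRAM_GB else f"exceeds by {need - VRAM_GB:.1f} GB"
        print(f"{fmt}: {need:.1f} GB of weights, {verdict}")
    print(f"Max bits/weight that fit in {VRAM_GB:.0f} GB: "
          f"{max_bits_that_fit(PARAMS_70B):.2f}")
```

Even before leaving room for the KV cache, the weights would need to average under about 2.7 bits each to fit on one card, which is below the 4-bit formats discussed in this article.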

Understanding the Results

The results highlight the NVIDIA 4090 24GB's strengths and limitations when it comes to LLMs.

- Strong performance for smaller models: For smaller models like Llama 3 8B, the NVIDIA 4090 24GB is a powerhouse, offering impressive performance for both generation and processing.

- Challenges with larger models: For models like Llama 3 70B, the 24GB of memory becomes the limiting factor. These models do not fit on a single card, so they require either more GPU memory or offloading to system RAM, at a significant cost in speed.

Is NVIDIA 4090 24GB a Good Investment for AI Startups?

The answer depends on your specific needs:

- For startups working with smaller models: The NVIDIA 4090 24GB is a fantastic choice. It offers a compelling performance boost, enabling faster development and deployment of smaller LLMs.

- For startups working with larger models: If your work revolves around larger models, like Llama 3 70B, the NVIDIA 4090 24GB might not be the most efficient choice. You might need to consider more powerful GPUs with higher memory capacity or explore other options like cloud computing.

Quantization: Getting More Out of Your Hardware

Quantization is a technique that reduces a model's size, and often speeds it up, by storing its weights at lower precision: for example, as 4-bit integers with shared scale factors instead of 16-bit floating-point numbers.

Think of it like this: Imagine you have a picture of a landscape with millions of different shades of color. Quantization is like reducing the number of colors in the picture, making it smaller but still recognizably the same.
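To make the idea concrete, here is a minimal sketch of simple symmetric 4-bit block quantization in plain Python. It is a simplified illustration, not llama.cpp's Q4_K_M, which is more elaborate (it groups blocks into super-blocks with their own quantized scales and offsets and keeps some tensors at higher precision). The block size of 32 and the 16-bit storage assumed for each scale are illustrative choices.

```python
import random

BLOCK_SIZE = 32  # weights that share one scale factor (illustrative choice)


def quantize_block(block):
    """Symmetric 4-bit quantization of one block: floats -> integers in [-7, 7].

    Using [-7, 7] keeps the range symmetric and leaves one 4-bit code unused.
    """
    absmax = max(abs(x) for x in block)
    scale = absmax / 7 if absmax > 0 else 1.0
    q = [max(-7, min(7, round(x / scale))) for x in block]
    return scale, q


def quantize(weights, block_size=BLOCK_SIZE):
    """Split weights into blocks and quantize each one with its own scale."""
    return [quantize_block(weights[i:i + block_size])
            for i in range(0, len(weights), block_size)]


def dequantize(blocks):
    """Reconstruct approximate floats by multiplying each integer by its block scale."""
    out = []
    for scale, q in blocks:
        out.extend(scale * v for v in q)
    return out


if __name__ == "__main__":
    random.seed(0)
    weights = [random.gauss(0.0, 0.02) for _ in range(4096)]
    restored = dequantize(quantize(weights))
    max_err = max(abs(a - b) for a, b in zip(weights, restored))
    # Storage: 4 bits per weight plus one 16-bit scale per block, vs 16 bits for F16.
    bits_per_weight = 4 + 16 / BLOCK_SIZE
    print(f"bits/weight: {bits_per_weight}, "
          f"compression vs F16: {16 / bits_per_weight:.2f}x")
    print(f"max abs error: {max_err:.5f}")
```

Each weight shrinks from 16 bits to 4.5 bits (including its share of the scale), and the reconstruction error per weight is at most half of its block's scale. That is the "fewer colors, same picture" trade-off in numerical form.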

In the case of Llama 3 8B, Q4_K_M quantization let the NVIDIA 4090 24GB generate text more than twice as fast as with F16, even though F16 was faster at prompt processing. Because generation speed largely determines how responsive an interactive application feels, this shows why quantization is worth using to get the most out of your hardware.
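The trade-off can be quantified directly from the Llama 3 8B measurements. This small sketch simply divides the Q4_K_M throughput by the F16 throughput for each task; the dictionary layout and function name are illustrative.

```python
# Llama 3 8B throughput on the RTX 4090 (tokens/second), from the table above.
RESULTS = {
    ("Generation", "Q4_K_M"): 127.74,
    ("Generation", "F16"): 54.34,
    ("Prompt processing", "Q4_K_M"): 6898.71,
    ("Prompt processing", "F16"): 9056.26,
}


def q4_speedup(task: str) -> float:
    """Q4_K_M throughput divided by F16 throughput (>1 means Q4_K_M is faster)."""
    return RESULTS[(task, "Q4_K_M")] / RESULTS[(task, "F16")]


if __name__ == "__main__":
    for task in ("Generation", "Prompt processing"):
        print(f"{task}: Q4_K_M runs at {q4_speedup(task):.2f}x the F16 speed")
```

Q4_K_M comes out at about 2.35x the F16 speed for generation but only about 0.76x for prompt processing, so the benefit depends on whether a workload is dominated by long prompts or by long outputs.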

What About Other GPUs?

While the NVIDIA 4090 24GB is a top performer, it's not the only option available.

- AMD GPUs: AMD GPUs, such as the Radeon RX 7900 XTX, are gaining ground in the AI space and offer competitive performance at potentially lower prices.

- GPUs from other manufacturers: Other manufacturers, like Intel, are also entering the GPU market, offering alternative solutions.

It's essential to research and compare different GPU offerings to find the best fit for your specific needs and budget.

Conclusion

The NVIDIA 4090 24GB is a powerful GPU that can significantly boost performance for AI startups working with smaller models like Llama 3 8B. However, for larger models, it might struggle, and you may need to explore alternative options.

Remember that hardware isn't the only factor in AI success. Optimizing your code, choosing efficient quantization techniques, and exploring cloud computing options are also crucial.

FAQ

What are Large Language Models (LLMs)?

LLMs are AI systems trained on vast amounts of text data, enabling them to generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way.

What is Quantization?

Quantization is a technique used to reduce the size of a model and make it run faster by replacing floating-point numbers with lower precision integers. It's like compressing a file to make it smaller without losing too much quality.

What are the Benefits of Using NVIDIA 4090 24GB for AI Startups?

The NVIDIA 4090 24GB offers high performance and a massive amount of memory, making it a powerful tool for AI startups working with LLMs.

What are the Limitations of NVIDIA 4090 24GB for AI Startups?

The NVIDIA 4090 24GB might struggle with larger LLMs due to its memory capacity. It's also an expensive option, and you may need to explore other options like cloud computing.

What are some Alternatives to NVIDIA 4090 24GB for AI Startups?

AMD GPUs, like the Radeon RX 7900 XTX, and GPUs from other manufacturers like Intel are potential alternatives. You can also consider cloud computing services for accessing powerful GPUs without the upfront cost.

Keywords

NVIDIA 4090 24GB, AI Startups, LLMs, Llama 3, GPU, Token Speed, Quantization, Performance, F16, Q4_K_M, Generation, Processing, AMD, Radeon RX 7900 XTX, Intel, Cloud Computing, AI, Machine Learning, Deep Learning