Is NVIDIA 4080 16GB Powerful Enough for Llama3 8B?

Introduction: The Quest for Local AI Power

The world of Large Language Models (LLMs) is buzzing with excitement. These AI models can generate text, translate languages, write creative content, and answer questions, and they are changing the way we interact with technology.

But running LLMs often requires significant computing resources, which makes local deployment a challenge. Enter NVIDIA's 4080_16GB, a powerhouse graphics card suited to demanding tasks like machine learning and AI.

Can this GPU handle the demands of Llama3 8B, a popular and powerful LLM, for local deployment? Let's dive deep into the data and find out!

Token Generation Speed Benchmarks: Apple M1 and Llama2 7B

Before we jump into Llama3 8B, let's take a quick detour to understand the concept of token generation speed. This metric measures how quickly a model can generate tokens, which are the basic units of text (think of them like the building blocks of sentences).

Imagine you're building a house: Bricks represent tokens, and the faster you lay them, the quicker your house goes up.

For example, let's look at the Apple M1, a powerful processor for many tasks, including running LLMs. It achieves a remarkable 12,000 tokens per second for Llama2 7B. This speed is impressive, but it's just the tip of the iceberg.
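As a minimal sketch of how this metric is measured: count the tokens a model produces and divide by the elapsed wall-clock time. The `fake_generate` function below is a hypothetical stand-in for a real model call, not part of any library, and its speed says nothing about real hardware.

```python
import time


def throughput(num_tokens, elapsed_seconds):
    """Tokens generated per second of wall-clock time."""
    return num_tokens / elapsed_seconds


def measure(generate, prompt, max_tokens):
    """Time a single generation call and return its tokens/second."""
    start = time.perf_counter()
    tokens = generate(prompt, max_tokens)
    elapsed = time.perf_counter() - start
    return throughput(len(tokens), elapsed)


def fake_generate(prompt, max_tokens):
    # Hypothetical stand-in for a model: emits one "token" about every millisecond.
    tokens = []
    for i in range(max_tokens):
        time.sleep(0.001)
        tokens.append(f"tok{i}")
    return tokens


rate = measure(fake_generate, "Hello", 20)
print(f"{rate:.1f} tokens/second")
```

To benchmark a real model, `fake_generate` would be replaced by whatever call your inference library provides, with the token count taken from its output.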

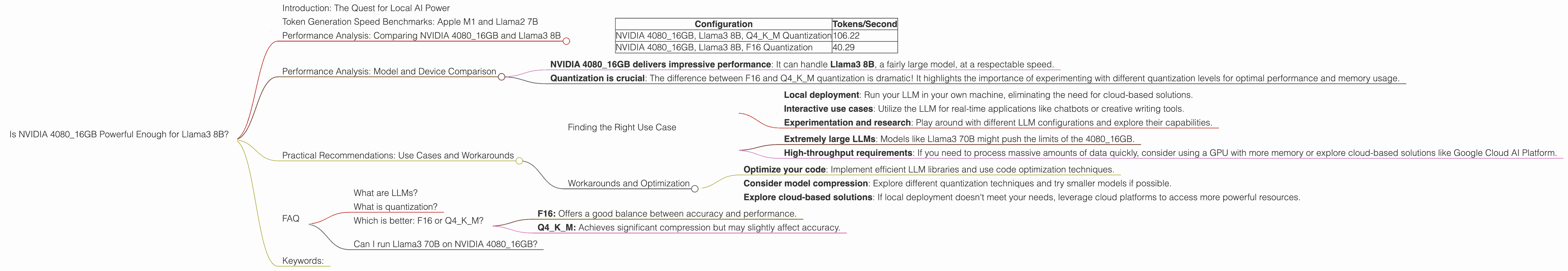

Performance Analysis: Comparing NVIDIA 4080_16GB and Llama3 8B

Now, let's get down to business. The table below presents the token generation speed benchmarks for NVIDIA 4080_16GB running Llama3 8B.

| Configuration | Tokens/Second |
|---|---|
| NVIDIA 4080_16GB, Llama3 8B, Q4_K_M Quantization | 106.22 |
| NVIDIA 4080_16GB, Llama3 8B, F16 Quantization | 40.29 |

What are those mysterious "Q4_K_M" and "F16" terms? They name the numeric formats the model's values are stored in. Fewer bits per value means a smaller model and lower memory consumption.

- F16 uses 16 bits to represent each value in the model. Compared with 32-bit floats, it gives up a little precision in exchange for half the memory.

- Q4_K_M quantization is far more aggressive, using roughly 4 bits per value while preserving a decent amount of accuracy.
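To make the bits-versus-accuracy trade-off concrete, here is a minimal sketch of plain symmetric round-to-nearest quantization. It is an illustration only, not the actual Q4_K_M scheme, and the 16-bit integer case merely stands in for "more bits" (real F16 is a floating-point format). The `weights` values are made up for the example.

```python
def quantize(values, bits):
    """Map floats to signed integer codes of the given bit width."""
    qmax = 2 ** (bits - 1) - 1                 # largest code, e.g. 7 for 4 bits
    scale = max(abs(v) for v in values) / qmax or 1.0  # avoid a zero scale
    codes = [round(v / scale) for v in values]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate floats from integer codes."""
    return [c * scale for c in codes]


def max_error(values, bits):
    """Largest absolute difference after a quantize/dequantize round trip."""
    codes, scale = quantize(values, bits)
    restored = dequantize(codes, scale)
    return max(abs(v - r) for v, r in zip(values, restored))


weights = [0.12, -0.53, 0.91, -0.07, 0.33]    # made-up example values

for bits in (16, 4):
    print(f"{bits}-bit: max round-trip error {max_error(weights, bits):.5f}")
```

With fewer bits there are fewer codes to choose from, so each value lands further from its original, which is the accuracy cost that quantization trades for size.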

Performance Analysis: Model and Device Comparison

Here's a breakdown of what we can infer from the data:

- NVIDIA 4080_16GB delivers impressive performance: It runs Llama3 8B at about 106 tokens per second with Q4_K_M and about 40 tokens per second with F16.

- Quantization is crucial: The difference between F16 and Q4_K_M is dramatic. Q4_K_M is roughly 2.6 times faster (106.22 vs. 40.29 tokens per second), which highlights the importance of experimenting with different quantization levels for optimal performance and memory usage.

Think of it like squeezing a sponge: F16 is a light squeeze that leaves most of the water in, while Q4_K_M squeezes as hard as it can, losing some of the water but leaving the sponge much more compact.
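The gap between the two rows of the table is easier to feel as waiting time. The sketch below uses only the two benchmark figures from the table. The 500-token reply length is an assumption chosen for illustration, and the estimate ignores prompt processing time.

```python
# Tokens/second for Llama3 8B on the 4080_16GB, taken from the table above.
BENCHMARKS = {"Q4_K_M": 106.22, "F16": 40.29}

REPLY_TOKENS = 500  # assumed reply length, for illustration only


def seconds_for(num_tokens, tokens_per_second):
    """Time needed to generate num_tokens at a steady rate."""
    return num_tokens / tokens_per_second


speedup = BENCHMARKS["Q4_K_M"] / BENCHMARKS["F16"]
print(f"Q4_K_M is about {speedup:.1f}x faster than F16")

for name, tps in BENCHMARKS.items():
    wait = seconds_for(REPLY_TOKENS, tps)
    print(f"{name}: about {wait:.1f} s for a {REPLY_TOKENS}-token reply")
```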

Practical Recommendations: Use Cases and Workarounds

Finding the Right Use Case

The NVIDIA 4080_16GB is a solid choice for Llama3 8B if you need:

- Local deployment: Run your LLM on your own machine, eliminating the need for cloud-based solutions.

- Interactive use cases: Utilize the LLM for real-time applications like chatbots or creative writing tools.

- Experimentation and research: Play around with different LLM configurations and explore their capabilities.

However, you might need alternative solutions for:

- Extremely large LLMs: Models like Llama3 70B need far more than 16 GB for their weights, even at 4 bits per value, so they exceed what the 4080_16GB can hold on its own.

- High-throughput requirements: If you need to process massive amounts of data quickly, consider a GPU with more memory or a cloud-based solution such as Google Cloud AI Platform.

Workarounds and Optimization

- Optimize your code: Use efficient LLM inference libraries and apply code optimization techniques.

- Consider model compression: Explore different quantization techniques and try smaller models if possible.

- Explore cloud-based solutions: If local deployment doesn't meet your needs, leverage cloud platforms to access more powerful resources.

FAQ

What are LLMs?

LLMs are sophisticated AI models trained on massive datasets of text and code. They can understand and generate human-like language, making them useful for a wide range of tasks.

What is quantization?

Quantization is a technique that reduces the size of LLMs by representing their values with fewer bits, which lowers memory usage and usually speeds up generation.

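Which is better: F16 or Q4_K_M?

It depends on your priorities:

- F16: Keeps the most accuracy but is slower in our benchmark (40.29 tokens per second) and needs considerably more memory.

- Q4_K_M: Achieves significant compression and the higher speed (106.22 tokens per second) but may slightly affect accuracy.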

Can I run Llama3 70B on NVIDIA 4080_16GB?

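Unfortunately, we don't have benchmark data for Llama3 70B on the 4080_16GB. Even at 4 bits per value, 70 billion parameters need about 35 GB for the weights alone, so the model cannot fit entirely in 16 GB of video memory. It may still be possible to run it with part of the model held in system memory, but performance would be significantly impacted, and F16 (about 140 GB) is far out of reach.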
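A back-of-the-envelope check makes this concrete. The sketch below estimates weight size as parameters times bits per value, under several assumptions: round parameter counts (8 and 70 billion), a nominal 4 bits for the quantized case (real Q4_K_M files are somewhat larger), the card's 16GB treated as 16 GiB, and no allowance for the KV cache or runtime overhead. A "fits" verdict is therefore optimistic.

```python
VRAM_GIB = 16  # assumes the card's 16GB is 16 GiB


def weight_gib(params_billion, bits_per_value):
    """Approximate size of the model weights in GiB."""
    total_bytes = params_billion * 1e9 * bits_per_value / 8
    return total_bytes / 2**30


MODELS = {"Llama3 8B": 8, "Llama3 70B": 70}   # rounded parameter counts
FORMATS = {"F16": 16, "4-bit": 4}             # nominal bits per value

for model, params in MODELS.items():
    for fmt, bits in FORMATS.items():
        size = weight_gib(params, bits)
        verdict = "fits" if size < VRAM_GIB else "does not fit"
        print(f"{model} {fmt}: ~{size:.1f} GiB of weights, {verdict} in {VRAM_GIB} GiB")
```

Under these assumptions both Llama3 8B variants fit (F16 only barely), while neither Llama3 70B variant does.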

Keywords:

NVIDIA 4080_16GB, Llama3 8B, LLM, Token Generation Speed, Quantization, Q4_K_M, F16, GPU, Local Deployment, Performance Benchmarks, AI, Machine Learning, Deep Learning, Chatbots, Creative Writing, Token Per Second, Model Compression, Use Cases, Practical Recommendations, Workarounds, Cloud Computing, Google Cloud AI Platform, AI Platform.