Is NVIDIA 4080 16GB Powerful Enough for Llama3 70B?

The world of large language models (LLMs) is exploding, and with it the demand for powerful hardware to run them locally. LLMs are becoming increasingly complex, and the ability to run them on your own machine opens up a world of possibilities for experimentation and innovation. But with the size and complexity of these models, can your GPU handle the load?

This article looks at what it takes to run the Llama3 70B language model on an NVIDIA 4080_16GB graphics card. We'll cover token generation speed benchmarks, model and device comparisons, and practical recommendations, so you can make informed decisions about your LLM setup.

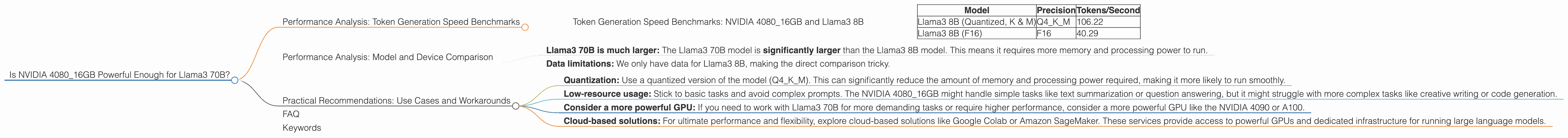

Performance Analysis: Token Generation Speed Benchmarks

Let's cut to the chase: how fast can an NVIDIA 4080_16GB generate text with the Llama3 70B model? The metric that matters here is tokens per second (TPS).

Token Generation Speed Benchmarks: NVIDIA 4080_16GB and Llama3 8B

Unfortunately, we don't have any data for Llama3 70B on the NVIDIA 4080_16GB. However, we do have data for Llama3 8B, which gives us some insight into the capabilities of this GPU.

Here's a breakdown of the performance:

| Model | Precision | Tokens/Second |
|---|---|---|
| Llama3 8B (Quantized) | Q4_K_M | 106.22 |
| Llama3 8B (F16) | F16 | 40.29 |

What does this mean?

- Quantized models (Q4_K_M) are faster: Each weight is stored in roughly 4 to 5 bits instead of 16, so the GPU has far less data to read from memory for every generated token. In these benchmarks that works out to about 2.6 times the speed of F16. Think of it like using a smaller dictionary to look up words; it's faster but might not be as precise.

- F16 precision offers more accuracy: With F16, every weight takes 16 bits, so the model uses more memory and more memory bandwidth. Generation is slower, but the weights keep their original precision. Imagine using a larger dictionary: it takes longer, but you'll find more words and meanings.

Important Note: While quantized models might be faster, they can impact the quality of the generated text.
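To put rough numbers on the trade-off, here is a minimal sketch that estimates the weight memory of Llama3 8B at each precision and compares it with the measured speeds from the table above. The 4.85 bits per weight used for Q4_K_M is an assumed average, not a figure from the benchmark, and the estimate ignores the KV cache and runtime overhead.

```python
# Rough weight-memory estimate; ignores KV cache and runtime overhead.
# The Q4_K_M value is an assumed average number of bits per weight.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}


def weight_gb(params_billion: float, precision: str) -> float:
    """Approximate size of the weights in gigabytes (1 GB = 1e9 bytes)."""
    return params_billion * BITS_PER_WEIGHT[precision] / 8


# Measured tokens/second for Llama3 8B, taken from the table above.
measured_tps = {"Q4_K_M": 106.22, "F16": 40.29}

speedup = measured_tps["Q4_K_M"] / measured_tps["F16"]
size_ratio = weight_gb(8, "F16") / weight_gb(8, "Q4_K_M")

print(f"F16 weights:    {weight_gb(8, 'F16'):.1f} GB")
print(f"Q4_K_M weights: {weight_gb(8, 'Q4_K_M'):.1f} GB")
print(f"Measured speedup: {speedup:.2f}x, size ratio: {size_ratio:.2f}x")
```

The speedup is smaller than the size ratio, which is what you would expect if reading the weights is the main cost per token but not the only one.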

Performance Analysis: Model and Device Comparison

So, can you run Llama3 70B on an NVIDIA 4080_16GB? It's difficult to say definitively without data, but the performance of the NVIDIA 4080_16GB with Llama3 8B gives us some clues:

- Llama3 70B is much larger: The Llama3 70B model is significantly larger than the Llama3 8B model. This means it requires more memory and processing power to run.

- Data limitations: We only have data for Llama3 8B, making the direct comparison tricky.

What can we infer?

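While the NVIDIA 4080_16GB might be able to handle the Llama3 70B model, it's likely to be significantly slower than with the Llama3 8B model. The Llama3 70B model is 8.75 times larger than the Llama3 8B model, so even if all of it fit in VRAM you would expect a roughly proportionate decrease in performance. In practice it does not fit: at around 4 to 5 bits per weight, 70 billion parameters need roughly 40 GB, well above the card's 16 GB. Part of the model has to run from system RAM on the CPU, which slows generation much further.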

Here's an analogy: Imagine you have a car that can easily carry two passengers. Now imagine trying to squeeze ten passengers into the same car. You can technically fit them all, but it's going to be cramped, slow, and uncomfortable.
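The arithmetic behind that inference can be sketched in a few lines. This is a back-of-the-envelope estimate, not a benchmark: the 4.85 bits per weight for Q4_K_M is an assumed average, and the "naive" speed simply scales the measured Llama3 8B result by the size ratio, which would only hold if the whole model fit in VRAM.

```python
VRAM_GB = 16.0                   # NVIDIA 4080_16GB
BITS_PER_WEIGHT_Q4_K_M = 4.85    # assumed average, not a measured value
MEASURED_8B_Q4_TPS = 106.22      # Llama3 8B Q4_K_M result from this article


def weight_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight size in gigabytes (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


size_70b_gb = weight_gb(70, BITS_PER_WEIGHT_Q4_K_M)
fits_in_vram = size_70b_gb <= VRAM_GB

# Optimistic ceiling: assumes speed scales inversely with model size and
# that every weight sits in VRAM, which is not the case on this card.
naive_tps_ceiling = MEASURED_8B_Q4_TPS * 8 / 70

print(f"Llama3 70B at Q4_K_M: about {size_70b_gb:.1f} GB of weights")
print(f"Fits in {VRAM_GB:.0f} GB of VRAM: {fits_in_vram}")
print(f"Naive ceiling if it did fit: about {naive_tps_ceiling:.1f} tokens/second")
```

Because the weights exceed the available VRAM, real throughput on this card should be expected to land below that ceiling, and how far below depends on how many layers end up on the CPU.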

Practical Recommendations: Use Cases and Workarounds

So, how do you make Llama3 70B work on your NVIDIA 4080_16GB? Here are some practical recommendations:

- Quantization: Use a quantized version of the model (Q4_K_M). This significantly reduces the memory required. Even so, a 70B model at this precision is larger than 16 GB, so expect to keep some of its layers in system RAM and run them on the CPU.

- Low-resource usage: Keep prompts and context windows short, and generate modest amounts of text. Generation speed depends on the model size and context length rather than on the type of task, so summarization, question answering, creative writing, and code generation all cost about the same per token. A slow setup is simply easier to live with when the outputs are short.

- Consider a GPU with more memory: If you need to work with Llama3 70B for more demanding tasks or require higher performance, consider a GPU with more VRAM, such as the NVIDIA 4090 (24 GB) or the A100 (40 GB or 80 GB).

- Cloud-based solutions: For ultimate performance and flexibility, explore cloud-based solutions like Google Colab or Amazon SageMaker. These services provide access to powerful GPUs and dedicated infrastructure for running large language models.

Remember: The NVIDIA 4080_16GB is a powerful GPU, but it's not a magic wand for every LLM. Choose your model and hardware carefully based on your specific needs and resources.
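If you do try partial offloading, a quick estimate of how many layers fit on the GPU is a useful starting point. The sketch below is a rough calculation under stated assumptions: a 42.4 GB quantized model (an estimate), 80 transformer layers for Llama3 70B, equal-sized layers, and a hypothetical 2 GB reserved for the KV cache and runtime overhead. Runtimes such as llama.cpp expose a setting for the number of GPU layers; treat the result as a first guess to tune, not a guaranteed fit.

```python
import math


def gpu_layers_that_fit(model_gb: float, n_layers: int,
                        vram_gb: float, reserve_gb: float) -> int:
    """Estimate how many equally sized layers fit in the usable VRAM."""
    per_layer_gb = model_gb / n_layers
    usable_gb = max(vram_gb - reserve_gb, 0.0)
    return min(n_layers, math.floor(usable_gb / per_layer_gb))


# Assumed values: estimated Q4_K_M size of Llama3 70B, 80 layers,
# and a hypothetical 2 GB reserve for KV cache and overhead.
layers_on_gpu = gpu_layers_that_fit(model_gb=42.4, n_layers=80,
                                    vram_gb=16.0, reserve_gb=2.0)

print(f"Layers on GPU: {layers_on_gpu} of 80")
print(f"Layers left on CPU: {80 - layers_on_gpu}")
```

Under these assumptions roughly a third of the model sits on the GPU, and the CPU-resident layers set the pace for each token.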

FAQ

Q: What is Llama3?

A: Llama3 is a family of large language models developed by Meta AI. They are known for their impressive performance on a wide range of tasks, including text generation, translation, and question answering.

Q: What is quantization?

A: Quantization is a technique used to reduce the size of a model by representing its weights (numbers) using fewer bits. Think of it like reducing the number of colors in an image; it's smaller and uses less storage, but you might lose some detail.
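As a toy illustration of the idea, the sketch below quantizes a handful of made-up weights to 4-bit integers with a single shared scale and then restores them. Real formats such as Q4_K_M are more elaborate (they work on blocks of weights with their own scales), so this is only meant to show where the lost detail comes from.

```python
def quantize_4bit(weights):
    """Symmetric 4-bit quantization: map floats to integers in [-7, 7]."""
    largest = max(abs(w) for w in weights)
    scale = largest / 7 if largest > 0 else 1.0
    codes = [round(w / scale) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


weights = [0.12, -0.53, 0.91, -0.07, 0.33]  # made-up example values
codes, scale = quantize_4bit(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:   ", codes)
print("restored:", [round(r, 2) for r in restored])
print(f"largest rounding error: {max_error:.3f}")
```

Each weight now needs only 4 bits plus a share of the scale, and the rounding error is at most half of one quantization step.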

Q: What is token generation speed?

A: Token generation speed, measured in tokens per second (TPS), tells us how fast a model can generate text. It's like measuring the speed of a typist, but for LLMs.
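Measuring it yourself is straightforward: count the tokens a model produces and divide by the elapsed time. The sketch below shows the idea with a hypothetical `fake_generate` function standing in for a real model, since the benchmark tooling behind the numbers in this article is not specified.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Time a token stream and return tokens generated per second."""
    start = time.perf_counter()
    count = sum(1 for _ in generate())
    elapsed = time.perf_counter() - start
    return count / elapsed if elapsed > 0 else float("inf")


def fake_generate():
    """Hypothetical stand-in for a model: yields 50 tokens, about 1 ms apart."""
    for i in range(50):
        time.sleep(0.001)
        yield f"tok{i}"


tps = tokens_per_second(fake_generate)
print(f"{tps:.1f} tokens/second")
```

With a real model you would pass in a function that streams its output tokens, and ideally average over several runs.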

Q: What are the best GPUs for running LLMs locally?

A: The best GPU for running LLMs locally depends on the size of the model and your desired performance. For smaller models, a mid-range GPU like the NVIDIA 3080 might suffice. For larger models, you'll need a more powerful GPU like the NVIDIA 4090 or A100.

Q: Should I use a cloud-based solution or run LLMs locally?

A: It depends on your needs and budget. If you have a powerful GPU and want granular control over your environment, running LLMs locally is a good option. However, if you require the highest performance or don't want to invest in expensive hardware, cloud-based solutions can be a more affordable and convenient choice.

Keywords

NVIDIA 4080_16GB, Llama3 70B, Llama3 8B, LLM, Large Language Model, GPU, Token Generation Speed, Performance Benchmark, Quantization, Q4_K_M, F16, Practical Recommendations, Use Cases, Workarounds, Cloud-based Solutions, Google Colab, Amazon SageMaker.