Is NVIDIA 4080 16GB a Good Investment for AI Startups?

Introduction

Artificial intelligence (AI) is booming, and large language models (LLMs) are at the forefront of this revolution. These systems can generate and translate text, write creative content, and answer questions. Running them, however, takes serious computing power, and above all a large amount of fast memory, which is where graphics processing units (GPUs) come in.

Today, we're diving deep into the NVIDIA 4080_16GB, a powerful GPU that's gaining popularity among AI enthusiasts. This article explores whether the 4080_16GB is a suitable investment for AI startups, especially those building and deploying local LLMs. We'll analyze its performance with the popular Llama 3 family and walk through the technical details that matter most. Buckle up, it's going to be an exciting ride!

Understanding the NVIDIA 4080_16GB

The NVIDIA 4080_16GB is a high-end graphics card designed for demanding tasks like gaming, video editing, and, you guessed it, AI. It boasts impressive specifications, including:

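- 16GB of GDDR6X Memory: Enough to hold Llama 3 8B even at 16-bit precision (with little room to spare), though not a 70B-class model. GDDR6X's high bandwidth also lets the GPU stream model weights quickly, which directly drives text-generation speed.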

- Powerful Ada Lovelace Architecture: This enables improved performance and energy efficiency compared to previous generations.

- PCIe 4.0 x16 Interface: The card connects to the rest of your system over PCIe 4.0 (not 5.0). Interface speed mostly matters when loading a model or when part of a model spills over into system memory.
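A quick way to check what fits in the card's memory is to multiply a model's parameter count by the bits stored per weight. The sketch below is a rough estimate under stated assumptions, not a measurement: it assumes about 8.03 billion parameters for Llama 3 8B and 70.6 billion for Llama 3 70B, treats Q4_K_M as roughly 4.8 bits per weight (the real figure varies by model), reads the card's "16GB" as 16 GiB, and counts only the weights, ignoring the KV cache and runtime overhead.

```python
# Rough check: do a model's weights fit in the card's 16GB (16 GiB) of VRAM?
# Assumptions, not measurements: parameter counts below, Q4_K_M ~ 4.8 bits/weight.
# Only the weights are counted; the KV cache and runtime overhead need extra room.

GIB = 2**30  # bytes in one GiB
VRAM_GIB = 16.0

BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.8}  # Q4_K_M figure is approximate
PARAMS = {"Llama 3 8B": 8.03e9, "Llama 3 70B": 70.6e9}


def weight_gib(params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in GiB."""
    return params * bits_per_weight / 8 / GIB


def weights_fit(params: float, bits_per_weight: float, vram_gib: float = VRAM_GIB) -> bool:
    """True if the weights alone are smaller than the available VRAM."""
    return weight_gib(params, bits_per_weight) < vram_gib


if __name__ == "__main__":
    for model, n in PARAMS.items():
        for fmt, bits in BITS_PER_WEIGHT.items():
            size = weight_gib(n, bits)
            print(f"{model:<12} {fmt:<7} {size:6.1f} GiB  fits: {weights_fit(n, bits)}")
```

Under these assumptions, Llama 3 8B needs about 15.0 GiB at F16 and 4.5 GiB at Q4_K_M, so both fit (F16 only barely), while Llama 3 70B needs about 39.5 GiB even at Q4_K_M.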

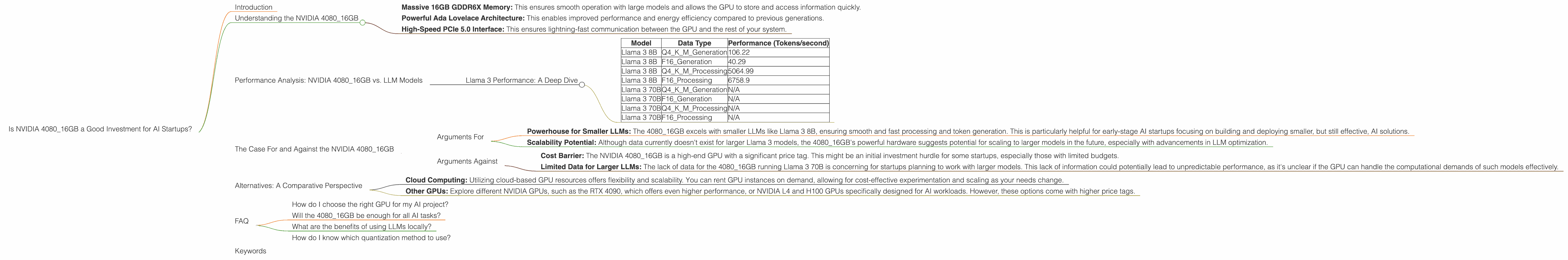

Performance Analysis: NVIDIA 4080_16GB vs. LLM Models

Llama 3 Performance: A Deep Dive

Let's get to the core of our investigation: How does the NVIDIA 4080_16GB perform with different Llama 3 models? We'll analyze both token generation speed and prompt processing speed, the two metrics that determine how responsive your LLM will feel.

Important: This analysis covers the Llama 3 8B and 70B models only. Benchmarks for other LLMs and other model sizes aren't available for the 4080_16GB, so they aren't included.

Data Table:

| Model | Quantization | Generation (tokens/second) | Processing (tokens/second) |
|---|---|---|---|
| Llama 3 8B | Q4_K_M | 106.22 | 5064.99 |
| Llama 3 8B | F16 | 40.29 | 6758.9 |
| Llama 3 70B | Q4_K_M | N/A | N/A |
| Llama 3 70B | F16 | N/A | N/A |

Understanding the Data:

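- Q4_K_M and F16: These are the numeric formats the model weights are stored in. F16 is 16-bit floating point (half precision), essentially the unquantized model. Q4_K_M is a 4-bit "k-quant" format from llama.cpp that shrinks the weights to under a third of their F16 size, trading a possible slight loss in accuracy for lower memory use and faster generation.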

- Generation: This refers to the speed at which the LLM generates new text or output based on the input provided.

- Processing: This measures how fast the LLM reads the input prompt (often called prompt processing or prefill), in prompt tokens per second.
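The two metrics combine into a simple estimate of how long a single request takes: the prompt is processed first, then the answer is generated one token at a time. The sketch below plugs in the Llama 3 8B numbers from the table; the 1,000-token prompt and 250-token answer are hypothetical, and the model ignores batching, warm-up, and the slowdown generation typically shows as the context grows.

```python
# Estimate single-request latency from the two benchmark metrics.
# Rates are the Llama 3 8B results on the 4080_16GB from the table (tokens/second).
RATES = {
    "Q4_K_M": {"processing": 5064.99, "generation": 106.22},
    "F16": {"processing": 6758.9, "generation": 40.29},
}


def request_seconds(prompt_tokens: int, output_tokens: int, fmt: str) -> float:
    """Time to read the prompt plus time to generate the answer."""
    rates = RATES[fmt]
    prefill = prompt_tokens / rates["processing"]
    decode = output_tokens / rates["generation"]
    return prefill + decode


if __name__ == "__main__":
    # Hypothetical request: 1,000-token prompt, 250-token answer.
    for fmt in RATES:
        print(f"{fmt}: {request_seconds(1000, 250, fmt):.2f} s")
```

With these inputs the estimate comes to about 2.55 seconds for Q4_K_M and 6.35 seconds for F16. Generation dominates in both cases, which is why the generation rate usually matters more for interactive use.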

Key Observations:

- Impressive Performance with Llama 3 8B: The 4080_16GB handles the Llama 3 8B model well. With Q4_K_M it generates 106.22 tokens/second, and it processes prompts at over 5,000 tokens/second in both formats (5064.99 with Q4_K_M, 6758.9 with F16).

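- Impact of Quantization: The two formats pull in opposite directions. For generation, Q4_K_M is about 2.6 times faster than F16 (106.22 vs. 40.29 tokens/second), because each new token requires reading the model's weights from memory, and 4-bit weights mean far fewer bytes to read. For prompt processing, which is limited mainly by raw compute rather than memory bandwidth, F16 comes out ahead (6758.9 vs. 5064.99 tokens/second), likely because it avoids the extra work of unpacking 4-bit weights. The cost of Q4_K_M is a possible slight drop in model accuracy.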

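- Missing Data for Llama 3 70B: There are no 4080_16GB results for Llama 3 70B. The most likely reason is memory: even at Q4_K_M, the 70B model's weights take roughly 40GB, far more than the card's 16GB, so the model cannot run entirely on this GPU. Running it would require offloading part of the model to system RAM, which is much slower, or using hardware with more memory.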
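The generation gap between the two formats can be sanity-checked with a bandwidth bound: when generating one token at a time, the GPU reads roughly all of the weights for every new token, so memory bandwidth divided by the size of the weights gives an upper limit on tokens per second. This sketch assumes the RTX 4080's 256-bit memory bus at 22.4 Gbps per pin (about 717 GB/s), about 8.03 billion parameters for Llama 3 8B, and about 4.8 bits per weight for Q4_K_M. It ignores KV-cache reads and compute time, so it gives a ceiling, not a prediction.

```python
# Upper bound on single-stream generation speed from memory bandwidth.
# Assumptions: 256-bit bus at 22.4 Gbps per pin, 8.03e9 parameters,
# Q4_K_M ~ 4.8 bits/weight. KV-cache reads and compute time are ignored.

BUS_WIDTH_BITS = 256
DATA_RATE_BITS_PER_S = 22.4e9  # per pin
BANDWIDTH_BYTES_PER_S = BUS_WIDTH_BITS * DATA_RATE_BITS_PER_S / 8  # ~716.8e9

PARAMS_8B = 8.03e9

# format -> (bits per weight, measured generation tokens/s from the table)
MEASURED = {"Q4_K_M": (4.8, 106.22), "F16": (16.0, 40.29)}


def decode_ceiling(params: float, bits_per_weight: float) -> float:
    """Tokens/s if every weight is read once per token at full bandwidth."""
    weight_bytes = params * bits_per_weight / 8
    return BANDWIDTH_BYTES_PER_S / weight_bytes


if __name__ == "__main__":
    for fmt, (bits, measured) in MEASURED.items():
        ceiling = decode_ceiling(PARAMS_8B, bits)
        print(f"{fmt}: ceiling ~{ceiling:.0f} tok/s, measured {measured} tok/s "
              f"({measured / ceiling:.0%} of ceiling)")
```

Under these assumptions the ceilings come out near 149 tokens/second for Q4_K_M and 45 for F16. Both measured results sit below them, which is consistent with generation being limited mainly by memory bandwidth.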

The Case For and Against the NVIDIA 4080_16GB

Arguments For

- Powerhouse for Smaller LLMs: The 4080_16GB excels with smaller LLMs like Llama 3 8B, ensuring smooth and fast processing and token generation. This is particularly helpful for early-stage AI startups focusing on building and deploying smaller, but still effective, AI solutions.

- Scalability Potential: Although there is no data for larger Llama 3 models, the 4080_16GB can run any model whose quantized weights, plus working memory for the context, fit within 16GB, and advances in quantization and LLM optimization may widen that range over time. 70B-class models, however, exceed 16GB even at 4-bit precision.

Arguments Against

- Cost Barrier: The NVIDIA 4080_16GB is a high-end GPU with a significant price tag. This might be an initial investment hurdle for some startups, especially those with limited budgets.

- Limited Data for Larger LLMs: The lack of Llama 3 70B results is a concern for startups planning to work with larger models. With only 16GB of memory, such models cannot run entirely on the GPU, so expect much lower speeds if layers are offloaded to system RAM.

Alternatives: A Comparative Perspective

While the 4080_16GB is a strong contender, it's important to consider alternative options, especially if you're working with larger LLMs or are on a tighter budget:

- Cloud Computing: Utilizing cloud-based GPU resources offers flexibility and scalability. You can rent GPU instances on demand, allowing for cost-effective experimentation and scaling as your needs change.

- Other GPUs: Explore other NVIDIA GPUs, such as the RTX 4090, which is faster and has 24GB of memory, or data-center GPUs designed specifically for AI workloads, such as the L4 (24GB) and H100 (80GB). These options come with higher price tags, but for LLMs the extra memory can matter as much as the extra speed.
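Whether buying a card beats renting one comes down to how many hours you will actually use it. The break-even sketch below divides the purchase price by the hourly saving. Every number in the example is a hypothetical placeholder rather than a real quote, and the model ignores resale value, depreciation, and the cost of the rest of the workstation.

```python
import math


def break_even_hours(card_price: float,
                     local_cost_per_hour: float,
                     cloud_price_per_hour: float) -> float:
    """Hours of use after which owning the GPU costs less than renting.

    local_cost_per_hour covers running costs such as electricity.
    Returns math.inf if renting is never more expensive per hour.
    """
    saving_per_hour = cloud_price_per_hour - local_cost_per_hour
    if saving_per_hour <= 0:
        return math.inf
    return card_price / saving_per_hour


if __name__ == "__main__":
    # Hypothetical placeholders: substitute your own quotes.
    hours = break_even_hours(card_price=1200.0,
                             local_cost_per_hour=0.05,
                             cloud_price_per_hour=0.80)
    print(f"Break-even after ~{hours:.0f} hours of use")
```

With these placeholder inputs the break-even is about 1,600 hours, roughly 67 days of round-the-clock use; a startup that runs inference only occasionally may come out ahead renting.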

FAQ

How do I choose the right GPU for my AI project?

Consider the size and complexity of the LLMs you'll be using. Starting with smaller LLMs? The 4080_16GB might be a great choice. Planning to work with very large LLMs? Explore alternatives like cloud computing or higher-end GPUs. Read reviews and benchmark results to compare performance.

Will the 4080_16GB be enough for all AI tasks?

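While the 4080_16GB is powerful, it's not a one-size-fits-all solution. Different AI workloads stress different resources. Fine-tuning or training a model, for example, needs much more memory than running it, because gradients and optimizer state must be stored alongside the weights, and large image and video workloads bring their own memory and compute demands.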

What are the benefits of using LLMs locally?

Running LLMs locally grants you greater control over your data and infrastructure, avoiding potential privacy concerns and network issues.

How do I know which quantization method to use?

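The choice between formats like Q4_K_M and F16 depends on the requirements of your AI task. Q4_K_M uses far less memory and, on the 4080_16GB, generates text about 2.6 times faster than F16, at the cost of a possible slight drop in accuracy. F16 preserves the model's full quality and processes prompts faster, but needs more than three times as much memory. If memory is tight or responsiveness matters most, Q4_K_M is usually the better fit; if accuracy is critical and the model fits in memory, F16 is the safer choice.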

Keywords

NVIDIA 4080_16GB, AI startups, LLMs, Llama 3, GPU, token generation, processing speed, quantization, Q4_K_M, F16, cloud computing, data processing, local AI models, AI performance, AI investment, AI technology.