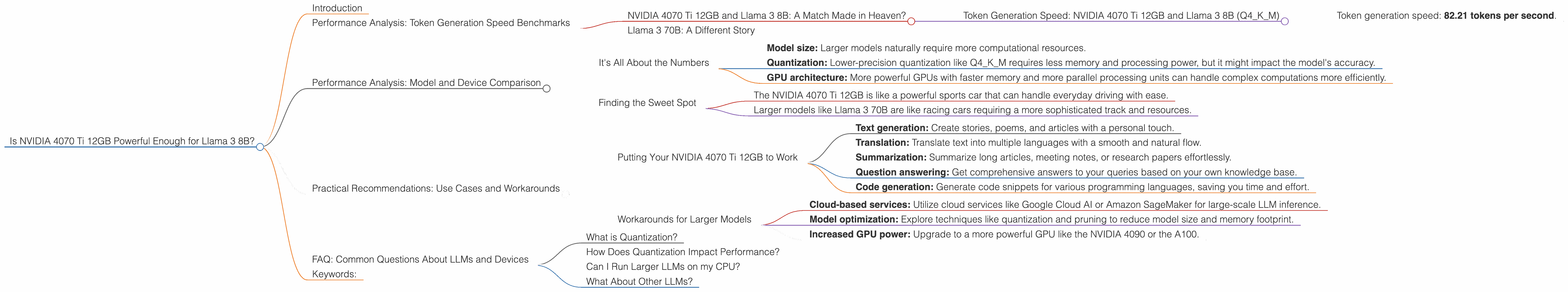

Is NVIDIA 4070 Ti 12GB Powerful Enough for Llama3 8B?

Introduction

The world of large language models (LLMs) is buzzing with excitement, but running these models locally can be challenging, even on modern hardware. If you're considering a graphics card like the NVIDIA 4070 Ti 12GB for running the Llama 3 8B model locally, you're in the right place! We'll dig into its performance with quantized weights, explore whether it meets your needs, and offer practical recommendations.

Imagine being able to use the power of LLMs without relying on cloud services. Running locally saves you cloud computing costs, avoids network round trips, and gives you greater control over your data. The appeal is undeniable, but the question remains: can your NVIDIA 4070 Ti 12GB handle Llama 3 8B without breaking a sweat? Let's find out.

Performance Analysis: Token Generation Speed Benchmarks

NVIDIA 4070 Ti 12GB and Llama 3 8B: A Match Made in Heaven?

Let's cut to the chase. The NVIDIA 4070 Ti 12GB delivers impressive performance with the Llama 3 8B model using the Q4_K_M quantization format.

Token Generation Speed: NVIDIA 4070 Ti 12GB and Llama 3 8B (Q4_K_M)

- Token generation speed: 82.21 tokens per second.

At roughly 82 tokens per second, and using the common rule of thumb of about 0.75 English words per token, that works out to around 60 words per second. Text generation, translation, and creative writing all feel responsive: a few-hundred-token answer arrives in seconds, far faster than even a seasoned writer can type.
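To put the reported speed in concrete terms, here is a small Python sketch that converts tokens per second into waiting time and words per second. The 0.75 words-per-token ratio is an assumed rule of thumb for English, not a measurement, and the estimate ignores the time spent processing the prompt.

```python
# Back-of-the-envelope latency at a fixed generation speed.
# TOKENS_PER_SECOND is the Q4_K_M figure reported above; WORDS_PER_TOKEN
# is an assumed rule of thumb for English text, not a measured value.
TOKENS_PER_SECOND = 82.21
WORDS_PER_TOKEN = 0.75  # assumption: about 3/4 of a word per token


def generation_seconds(num_tokens: int, tokens_per_second: float = TOKENS_PER_SECOND) -> float:
    """Seconds spent generating num_tokens, ignoring prompt processing."""
    return num_tokens / tokens_per_second


def words_per_second(tokens_per_second: float = TOKENS_PER_SECOND) -> float:
    """Approximate output rate in words per second."""
    return tokens_per_second * WORDS_PER_TOKEN


if __name__ == "__main__":
    for n in (100, 500, 1000):
        print(f"{n:>5} tokens -> {generation_seconds(n):5.1f} s")
    print(f"about {words_per_second():.0f} words per second")
```

Under these assumptions, a 500-token answer takes a little over 6 seconds to generate.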

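Important Note: We don't have performance data for F16 (unquantized 16-bit weights) with Llama 3 8B on the NVIDIA 4070 Ti 12GB, so we can't compare the two formats. At 2 bytes per parameter, the F16 weights alone take roughly 16 GB, more than the card's 12 GB of VRAM, so the model would not fit entirely on the GPU in that format.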

Llama 3 70B: A Different Story

While the NVIDIA 4070 Ti 12GB performs well with Llama 3 8B, we don't have performance data for the Llama 3 70B model. Missing data proves nothing by itself, but memory is the likely obstacle: even at 4 bits per weight, 70 billion parameters take about 35 GB, nearly three times the card's 12 GB of VRAM. That makes the 4070 Ti 12GB a poor fit for Llama 3 70B, especially for real-time, interactive use cases.
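The memory limits can be estimated with simple arithmetic: parameters times bits per weight, divided by 8. The sketch below assumes nominal parameter counts of 8 and 70 billion and counts the weights only, so the KV cache and runtime buffers come on top. Treat the results as lower bounds, not a precise sizing tool.

```python
# Rough weight-only memory footprint: parameters x bits per weight / 8.
# Parameter counts are nominal (8B and 70B). Real model files add metadata,
# and Q4_K_M averages slightly more than 4 bits per weight, so these are
# lower bounds. KV cache and runtime buffers are NOT included.
VRAM_GB = 12  # NVIDIA 4070 Ti 12GB

MODELS = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}
PRECISIONS = {"F16": 16, "8-bit": 8, "4-bit": 4}


def weight_gb(num_params: float, bits_per_weight: float) -> float:
    """Gigabytes (10^9 bytes) needed just to hold the weights."""
    return num_params * bits_per_weight / 8 / 1e9


if __name__ == "__main__":
    for name, params in MODELS.items():
        for label, bits in PRECISIONS.items():
            gb = weight_gb(params, bits)
            verdict = "fits" if gb < VRAM_GB else "does not fit"
            print(f"{name:12} {label:6} {gb:6.1f} GB -> {verdict} in {VRAM_GB} GB")
```

Only the 8-bit and 4-bit versions of the 8B model leave room on a 12 GB card; every 70B variant is well over the limit.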

Performance Analysis: Model and Device Comparison

It's All About the Numbers

The performance of a GPU for running LLMs like Llama 3 depends on several factors:

- Model size: Larger models need more memory to hold their weights and more computation for every generated token.

- Quantization: Lower-precision formats like Q4_K_M require less memory and processing power, but they can slightly reduce the model's accuracy.

- GPU architecture: More powerful GPUs with faster memory and more parallel processing units can handle complex computations more efficiently.
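Model size affects more than the weights: during generation the GPU also stores a key/value (KV) cache that grows with the context length. Here is a rough Python sketch of that cost for Llama 3 8B. The layer count, number of key/value heads, and head size come from the published model configuration; the 16-bit cache, the 12 GiB budget, and the 1 GiB overhead are illustrative assumptions.

```python
# Estimate the KV cache that sits in VRAM alongside the weights.
# Architecture values follow the published Llama 3 8B config
# (32 layers, 8 key/value heads via grouped-query attention, head size 128).
# The 16-bit cache and the fixed overhead are assumptions for illustration.
N_LAYERS = 32
N_KV_HEADS = 8
HEAD_DIM = 128
CACHE_BYTES_PER_VALUE = 2  # assumption: F16 KV cache


def kv_cache_gib(context_tokens: int) -> float:
    """GiB for keys and values across all layers for context_tokens tokens."""
    per_token = 2 * N_LAYERS * N_KV_HEADS * HEAD_DIM * CACHE_BYTES_PER_VALUE  # 2 = K and V
    return context_tokens * per_token / 2**30


def fits_in_vram(weights_gib: float, context_tokens: int,
                 vram_gib: float = 12.0, overhead_gib: float = 1.0) -> bool:
    """True if weights + KV cache + a hypothetical fixed overhead fit in VRAM."""
    return weights_gib + kv_cache_gib(context_tokens) + overhead_gib <= vram_gib


if __name__ == "__main__":
    print(f"KV cache at 8,192 tokens: {kv_cache_gib(8192):.2f} GiB")
    print("~4.5 GiB of 4-bit weights fit:", fits_in_vram(4.5, 8192))
    print("~15 GiB of F16 weights fit:", fits_in_vram(15.0, 8192))
```

Under these assumptions, a full 8,192-token context adds about 1 GiB, which leaves plenty of room next to roughly 4.5 GiB of 4-bit weights (an approximate size), while about 15 GiB of F16 weights would not fit at all.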

Finding the Sweet Spot

While the NVIDIA 4070 Ti 12GB isn't the ideal choice for running the largest LLMs, it's a great option for more manageable models like Llama 3 8B.

Think of it this way:

- The NVIDIA 4070 Ti 12GB is like a powerful sports car that can handle everyday driving with ease.

- Larger models like Llama 3 70B are like racing cars requiring a more sophisticated track and resources.

For now, the focus is on Llama 3 8B, and the NVIDIA 4070 Ti 12GB is proving to be a great companion for it.

Practical Recommendations: Use Cases and Workarounds

Putting Your NVIDIA 4070 Ti 12GB to Work

The NVIDIA 4070 Ti 12GB paired with Llama 3 8B is a powerful combination suitable for a variety of tasks:

- Text generation: Create stories, poems, and articles with a personal touch.

- Translation: Translate text between languages.

- Summarization: Summarize long articles, meeting notes, or research papers, as long as they fit within the model's 8,192-token context window.

- Question answering: Get answers to your questions, including questions about your own documents when you include the relevant text in the prompt.

- Code generation: Generate code snippets for various programming languages, saving you time and effort.

Workarounds for Larger Models

If you're dead set on using Llama 3 70B or other larger models, consider these solutions:

- Cloud-based services: Use managed platforms such as Google Cloud Vertex AI or Amazon SageMaker for large-scale LLM inference.

- Model optimization: Explore techniques like quantization and pruning to reduce model size and memory footprint.

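- More GPU memory: Upgrade to a card with more VRAM, such as the NVIDIA 4090 (24 GB) or the A100 (40 GB or 80 GB). Even a single 24 GB card cannot hold Llama 3 70B's 4-bit weights, so the 70B model still calls for multiple GPUs or a larger card like the 80 GB A100.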

FAQ: Common Questions About LLMs and Devices

What is Quantization?

Quantization is a technique used to reduce the size of a model and its memory requirements by reducing the number of bits used to represent the model's weights. Think of it like compressing an image file. You lose some detail, but the file becomes smaller and faster to load.
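The idea can be shown with a toy quantizer. This Python sketch uses simple symmetric "absmax" scaling over blocks of 32 weights, keeping one scale per block plus a 4-bit integer per weight. It is a stand-in to illustrate the technique, not the Q4_K_M format itself, which uses a more elaborate layout with per-block scales and offsets; the block size and the 16-bit scale storage are assumptions.

```python
# A minimal blockwise 4-bit quantizer (symmetric, absmax scaling).
# Illustration only: this is NOT the Q4_K_M scheme.
from typing import List, Tuple

BLOCK_SIZE = 32  # assumption: weights are quantized in blocks of 32


def quantize_block(block: List[float]) -> Tuple[float, List[int]]:
    """Map floats to integers in [-7, 7] with one shared scale per block."""
    max_abs = max(abs(x) for x in block)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-7, min(7, round(x / scale))) for x in block]
    return scale, codes


def dequantize_block(scale: float, codes: List[int]) -> List[float]:
    """Recover approximate floats from the 4-bit codes."""
    return [scale * q for q in codes]


def quantize(weights: List[float]) -> List[Tuple[float, List[int]]]:
    """Split weights into blocks and quantize each one independently."""
    return [quantize_block(weights[i:i + BLOCK_SIZE])
            for i in range(0, len(weights), BLOCK_SIZE)]


if __name__ == "__main__":
    import random
    random.seed(0)
    weights = [random.gauss(0.0, 0.02) for _ in range(128)]
    restored = [w for scale, codes in quantize(weights)
                for w in dequantize_block(scale, codes)]
    max_err = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"max abs error: {max_err:.5f}")
```

If each scale is stored in 16 bits, a block of 32 weights costs 32 x 4 + 16 = 144 bits, or 4.5 bits per weight instead of 16 in F16. Each restored weight is off by at most half of its block's scale, which is the "lost detail" in the image-compression analogy.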

How Does Quantization Impact Performance?

Lower-precision quantization like Q4_K_M (mostly 4-bit weights) can speed up token generation. Producing each token requires reading essentially all of the model's weights from memory, so smaller weights mean less data to move. The trade-off is a slight loss of accuracy.
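The bandwidth argument gives a quick upper bound on generation speed. This sketch assumes about 504 GB/s of memory bandwidth for the 4070 Ti (its listed specification) and an approximate 4.9 GB file for Llama 3 8B at Q4_K_M; both numbers are assumptions, and the bound ignores compute time, KV-cache reads, and software overhead.

```python
# Memory-bandwidth ceiling on single-stream token generation: each token
# requires streaming (roughly) every weight from VRAM once, so
#     tokens/s <= bandwidth / bytes_of_weights
# Assumptions: ~504 GB/s for the 4070 Ti and approximate model sizes.
BANDWIDTH_GB_S = 504.0


def max_tokens_per_second(weights_gb: float, bandwidth_gb_s: float = BANDWIDTH_GB_S) -> float:
    """Upper bound; ignores KV-cache reads, compute time, and kernel overhead."""
    return bandwidth_gb_s / weights_gb


if __name__ == "__main__":
    for label, size_gb in {"Q4_K_M (~4.9 GB)": 4.9, "F16 (~16 GB)": 16.0}.items():
        print(f"{label:18} <= {max_tokens_per_second(size_gb):5.1f} tokens/s")
```

Under those assumptions the ceiling for Q4_K_M is roughly 100 tokens per second, comfortably above the measured 82.21, while 16-bit weights would cap out around 31 tokens per second even if they fit in VRAM.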

Can I Run Larger LLMs on my CPU?

CPUs can run LLMs, and because system RAM is usually larger than a GPU's VRAM, a CPU setup can load models that don't fit on a 12 GB card. Generation is much slower, though: GPUs are designed for parallel computation and have far higher memory bandwidth, which token generation depends on heavily.

What About Other LLMs?

The performance of different LLMs can vary depending on their size, architecture, and training data. The provided data focuses on the performance of Llama 3 8B with the NVIDIA 4070 Ti 12GB for illustrative purposes.
