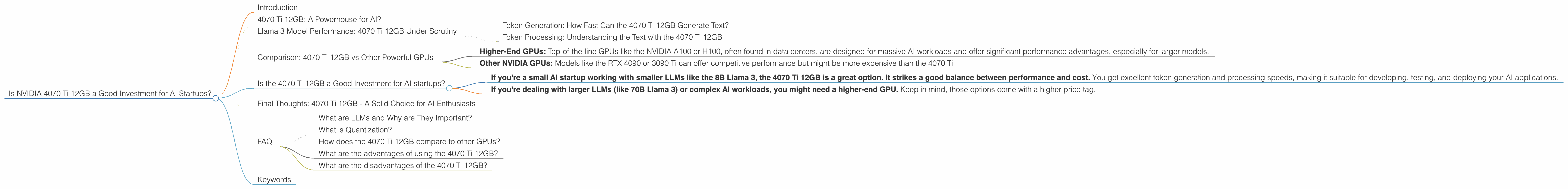

Is the NVIDIA 4070 Ti 12GB a Good Investment for AI Startups?

Introduction

The world of AI is buzzing with excitement, driven by the rapid advancements in large language models (LLMs) like ChatGPT and Bard. These models can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But running these LLMs requires powerful hardware, and finding the right balance between performance and cost is crucial, especially for AI startups.

This article looks at NVIDIA's 4070 Ti 12GB GPU, a popular choice for developers and AI enthusiasts, and evaluates its suitability for AI startups looking to deploy LLMs. We'll analyze its performance on the Llama 3 family, focusing on benchmark figures for the 8B model. Buckle up, this journey might be a bit geeky, but stick with us; you'll learn some cool stuff about AI and GPUs.

4070 Ti 12GB: A Powerhouse for AI?

Let's start with the basics. The NVIDIA 4070 Ti 12GB is a consumer graphics processing unit (GPU) built for demanding tasks like gaming and video editing, and yes, you guessed it, it handles AI development too. It carries 12 GB of GDDR6X video memory, and that capacity determines how large an AI model the card can hold and process.

Llama 3 Model Performance: 4070 Ti 12GB Under Scrutiny

To gauge the 4070 Ti 12GB's AI capabilities, we're focusing on the Llama 3 family, a popular set of openly available LLMs. The Llama 3 family includes models of varying sizes, from 8B to 70B parameters, each with its own processing demands. We'll be looking at the 8B model in particular, and we'll analyze the performance of the 4070 Ti 12GB in two key scenarios:

- Token Generation: This measures how quickly the GPU can generate new text tokens (think of them as building blocks of language) based on the input prompt.

- Token Processing: This refers to how fast the GPU can read the tokens of the input prompt before it starts generating (often called prompt processing), which matters most for long inputs such as documents to summarize or translate.

Token Generation: How Fast Can the 4070 Ti 12GB Generate Text?

The 4070 Ti 12GB delivers impressive token generation speeds with the Llama 3 8B model, particularly when using quantization techniques. Quantization is like a "diet" for AI models, making them smaller and more efficient by reducing the precision of the model's mathematical calculations.
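To see why quantization matters on a 12 GB card, here is a rough sketch that estimates the size of the model weights alone at different precisions. The parameter counts are nominal (8B and 70B), and the 4.85 bits per weight used for the 4-bit case is an assumed average, not a figure from the benchmark. The estimate ignores the KV cache and runtime overhead, so real usage will be higher.

```python
# Weights-only estimate: ignores the KV cache, activations, and runtime overhead,
# so real memory use will be higher than these figures.
VRAM_GB = 12.0  # RTX 4070 Ti 12GB

# Nominal parameter counts, not exact figures.
PARAMS = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}

# Bits per weight. 16 is exact for F16; 4.85 is an ASSUMED average for a
# 4-bit quantized file and is not taken from the benchmark.
BITS_PER_WEIGHT = {"F16": 16.0, "4-bit (assumed)": 4.85}


def weights_gb(n_params: float, bits_per_weight: float) -> float:
    """Return the size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


for model, n_params in PARAMS.items():
    for fmt, bits in BITS_PER_WEIGHT.items():
        size = weights_gb(n_params, bits)
        verdict = "fits" if size < VRAM_GB else "does not fit"
        print(f"{model} {fmt}: {size:.1f} GB of weights, {verdict} in {VRAM_GB:.0f} GB")
```

Under these assumptions, only the 4-bit 8B weights (roughly 5 GB) fit in 12 GB. The 8B model at F16 needs about 16 GB for weights alone, and the 70B model needs about 42 GB even at 4 bits.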

The 4070 Ti 12GB achieves 82.21 tokens per second when running the Llama 3 8B model with Q4_K_M quantization. That works out to roughly 12 milliseconds per token, which is pretty speedy! Unfortunately, there's no data available for the F16 (half-precision) setting for the Llama 3 8B model, so we can't compare the speeds in this case.
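The unit conversion is easy to get wrong, so here is a small sketch of the arithmetic. The throughput figure comes from the benchmark above; the helper names and the 500-token reply length are illustrative choices, not part of the benchmark.

```python
GEN_TOKENS_PER_SEC = 82.21  # Llama 3 8B, 4-bit quantized, from the benchmark above


def ms_per_token(tokens_per_sec: float) -> float:
    """Convert a throughput in tokens/second to a latency in milliseconds/token."""
    return 1000.0 / tokens_per_sec


def seconds_for(n_tokens: int, tokens_per_sec: float) -> float:
    """Time to produce n_tokens at a constant throughput."""
    return n_tokens / tokens_per_sec


print(f"{ms_per_token(GEN_TOKENS_PER_SEC):.2f} ms per token")                  # 12.16 ms per token
print(f"{seconds_for(500, GEN_TOKENS_PER_SEC):.1f} s for a 500-token reply")  # 6.1 s for a 500-token reply
```

For comparison, a fast human reader gets through a handful of words per second, so a single user would see text appear well ahead of reading speed.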

Token Processing: Understanding the Text with the 4070 Ti 12GB

For token processing, the 4070 Ti 12GB shines again. With the Llama 3 8B model using Q4_K_M quantization, it processes 3653.07 tokens per second, or about 0.27 milliseconds per token. This is lightning fast! No data is available for F16 precision or for larger models like Llama 3 70B, so we can't compare its performance in these scenarios.
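The two figures can be combined into a back-of-the-envelope latency estimate for a single request. This is a simplified sketch: it assumes one request at a time, no batching, and throughput that stays constant regardless of context length, none of which the benchmark guarantees. The function name and the example request sizes are hypothetical.

```python
PROMPT_TOKENS_PER_SEC = 3653.07  # prompt processing, Llama 3 8B, 4-bit quantized
GEN_TOKENS_PER_SEC = 82.21       # token generation, same model and quantization


def request_latency_s(prompt_tokens: int, output_tokens: int) -> float:
    """Estimate total seconds for one request: read the prompt, then generate."""
    prefill = prompt_tokens / PROMPT_TOKENS_PER_SEC
    decode = output_tokens / GEN_TOKENS_PER_SEC
    return prefill + decode


# Hypothetical request: a 2000-token prompt and a 300-token answer.
total = request_latency_s(2000, 300)
prefill_share = (2000 / PROMPT_TOKENS_PER_SEC) / total
print(f"estimated latency: {total:.2f} s ({prefill_share:.0%} spent on the prompt)")
```

Even with a prompt several times longer than the answer, most of the time goes to generation, so the generation speed is the number to watch for interactive applications.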

Comparison: 4070 Ti 12GB vs Other Powerful GPUs

While the 4070 Ti 12GB puts up impressive numbers, how does it stack up against other popular GPUs used for AI work? Unfortunately, we don't have performance data for other GPUs on the Llama 3 8B model to make a direct comparison. However, a few general points still hold:

- Higher-End GPUs: Top-of-the-line GPUs like the NVIDIA A100 or H100, often found in data centers, are designed for massive AI workloads and offer significant performance advantages, especially for larger models.

- Other NVIDIA GPUs: Cards like the RTX 4090 or 3090 Ti carry 24 GB of video memory, which leaves room for larger models, but they typically cost more than the 4070 Ti.

Remember, the right GPU choice depends on your specific needs and budget. If you're working with smaller models like the Llama 3 8B, the 4070 Ti 12GB offers a compelling balance of performance and cost. However, if you're tackling larger models or dealing with demanding AI workloads, you might need to consider beefier hardware.

Is the 4070 Ti 12GB a Good Investment for AI Startups?

So, here's the answer in a nutshell:

- If you're a small AI startup working with smaller LLMs like the 8B Llama 3, the 4070 Ti 12GB is a great option. It strikes a good balance between performance and cost. You get excellent token generation and processing speeds, making it suitable for developing, testing, and deploying your AI applications.

- If you're dealing with larger LLMs (like 70B Llama 3) or complex AI workloads, you might need a higher-end GPU. Keep in mind, those options come with a higher price tag.

Final Thoughts: 4070 Ti 12GB - A Solid Choice for AI Enthusiasts

The NVIDIA 4070 Ti 12GB is a solid choice for AI startups and enthusiasts working with smaller LLMs. Its performance, especially with quantization formats like Q4_K_M, makes it a worthy investment for developing and deploying your AI projects. Remember to consider your specific needs and resources before making a final decision. The AI landscape is constantly evolving, so stay tuned for new hardware and software advancements that could change the game.

FAQ

What are LLMs and Why are They Important?

LLMs are large language models, essentially computer programs that can understand and generate human-like text. They are revolutionizing various fields, from customer service chatbots to medical diagnosis tools.

What is Quantization?

Quantization is a technique used to reduce the size of AI models and make them run faster. It involves converting the model's parameters (the numbers representing the model's knowledge) to lower-precision formats. Think of it like measuring with a ruler that has coarser markings; you lose some precision, but you get a much more compact and efficient model.
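To make the idea concrete, here is a toy sketch of 4-bit quantization on a handful of made-up weights. It uses a simple symmetric scheme with one scale per block, chosen for illustration only; it is not the scheme used by the quantized model in the benchmark, and the sample weights are invented.

```python
def quantize_4bit(weights):
    """Symmetric 4-bit quantization of one block.

    Returns (scale, codes), where each code is an integer in [-7, 7].
    """
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-7, min(7, round(w / scale))) for w in weights]
    return scale, codes


def dequantize(scale, codes):
    """Recover approximate weights from the integer codes."""
    return [c * scale for c in codes]


# Invented example weights, not taken from any real model.
weights = [0.12, -0.53, 0.98, -0.07, 0.33, -0.91, 0.05, 0.61]
scale, codes = quantize_4bit(weights)
restored = dequantize(scale, codes)
max_err = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:", codes)
print(f"largest rounding error: {max_err:.3f} (at most scale / 2 = {scale / 2:.3f})")
```

Each weight is stored as a small integer plus a shared scale, which is where the size reduction comes from. The rounding error is the precision you give up in exchange.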

How does the 4070 Ti 12GB compare to other GPUs?

Unfortunately, we don't have the necessary benchmark data to make a direct comparison. However, high-end GPUs like the NVIDIA A100 and H100 are designed specifically for massive AI workloads and typically offer superior performance.

What are the advantages of using the 4070 Ti 12GB?

The 4070 Ti 12GB offers a good balance of performance and price. It's a cost-effective solution for developers and startups working with smaller LLMs, especially those utilizing quantization techniques.

What are the disadvantages of the 4070 Ti 12GB?

While it's a powerful GPU, it might not be powerful enough for AI tasks involving larger LLMs or complex AI applications. Also, keep in mind, the GPU market is dynamic, and newer models with better performance might emerge in the future.

Keywords

NVIDIA 4070 Ti 12GB, AI startups, LLM, Llama 3, token generation, token processing, quantization, Q4_K_M, F16, GPU, AI, deep learning, machine learning, natural language processing, GPT-3, ChatGPT, Bard, performance, cost, efficiency, investment, development, deployment, benchmarks