Is NVIDIA 3090 24GB x2 Powerful Enough for Llama 3 8B?

Introduction

The world of Large Language Models (LLMs) is buzzing with excitement, and for good reason. LLMs like Llama 3 are revolutionizing the way we interact with technology, from generating creative content to automating complex tasks. But running these powerful models locally requires serious hardware muscle. Enter the NVIDIA 3090 24GB x2: two GeForce RTX 3090 cards with 24GB of VRAM each, for 48GB in total.

This article dives deep into the performance of the 3090 24GB x2 when running the Llama 3 8B model, answering the question: is this setup truly capable of handling the demands of this LLM? We'll look at token generation speeds, compare quantization levels, and offer practical recommendations for use cases.

Performance Analysis: Token Generation Speed Benchmarks

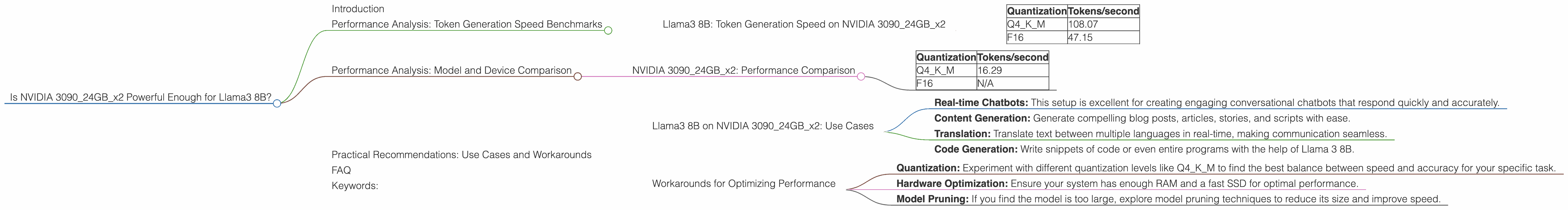

Llama 3 8B: Token Generation Speed on NVIDIA 3090 24GB x2

The first thing we want to know is how fast this setup generates tokens for Llama 3 8B. We compare two formats: Q4_K_M, a roughly 4-bit quantization that compresses the weights to cut memory use and speed up generation, and F16, which stores the weights as 16-bit floating-point numbers.

| Quantization | Tokens/second |
|---|---|
| Q4_K_M | 108.07 |
| F16 | 47.15 |

Table 1: Token Generation Speed for Llama 3 8B on NVIDIA 3090 24GB x2

What does this tell us?

The 3090 24GB x2 is a true powerhouse for Llama 3 8B, especially with Q4_K_M quantization. It generates over 108 tokens per second, which is impressive for a local setup and far faster than anyone can read. That makes interactive tasks like writing, translating, and generating creative text feel smooth. Even at F16, about 47 tokens per second is comfortably usable.

Key Takeaway: Q4_K_M generates tokens about 2.3 times faster than F16 (108.07 vs. 47.15 tokens/second), making it the top choice for real-time use cases.
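To make the numbers in Table 1 concrete, this short Python sketch converts each rate into the wall-clock time for one reply and computes the Q4_K_M-over-F16 speedup. It assumes a constant generation rate and ignores prompt-processing time; the 500-token reply length is a hypothetical example.

```python
# Measured Llama 3 8B generation rates on the 3090 24GB x2 (Table 1).
RATES_TOK_PER_S = {"Q4_K_M": 108.07, "F16": 47.15}


def generation_time_s(n_tokens: int, tokens_per_second: float) -> float:
    """Seconds to generate n_tokens, assuming a constant generation rate."""
    return n_tokens / tokens_per_second


def speedup(fast_rate: float, slow_rate: float) -> float:
    """How many times faster fast_rate is than slow_rate."""
    return fast_rate / slow_rate


if __name__ == "__main__":
    reply_tokens = 500  # hypothetical reply length, in tokens
    for quant, rate in RATES_TOK_PER_S.items():
        print(f"{quant}: {generation_time_s(reply_tokens, rate):.1f} s for {reply_tokens} tokens")
    ratio = speedup(RATES_TOK_PER_S["Q4_K_M"], RATES_TOK_PER_S["F16"])
    print(f"Q4_K_M is {ratio:.2f}x faster than F16")
```

With these figures, a 500-token reply takes about 4.6 s at Q4_K_M versus about 10.6 s at F16, a speedup of roughly 2.29x.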

Performance Analysis: Model Comparison

NVIDIA 3090 24GB x2: Performance Comparison

To put the 8B results in context, let's compare them with the larger Llama 3 70B model running on the same 3090 24GB x2 setup.

Llama 3 70B on 3090 24GB x2:

| Quantization | Tokens/second |
|---|---|
| Q4_K_M | 16.29 |
| F16 | N/A |

Table 2: Token Generation Speed for Llama 3 70B on NVIDIA 3090 24GB x2

Analysis:

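The 3090 24GB x2 runs Llama 3 70B with Q4_K_M quantization at 16.29 tokens per second, about 6.6 times slower than Llama 3 8B at the same quantization (108.07 tokens/second). This is expected, since the 70B model has roughly nine times as many parameters, but it shows that the setup can still handle it, just less fluidly. No F16 result is available: at 16 bits per weight, the 70B model's weights alone would need about 140GB, far more than the 48GB of combined VRAM.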
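The F16 gap in Table 2 follows from simple memory arithmetic. This Python sketch estimates the memory for the weights alone as parameters × bits per weight ÷ 8. The parameter counts are nominal, the ~4.8 bits per weight for Q4_K_M is an assumed average rather than an exact figure, and the KV cache and runtime overhead are ignored, so real usage is somewhat higher.

```python
GPU_VRAM_GB = 24.0
NUM_GPUS = 2
TOTAL_VRAM_GB = GPU_VRAM_GB * NUM_GPUS  # 48 GB across both cards

# Bits stored per weight. F16 is exact; the Q4_K_M value is an assumed
# average (per-block scales push it above 4 bits) and varies by model.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.8}

# Nominal parameter counts (the real checkpoints are slightly larger).
PARAMS = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}


def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Memory for the weights alone, in decimal gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


if __name__ == "__main__":
    for model, n in PARAMS.items():
        for quant, bits in BITS_PER_WEIGHT.items():
            gb = weight_memory_gb(n, bits)
            verdict = "fits" if gb < TOTAL_VRAM_GB else "does not fit"
            print(f"{model} {quant}: ~{gb:.0f} GB of weights, {verdict} in {TOTAL_VRAM_GB:.0f} GB")
```

Under these assumptions, the 70B model needs about 140GB of weights in F16 but roughly 42GB in Q4_K_M, which squeezes into 48GB with little room left for context. The 8B model fits comfortably in either format.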

Key Takeaway: The 3090 24GB x2 is better suited to Llama 3 8B than to Llama 3 70B, especially for real-time use.

Practical Recommendations: Use Cases and Workarounds

Llama 3 8B on NVIDIA 3090 24GB x2: Use Cases

Armed with these performance insights, here is where the 3090 24GB x2 with Llama 3 8B shines:

- Real-time Chatbots: At over 100 tokens per second with Q4_K_M, replies stream faster than users can read, which suits responsive conversational chatbots.

- Content Generation: Generate compelling blog posts, articles, stories, and scripts with ease.

- Translation: Translate text between multiple languages in real-time, making communication seamless.

- Code Generation: Write snippets of code or even entire programs with the help of Llama 3 8B.

Workarounds for Optimizing Performance

- Quantization: Compare quantization levels such as Q4_K_M and F16 on your own prompts to find the best balance between speed and output quality for your specific task.

- Hardware Optimization: Ensure your system has enough RAM and a fast SSD. The SSD mainly shortens model load times; once the weights are in VRAM, it does not affect token generation speed.

- Model Pruning: If you find the model is too large, explore model pruning techniques to reduce its size and improve speed.
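When comparing quantization levels on your own hardware, it helps to measure tokens per second the same way every time. This Python sketch times any streaming generator and reports the median over a few runs. The `generate` callables and `fake_model` are hypothetical stand-ins: wrap whatever streaming API your inference runtime provides. This end-to-end timing also includes prompt processing, so it will read slightly lower than a pure decode rate.

```python
import statistics
import time
from typing import Callable, Dict, Iterable

TokenStream = Callable[[], Iterable[str]]


def tokens_per_second(generate: TokenStream) -> float:
    """Run one generation and return tokens produced per wall-clock second."""
    start = time.perf_counter()
    n_tokens = sum(1 for _ in generate())  # consume the stream, counting tokens
    elapsed = time.perf_counter() - start
    return n_tokens / elapsed if elapsed > 0 else float("inf")


def compare(configs: Dict[str, TokenStream], repeats: int = 3) -> Dict[str, float]:
    """Median tokens/second for each named configuration (e.g. one per quantization)."""
    return {
        name: statistics.median([tokens_per_second(gen) for _ in range(repeats)])
        for name, gen in configs.items()
    }


def fake_model(delay_s: float, n_tokens: int = 10) -> TokenStream:
    """Hypothetical stand-in for a real model: yields tokens at a fixed pace."""
    def generate() -> Iterable[str]:
        for i in range(n_tokens):
            time.sleep(delay_s)
            yield f"tok{i}"
    return generate


if __name__ == "__main__":
    print(compare({"fast stand-in": fake_model(0.001), "slow stand-in": fake_model(0.02)}))
```

In practice you would register one entry per model file (for example a Q4_K_M build and an F16 build) and feed each the same prompt.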

FAQ

Q: What is quantization, and how does it affect performance?

A: Quantization is like compressing a model. It stores each weight with fewer bits (for example, about 4 bits instead of 16), which reduces memory usage and often speeds up generation at the cost of some precision. Think of it like using a simplified language instead of a complex one: it takes less space to store and is faster to process.
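To illustrate the idea, here is a minimal Python sketch of symmetric 4-bit block quantization: each block of weights shares one floating-point scale, and every weight is stored as a small integer code. This simplified scheme is chosen for illustration only; llama.cpp's Q4_K_M is more elaborate (it groups weights into super-blocks with their own quantized scales and keeps some tensors at higher precision).

```python
from typing import List, Tuple


def quantize_block(weights: List[float], bits: int = 4) -> Tuple[float, List[int]]:
    """Symmetric absmax quantization of one block to signed integer codes."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4 bits, so codes lie in [-7, 7]
    absmax = max(abs(w) for w in weights)
    scale = absmax / qmax if absmax > 0 else 1.0  # one shared scale per block
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale: float, codes: List[int]) -> List[float]:
    """Approximate the original weights from the scale and integer codes."""
    return [c * scale for c in codes]


if __name__ == "__main__":
    block = [0.12, -0.5, 0.33, 0.07, -0.21, 0.49, -0.02, 0.0]  # made-up weights
    scale, codes = quantize_block(block)
    restored = dequantize_block(scale, codes)
    print("codes:", codes)
    print("max error:", max(abs(a - b) for a, b in zip(block, restored)))
```

Each 16-bit weight becomes a 4-bit code plus a share of one scale per block, which is where the memory saving comes from; with round-to-nearest, the reconstruction error is at most half a quantization step per weight.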

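Q: Is the 3090 24GB x2 overkill for the Llama 3 8B model?

A: It depends on your goals. The setup delivers the speeds shown in Table 1, but the 8B model does not need all 48GB: its F16 weights take about 16GB and fit on a single 24GB card, and the Q4_K_M weights are much smaller. The second card matters most for larger models such as Llama 3 70B. If you're on a budget and only plan to run the 8B model, a single GPU with enough VRAM may be sufficient.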

Q: What are other good GPUs for running Llama 3 models locally?

A: There are various GPUs suitable for LLMs, ranging from mid-range to high-end options. Some popular choices include the NVIDIA RTX 40 series, the AMD Radeon RX 7000 series, and Apple silicon such as the M2 Max, whose integrated GPU shares unified memory with the CPU.

Q: Where can I learn more about LLMs and how to run them locally?

A: Start with resources like the Hugging Face website (https://huggingface.co/), which offers a wealth of information, models, and tutorials. You can also find many helpful resources on GitHub.

