Is NVIDIA 3090 24GB x2 Powerful Enough for Llama3 70B?

Introduction: Stepping into the World of Local LLMs

Large Language Models (LLMs) are revolutionizing the way we interact with technology. These powerful AI systems can generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But accessing these capabilities often requires relying on cloud services, which can be expensive and limit control over your data.

That's where local LLMs come in. Running LLMs on your own hardware opens up a world of possibilities for developers and enthusiasts. You can experiment with LLMs, fine-tune them for specific tasks, and even create your own applications without the limitations of cloud-based services.

One of the biggest challenges in running LLMs locally is finding the right hardware to handle the demanding computations. In this article, we'll dive deep into the performance of a dual NVIDIA 3090 24GB setup (3090 24GB x2), exploring its capabilities with the Llama3 70B model. We'll analyze performance benchmarks, compare different configurations, and provide practical recommendations to help you make the best choice for your local LLM setup.

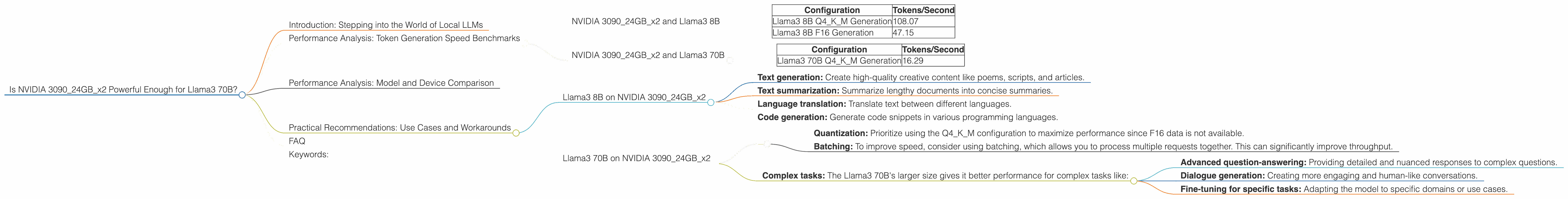

Performance Analysis: Token Generation Speed Benchmarks

The performance of an LLM is often measured by its token generation speed. This metric represents how many tokens (words or sub-words) the model can generate per second. A higher token generation speed means faster responses and a smoother user experience.
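As a minimal sketch of how this metric is computed, the helper below divides the number of generated tokens by the elapsed wall-clock time. The function name and the sample numbers are illustrative assumptions, not taken from any benchmark tool.

```python
def tokens_per_second(generated_tokens: int, elapsed_seconds: float) -> float:
    """Return generation speed as tokens per second."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return generated_tokens / elapsed_seconds


# Hypothetical run: 256 tokens generated in 8 seconds of wall-clock time.
speed = tokens_per_second(256, 8.0)
print(f"{speed:.2f} tokens/second")
```

In practice you would time only the generation phase, since prompt processing is usually reported as a separate figure.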

NVIDIA 3090 24GB x2 and Llama3 8B

Let's start with the smaller Llama3 8B model. As the table below shows, the NVIDIA 3090 24GB x2 setup delivers strong results for both the quantized (Q4_K_M) and floating-point (F16) configurations.

| Configuration | Tokens/Second |
|---|---|
| Llama3 8B Q4_K_M Generation | 108.07 |
| Llama3 8B F16 Generation | 47.15 |

Quantization is a technique that reduces the size of the model by using fewer bits to represent each number. This reduces memory usage and increases performance, but it can also slightly decrease accuracy. Floating-point representations offer higher precision but come with larger memory requirements and potentially slower performance.
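To make the memory side of this trade-off concrete, here is a rough back-of-the-envelope sketch. It assumes nominal parameter counts (8 and 70 billion), 16 bits per weight for F16, and an average of about 4.8 bits per weight for a 4-bit K-quant. That last figure is an assumption for illustration, and the real value varies by model. The estimate covers weights only and ignores the KV cache and runtime overhead.

```python
def weight_memory_gb(n_params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GB (1 GB = 1e9 bytes)."""
    return n_params_billion * bits_per_weight / 8


TOTAL_VRAM_GB = 48.0   # two cards with 24 GB each
F16_BITS = 16.0
Q4_BITS = 4.8          # assumed average for a 4-bit K-quant; varies in practice

for name, params in [("Llama3 8B", 8.0), ("Llama3 70B", 70.0)]:
    for label, bits in [("F16", F16_BITS), ("4-bit", Q4_BITS)]:
        size = weight_memory_gb(params, bits)
        verdict = "fits" if size < TOTAL_VRAM_GB else "does not fit"
        print(f"{name} {label}: ~{size:.1f} GB of weights, {verdict} in {TOTAL_VRAM_GB:.0f} GB")
```

Under these assumptions, the 70B model only fits in the combined VRAM once it is quantized.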

Note: With 48GB of combined VRAM (24GB per card), the 3090 24GB x2 setup is well-suited to the 8B models; even the F16 weights, at roughly 16GB, fit comfortably.

NVIDIA 3090 24GB x2 and Llama3 70B

Now, let's move on to the more demanding Llama3 70B. The 3090 24GB x2 setup can run this much larger model, but generation is considerably slower. We only have data for the quantized version.

| Configuration | Tokens/Second |
|---|---|
| Llama3 70B Q4_K_M Generation | 16.29 |

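Note: Llama3 70B F16 performance is not available. At 2 bytes per parameter, the F16 weights alone come to roughly 140GB, well beyond the 48GB of combined VRAM in this setup.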
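To put these figures in perspective, the sketch below converts the measured generation speeds from the tables above into approximate waiting times. The 500-token response length is an assumption chosen for illustration, and the estimate ignores prompt processing time.

```python
# Generation speeds (tokens/second) taken from the benchmark tables above.
BENCHMARKS = {
    "Llama3 8B Q4_K_M": 108.07,
    "Llama3 8B F16": 47.15,
    "Llama3 70B Q4_K_M": 16.29,
}

RESPONSE_TOKENS = 500  # assumed response length, for illustration only


def seconds_for_response(n_tokens: int, tokens_per_second: float) -> float:
    """Time to generate n_tokens at a constant generation speed."""
    return n_tokens / tokens_per_second


for config, speed in BENCHMARKS.items():
    wait = seconds_for_response(RESPONSE_TOKENS, speed)
    print(f"{config}: ~{wait:.1f} s for {RESPONSE_TOKENS} tokens")
```

At these speeds, the 70B model takes roughly half a minute for a response of that length, while the quantized 8B model finishes in a few seconds.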

Performance Analysis: Model and Device Comparison

To understand the NVIDIA 3090 24GB x2's performance in context, we would need to compare it with other devices and models. However, we are limited to the data available for the specific device and models mentioned in this article.

Practical Recommendations: Use Cases and Workarounds

Llama3 8B on NVIDIA 3090 24GB x2

The NVIDIA 3090 24GB x2 setup is a great choice for running Llama3 8B locally. It offers a balance between performance and affordability. You can achieve high token generation speeds for various use cases, including:

- Text generation: Create high-quality creative content like poems, scripts, and articles.

- Text summarization: Summarize lengthy documents into concise summaries.

- Language translation: Translate text between different languages.

- Code generation: Generate code snippets in various programming languages.

Llama3 70B on NVIDIA 3090 24GB x2

The 3090 24GB x2 setup can still handle the massive Llama3 70B, but you will see slower speeds than with smaller models. Consider these points:

Performance Considerations:

- Quantization: Use the Q4_K_M configuration, which is the only 70B configuration with benchmark data on this setup; F16 data is not available.

- Batching: To improve speed, consider using batching, which allows you to process multiple requests together. This can significantly improve aggregate throughput, although it does not make any single request faster.
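As a rough illustration of the batching idea, the sketch below groups pending prompts into fixed-size batches. The function name, prompts, and batch size are hypothetical. Inference servers that support batching usually handle this scheduling internally, so this only shows the grouping concept, not how any particular runtime implements it.

```python
from typing import Iterator, List


def make_batches(prompts: List[str], batch_size: int) -> Iterator[List[str]]:
    """Yield consecutive groups of at most batch_size prompts."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    for start in range(0, len(prompts), batch_size):
        yield prompts[start:start + batch_size]


# Hypothetical queue of five pending requests, processed two at a time.
pending = [f"prompt {i}" for i in range(5)]
batches = list(make_batches(pending, batch_size=2))

for number, batch in enumerate(batches, start=1):
    print(f"batch {number}: {batch}")
```

Each batch would then be submitted to the model as a single unit, so the cost of reading the weights from memory is shared across the requests in it.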

Use Cases:

- Complex tasks: The Llama3 70B's larger size gives it better output quality on complex tasks such as:

  - Advanced question-answering: Providing detailed and nuanced responses to complex questions.

  - Dialogue generation: Creating more engaging and human-like conversations.

- Fine-tuning for specific tasks: Adapting the model to specific domains or use cases.

FAQ

Q: What is an LLM?

A: An LLM (Large Language Model) is a type of artificial intelligence system trained on massive amounts of text data. It can understand and generate human language, perform tasks like translation, summarization, question answering, and more.

Q: What is quantization?

A: Quantization is a technique used to reduce the size of a model by using fewer bits to represent each number. This reduces memory usage and increases speed, at the cost of a slight decrease in accuracy.

Q: Is there a way to improve the performance of Llama3 70B on my NVIDIA 3090 24GB x2?

A: Yes. Batching can significantly improve overall throughput by allowing you to process multiple requests together. You can also experiment with different quantization methods, trading some accuracy for speed and memory headroom.

Q: Should I get a more powerful GPU if I want to run larger models?

A: If you plan to work with models larger than Llama3 70B, or to run 70B at higher precision than Q4_K_M, investing in hardware with higher memory capacity and faster processing speeds is recommended.

Keywords:

LLM, Large Language Model, Llama3, 70B, NVIDIA, 3090 24GB x2, GPU, Token Generation Speed, Quantization, F16, Q4_K_M, Performance, Local LLM, Inference, Batching, Text Generation, Summarization, Translation, Code Generation, Question-Answering, Dialogue Generation, Fine-tuning