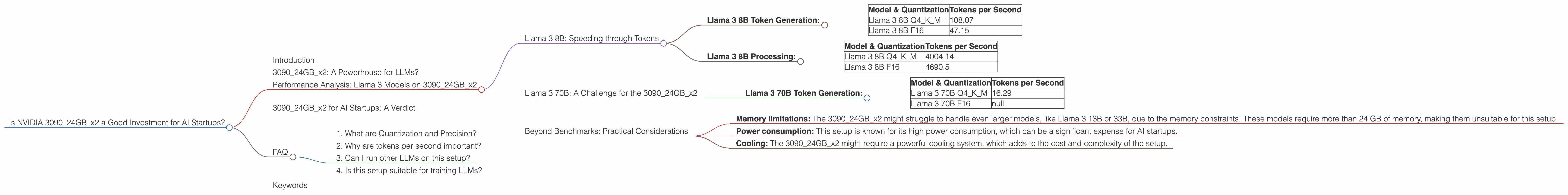

Is NVIDIA 3090 24GB x2 a Good Investment for AI Startups?

Introduction

The AI landscape is evolving rapidly, with large language models (LLMs) becoming increasingly popular. While the cloud offers powerful solutions, many startups are looking to train and run LLMs locally to gain more control and reduce costs. One of the key questions that arises is what hardware is best suited for this task.

This article explores whether the NVIDIA 3090 24GB x2 setup, a popular choice among AI enthusiasts, is a good fit for AI startups that want to run LLMs locally. We'll look at how this setup performs with several Llama 3 models and then weigh its practicality for AI startups.

3090 24GB x2: A Powerhouse for LLMs?

The NVIDIA 3090 24GB x2 configuration combines two NVIDIA GeForce RTX 3090 GPUs, each equipped with 24 GB of GDDR6X memory, for 48 GB of VRAM in total. The memory is not pooled automatically: a model larger than 24 GB has to be split across both cards by the inference software. Together, though, the cards can hold models that no single 24 GB card can. Let's see how this setup performs with different LLM models.

Performance Analysis: Llama 3 Models on 3090 24GB x2

We'll start by focusing on the Llama 3 series from Meta, a popular family of openly available LLMs known for their strong performance and versatility.

Llama 3 8B: Speeding through Tokens

Let's start with the Llama 3 8B model. This model is known for its efficiency, making it suitable for a wide array of tasks.

Llama 3 8B Token Generation:

| Model & Quantization | Tokens per Second |
|---|---|
| Llama 3 8B Q4_K_M | 108.07 |
| Llama 3 8B F16 | 47.15 |

Let's break down these numbers:

- Llama 3 8B Q4_K_M: This setup generates 108.07 tokens per second with Q4_K_M weights, a roughly 4-bit quantization format from llama.cpp. Quantization is like compressing a high-resolution photo: the file gets much smaller while most of the detail survives. It shrinks the model considerably at the cost of a small loss in accuracy, and for a model of this size it delivers fast, responsive generation.

- Llama 3 8B F16: With 16-bit floating-point (F16) weights, the setup achieves 47.15 tokens per second, less than half the Q4_K_M speed. F16 keeps essentially the model's original quality, but its weights are roughly three times larger, and token generation is largely limited by how quickly those weights can be read from memory.
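The gap between the two rows can be sanity-checked with a common rule of thumb: for a single stream of text, every weight is read from VRAM once per generated token, so speed cannot exceed memory bandwidth divided by the size of the weights. This sketch assumes the RTX 3090's spec-sheet bandwidth of 936 GB/s, approximate weight sizes of 4.9 GB (Q4_K_M) and 16.1 GB (F16), and that only one card's bandwidth is in play at a time when layers are split across the two GPUs. These are assumptions, not figures from the benchmark.

```python
# Rough ceiling on single-stream token generation speed.
# Assumption: producing each new token requires reading every weight from VRAM
# once, so speed <= memory bandwidth / weight size. KV-cache reads, kernel
# overhead and multi-GPU hand-offs are ignored, so real speeds land lower.

RTX_3090_BANDWIDTH_GB_S = 936.0  # spec-sheet bandwidth of one RTX 3090

# Approximate weight sizes in GB (assumptions, not measured in the benchmark).
WEIGHT_SIZE_GB = {
    "Llama 3 8B Q4_K_M": 4.9,
    "Llama 3 8B F16": 16.1,
}

# Measured generation speeds from the table above, in tokens/s.
MEASURED_TOK_S = {
    "Llama 3 8B Q4_K_M": 108.07,
    "Llama 3 8B F16": 47.15,
}


def generation_ceiling(bandwidth_gb_s: float, weights_gb: float) -> float:
    """Bandwidth-bound upper limit on generated tokens per second."""
    return bandwidth_gb_s / weights_gb


if __name__ == "__main__":
    for name, size_gb in WEIGHT_SIZE_GB.items():
        ceiling = generation_ceiling(RTX_3090_BANDWIDTH_GB_S, size_gb)
        measured = MEASURED_TOK_S[name]
        print(f"{name}: ceiling ~{ceiling:.0f} tok/s, measured {measured} tok/s "
              f"({measured / ceiling:.0%} of the ceiling)")
```

Under these assumptions both measured speeds land below their ceilings (roughly 57% for Q4_K_M and 81% for F16), which fits the picture of generation being bandwidth-bound. Treat the ceiling as a sanity check, not a prediction.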

Think of it this way: at 108 tokens per second, a 100-word paragraph (roughly 130 tokens of English text) takes only about 1.2 seconds to generate. That's pretty impressive for a local setup.
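Converting word counts into generation time is simple arithmetic that can be reused for any speed in these tables. This minimal sketch assumes about 1.3 tokens per English word, a rough rule of thumb; the real ratio depends on the tokenizer and the text.

```python
# Back-of-the-envelope: time to generate a piece of text of a given word count.
# Assumption: English prose averages about 1.3 tokens per word. This is a rough
# rule of thumb; the actual ratio depends on the tokenizer and the text.

TOKENS_PER_WORD = 1.3  # assumed average, not measured


def seconds_to_generate(words: int, tokens_per_second: float,
                        tokens_per_word: float = TOKENS_PER_WORD) -> float:
    """Estimated generation time, ignoring prompt processing."""
    return words * tokens_per_word / tokens_per_second


if __name__ == "__main__":
    # Generation speeds from the Llama 3 8B table above, in tokens/s.
    for label, speed in [("Q4_K_M", 108.07), ("F16", 47.15)]:
        print(f"100 words at {label}: ~{seconds_to_generate(100, speed):.1f} s")
```

With these assumptions, 100 words take about 1.2 s at Q4_K_M and about 2.8 s at F16.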

Llama 3 8B Prompt Processing:

| Model & Quantization | Tokens per Second |
|---|---|
| Llama 3 8B Q4_K_M | 4004.14 |
| Llama 3 8B F16 | 4690.5 |

The 3090 24GB x2 setup also performs impressively at prompt processing (often called prefill), the phase in which the model reads the input prompt before it produces the first output token.

- Llama 3 8B Q4_K_M: This setup processes 4004.14 tokens per second, so even a 2,000-token prompt is read in about half a second before the answer starts streaming.

- Llama 3 8B F16: F16 reaches 4690.5 tokens per second, which is actually faster than Q4_K_M. Prompt processing handles many tokens in parallel and is limited mainly by compute rather than memory bandwidth, so the extra work of unpacking 4-bit weights likely outweighs their smaller size here.

Imagine you're having a conversation with a chatbot powered by Llama 3 8B running on this setup. Even long prompts are read in a fraction of a second, so how quickly a reply arrives depends mostly on its length and the generation speed.
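The two tables can be combined into a rough latency estimate for a single reply: the time to read the prompt at the processing rate plus the time to write the answer at the generation rate. This minimal sketch assumes both rates stay constant and ignores tokenization, sampling, and network overhead; the `Speeds` class and the 2,000-token prompt with a 300-token answer are hypothetical.

```python
# Rough latency of one chatbot reply from the two benchmark phases:
# prompt processing (reading the input) and token generation (writing the answer).
# Assumptions: both rates stay constant regardless of context length, and
# tokenization, sampling and network overhead are ignored.

from dataclasses import dataclass


@dataclass
class Speeds:
    prompt_tok_s: float      # prompt processing rate, tokens/s
    generation_tok_s: float  # token generation rate, tokens/s


def reply_latency(prompt_tokens: int, output_tokens: int, speeds: Speeds) -> tuple[float, float]:
    """Return (seconds until the answer starts, seconds until it is complete)."""
    start = prompt_tokens / speeds.prompt_tok_s
    total = start + output_tokens / speeds.generation_tok_s
    return start, total


if __name__ == "__main__":
    llama3_8b = {
        "Q4_K_M": Speeds(prompt_tok_s=4004.14, generation_tok_s=108.07),
        "F16": Speeds(prompt_tok_s=4690.5, generation_tok_s=47.15),
    }
    # Hypothetical request: a 2,000-token prompt and a 300-token answer.
    for label, speeds in llama3_8b.items():
        start, total = reply_latency(2000, 300, speeds)
        print(f"{label}: answer starts after ~{start:.2f} s, finishes after ~{total:.1f} s")
```

With these numbers, Q4_K_M starts answering after about 0.5 s and finishes after about 3.3 s. F16 starts slightly sooner (about 0.43 s) but needs about 6.8 s in total, because the answer, not the prompt, dominates the wait.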

Llama 3 70B: A Challenge for the 3090 24GB x2

Llama 3 70B Token Generation:

| Model & Quantization | Tokens per Second |
|---|---|
| Llama 3 70B Q4_K_M | 16.29 |
| Llama 3 70B F16 | N/A (no data) |

The 3090 24GB x2 setup faces a more challenging task with the larger Llama 3 70B model.

- Llama 3 70B Q4_K_M: The setup achieves 16.29 tokens per second, about 6.6 times slower than the 8B model at the same quantization. That is still respectable for a model of this scale and comfortably faster than most people read.

- Llama 3 70B F16: No F16 result is available. The most likely reason is that the F16 weights alone take about 140 GB, roughly three times the 48 GB of VRAM the two cards provide, so the model cannot run entirely on the GPUs at this precision.
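Memory is the most likely explanation for the missing F16 row. A minimal sketch of the usual estimate, weight size = parameters times bits per weight divided by 8, follows. It assumes rounded parameter counts (8.0B and 70.6B) and an average of about 4.85 bits per weight for Q4_K_M, and it ignores the KV cache and runtime overhead, so real requirements are somewhat higher.

```python
# Rough VRAM needed just to hold the model weights at different precisions.
# Assumptions: rounded parameter counts; Q4_K_M treated as ~4.85 bits/weight
# on average. KV cache, activations and CUDA overhead are NOT included.

BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}  # Q4_K_M value is approximate
PARAMS = {"Llama 3 8B": 8.0e9, "Llama 3 70B": 70.6e9}
TOTAL_VRAM_GB = 2 * 24  # two RTX 3090 cards


def weights_gb(params: float, bits_per_weight: float) -> float:
    """Size of the weights in gigabytes (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


if __name__ == "__main__":
    for model, n_params in PARAMS.items():
        for quant, bits in BITS_PER_WEIGHT.items():
            size = weights_gb(n_params, bits)
            verdict = "fits" if size < TOTAL_VRAM_GB else "does NOT fit"
            print(f"{model} {quant}: ~{size:.0f} GB -> {verdict} in {TOTAL_VRAM_GB} GB")
```

Under these assumptions, Llama 3 70B at Q4_K_M needs about 43 GB. It fits only because the two cards are combined, leaving roughly 5 GB for the KV cache and overhead, while the F16 version needs about 141 GB, nearly three times the available VRAM.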

Beyond Benchmarks: Practical Considerations

The performance benchmarks are important, but they don't tell the full story. Here's the deal:

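- Memory limitations: The two cards offer 48 GB of VRAM in total, and each card holds only 24 GB. Llama 3 70B fits only when quantized to about 4 bits or lower, and anything larger, or the same model at higher precision, will not fit without offloading layers to system RAM, which slows generation sharply. Long context windows also consume extra memory for the KV cache.

- Power consumption: Each RTX 3090 is rated at 350 W of board power, so the pair can draw around 700 W under full load before counting the CPU and the rest of the system. For a machine that runs around the clock, that becomes a significant expense for an AI startup.

- Cooling: Two cards dissipating up to around 700 W of heat need a case with strong airflow and enough spacing between the GPUs, which adds to the cost and complexity of the setup.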
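The power figure translates into a running cost with one multiplication. This minimal sketch assumes the 350 W reference board power per card, power that scales linearly with a hypothetical average utilization, and a hypothetical electricity price; the function name `monthly_gpu_energy_cost` is illustrative, and the CPU, PSU losses, idle draw, and room cooling are left out.

```python
# Rough monthly electricity cost of the two GPUs alone.
# Assumptions: 350 W reference board power per RTX 3090, power scaling linearly
# with a hypothetical average utilization, and a hypothetical electricity price.
# CPU, fans, PSU losses, idle draw and room cooling are not included.

GPU_BOARD_POWER_W = 350  # RTX 3090 reference board power
NUM_GPUS = 2
HOURS_PER_MONTH = 24 * 30


def monthly_gpu_energy_cost(utilization: float, price_per_kwh: float,
                            hours: float = HOURS_PER_MONTH) -> float:
    """Energy cost for running both GPUs at `utilization` (0..1) for `hours`."""
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    average_kw = GPU_BOARD_POWER_W * NUM_GPUS * utilization / 1000
    return average_kw * hours * price_per_kwh


if __name__ == "__main__":
    # Hypothetical example: 50% average load at $0.15 per kWh.
    cost = monthly_gpu_energy_cost(utilization=0.5, price_per_kwh=0.15)
    print(f"~${cost:.0f} per month for the two GPUs")
```

At a hypothetical 50% average load and $0.15 per kWh, this works out to roughly $38 per month for the GPUs alone; plug in your own utilization and local tariff.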

3090 24GB x2 for AI Startups: A Verdict

The 3090 24GB x2 setup offers impressive performance for smaller LLMs like Llama 3 8B, making it a solid option for AI startups looking to run these models locally. It can also run Llama 3 70B quantized to Q4_K_M at a usable 16 tokens per second, but larger models or higher precision quickly exceed its 48 GB of VRAM, so careful consideration of model size and memory limits is crucial.

FAQ

1. What are Quantization and Precision?

Quantization is a technique that reduces the size of an LLM by storing its weights with fewer bits, for example 4 bits instead of 16. This mainly makes running the model (inference) cheaper in memory and often faster. Precision refers to the number of bits used to represent each weight. Higher precision preserves more of the model's original accuracy but requires more memory and, during token generation, more memory bandwidth.
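To make the idea concrete, here is a toy version of 4-bit quantization: each weight in a small block is divided by a shared scale, rounded to an integer between -7 and 7, and multiplied back by the scale when the model runs. This is a simplified symmetric "absmax" scheme chosen for illustration, not the actual Q4_K_M algorithm, which stores additional scale data per block; the weight values are made up.

```python
# Toy 4-bit quantization of one block of weights (symmetric "absmax" scheme).
# Simplified for illustration: NOT the actual Q4_K_M algorithm used by llama.cpp.

def quantize_4bit(weights: list[float]) -> tuple[list[int], float]:
    """Map each weight to an integer in [-7, 7] that shares one scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    # Round to the nearest step and clamp to the 4-bit signed range used here.
    q = [max(-7, min(7, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: list[int], scale: float) -> list[float]:
    """Recover approximate weights by multiplying back by the scale."""
    return [v * scale for v in q]


if __name__ == "__main__":
    block = [0.12, -0.03, 0.07, -0.11, 0.004, 0.09, -0.06, 0.01]  # made-up weights
    q, scale = quantize_4bit(block)
    restored = dequantize(q, scale)
    worst = max(abs(a - b) for a, b in zip(block, restored))
    print("4-bit codes:", q)
    print(f"scale = {scale:.5f}, worst rounding error = {worst:.5f}")
```

Each weight now needs only 4 bits plus its share of the block's scale, and the rounding error is at most half a scale step. That small, bounded error is why 4-bit models usually lose only a little accuracy.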

2. Why are tokens per second important?

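Tokens per second measures how fast a model handles text. The benchmarks above report two rates: token generation (how fast new text is produced) and prompt processing (how fast the input prompt is read). Higher numbers mean faster performance, which is essential for real-time applications like chatbots.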

3. Can I run other LLMs on this setup?

While the performance data here covers only Llama 3 models, you can run other openly available LLMs, such as GPT-Neo, on this setup. GPT-3's weights, by contrast, have not been publicly released, so it can only be used through OpenAI's API. Keep in mind that performance will vary with each model's size and architecture.

4. Is this setup suitable for training LLMs?

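The 3090 24GB x2 setup is suitable for training or fine-tuning smaller models. For 8B-class models, full fine-tuning usually needs far more than 48 GB of memory, so parameter-efficient methods such as LoRA or QLoRA are the common route. Training large LLMs from scratch requires far more compute and time than this setup provides.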
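To put "smaller" into numbers, a common rule of thumb is that full fine-tuning with the Adam optimizer in mixed precision needs about 16 bytes per parameter (FP16/BF16 weights and gradients, an FP32 master copy of the weights, and two FP32 Adam moment buffers) before counting activations. This sketch applies that rule; the 1B and 2B sizes are hypothetical examples, and real usage also depends on batch size, sequence length, and framework.

```python
# Rule-of-thumb memory floor for FULL fine-tuning with Adam in mixed precision:
# ~16 bytes per parameter = 2 (FP16/BF16 weights) + 2 (gradients)
# + 4 (FP32 master weights) + 8 (two FP32 Adam moment buffers).
# Activations, batch size, sequence length and framework overhead come on top.

BYTES_PER_PARAM_FULL_FT = 2 + 2 + 4 + 8
TOTAL_VRAM_GB = 2 * 24  # two RTX 3090 cards


def full_finetune_gb(params: float) -> float:
    """Approximate GB for weights, gradients and optimizer state (1 GB = 1e9 B)."""
    return params * BYTES_PER_PARAM_FULL_FT / 1e9


if __name__ == "__main__":
    # The 1B and 2B entries are hypothetical model sizes for comparison.
    for name, n_params in [("1B model", 1e9), ("2B model", 2e9), ("Llama 3 8B", 8e9)]:
        need = full_finetune_gb(n_params)
        verdict = "fits" if need <= TOTAL_VRAM_GB else "does not fit"
        print(f"{name}: ~{need:.0f} GB -> {verdict} in {TOTAL_VRAM_GB} GB")
```

Under this rule a 1B model needs about 16 GB, a 2B model about 32 GB, and Llama 3 8B about 128 GB. Even when the total fits, the memory is split into two 24 GB cards, so the training state has to be sharded across them, for example with PyTorch FSDP or DeepSpeed ZeRO.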
