Is the NVIDIA 3090 24GB Powerful Enough for Llama 3 8B?

Introduction:

The world of Large Language Models (LLMs) is booming, with models like Llama 3 8B pushing the boundaries of what's possible. But running these sophisticated models locally can be a challenge, requiring powerful hardware to handle the massive computational load. Today, we're diving deep into the capabilities of the NVIDIA 3090_24GB graphics card, a popular choice for machine learning enthusiasts.

We'll explore whether this beast of a card can handle the demands of Llama 3 8B, examining its performance in detail and providing insights into practical use cases and considerations. So, buckle up, fellow geeks, and let's embark on this exciting journey!

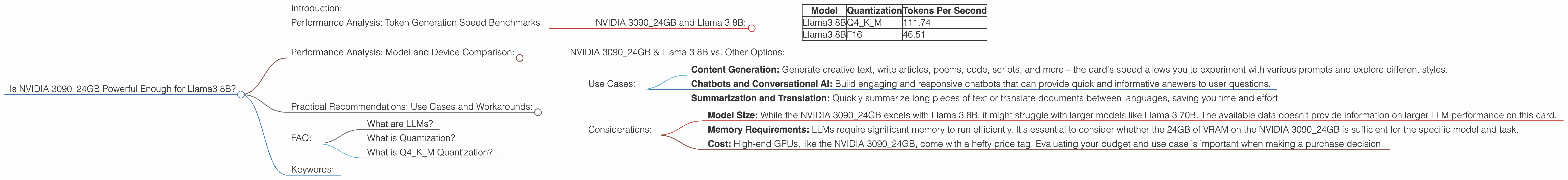

Performance Analysis: Token Generation Speed Benchmarks

NVIDIA 3090_24GB and Llama 3 8B:

Let's get to the heart of the matter – how does the NVIDIA 3090_24GB perform with Llama 3 8B?

| Model | Quantization | Tokens Per Second |
|---|---|---|
| Llama3 8B | Q4_K_M | 111.74 |
| Llama3 8B | F16 | 46.51 |

Important Note: The data does not include performance benchmarks for Llama 3 70B on the NVIDIA 3090_24GB.

As you can see, the NVIDIA 3090_24GB generates tokens quickly with Llama 3 8B. With Q4_K_M quantization, a technique that compresses the model without sacrificing much accuracy, the card produces 111.74 tokens per second. With F16, a less compressed format, the speed drops to 46.51 tokens per second, so the quantized model is roughly 2.4 times faster.

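Think of it this way: a typical human types about 60 words per minute, which translates to roughly 1 word per second. The NVIDIA 3090_24GB with Llama 3 8B using Q4_K_M quantization generates over 100 tokens per second. A token is usually a little shorter than a word, so using the common rule of thumb of about 0.75 English words per token, that works out to roughly 80 words per second, or about 80 times faster than a human typing!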
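The arithmetic behind these comparisons can be sketched in a few lines of Python. This is a minimal illustration using only the two benchmark figures from the table; the helper names and the 0.75 words-per-token ratio are assumptions made for the example, not measured values.

```python
# Tokens per second from the benchmark table above.
BENCHMARKS = {"Q4_K_M": 111.74, "F16": 46.51}

# Assumption: a rough rule of thumb for English text, not a measured value.
WORDS_PER_TOKEN = 0.75


def seconds_to_generate(num_tokens, tokens_per_second):
    """Time needed to generate num_tokens at a steady generation rate."""
    return num_tokens / tokens_per_second


def words_per_second(tokens_per_second, words_per_token=WORDS_PER_TOKEN):
    """Convert a token rate into an approximate word rate."""
    return tokens_per_second * words_per_token


# How much faster the quantized model is than the F16 one.
speedup = BENCHMARKS["Q4_K_M"] / BENCHMARKS["F16"]

if __name__ == "__main__":
    print(f"Q4_K_M vs F16 speedup: {speedup:.2f}x")
    for name, tps in BENCHMARKS.items():
        print(
            f"{name}: 500 tokens in {seconds_to_generate(500, tps):.1f} s, "
            f"about {words_per_second(tps):.0f} words per second"
        )
```

These numbers only cover token generation. Prompt processing time and model load time are not part of the figures above.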

Performance Analysis: Model and Device Comparison:

NVIDIA 3090_24GB & Llama 3 8B vs. Other Options:

While the NVIDIA 3090_24GB handles Llama 3 8B with remarkable efficiency, it's always good to consider other options and see how they stack up. Unfortunately, we don't have performance data for other devices running Llama 3 8B, so we can't provide a direct comparison.

However, we can look at other LLMs and their performance on different devices to gain some insight. For example, the Llama 2 7B model on the Apple M1 chip shows performance similar to the NVIDIA 3090_24GB with Llama 3 8B, suggesting that other hardware configurations can be competitive.

Keep in mind that these are just general observations based on available data. Direct comparisons can be tricky as model sizes, quantization techniques, and device architectures can significantly impact performance.

Practical Recommendations: Use Cases and Workarounds:

Use Cases:

The incredible speed of the NVIDIA 3090_24GB with Llama 3 8B makes it a powerhouse for a range of applications:

- Content Generation: Generate creative text, write articles, poems, code, scripts, and more – the card's speed allows you to experiment with various prompts and explore different styles.

- Chatbots and Conversational AI: Build engaging and responsive chatbots that can provide quick and informative answers to user questions.

- Summarization and Translation: Quickly summarize long pieces of text or translate documents between languages, saving you time and effort.

Considerations:

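- Model Size: While the NVIDIA 3090_24GB excels with Llama 3 8B, it might struggle with larger models like Llama 3 70B. At F16, weights take 2 bytes per parameter, so a 70B model needs about 140GB for its weights alone, far more than this card offers. The available data doesn't include benchmarks for larger models on this card.

- Memory Requirements: LLMs require significant memory to run efficiently. An 8B model at F16 needs around 16GB for its weights, which leaves limited room in 24GB of VRAM for the context cache and runtime overhead. It's essential to check whether 24GB is sufficient for the specific model, quantization, and task.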

- Cost: High-end GPUs, like the NVIDIA 3090_24GB, come with a hefty price tag. Evaluating your budget and use case is important when making a purchase decision.
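The model size and memory considerations above come down to simple arithmetic: weight memory is roughly the parameter count times the bits per weight. Here is a rough sketch under stated assumptions. The function names are hypothetical, the parameter counts are the nominal 8B and 70B figures, the 4-bit case ignores the small per-block overhead that real quantized formats add, and the 2GB headroom is an arbitrary placeholder for the context cache and runtime overhead.

```python
VRAM_GB = 24  # VRAM on the NVIDIA 3090_24GB


def weight_memory_gb(num_params, bits_per_weight):
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


def fits_in_vram(weights_gb, vram_gb=VRAM_GB, headroom_gb=2.0):
    """Rough check. headroom_gb is an assumed allowance, not a measured one."""
    return weights_gb + headroom_gb <= vram_gb


if __name__ == "__main__":
    models = [("Llama 3 8B", 8e9), ("Llama 3 70B", 70e9)]  # nominal sizes
    formats = [("F16", 16), ("4-bit (nominal)", 4)]
    for model_name, params in models:
        for fmt_name, bits in formats:
            gb = weight_memory_gb(params, bits)
            verdict = "fits" if fits_in_vram(gb) else "does not fit"
            print(f"{model_name} at {fmt_name}: ~{gb:.0f} GB of weights, {verdict}")
```

Treat the result as a first filter only. Actual usage depends on context length, batch size, and the inference software.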

FAQ:

What are LLMs?

LLMs are large language models, a type of artificial intelligence that can understand and generate human-like text. They are trained on massive datasets of text and code, allowing them to perform a wide range of language-based tasks.

What is Quantization?

Quantization is a technique used to reduce the memory footprint and computational requirements of LLMs. It involves reducing the precision of the model's weights, similar to compressing an image to reduce file size.
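To make the idea concrete, here is a deliberately simplified sketch of quantizing one small block of weights to 4-bit integers with a single shared scale factor. It is an illustration of reduced precision in general, not the algorithm any particular format uses, and the function names and sample weights are made up for the example.

```python
def quantize_block(weights, bits=4):
    """Symmetric quantization: map floats to small signed integers plus one scale."""
    qmax = 2 ** (bits - 1) - 1  # largest integer level, 7 for 4 bits
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round each weight to the nearest level and clamp to the allowed range.
    quants = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate float weights from the integers and the scale."""
    return [q * scale for q in quants]


# Hypothetical weights, chosen only for illustration.
block = [0.12, -0.53, 0.9, -0.07, 0.31, -0.88, 0.02, 0.44]
scale, quants = quantize_block(block)
restored = dequantize_block(scale, quants)
max_error = max(abs(a - b) for a, b in zip(block, restored))

if __name__ == "__main__":
    print("integers:", quants)
    print("restored:", [round(w, 3) for w in restored])
    print("largest rounding error:", round(max_error, 4))
```

Each weight now needs 4 bits instead of 16, at the cost of a rounding error of at most half the scale per weight. That is the trade-off between size and accuracy described above.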

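What is Q4_K_M Quantization?

Q4_K_M is one of the quantization formats used by llama.cpp. The "Q4" means weights are stored at roughly 4 bits each, "K" refers to the k-quant scheme, which quantizes weights in small blocks that each carry their own scale factors, and "M" marks the medium variant, which keeps some of the more sensitive tensors at higher precision. It offers a good balance between model compression and accuracy.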

Keywords:

NVIDIA 3090_24GB, Llama 3 8B, LLM, Large Language Model, Token Generation Speed, GPU, Graphics Card, Quantization, Q4_K_M, F16, Performance, Use Cases, Content Generation, Chatbots, Summarization, Translation, Memory Requirements, Cost, Deep Dive, Local LLMs, Performance Benchmarks, Hardware, Device, Software, Artificial Intelligence, AI, Machine Learning, ML, Computer Science, Technology, Geek, Developer, Data Science, Natural Language Processing, NLP, Text Generation, Computational Power, Efficiency, Optimization.