Is NVIDIA 3090 24GB Powerful Enough for Llama3 70B?

Introduction

The world of large language models (LLMs) has exploded in recent years, with models like Llama 2 and Llama 3 pushing the boundaries of what's possible with artificial intelligence. But as models get bigger, the hardware needed to run them effectively becomes a major hurdle. Today, we're diving deep into the world of local LLM performance, specifically exploring if the NVIDIA GeForce RTX 3090 24GB is up to the task of running Llama 3 70B.

For those unfamiliar with LLMs, picture them as incredibly sophisticated text generators. They can write stories, translate languages, summarize documents, and even engage in conversations, all through the power of artificial intelligence. But these abilities come at a price – these models require a ton of computational power.

Performance Analysis: Token Generation Speed Benchmarks

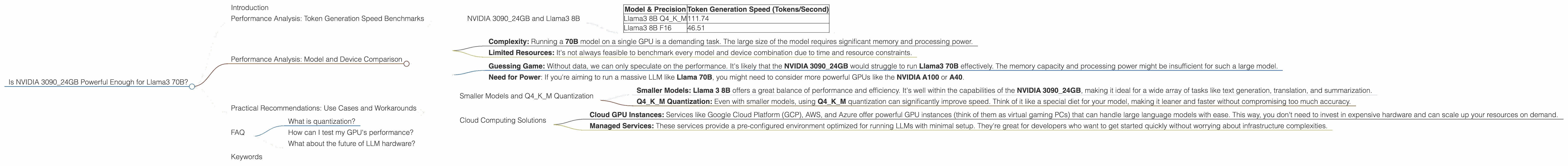

NVIDIA 3090_24GB and Llama3 8B

Let's start by looking at the token generation rate of Llama3 8B on the NVIDIA 3090_24GB at two precision settings. These numbers measure how quickly the model produces output tokens, which directly determines how responsive it feels in use.

| Model & Precision | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 8B Q4_K_M | 111.74 |
| Llama3 8B F16 | 46.51 |

Q4_K_M is a 4-bit quantization format from llama.cpp (the "K" refers to its k-quant scheme, the "M" to the medium variant). It trades some accuracy for much lower memory usage and, as the table shows, considerably faster generation. In contrast, F16 (half-precision floating point) keeps the weights at 16 bits, which preserves accuracy but is slower on this card.

Key Takeaways:

- The NVIDIA 3090_24GB shows a large performance gap between the Q4_K_M and F16 precision settings. Q4_K_M generates 111.74 tokens per second, far faster than F16.

- The F16 configuration, while offering higher accuracy, is significantly slower. The GPU delivers 46.51 tokens per second, about 2.4 times slower than Q4_K_M.

This data shows that the NVIDIA 3090_24GB comfortably handles Llama3 8B at both precision settings, making it a strong choice for developers who prioritize speed and efficiency.
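To make the gap concrete, here is a small sketch that derives the speedup from the two measured figures and converts them into waiting time. The 500-token reply length is an assumption chosen for illustration, not part of the benchmark.

```python
# Token generation speeds from the table above (tokens/second).
speeds = {"Q4_K_M": 111.74, "F16": 46.51}


def speedup(fast: float, slow: float) -> float:
    """How many times faster the first setting is than the second."""
    return fast / slow


def seconds_for(tokens: int, tokens_per_second: float) -> float:
    """Time needed to generate a given number of tokens."""
    return tokens / tokens_per_second


ratio = speedup(speeds["Q4_K_M"], speeds["F16"])
print(f"Q4_K_M is {ratio:.2f}x faster than F16")

# Assumed reply length of 500 tokens, purely for illustration.
for name, tps in speeds.items():
    print(f"{name}: {seconds_for(500, tps):.1f} s for a 500-token reply")
```

In practice the difference is between waiting a few seconds and waiting roughly ten seconds for a long answer.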

But what about Llama3 70B?

Performance Analysis: Model and Device Comparison

Unfortunately, we lack benchmark data for Llama3 70B running on the NVIDIA 3090_24GB, so we can't directly compare the 8B and 70B models on this card.

Why is this information missing?

- Complexity: Running a 70B model on a single GPU is a demanding task. At F16, 70 billion parameters need about 140 GB for the weights alone, far more than the 24 GB this card offers.

- Limited Resources: It's not always feasible to benchmark every model and device combination due to time and resource constraints.

What does this mean for us?

- Guessing Game: Without data, we can only speculate on the performance. It's likely that the NVIDIA 3090_24GB would struggle to run Llama3 70B effectively. Memory is the main constraint: even at 4 bits per weight, a 70B model needs more than 24 GB, so part of it would have to live outside the GPU, which slows generation.

- Need for Power: If you're aiming to run a massive LLM like Llama3 70B, you might need to consider more powerful GPUs with more memory, such as the NVIDIA A100 or A40.
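The memory argument can be checked with back-of-the-envelope arithmetic. The sketch below estimates the size of the weights only; it assumes round parameter counts (8e9 and 70e9) and ignores the KV cache and runtime overhead, so real requirements are higher. The 4-bit figure is a lower bound, since real 4-bit formats such as Q4_K_M store extra scaling data.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


VRAM_GB = 24  # memory on the NVIDIA 3090_24GB

# Assumed round parameter counts; actual models differ slightly.
models = [("Llama3 8B", 8e9), ("Llama3 70B", 70e9)]
# 16 bits for F16; 4 bits is a lower bound for any 4-bit format.
precisions = [("F16", 16), ("4-bit (lower bound)", 4)]

for model, params in models:
    for label, bits in precisions:
        size = weight_memory_gb(params, bits)
        verdict = "fits" if size < VRAM_GB else "does not fit"
        print(f"{model} {label}: {size:.0f} GB, {verdict} in {VRAM_GB} GB")
```

Under these assumptions both 8B variants fit, while the 70B model exceeds 24 GB at either precision.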

Practical Recommendations: Use Cases and Workarounds

Smaller Models and Q4_K_M Quantization

Let's be realistic - running Llama3 70B locally might not be the best use of your resources, especially if you're working with a single NVIDIA 3090_24GB. However, all is not lost!

- Smaller Models: Llama 3 8B offers a great balance of performance and efficiency. It's well within the capabilities of the NVIDIA 3090_24GB, making it ideal for a wide array of tasks like text generation, translation, and summarization.

- Q4_K_M Quantization: Even with smaller models, using Q4_K_M quantization can significantly improve speed. Think of it like a special diet for your model, making it leaner and faster without compromising too much accuracy.

Cloud Computing Solutions

If you're determined to explore the power of Llama3 70B, consider cloud-based solutions.

- Cloud GPU Instances: Services like Google Cloud Platform (GCP), AWS, and Azure offer powerful GPU instances (think of them as virtual gaming PCs) that can handle large language models with ease. This way, you don't need to invest in expensive hardware and can scale up your resources on demand.

- Managed Services: These services provide a pre-configured environment optimized for running LLMs with minimal setup. They're great for developers who want to get started quickly without worrying about infrastructure complexities.

FAQ

What is quantization?

Think of quantization as a clever way to compress your model, similar to turning a high-resolution photo into a smaller JPEG file. We trade a bit of detail for speed and memory efficiency.
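As a rough illustration of the idea, the toy sketch below rounds a handful of weights to 4-bit integer codes with one shared scale and then restores them. This is a simplified scheme invented for the example, not the actual Q4_K_M format, and the weight values are made up.

```python
def quantize_4bit(values):
    """Map floats to integer codes in [-7, 7] plus one shared scale (toy scheme)."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 7 if max_abs else 1.0
    codes = [round(v / scale) for v in values]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


# Made-up weights, purely for illustration.
weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.45, 0.02]
codes, scale = quantize_4bit(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:", codes)
print(f"largest rounding error: {max_error:.3f}")
```

Each weight now needs only a small integer instead of a 16-bit float, at the cost of a bounded rounding error. That is the "bit of detail" being traded away.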

How can I test my GPU's performance?

You can measure the token generation speed and other performance metrics for your specific combination of LLM and GPU using tools like llama.cpp or GPU-Benchmarks-on-LLM-Inference.
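If you want a quick number of your own, the core measurement is just tokens divided by elapsed time. The sketch below shows that idea with a hypothetical `fake_generate` function standing in for a real model call; it is not part of llama.cpp or any other library. You would replace it with whatever generation function your setup exposes.

```python
import time
from typing import Callable, List


def measure_tokens_per_second(
    generate: Callable[[str, int], List[str]], prompt: str, max_tokens: int
) -> float:
    """Time one generation call and return tokens produced per second."""
    start = time.perf_counter()
    tokens = generate(prompt, max_tokens)
    elapsed = time.perf_counter() - start
    return len(tokens) / elapsed


def fake_generate(prompt: str, max_tokens: int) -> List[str]:
    """Hypothetical stand-in: sleeps per token instead of running a model."""
    out = []
    for i in range(max_tokens):
        time.sleep(0.001)  # pretend each token takes about 1 ms
        out.append(f"tok{i}")
    return out


tps = measure_tokens_per_second(fake_generate, "Hello", 50)
print(f"{tps:.1f} tokens/second")
```

Note that this times the whole call, including prompt processing, so run it several times with the same prompt and token count before comparing results.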

What about the future of LLM hardware?

The demand for powerful hardware to run large language models is growing rapidly. We can expect to see even more advanced GPUs and specialized chips designed specifically for these workloads in the near future.

Keywords

NVIDIA 3090_24GB, Llama3 70B, Llama3 8B, Token Generation Speed, Performance Analysis, GPU, Quantization, Q4_K_M, F16, Cloud Computing, LLM, Local Models, AI, Machine Learning, Deep Learning, Natural Language Processing, NLP, Text Generation, Translation, Summarization