Is NVIDIA 3090 24GB a Good Investment for AI Startups?

Introduction

In the fast-paced world of AI, startups are constantly looking for technology that gives them an edge. Large Language Models (LLMs) have taken the world by storm, and running them efficiently is paramount. One of the key decisions is the choice of hardware, particularly the GPU (Graphics Processing Unit) used for inference. Let's dive into the world of LLMs and examine whether the NVIDIA 3090 24GB GPU is the right choice for your startup.

Think of LLMs as intelligent assistants that can understand and generate human-like text. They power everything from chatbots to personalized content creation, and their capabilities are on the rise.

But let's be real, running these models on your laptop is like trying to fit a full-grown elephant in a shoebox. You need a powerful machine, and the 3090 24GB is a heavyweight contender in the GPU arena.

This article focuses on the NVIDIA 3090 24GB GPU and its performance with the Llama 3 family of LLMs. We'll dive into the numbers, comparing token generation speed and prompt processing speed, and help you decide if this behemoth of a GPU is the right fit for your AI startup.

NVIDIA 3090 24GB: Performance Analysis

To understand the true value of a GPU for LLMs, we need to look beyond raw specs and delve into real-world performance. The NVIDIA 3090 24GB is a powerful GPU, with 10,496 CUDA cores and 24GB of GDDR6X memory delivering about 936 GB/s of bandwidth. But how does that translate into actual performance with different LLMs?

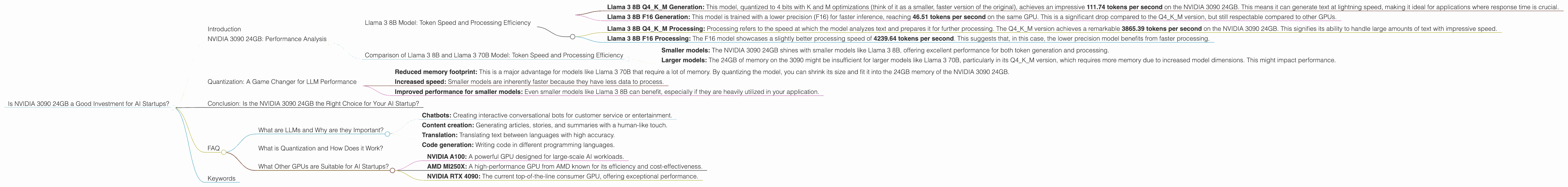

Llama 3 8B Model: Token Speed and Processing Efficiency

For our first test case, we'll analyze the Llama 3 8B model, a popular openly available LLM from Meta that is small enough to run comfortably on a single consumer GPU.

Token Speed:

- Llama 3 8B Q4KM Generation: This version uses llama.cpp's Q4_K_M quantization, which stores most weights in about 4 bits ("K" refers to the k-quant family, and "M" to its medium variant, which keeps some tensors at higher precision). Think of it as a smaller, faster version of the original. It generates 111.74 tokens per second on the NVIDIA 3090 24GB, or about 9 ms per token, making it well suited to applications where response time is crucial.

- Llama 3 8B F16 Generation: F16 is the unquantized model stored in 16-bit floating point, so it keeps essentially the original precision but needs roughly three times as much memory as Q4KM. It reaches 46.51 tokens per second on the same GPU, less than half the Q4KM speed, though still comfortably interactive for a single user.
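To put these generation rates in practical terms, the short Python sketch below converts tokens per second into per-token latency and the time needed to produce a reply. The 500-token reply length is a hypothetical example, and the calculation assumes a steady generation rate, ignoring prompt processing and any slowdown as the context grows.

```python
# Measured generation throughput on the NVIDIA 3090 24GB (figures from the benchmark above).
GENERATION_TOK_PER_S = {
    "Llama 3 8B Q4KM": 111.74,
    "Llama 3 8B F16": 46.51,
}


def ms_per_token(tok_per_s: float) -> float:
    """Average time to produce one output token, in milliseconds."""
    return 1000.0 / tok_per_s


def generation_seconds(tok_per_s: float, output_tokens: int) -> float:
    """Time to generate `output_tokens` at a steady rate (prompt processing ignored)."""
    return output_tokens / tok_per_s


if __name__ == "__main__":
    reply_len = 500  # hypothetical reply length in tokens
    for name, rate in GENERATION_TOK_PER_S.items():
        print(f"{name}: {ms_per_token(rate):.1f} ms/token, "
              f"{generation_seconds(rate, reply_len):.1f} s for {reply_len} tokens")
```

With the measured rates, that works out to about 9 ms per token and 4.5 s for a 500-token reply with Q4KM, versus about 21.5 ms per token and 10.8 s with F16.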

Processing Efficiency:

- Llama 3 8B Q4KM Processing: Processing here means prompt processing (also called prefill): the speed at which the model reads the input text before it starts generating a reply. The Q4KM version processes 3865.39 tokens per second on the NVIDIA 3090 24GB, so even long prompts are ingested quickly.

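- Llama 3 8B F16 Processing: The F16 model is slightly faster here, at 4239.64 tokens per second. A likely explanation is that prompt processing handles many tokens in parallel and is limited mainly by compute rather than memory bandwidth, so the F16 model's larger weights cost little, and it skips the extra work of unpacking 4-bit weights.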
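Because a request involves both phases, a rough end-to-end latency is the prompt length divided by the processing rate plus the reply length divided by the generation rate. The sketch below applies that to the measured Llama 3 8B figures; the 4,000-token prompt and 300-token reply are hypothetical, and real rates vary with context length, batching, and the inference software used.

```python
# Measured Llama 3 8B throughput on the NVIDIA 3090 24GB (figures from the benchmark above).
PREFILL_TOK_PER_S = {"Q4KM": 3865.39, "F16": 4239.64}     # prompt processing
GENERATION_TOK_PER_S = {"Q4KM": 111.74, "F16": 46.51}     # token generation


def request_latency_s(variant: str, prompt_tokens: int, output_tokens: int) -> float:
    """Rough end-to-end latency: read the whole prompt, then generate the reply.

    Assumes both phases run at their average benchmarked rates.
    """
    prefill = prompt_tokens / PREFILL_TOK_PER_S[variant]
    generate = output_tokens / GENERATION_TOK_PER_S[variant]
    return prefill + generate


if __name__ == "__main__":
    for variant in ("Q4KM", "F16"):
        total = request_latency_s(variant, prompt_tokens=4000, output_tokens=300)
        print(f"{variant}: ~{total:.2f} s for a 4000-token prompt and a 300-token reply")
```

For that example, the estimate comes to roughly 3.7 s with Q4KM and 7.4 s with F16: the F16 model's small prompt-processing lead is outweighed by its much slower generation.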

Summary:

The NVIDIA 3090 24GB demonstrates strong performance with the Llama 3 8B model, delivering fast token generation (especially with Q4KM) and fast prompt processing in both formats.

Comparison of Llama 3 8B and Llama 3 70B Models: Token Speed and Processing Efficiency

But what about larger models? We need to consider the scalability of the NVIDIA 3090 24GB.

Unfortunately, no benchmark data for the Llama 3 70B model on the NVIDIA 3090 24GB is available. This is not just a gap in published benchmarks: the 70B model does not fit in 24GB of memory, even in its Q4KM version, so it cannot run entirely on a single 3090.

However, we can draw some inferences based on the available data for the Llama 3 8B model.

- Smaller models: The NVIDIA 3090 24GB shines with smaller models like Llama 3 8B, offering excellent performance for both token generation and processing.

- Larger models: The 24GB of memory on the 3090 is insufficient for Llama 3 70B. At F16 the weights alone take roughly 140GB, and even the Q4KM version needs about 40GB. Running it would require splitting the model across several GPUs or offloading part of it to system RAM, which slows generation considerably.

This is where quantization comes into play (think of it as a diet for your model). By reducing the precision of the model's weights (the numbers that define the model), you can make it smaller and faster, so it needs less memory. Quantization is what makes Llama 3 8B so fast on the NVIDIA 3090 24GB, but for Llama 3 70B it only goes so far: getting the weights under 24GB typically requires formats of around 2 to 3 bits per weight, which trade away noticeable output quality.
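A quick way to check whether a model fits is to multiply its parameter count by the bits stored per weight. The Python sketch below does that for the weights only. The parameter counts (about 8.03B and 70.6B), the average of roughly 4.8 bits per weight for Q4KM, the hypothetical 2.5-bit format, and the 2 GiB of headroom for the KV cache and runtime buffers are all approximations chosen for illustration, and `fits_in_vram` is a hypothetical helper.

```python
# Rough check of whether model weights fit in the 3090's 24GB of VRAM.
# Parameter counts, bits per weight, and headroom are approximations for illustration.
GIB = 1024 ** 3
VRAM_GIB = 24.0       # NVIDIA 3090: 24 GiB of GDDR6X
HEADROOM_GIB = 2.0    # assumed space for KV cache, activations, and runtime buffers

PARAMS_BILLION = {"Llama 3 8B": 8.03, "Llama 3 70B": 70.6}       # approximate
BITS_PER_WEIGHT = {"F16": 16.0, "Q4KM": 4.8, "~2.5-bit": 2.5}    # approximate averages


def weight_memory_gib(params_billion: float, bits_per_weight: float) -> float:
    """Memory for the weights alone: parameters x bits per weight / 8 bits per byte."""
    return params_billion * 1e9 * bits_per_weight / 8 / GIB


def fits_in_vram(params_billion: float, bits_per_weight: float,
                 vram_gib: float = VRAM_GIB, headroom_gib: float = HEADROOM_GIB) -> bool:
    """True if the weights plus the assumed headroom fit on a single card."""
    return weight_memory_gib(params_billion, bits_per_weight) + headroom_gib <= vram_gib


if __name__ == "__main__":
    for model, params in PARAMS_BILLION.items():
        for fmt, bits in BITS_PER_WEIGHT.items():
            size = weight_memory_gib(params, bits)
            verdict = "fits" if fits_in_vram(params, bits) else "does not fit"
            print(f"{model} {fmt}: {size:.1f} GiB -> {verdict}")
```

Under these assumptions, Llama 3 8B needs about 15 GiB at F16 and about 4.5 GiB at Q4KM, while Llama 3 70B needs about 39 GiB even at Q4KM; only the roughly 2.5-bit variant (about 20.5 GiB) squeezes under 24GB, with little room left for long contexts.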

Quantization: A Game Changer for LLM Performance

Quantization is a powerful tool that can dramatically improve the performance of LLMs, especially on GPUs with limited memory. It works by converting the network's weights from high-precision numbers (such as 16-bit floats) to lower-precision ones (such as 4-bit integers that share a scale factor).

Imagine you're building a house: using high precision is like using bricks with intricate details. Quantization is like using simpler, more general-purpose blocks. You might lose some of the nuance, but the house is still built, and it's faster and more efficient to construct.
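To make the idea concrete, here is a minimal Python sketch of block-wise 4-bit quantization: each block of 32 weights shares one scale factor, and each weight is rounded to an integer between -7 and 7. This is a simplified absmax scheme chosen for illustration, closer in spirit to llama.cpp's basic Q4_0 format than to the more elaborate k-quant scheme behind Q4KM, and the function names are hypothetical.

```python
def quantize_block(block: list[float], bits: int = 4) -> tuple[float, list[int]]:
    """Symmetric absmax quantization: map one block of floats to small signed ints."""
    qmax = 2 ** (bits - 1) - 1            # 7 for 4 bits, so values lie in [-7, 7]
    amax = max(abs(w) for w in block)     # largest magnitude in the block
    scale = amax / qmax if amax > 0 else 1.0
    q = [max(-qmax, min(qmax, round(w / scale))) for w in block]
    return scale, q


def dequantize_block(scale: float, q: list[int]) -> list[float]:
    """Recover approximate weights by multiplying the integers back by the scale."""
    return [scale * v for v in q]


def quantize(weights: list[float], block_size: int = 32,
             bits: int = 4) -> list[tuple[float, list[int]]]:
    """Split the weights into blocks and quantize each block with its own scale."""
    return [quantize_block(weights[i:i + block_size], bits)
            for i in range(0, len(weights), block_size)]


if __name__ == "__main__":
    weights = [0.12, -0.5, 0.33, 0.07]
    scale, q = quantize_block(weights)
    print(q, dequantize_block(scale, q))
```

For the example weights, the integers come out as [2, -7, 5, 1]. Storing one 16-bit scale per 32 four-bit values costs (16 + 32 * 4) / 32 = 4.5 bits per weight instead of 16, and each weight's rounding error is at most half of its block's scale.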

Here's why quantization is a game changer for LLM performance:

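- Reduced memory footprint: A 4-bit model takes roughly a quarter to a third of the memory of its F16 counterpart. That is what lets larger models run on a single 24GB card, although Llama 3 70B still needs about 40GB at Q4KM and would have to be quantized far more aggressively to fit into the memory of the NVIDIA 3090 24GB.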

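- Increased speed: When a model generates text one token at a time, it has to read essentially all of its weights from GPU memory for every token, so smaller weights mean less data to move and faster generation.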

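- Improved performance for smaller models: Even models that already fit, like Llama 3 8B, benefit. On the 3090, Q4KM generates 111.74 tokens per second versus 46.51 for F16, about 2.4 times faster, which matters most when the model is heavily utilized in your application.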
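The speed benefit can be sanity-checked with a common rule of thumb: when generating one token at a time, each token requires streaming roughly all of the weights from VRAM, so memory bandwidth divided by model size gives an upper bound on tokens per second. The sketch below assumes the 3090's published peak bandwidth of about 936 GB/s and approximate Llama 3 8B weight sizes of 16.1GB (F16) and 4.9GB (Q4KM); these inputs are not from the benchmark itself.

```python
BANDWIDTH_GB_S = 936.0                             # NVIDIA 3090 peak memory bandwidth
WEIGHT_GB = {"F16": 16.1, "Q4KM": 4.9}             # approximate Llama 3 8B weight sizes (assumption)
MEASURED_TOK_S = {"F16": 46.51, "Q4KM": 111.74}    # generation speeds from the benchmark above


def decode_ceiling_tok_s(weight_gb: float, bandwidth_gb_s: float = BANDWIDTH_GB_S) -> float:
    """Bandwidth-bound ceiling: each generated token reads every weight once."""
    return bandwidth_gb_s / weight_gb


if __name__ == "__main__":
    for fmt, size in WEIGHT_GB.items():
        ceiling = decode_ceiling_tok_s(size)
        share = MEASURED_TOK_S[fmt] / ceiling
        print(f"{fmt}: ceiling ~{ceiling:.0f} tok/s, "
              f"measured {MEASURED_TOK_S[fmt]} ({share:.0%} of ceiling)")
```

This gives ceilings of roughly 58 tokens per second for F16 and 191 for Q4KM. The measured 46.51 and 111.74 sit below those bounds; Q4KM lands further from its ceiling, plausibly because unpacking 4-bit weights and other fixed per-token costs take a larger share of the time when there is less data to read.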

Therefore, the missing Llama 3 70B data is itself a useful signal. Quantization techniques like Q4KM make 8B-class models fast and compact on the NVIDIA 3090 24GB, but a 70B model still needs more memory than a single card provides unless it is quantized much more aggressively or spread across multiple GPUs.

Conclusion: Is the NVIDIA 3090 24GB the Right Choice for Your AI Startup?

The NVIDIA 3090 24GB is a powerful GPU, and it demonstrates impressive performance with the Llama 3 8B model. Its high token speed and processing efficiency make it well-suited for applications demanding quick responses and handling large amounts of text.

However, the missing Llama 3 70B results show that the NVIDIA 3090 24GB is not the ideal choice for every AI startup. Its 24GB of memory cannot hold a 70B model at F16 or even at Q4KM, so workloads built on larger models will need very aggressive quantization, multiple GPUs, or a card with more memory.

For startups working with smaller models or those willing to explore quantization, the NVIDIA 3090 24GB can be a valuable asset. Its strong performance on 8B-class models, combined with a price well below that of data-center GPUs, makes it a tempting solution for many.

But ultimately, the best choice for your startup depends on your specific needs and resources. Evaluate your project's requirements, consider the potential for quantization, and factor in the cost of hardware and software before making your decision.

FAQ

What are LLMs and Why are they Important?

LLMs, or Large Language Models, are sophisticated AI systems that can understand and generate human-like text. They can be used for tasks like:

- Chatbots: Creating interactive conversational bots for customer service or entertainment.

- Content creation: Generating articles, stories, and summaries with a human-like touch.

- Translation: Translating text between languages with high accuracy.

- Code generation: Writing code in different programming languages.

LLMs are transforming the way we interact with technology, opening up new possibilities in many areas of our lives.

What is Quantization and How Does it Work?

Quantization is a technique that reduces the precision of the numbers used to represent the network weights in a model. Imagine you have a ruler with a lot of tiny markings. Quantization is like using a ruler with fewer markings.

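Fewer markings means each weight needs fewer bits to store, so the model becomes smaller and faster. The trade-off is a small rounding error on every weight, which can slightly reduce output quality, especially at very low bit widths.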
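The ruler analogy can be tried out directly. The Python sketch below snaps values in the range -1 to 1 to the nearest of 2**bits evenly spaced "markings" and reports the worst rounding error; it is a simplified uniform quantizer for illustration, not the scheme any particular library uses, and both function names are hypothetical.

```python
def snap_to_ruler(x: float, bits: int, lo: float = -1.0, hi: float = 1.0) -> float:
    """Round x to the nearest of 2**bits evenly spaced markings between lo and hi."""
    step = (hi - lo) / (2 ** bits - 1)     # distance between neighbouring markings
    x = min(max(x, lo), hi)                # clamp into the ruler's range
    return lo + round((x - lo) / step) * step


def worst_error(bits: int, samples: int = 10_001) -> float:
    """Largest rounding error over evenly spaced test points in [-1, 1]."""
    points = [-1.0 + 2.0 * i / (samples - 1) for i in range(samples)]
    return max(abs(p - snap_to_ruler(p, bits)) for p in points)


if __name__ == "__main__":
    for bits in (8, 4, 2):
        print(f"{bits} bits: {2 ** bits} markings, worst error ~{worst_error(bits):.4f}")
```

Going from 8 bits to 4 to 2 cuts the ruler from 256 markings to 16 to 4, and the worst error grows to roughly half the spacing between markings each time (about 0.004, 0.067, and 0.33).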

What Other GPUs are Suitable for AI Startups?

The GPU market is constantly evolving, with new models released regularly. Other high-performance GPUs worth exploring include:

- NVIDIA A100: A data-center GPU with 40GB or 80GB of memory, designed for large-scale AI workloads.

- AMD MI250X: A data-center accelerator from AMD's Instinct line with 128GB of HBM2e memory, offering an alternative to NVIDIA's data-center GPUs.

- NVIDIA RTX 4090: A newer consumer GPU with the same 24GB of memory as the 3090 but considerably more compute performance.

The best choice depends on your budget, performance requirements, and the specific models you intend to run.

Keywords

NVIDIA 3090 24GB, GPU, AI startups, LLM, Llama 3, token speed, processing efficiency, quantization, Q4KM, F16, model inference, performance comparison, memory usage, cost-effectiveness, AMD MI250X, NVIDIA A100, NVIDIA RTX 4090, LLM benchmark, large language model.