Is NVIDIA 3080 Ti 12GB Powerful Enough for Llama3 8B?

Introduction

In the world of artificial intelligence, Large Language Models (LLMs) have taken the spotlight, captivating developers and enthusiasts with their impressive capabilities. These models, trained on massive datasets, can generate human-like text, translate languages, draft creative content, and answer questions informatively. Running them, however, demands serious computational muscle, above all enough GPU memory to hold the model's weights.

The question arises: can a popular gaming graphics card like the NVIDIA 3080 Ti 12GB handle the demands of a powerful LLM like Llama3 8B? We'll dive deep into the performance of this GPU, analyze benchmarks, and provide insights to help you make informed decisions about your LLM setup.

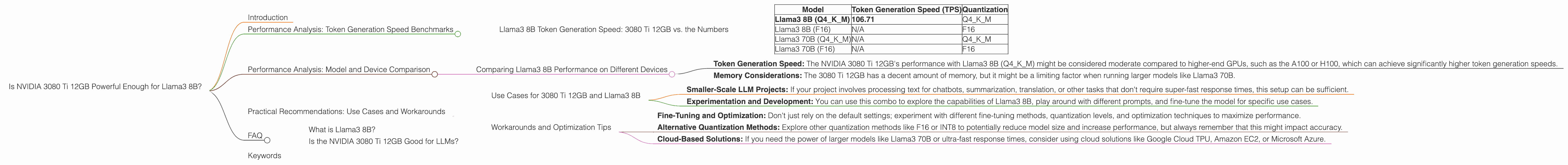

Performance Analysis: Token Generation Speed Benchmarks

Llama3 8B Token Generation Speed: 3080 Ti 12GB vs. the Numbers

Let's get down to business. The benchmark we're focused on is token generation speed in tokens per second (TPS): how many new tokens the GPU produces each second while generating a response. Higher is better.

Here's the breakdown for the NVIDIA 3080 Ti 12GB:

| Model | Quantization / precision | Token generation speed (tokens/s) |
|---|---|---|
| Llama3 8B | Q4_K_M | 106.71 |
| Llama3 8B | F16 | N/A |
| Llama3 70B | Q4_K_M | N/A |
| Llama3 70B | F16 | N/A |

Key Observations:

- Llama3 8B (Q4_K_M): The NVIDIA 3080 Ti 12GB generates 106.71 tokens per second with Llama3 8B quantized to Q4_K_M. That works out to roughly 9.4 ms per token, so a 500-token answer takes under 5 seconds, which is fast enough for responsive, interactive use in smaller-scale LLM applications.

- Llama3 8B (F16): No benchmark data is available for this combination. This is likely because F16 stores each weight in 16 bits, so the 8B model's weights alone take about 16 GB, more than the card's 12 GB of VRAM.

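- Llama3 70B (Q4_K_M) and Llama3 70B (F16): No data is available for these combinations either. At over 40 GB even at Q4_K_M (and roughly 140 GB at F16), the 70B model's weights are far too large for 12 GB of VRAM.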
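The missing entries line up with a simple memory estimate. The sketch below approximates the size of the weights as parameters x bits per weight / 8 and checks it against 12 GB. The parameter counts (about 8.03 billion and 70.6 billion) and the ~4.85 average bits per weight used for Q4_K_M are approximations assumed for illustration, and the estimate ignores the KV cache and runtime buffers, so actual memory use is higher.

```python
# Rough weight-memory estimate: parameters x bits per weight / 8.
# ASSUMPTIONS: approximate parameter counts and ~4.85 average bits per weight
# for Q4_K_M; KV cache, activations, and runtime overhead are ignored.

VRAM_GB = 12.0  # NVIDIA 3080 Ti 12GB

PARAMS = {"Llama3 8B": 8.03e9, "Llama3 70B": 70.6e9}
BITS_PER_WEIGHT = {"Q4_K_M": 4.85, "F16": 16.0}


def weight_gb(params: float, bits: float) -> float:
    """Approximate size of the model weights in gigabytes (1 GB = 1e9 bytes)."""
    return params * bits / 8 / 1e9


def fits(params: float, bits: float, vram_gb: float = VRAM_GB) -> bool:
    """True if the weights alone fit in VRAM (necessary, not sufficient)."""
    return weight_gb(params, bits) < vram_gb


if __name__ == "__main__":
    for model, params in PARAMS.items():
        for fmt, bits in BITS_PER_WEIGHT.items():
            size = weight_gb(params, bits)
            verdict = "fits" if fits(params, bits) else "does not fit"
            print(f"{model:11s} {fmt:7s} ~{size:5.1f} GB -> {verdict} in {VRAM_GB:.0f} GB")
```

Under these assumptions it reports about 4.9 GB for Llama3 8B at Q4_K_M (fits), and about 16.1 GB, 42.8 GB, and 141.2 GB for the other three rows (none fit). Fitting the weights is necessary but not sufficient, since the KV cache and runtime buffers also need room.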

What is Quantization? Think of it like compressing a file. Instead of storing each of the model's weights in 16 bits (like a full-size photo), we store it in fewer bits (like a thumbnail); Q4_K_M averages a little under 5 bits per weight. This cuts memory use and the amount of data the GPU must read for each token, making it possible to run larger models on hardware with limited memory, at some cost in accuracy.
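To make the analogy concrete, here is a minimal sketch of symmetric 4-bit block quantization in plain Python. It is a deliberately simplified illustration, not the actual Q4_K_M layout: each block of weights shares one scale factor, and each weight is stored as a small integer from -8 to 7. The block size of 32 and the idea of storing the scale as a 16-bit float are assumptions made for this example.

```python
# Toy symmetric 4-bit block quantization (illustration only, NOT the real
# Q4_K_M layout). Each block of weights shares one scale factor.

BLOCK_SIZE = 32  # weights per block (assumed for this sketch)


def quantize_block(block: list[float]) -> tuple[float, list[int]]:
    """Map floats to 4-bit signed integers in [-8, 7] with one shared scale."""
    max_abs = max(abs(w) for w in block)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    q = [max(-8, min(7, round(w / scale))) for w in block]
    return scale, q


def dequantize_block(scale: float, q: list[int]) -> list[float]:
    """Recover approximate float weights from the integers and the scale."""
    return [scale * v for v in q]


def quantize(weights: list[float], block_size: int = BLOCK_SIZE):
    """Split weights into blocks and quantize each block independently."""
    return [quantize_block(weights[i:i + block_size])
            for i in range(0, len(weights), block_size)]


def dequantize(blocks) -> list[float]:
    """Concatenate the dequantized blocks back into one weight list."""
    out: list[float] = []
    for scale, q in blocks:
        out.extend(dequantize_block(scale, q))
    return out


if __name__ == "__main__":
    import random
    random.seed(0)
    weights = [random.gauss(0.0, 0.02) for _ in range(1024)]
    restored = dequantize(quantize(weights))
    max_err = max(abs(a - b) for a, b in zip(weights, restored))
    # If each scale were stored as a 16-bit float: 32 x 4 bits + 16 bits
    # = 144 bits per block, i.e. 4.5 bits per weight versus 16 for F16.
    bits_per_weight = (BLOCK_SIZE * 4 + 16) / BLOCK_SIZE
    print(f"max abs error: {max_err:.5f}, ~{bits_per_weight} bits/weight")
```

The rounding error per weight stays within half a scale step, while storage drops to about 4.5 bits per weight. Real K-quant formats such as Q4_K_M use a more elaborate block structure to reduce that error further.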

Why is Token Generation Speed Important? Imagine you're typing on a chat interface; the faster the tokens are generated, the smoother and more responsive the conversation will be.
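A quick way to see what 106.71 tokens per second means for a chat user is to turn it into latency. The sketch below assumes a steady generation rate, ignores the time spent processing the prompt, and uses a rough rule of thumb of about 0.75 English words per token (the real ratio depends on the tokenizer and the text).

```python
# Convert a generation speed (tokens/s) into response time.
# ASSUMPTION: ~0.75 English words per token, a common rule of thumb; the real
# ratio depends on the tokenizer and the text. Prompt processing is ignored.

MEASURED_TPS = 106.71  # Llama3 8B Q4_K_M on the NVIDIA 3080 Ti 12GB
WORDS_PER_TOKEN = 0.75


def ms_per_token(tps: float) -> float:
    """Average time between generated tokens, in milliseconds."""
    return 1000.0 / tps


def response_seconds(n_tokens: int, tps: float) -> float:
    """Time to generate n_tokens at a steady rate of tps tokens per second."""
    return n_tokens / tps


def words_per_second(tps: float, words_per_token: float = WORDS_PER_TOKEN) -> float:
    """Approximate output speed in words rather than tokens."""
    return tps * words_per_token


if __name__ == "__main__":
    print(f"{ms_per_token(MEASURED_TPS):.1f} ms per token")
    print(f"500-token reply: {response_seconds(500, MEASURED_TPS):.1f} s")
    print(f"~{words_per_second(MEASURED_TPS):.0f} words per second")
```

With the measured rate this prints about 9.4 ms per token, about 4.7 s for a 500-token reply, and roughly 80 words per second, well above typical human reading speed.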

Performance Analysis: Model and Device Comparison

Comparing Llama3 8B Performance on Different Devices

It's important to compare the performance of the NVIDIA 3080 Ti 12GB with other devices to gain a broader perspective. Unfortunately, we only have data for the 3080 Ti 12GB with Llama3 8B (Q4_K_M).

However, we can make a couple of observations:

- Token Generation Speed: We have no benchmarks for higher-end data-center GPUs such as the A100 or H100 here, but they would generally be expected to generate tokens considerably faster. Single-stream token generation is largely limited by memory bandwidth, and those cards offer substantially more of it than the 3080 Ti.

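- Memory Considerations: 12 GB of VRAM comfortably holds Llama3 8B at Q4_K_M (roughly 5 GB of weights), but not Llama3 8B at F16 (about 16 GB) or Llama3 70B even at Q4_K_M (over 40 GB). Memory, not speed, is the main limit on which models this card can run.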
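Both observations can be checked with back-of-envelope arithmetic. During single-stream generation each new token requires reading essentially all of the weights from VRAM, so memory bandwidth divided by weight size gives a rough ceiling on tokens per second, and a GPU with more bandwidth raises that ceiling proportionally. Separately, the KV cache grows linearly with context length. The figures below are assumptions, not measurements: 912 GB/s for the RTX 3080 Ti, about 4.9 GB of Q4_K_M weights, and a Llama 3 8B shape of 32 layers, 8 key/value heads, head dimension 128, with a 16-bit KV cache.

```python
# Back-of-envelope limits for Llama3 8B (Q4_K_M) on an NVIDIA 3080 Ti 12GB.
# ASSUMPTIONS (approximate, not measured here):
#   - 912 GB/s memory bandwidth (RTX 3080 Ti spec)
#   - ~4.9 GB of Q4_K_M weights, all read once per generated token (batch size 1)
#   - Llama 3 8B shape: 32 layers, 8 KV heads, head dim 128, 16-bit KV cache

BANDWIDTH_GBPS = 912.0
WEIGHTS_GB = 4.9
VRAM_GB = 12.0
MEASURED_TPS = 106.71
N_LAYERS, N_KV_HEADS, HEAD_DIM, KV_BYTES = 32, 8, 128, 2


def bandwidth_ceiling_tps(bandwidth_gbps: float, weights_gb: float) -> float:
    """Upper bound on single-stream tokens/s if each token reads all weights once."""
    return bandwidth_gbps / weights_gb


def kv_cache_gb(context_tokens: int) -> float:
    """KV cache size: keys and values for every layer, KV head, and token."""
    per_token = 2 * N_LAYERS * N_KV_HEADS * HEAD_DIM * KV_BYTES  # bytes
    return per_token * context_tokens / 1e9


if __name__ == "__main__":
    ceiling = bandwidth_ceiling_tps(BANDWIDTH_GBPS, WEIGHTS_GB)
    print(f"bandwidth ceiling ~{ceiling:.0f} tok/s; measured {MEASURED_TPS} "
          f"= {MEASURED_TPS / ceiling:.0%} of it")
    for ctx in (2048, 8192):
        total = WEIGHTS_GB + kv_cache_gb(ctx)
        print(f"context {ctx}: ~{total:.1f} GB of {VRAM_GB:.0f} GB")
```

Under these assumptions the ceiling is about 186 tokens/s, so the measured 106.71 tokens/s is roughly 57% of it, and even a full 8,192-token context adds only about 1.1 GB of KV cache, for roughly 6 GB in total. Compute buffers and framework overhead are not counted, so real usage will be somewhat higher.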

Practical Recommendations: Use Cases and Workarounds

Use Cases for 3080 Ti 12GB and Llama3 8B

The NVIDIA 3080 Ti 12GB and Llama3 8B combination is a good fit for:

- Smaller-Scale LLM Projects: If your project involves chatbots, summarization, translation, or similar text tasks for one user or a small team, this setup is sufficient, as long as an 8B model's output quality meets your needs.

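- Experimentation and Development: You can use this combo to explore the capabilities of Llama3 8B, experiment with prompts, and try lightweight, parameter-efficient fine-tuning (for example, LoRA adapters on a 4-bit quantized base model). Full fine-tuning of an 8B model needs far more than 12 GB of VRAM.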

Workarounds and Optimization Tips

- Tuning and Optimization: Don't rely on default settings. Benchmark your own prompts while varying the quantization level, context length, and inference settings, and check that the whole model is actually loaded onto the GPU rather than partly running on the CPU.

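- Alternative Quantization Levels: Quantization formats trade size for accuracy. Higher-precision formats such as 8-bit (INT8) or F16 preserve more accuracy but are larger and usually slower; at F16, Llama3 8B no longer fits in 12 GB. Lower-bit formats shrink the model further and can speed up generation, at a greater cost in accuracy.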

- Cloud-Based Solutions: If you need the power of larger models like Llama3 70B or ultra-fast response times, consider using cloud solutions like Google Cloud TPU, Amazon EC2, or Microsoft Azure.

FAQ

What is Llama3 8B?

Llama3 8B is a large language model developed by Meta (formerly Facebook) with roughly 8 billion parameters. It performs strongly for its size, and running it locally at interactive speeds calls for a GPU with enough VRAM to hold its weights: about 5 GB at Q4_K_M, or about 16 GB at F16.

Is the NVIDIA 3080 Ti 12GB Good for LLMs?

For smaller LLMs like Llama3 8B, it's a capable option: at Q4_K_M it generates about 107 tokens per second. For larger models like Llama3 70B, or for workloads that demand more throughput, consider GPUs with more memory or cloud-based services.
