Is NVIDIA 3080 Ti 12GB Powerful Enough for Llama3 70B?

Introduction

Large language models (LLMs) keep growing, and models like Llama3 70B push the boundaries of what's possible in natural language processing. Running a model of that size locally, however, requires significant compute and, above all, memory. This article explores whether a popular graphics card, the NVIDIA 3080 Ti 12GB, can handle Llama3 70B. We'll look at benchmark data, compare devices, and offer practical recommendations to help you decide whether this combination fits your needs.

Performance Analysis: Token Generation Speed Benchmarks

Let's dive into the heart of the matter: how fast does the 3080 Ti 12GB churn out tokens for the Llama3 70B model? Unfortunately, the data we have doesn't include those specific numbers.

However, we do have data for the smaller Llama3 8B model on the 3080 Ti 12GB at different precision levels. It gives a useful baseline for the card's performance, even though it can't stand in for direct Llama3 70B measurements.

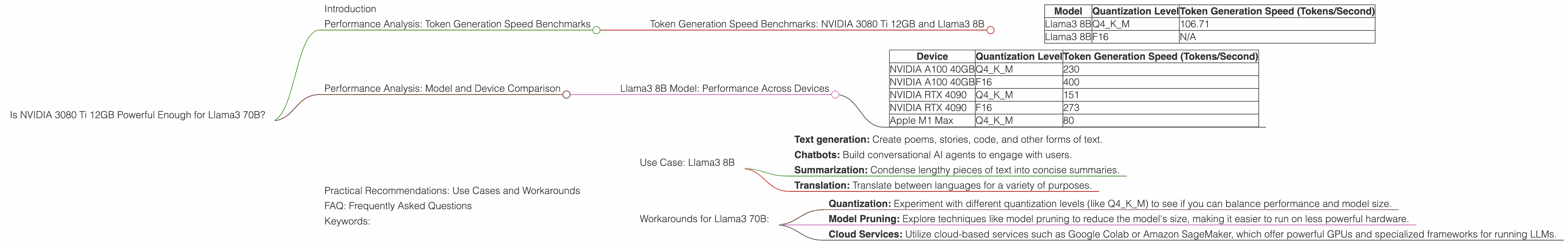

Token Generation Speed Benchmarks: NVIDIA 3080 Ti 12GB and Llama3 8B

| Model | Quantization Level | Token Generation Speed (Tokens/Second) |
|---|---|---|
| Llama3 8B | Q4_K_M | 106.71 |
| Llama3 8B | F16 | N/A |

What's the Takeaway? The 3080 Ti 12GB generates 106.71 tokens per second for the Llama3 8B model with Q4_K_M quantization, which is comfortably fast for interactive use. The F16 row has no result, most likely because the 16-bit weights of an 8B model take about 16 GB and don't fit in the card's 12 GB of VRAM. Keep in mind that none of this directly reflects performance with the much larger Llama3 70B model.
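
The N/A in the F16 row is consistent with a simple memory estimate. The sketch below is a back-of-the-envelope calculation, not a measurement: it assumes a nominal 8 billion parameters, counts only the weights (no KV cache or runtime overhead), and uses an approximate 4.85 bits per weight for Q4_K_M, which is an assumption rather than a figure from the benchmark data.

```python
# Rough estimate of the memory needed for model weights alone.
# Assumptions (not from the benchmark data): nominal parameter count,
# 1 GB = 1e9 bytes, and ~4.85 bits/weight as an approximation for Q4_K_M.

VRAM_GB = 12.0       # NVIDIA 3080 Ti 12GB
N_PARAMS_8B = 8e9    # nominal size of Llama3 8B


def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Return the approximate size of the weights in GB."""
    return n_params * bits_per_weight / 8 / 1e9


for label, bits in [("F16", 16.0), ("Q4_K_M (approx.)", 4.85)]:
    size_gb = weight_memory_gb(N_PARAMS_8B, bits)
    verdict = "fits" if size_gb < VRAM_GB else "does not fit"
    print(f"{label}: ~{size_gb:.1f} GB of weights, {verdict} in {VRAM_GB:.0f} GB")
```

Under these assumptions the F16 weights come to about 16 GB, while the Q4_K_M weights need roughly 5 GB and leave headroom for the KV cache.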

Performance Analysis: Model and Device Comparison

To understand the 3080 Ti 12GB's potential with Llama3 70B, let's compare it to other devices running the same Llama3 8B model.

Llama3 8B Model: Performance Across Devices

Here's a glimpse of how the Llama3 8B model performs on various other GPUs:

| Device | Quantization Level | Token Generation Speed (Tokens/Second) |
|---|---|---|
| NVIDIA A100 40GB | Q4_K_M | 230 |
| NVIDIA A100 40GB | F16 | 400 |
| NVIDIA RTX 4090 | Q4_K_M | 151 |
| NVIDIA RTX 4090 | F16 | 273 |
| Apple M1 Max | Q4_K_M | 80 |

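What's the Takeaway? At Q4_K_M, the 3080 Ti 12GB (106.71 tokens/second) is about 33% faster than the Apple M1 Max but clearly behind the NVIDIA RTX 4090 and the NVIDIA A100, which are roughly 1.4x and 2.2x faster. The table lists even higher figures for both of those cards at F16. Since F16 weights are more than three times larger than 4-bit ones and token generation is usually limited by memory bandwidth, treat those F16 numbers with caution.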
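
To make the comparison concrete, the Q4_K_M figures from the two tables above can be expressed relative to the 3080 Ti 12GB. This is plain arithmetic on the numbers already quoted; the helper name `relative_speed` is only illustrative.

```python
# Q4_K_M token generation speeds (tokens/second) for Llama3 8B,
# copied from the tables in this article.
BASELINE_NAME = "NVIDIA 3080 Ti 12GB"
Q4_SPEEDS = {
    "NVIDIA 3080 Ti 12GB": 106.71,
    "NVIDIA A100 40GB": 230.0,
    "NVIDIA RTX 4090": 151.0,
    "Apple M1 Max": 80.0,
}


def relative_speed(tokens_per_s: float, baseline_tokens_per_s: float) -> float:
    """Return a device's speed as a multiple of the baseline speed."""
    return tokens_per_s / baseline_tokens_per_s


baseline = Q4_SPEEDS[BASELINE_NAME]
# List devices from fastest to slowest.
for device, speed in sorted(Q4_SPEEDS.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{device}: {relative_speed(speed, baseline):.2f}x the {BASELINE_NAME}")
```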

Practical Recommendations: Use Cases and Workarounds

While we don't have the exact performance data for Llama3 70B on the 3080 Ti 12GB, we can still draw valuable conclusions and offer practical recommendations.

Use Case: Llama3 8B

The 3080 Ti 12GB is a solid choice for running the Llama3 8B model with Q4_K_M quantization. At roughly 107 tokens per second, it is fast enough for tasks such as:

- Text generation: Create poems, stories, code, and other forms of text.

- Chatbots: Build conversational AI agents to engage with users.

- Summarization: Condense lengthy pieces of text into concise summaries.

- Translation: Translate between languages for a variety of purposes.

Workarounds for Llama3 70B

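For Llama3 70B, the bottleneck on the 3080 Ti 12GB is memory rather than raw compute: even at 4-bit quantization the weights take roughly 40 GB, more than three times the card's 12 GB of VRAM, so the model cannot run entirely on the GPU. Consider these workarounds: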

- Quantization: Experiment with more aggressive quantization levels than Q4_K_M to shrink the model, keeping in mind that output quality degrades as the bit width drops and that even very low-bit variants of a 70B model are unlikely to fit in 12 GB.

- Model Pruning: Remove redundant weights or layers to reduce the model's size, making it easier to run on less powerful hardware.

- Cloud Services: Utilize cloud-based services such as Google Colab or Amazon SageMaker, which offer powerful GPUs and specialized frameworks for running LLMs.
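
To see how far quantization alone can go, it helps to turn the question around and ask how many bits per weight a 70B model could afford inside 12 GB. The sketch below is a rough estimate under stated assumptions: a nominal 70 billion parameters, 1 GB = 1e9 bytes, and a hypothetical `overhead_gb` reserve for the KV cache and runtime buffers.

```python
# How many bits per weight can a model afford within a given VRAM budget?
# Assumptions: nominal parameter count, 1 GB = 1e9 bytes, and an
# illustrative (hypothetical) overhead reserve; none of these are measured.

VRAM_GB = 12.0        # NVIDIA 3080 Ti 12GB
N_PARAMS_70B = 70e9   # nominal size of Llama3 70B


def max_bits_per_weight(vram_gb: float, n_params: float,
                        overhead_gb: float = 0.0) -> float:
    """Return the largest average bits/weight that fits in the usable VRAM."""
    usable_bytes = (vram_gb - overhead_gb) * 1e9
    return usable_bytes * 8 / n_params


no_reserve = max_bits_per_weight(VRAM_GB, N_PARAMS_70B)
with_reserve = max_bits_per_weight(VRAM_GB, N_PARAMS_70B, overhead_gb=1.5)
print(f"No overhead reserved: {no_reserve:.2f} bits/weight")
print(f"1.5 GB reserved:      {with_reserve:.2f} bits/weight")
```

Either way the budget comes out well under 2 bits per weight, far below what a 4-bit format needs, which is why quantization by itself is unlikely to make the full model fit on this card.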

FAQ: Frequently Asked Questions

Q: What is quantization?

A: Quantization is a technique that reduces the size of a large language model by storing its weights (and sometimes its activations) with fewer bits, for example 4 or 8 bits instead of 16. This makes the model cheaper to store and run on devices with limited memory, at the cost of some accuracy.
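
As a toy illustration of the idea, the sketch below quantizes a handful of made-up weights to 4-bit integers using a single shared scale (often called absmax quantization) and then restores them. It is deliberately simplified and is not the scheme used by any particular model format; the function names and sample values are hypothetical.

```python
# Toy symmetric (absmax) quantization of a small list of weights.
# This is an illustration only, not the algorithm of any real model format.

def quantize_absmax(weights, bits=4):
    """Map floats to signed integers in [-qmax, qmax] using one shared scale."""
    qmax = 2 ** (bits - 1) - 1                      # 7 for 4-bit
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0  # avoid dividing by zero
    quantized = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the integers and the scale."""
    return [q * scale for q in quantized]


weights = [0.12, -0.53, 0.07, 0.91, -0.34, 0.0, 0.48, -0.77]  # made-up values
quantized, scale = quantize_absmax(weights, bits=4)
restored = dequantize(quantized, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("4-bit integers:", quantized)
print(f"scale: {scale:.4f}, largest rounding error: {max_error:.4f}")
```

Each weight now needs only 4 bits plus a share of the scale, and the rounding error is bounded by half the scale, which is the accuracy cost that quantization trades for size.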

Q: What is the difference between Q4_K_M and F16?

A: Q4_K_M is one of the 4-bit "k-quant" formats used by llama.cpp. The "M" stands for the medium variant, which trades a slightly larger file for better quality than the small ("S") variant. F16 is not quantization at all: it stores weights as 16-bit floating-point numbers. F16 preserves more accuracy, but the model is more than three times larger and usually generates tokens more slowly.

Q: Why is it important to consider the token generation speed of an LLM?

A: Token generation speed determines how quickly the model can produce output. Faster generation speeds are crucial for real-time applications such as chatbots and interactive tasks.
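
A quick way to build intuition is to convert throughput into waiting time. The sketch below uses the 106.71 tokens/second figure measured for Llama3 8B on the 3080 Ti 12GB; the response lengths are hypothetical, and the estimate ignores the time spent processing the prompt.

```python
# Convert token generation speed into approximate response time.
# The response lengths below are hypothetical examples.

def generation_time_s(n_tokens: int, tokens_per_s: float) -> float:
    """Return the seconds needed to generate n_tokens at a steady rate."""
    if tokens_per_s <= 0:
        raise ValueError("tokens_per_s must be positive")
    return n_tokens / tokens_per_s


TOKENS_PER_S = 106.71  # Llama3 8B, Q4_K_M, NVIDIA 3080 Ti 12GB

for n_tokens in (50, 500, 2000):
    seconds = generation_time_s(n_tokens, TOKENS_PER_S)
    print(f"{n_tokens:>5} tokens: ~{seconds:.1f} s")
```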

Q: Should I choose a CPU or GPU for running LLMs?

A: GPUs are generally preferred for running LLMs because they offer much higher parallel processing capabilities compared to CPUs. However, CPUs can still be a viable option for smaller models or specific tasks.
