Is NVIDIA 3080 Ti 12GB a Good Investment for AI Startups?

Introduction

In the world of Artificial Intelligence (AI), Large Language Models (LLMs) are rapidly changing the landscape. From generating creative content to answering questions, LLMs are becoming increasingly powerful and versatile. Running them, however, requires substantial computational resources, and your choice of hardware directly affects both the speed and the cost of an AI project.

One popular option for AI startups and developers is the NVIDIA GeForce RTX 3080 Ti 12GB graphics card. But is it the right choice for your LLM workload? Let's look at how the 3080 Ti 12GB performs with Llama 3 models and whether it's a worthwhile investment for your AI startup.

The Power of LLMs: A Glimpse into the Future of AI

Imagine a computer program that can understand and generate human-like text. That's the essence of a Large Language Model (LLM). These sophisticated AI models are trained on massive datasets of text and code, enabling them to perform a range of tasks, including:

- Generating creative content: Writing stories, poems, articles, and even code.

- Summarizing text: Extracting key information from lengthy documents.

- Translating languages: Bridging the gap between different languages with impressive accuracy.

- Answering questions: Providing informative and relevant answers to your queries.

NVIDIA 3080 Ti 12GB: A GPU Powerhouse for AI

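The NVIDIA GeForce RTX 3080 Ti 12GB is a high-performance consumer graphics card designed for demanding tasks like gaming, and it is also widely used for AI development. It pairs 10,240 CUDA cores with 12GB of GDDR6X memory on a 384-bit bus (about 912 GB/s of bandwidth). For LLM inference, that 12GB of memory is usually the deciding constraint: a model's weights must fit in it for the model to run entirely on the GPU.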

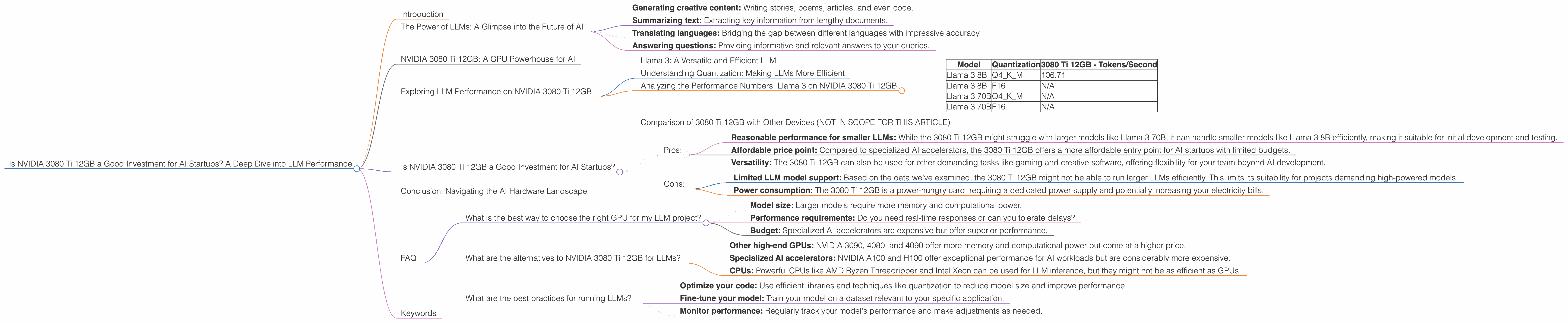

Exploring LLM Performance on NVIDIA 3080 Ti 12GB

To understand what the 3080 Ti 12GB can actually do for LLM workloads, we need to examine its performance with models of different sizes. We'll focus on the Llama 3 family because of its popularity and availability.

Llama 3: A Versatile and Efficient LLM

Llama 3 is a family of LLMs developed by Meta AI and released with openly available weights. These models are known for strong performance and versatility, making them suitable for a wide range of applications. We'll look at how the 3080 Ti 12GB handles Llama 3 models of different sizes (8B and 70B parameters) and precisions.

Understanding Quantization: Making LLMs More Efficient

Quantization is a technique for reducing an LLM's size so it runs more efficiently on hardware with limited resources. A model's parameters are a vast collection of numbers, usually stored as 16-bit floating-point values. Quantization stores them at lower precision (for example, around 4 bits per weight), producing a smaller model that needs less memory and is typically faster to run, at the cost of a small loss in accuracy.
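To make the idea concrete, here is a toy sketch of 4-bit quantization: a block of weights is mapped to small integers that share one scale factor, then mapped back. This is a simplified symmetric scheme chosen for illustration, not llama.cpp's Q4_K_M format (which is more elaborate), and the weight values are made up.

```python
# Toy symmetric 4-bit quantization of one block of weights.
# This is NOT llama.cpp's Q4_K_M format; it only illustrates the idea.

def quantize_block(weights, bits=4):
    """Map floats to signed `bits`-bit integers sharing one scale factor."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit signed integers
    absmax = max(abs(w) for w in weights)
    scale = absmax / qmax if absmax > 0 else 1.0
    # Round to the nearest integer step and clamp to the representable range.
    q = [max(-qmax - 1, min(qmax, round(w / scale))) for w in weights]
    return q, scale


def dequantize_block(q, scale):
    """Recover approximate float weights from the integers and the shared scale."""
    return [v * scale for v in q]


weights = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88, 0.05, 0.64]  # hypothetical values
q, scale = quantize_block(weights)
restored = dequantize_block(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print("4-bit values:", q)
print(f"scale={scale:.4f}, max reconstruction error={max_error:.4f}")
```

Each weight now needs 4 bits instead of 16, plus one shared scale per block, and the rounding error stays within half a quantization step.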

Think of it like a digital photo: you can have a full-resolution image with millions of colors, or a smaller, compressed version that uses fewer colors but retains the essence of the image. Quantization does something similar for LLMs.

Analyzing the Performance Numbers: Llama 3 on NVIDIA 3080 Ti 12GB

We've gathered performance data from reputable sources to assess the 3080 Ti 12GB's capabilities with Llama 3 models. Results are reported in tokens per second (tokens/s): how many tokens the GPU handles each second, where higher is better.

Here's a breakdown of the performance numbers:

| Model | Quantization | Tokens/s on 3080 Ti 12GB |
|---|---|---|
| Llama 3 8B | Q4_K_M | 106.71 |
| Llama 3 8B | F16 | N/A |
| Llama 3 70B | Q4_K_M | N/A |
| Llama 3 70B | F16 | N/A |

Key Takeaways:

- Llama 3 8B: The 3080 Ti 12GB performs well with the 8B model, reaching 106.71 tokens/s with Q4_K_M quantization. There is no F16 (16-bit, unquantized) result to compare against, most likely because the 8B model's weights alone take about 16GB at 16 bits per parameter, more than the card's 12GB.

- Llama 3 70B: There is no data for the 70B model on the 3080 Ti 12GB at either precision. Memory is the likely reason: even at roughly 4 to 5 bits per weight, 70 billion parameters take around 40GB, far beyond 12GB.
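A quick back-of-the-envelope check shows why only one configuration has a result. The sketch below estimates weight memory as parameters × bits per weight. The 4.8 bits-per-weight figure for Q4_K_M is an approximate average, the parameter counts are nominal, and the 1.5 GiB overhead for the KV cache and runtime is a hypothetical allowance, not a measured value.

```python
# Estimate whether a model's weights fit in the RTX 3080 Ti's 12 GiB of VRAM.
# Assumptions: nominal parameter counts, ~4.8 bits/weight for Q4_K_M (approximate
# average), and a hypothetical 1.5 GiB allowance for KV cache and runtime overhead.

VRAM_GIB = 12.0
OVERHEAD_GIB = 1.5  # hypothetical headroom, varies with context length and runtime

BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.8}


def weight_gib(params_billion, bits):
    """Memory needed for the weights alone, in GiB."""
    return params_billion * 1e9 * bits / 8 / 1024 ** 3


def fits(params_billion, quant, vram_gib=VRAM_GIB, overhead_gib=OVERHEAD_GIB):
    """True if weights plus the assumed overhead fit entirely in VRAM."""
    return weight_gib(params_billion, BITS_PER_WEIGHT[quant]) + overhead_gib <= vram_gib


for params in (8, 70):
    for quant in ("Q4_K_M", "F16"):
        need = weight_gib(params, BITS_PER_WEIGHT[quant])
        print(f"Llama 3 {params}B {quant}: ~{need:.1f} GiB of weights, "
              f"fits in 12 GiB: {fits(params, quant)}")
```

Only Llama 3 8B at Q4_K_M fits, which matches the one non-N/A row in the table. Runtimes such as llama.cpp can keep some layers in system RAM instead, but at a large cost in speed.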

Comparison of 3080 Ti 12GB with Other Devices

While we're focusing on the 3080 Ti 12GB, other hardware, such as powerful CPUs and specialized AI accelerators, offers different levels of LLM performance. A detailed comparison is beyond the scope of this article; the right choice depends on the LLM you're using, the performance you need, and your budget.

Is NVIDIA 3080 Ti 12GB a Good Investment for AI Startups?

The question of whether the 3080 Ti 12GB is a good investment for AI startups depends on your specific needs and goals. Let's consider the pros and cons:

Pros:

- Reasonable performance for smaller LLMs: While the 3080 Ti 12GB cannot hold larger models like Llama 3 70B in its memory, it runs quantized smaller models like Llama 3 8B efficiently (106.71 tokens/s at Q4_K_M), making it suitable for development, testing, and light inference.

- Affordable price point: Compared to data-center AI accelerators, the 3080 Ti 12GB is a more affordable entry point for AI startups with limited budgets.

- Versatility: The 3080 Ti 12GB can also be used for other demanding tasks like gaming and creative software, offering flexibility for your team beyond AI development.

Cons:

- Limited memory for larger models: With 12GB of VRAM, the 3080 Ti 12GB cannot run larger LLMs such as Llama 3 70B entirely on the GPU, which limits its suitability for projects that need high-capacity models.

- Power consumption: The 3080 Ti 12GB is power-hungry, with a 350 W total graphics power rating. NVIDIA recommends a 750 W system power supply, and sustained workloads will noticeably increase your electricity bill.
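To put the power draw in budget terms, here is a rough monthly cost estimate. It assumes, as an upper bound, that the card draws its full 350 W rating for every hour it runs, and it uses a hypothetical electricity price of 0.15 per kWh; substitute your own usage and tariff. It covers the GPU only, not the rest of the system.

```python
# Rough electricity-cost estimate for one GPU.
# Assumptions (hypothetical): constant draw at the full 350 W rating while running,
# and a price of 0.15 per kWh. Real average draw is usually lower.

def monthly_energy_cost(watts, hours_per_day, price_per_kwh, days=30):
    """Return (energy in kWh, cost) for a month of use at a constant power draw."""
    kwh = watts / 1000 * hours_per_day * days
    return kwh, kwh * price_per_kwh


for hours in (8, 24):
    kwh, cost = monthly_energy_cost(watts=350, hours_per_day=hours, price_per_kwh=0.15)
    print(f"{hours} h/day: ~{kwh:.0f} kWh/month, ~{cost:.2f}/month")
```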

Conclusion: Navigating the AI Hardware Landscape

The NVIDIA 3080 Ti 12GB can be a solid choice for AI startups working with smaller LLMs or just starting their AI journey. It offers a good balance of performance and affordability, but its 12GB of memory limits it when it comes to running larger models.

Ultimately, the best hardware choice for your AI startup depends on your specific needs, budget, and the LLMs you intend to utilize. Remember to research and compare different options before making a decision, and don't be afraid to experiment with different configurations to find the perfect fit for your AI projects.

FAQ

What is the best way to choose the right GPU for my LLM project?

The ideal GPU depends on the specific LLM model you're using, your desired performance level, and your budget. Consider these factors:

- Model size: Larger models require more memory and computational power.

- Performance requirements: Do you need real-time responses or can you tolerate delays?

- Budget: Specialized AI accelerators are expensive but offer superior performance.
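One way to judge whether a throughput figure meets your performance requirements is to convert it into response time. The sketch below assumes the 106.71 tokens/s measured for Llama 3 8B at Q4_K_M is the generation speed for a single request; it ignores prompt processing time and batching, so treat the results as rough estimates.

```python
# Convert throughput (tokens/s) into an approximate response time.
# Assumption: the measured figure is single-request generation speed;
# prompt processing and batching are ignored.

MEASURED_TOKENS_PER_S = 106.71  # Llama 3 8B, Q4_K_M, RTX 3080 Ti 12GB


def generation_seconds(num_tokens, tokens_per_second=MEASURED_TOKENS_PER_S):
    """Seconds needed to produce num_tokens at a constant throughput."""
    return num_tokens / tokens_per_second


for n in (100, 500, 2000):
    print(f"{n:>5} tokens: ~{generation_seconds(n):.1f} s")
```

At this rate a typical few-hundred-token answer arrives in a few seconds, which is usually acceptable for interactive use with a single user.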

What are the alternatives to NVIDIA 3080 Ti 12GB for LLMs?

There are several alternatives to the 3080 Ti 12GB, including:

- Other high-end GPUs: The NVIDIA RTX 3090 (24GB), RTX 4080 (16GB), and RTX 4090 (24GB) offer more memory and equal or greater computational power, but come at a higher price.

- Specialized AI accelerators: NVIDIA A100 and H100 offer exceptional performance for AI workloads but are considerably more expensive.

- CPUs: Powerful CPUs like AMD Ryzen Threadripper and Intel Xeon can be used for LLM inference, but they might not be as efficient as GPUs.

What are the best practices for running LLMs?

- Optimize your code: Use efficient libraries and techniques like quantization to reduce model size and improve performance.

- Fine-tune your model: Train your model on a dataset relevant to your specific application.

- Monitor performance: Regularly track your model's performance and make adjustments as needed.

Keywords

NVIDIA 3080 Ti 12GB, LLM, Llama 3, AI, GPU, Q4_K_M, F16, tokens per second, AI startup, LLM inference, hardware, performance, budget, AI accelerator, CPU