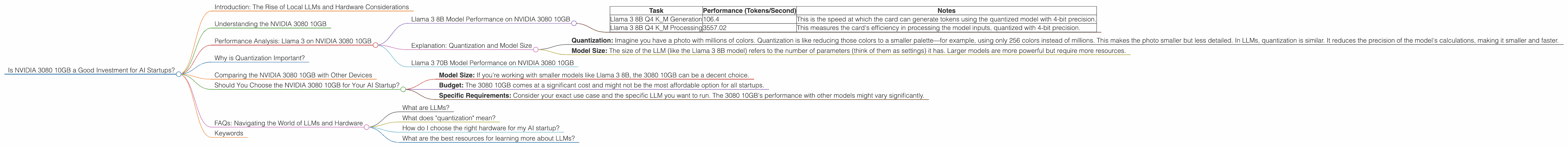

Is the NVIDIA RTX 3080 10GB a Good Investment for AI Startups?

Introduction: The Rise of Local LLMs and Hardware Considerations

The world of AI is buzzing with excitement about Large Language Models (LLMs). These powerful tools are transforming our interactions with technology, from creative writing and code generation to answering complex questions and translating languages. But running LLMs can be resource-intensive, requiring powerful hardware. So, if you're an AI startup looking for a cost-effective solution to run your LLM workloads locally, you might wonder: is the NVIDIA 3080 10GB a good investment?

This article will dive into the performance of the NVIDIA 3080 10GB with several popular LLMs and help you decide if this particular graphics card is the right fit for your AI projects. We'll look at the numbers, assess the trade-offs, and provide practical insights for choosing the best hardware for your AI startup.

Understanding the NVIDIA 3080 10GB

The NVIDIA GeForce RTX 3080 10GB is a high-end graphics card designed for gaming and content creation, and it is increasingly used for AI work. It has 10GB of GDDR6X memory and 8,704 CUDA cores for parallel processing, and it launched in 2020 at a list price of $699. For running LLMs, the 10GB of video memory (VRAM) is usually the most important spec, because a model needs to fit in VRAM to run at full speed. So is it a good investment for AI startups? Let's look at the numbers.

Performance Analysis: Llama 3 on NVIDIA 3080 10GB

Llama 3 8B Model Performance on NVIDIA 3080 10GB

We'll start with Meta's Llama 3, a popular openly available LLM. The Llama 3 8B model is a good starting point for many AI startups because it balances capability against resource requirements.

The NVIDIA 3080 10GB runs a 4-bit quantized Llama 3 8B model well. Here's a breakdown:

| Task | Throughput (tokens/second) | Notes |
|---|---|---|
| Llama 3 8B Q4_K_M, token generation | 106.4 | How fast the card generates new output tokens with the 4-bit quantized (Q4_K_M) model. |
| Llama 3 8B Q4_K_M, prompt processing | 3557.02 | How fast the card processes input (prompt) tokens with the same 4-bit quantized model. |

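- Important Note: We do not have performance data for the Llama 3 8B F16 model (16-bit precision) on this GPU. At 16 bits per weight, its roughly 8 billion parameters take about 16GB, more than the card's 10GB of VRAM, so the unquantized model cannot fit entirely on this card.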
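To put the two throughput figures from the table in more concrete terms, here is a minimal sketch that turns them into a rough per-request latency. It assumes both rates stay constant for the whole request (in practice generation slows somewhat as the context grows) and ignores batching, model loading, and network overhead; the 2,000-token prompt and 500-token answer are hypothetical.

```python
# Throughput figures from the table: Llama 3 8B Q4_K_M on an RTX 3080 10GB.
PROMPT_TOKENS_PER_S = 3557.02  # prompt processing (reading the input)
GEN_TOKENS_PER_S = 106.4       # token generation (writing the output)


def estimate_latency_s(prompt_tokens: int, output_tokens: int) -> float:
    """Rough seconds to answer one request.

    Assumes both throughputs stay constant for the whole request and ignores
    batching, sampling cost, model loading, and network overhead.
    """
    prefill_s = prompt_tokens / PROMPT_TOKENS_PER_S
    decode_s = output_tokens / GEN_TOKENS_PER_S
    return prefill_s + decode_s


if __name__ == "__main__":
    # Hypothetical request: a 2,000-token prompt and a 500-token answer.
    total = estimate_latency_s(2000, 500)
    print(f"Estimated latency: {total:.1f} s")
```

For that hypothetical request the estimate comes to a little over 5 seconds, and most of it is spent generating the answer: prompt processing is more than 30 times faster per token than generation, so output length dominates response time.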

Explanation: Quantization and Model Size

Let's break down these terms:

Quantization: Imagine you have a photo with millions of colors. Quantization is like reducing those colors to a smaller palette, for example using only 256 colors instead of millions. This makes the photo smaller but less detailed. In LLMs, quantization works similarly: it stores the model's weights with fewer bits (for example, 4 bits instead of 16), which makes the model much smaller and usually faster to run, at the cost of some accuracy.
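The "smaller palette" idea can be sketched in a few lines. This is a toy symmetric 4-bit scheme with one shared scale factor, chosen only to illustrate the principle; it is not the Q4_K_M format from the table, which quantizes weights in small blocks, each with its own scale. The weight values are hypothetical.

```python
def quantize_4bit(weights: list[float]) -> tuple[list[int], float]:
    """Toy symmetric 4-bit quantization with one shared scale.

    Each weight becomes an integer in [-7, 7] (a signed 4-bit value;
    -8 is left unused to keep the range symmetric).
    """
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    levels = [max(-7, min(7, round(w / scale))) for w in weights]
    return levels, scale


def dequantize(levels: list[int], scale: float) -> list[float]:
    """Recover approximate float weights from the 4-bit levels."""
    return [q * scale for q in levels]


if __name__ == "__main__":
    weights = [0.12, -0.53, 0.91, -0.04]  # hypothetical weight values
    levels, scale = quantize_4bit(weights)
    restored = dequantize(levels, scale)
    print("4-bit levels:", levels)
    print("restored:    ", [round(w, 3) for w in restored])
```

The four weights map to the levels `[1, -4, 7, 0]`, and each restored value lands within half a scale step of the original: the "lost detail" in the photo analogy.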

Model Size: The size of an LLM refers to the number of parameters (think of them as settings) it has; the "8B" in Llama 3 8B means about 8 billion parameters. Larger models are generally more capable but need more memory and compute.
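Parameter count and precision together determine how much memory a model needs. Here is a back-of-the-envelope sketch, assuming memory for the weights is simply parameters times bits per weight; it rounds Llama 3 8B to 8 billion parameters and ignores the KV cache and runtime overhead, which need extra VRAM on top. Real quantized files such as Q4_K_M average somewhat more than 4 bits per weight because they also store scale factors.

```python
VRAM_GB = 10.0  # RTX 3080 10GB


def weight_memory_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate memory for the model weights alone, in gigabytes (1e9 bytes).

    Ignores the KV cache, activations, and runtime overhead, which all need
    extra VRAM on top of this figure.
    """
    # params * bits / 8 = bytes; billions of bytes = GB
    return params_billions * bits_per_weight / 8


if __name__ == "__main__":
    params_b = 8.0  # Llama 3 8B, rounded to 8 billion parameters
    for label, bits in [("16-bit (F16)", 16), ("8-bit", 8), ("4-bit", 4)]:
        gb = weight_memory_gb(params_b, bits)
        verdict = "fits" if gb < VRAM_GB else "does not fit"
        print(f"{label:>12}: {gb:5.1f} GB -> weights {verdict} in {VRAM_GB:.0f} GB")
```

At 16 bits the weights alone need about 16 GB, which is why there is no F16 result for this card, while the 4-bit version needs roughly 4 GB and leaves room to spare.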

Llama 3 70B Model Performance on NVIDIA 3080 10GB

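We don't have performance data for the Llama 3 70B model on the NVIDIA 3080 10GB, and the main obstacle is memory rather than raw compute. Even at 4 bits per weight, 70 billion parameters take about 35GB, more than three times the card's 10GB of VRAM. The model could only run with most of its layers offloaded to system RAM and the CPU, which would likely make it much slower.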
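How much of a 70B model could stay on the GPU? A rough sketch, assuming the weights are spread evenly across Llama 3 70B's 80 transformer layers (embeddings and the output head are ignored) and that about 8 GB of the 10 GB is usable for weights after leaving room for the KV cache and CUDA context. The 8 GB budget is an assumption, not a measured figure.

```python
import math


def gpu_layers_that_fit(params_billions: float, bits_per_weight: float,
                        n_layers: int, vram_budget_gb: float) -> tuple[int, float]:
    """Estimate how many transformer layers fit in a VRAM budget.

    Simplifying assumption: the weights are spread evenly across layers
    (embeddings and output head are ignored). Returns (layers on GPU, total GB).
    """
    total_gb = params_billions * bits_per_weight / 8
    per_layer_gb = total_gb / n_layers
    on_gpu = min(n_layers, math.floor(vram_budget_gb / per_layer_gb))
    return on_gpu, total_gb


if __name__ == "__main__":
    # Llama 3 70B has 80 layers; assume ~8 GB of the 3080's 10 GB is usable
    # for weights after leaving room for the KV cache and CUDA context.
    on_gpu, total = gpu_layers_that_fit(70, 4, 80, 8.0)
    print(f"~{total:.0f} GB of weights; about {on_gpu} of 80 layers on the GPU")
```

Under these assumptions only about 18 of the 80 layers fit, so every generated token would pass through more than three quarters of the model on the CPU, which is why throughput would likely drop sharply. llama.cpp exposes this kind of split through its GPU-layers setting (`-ngl`).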

Why is Quantization Important?

Think of it this way: a giant, detailed map of the world is hard to carry around and use. Quantization is like swapping it for a smaller-scale print: you lose some fine detail, but it fits in your pocket. For LLMs, that smaller size is what lets a model fit into a card's limited VRAM.

Comparing the NVIDIA 3080 10GB with Other Devices

While we're focusing on the NVIDIA 3080 10GB, it would be helpful to compare its performance with other popular choices for AI startups. Unfortunately, we don't have benchmark data for other devices, so a side-by-side comparison isn't possible here.

Should You Choose the NVIDIA 3080 10GB for Your AI Startup?

The NVIDIA 3080 10GB is a capable card for running smaller LLMs like Llama 3 8B: in our data, the 4-bit quantized model generates about 106 tokens per second. Before buying, consider the following factors:

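- Model Size: If you're working with smaller, quantized models like Llama 3 8B, the 3080 10GB is a reasonable choice. Its 10GB of VRAM is too small to hold the 16-bit 8B model, or much larger models such as Llama 3 70B, entirely on the GPU.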

- Budget: The 3080 10GB comes at a significant cost and might not be the most affordable option for all startups.

- Specific Requirements: Consider your exact use case and the specific LLM you want to run. The 3080 10GB's performance with other models might vary significantly.
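One requirement that is easy to overlook is context length: besides the weights, the model keeps a key/value (KV) cache for every token in its context. Here is a minimal sketch for Llama 3 8B, assuming a 16-bit cache and the attention shape from the published model configuration (32 layers, 8 key/value heads, head dimension 128); verify these values against the model card for the checkpoint you actually use.

```python
# Llama 3 8B attention shape, from the published model configuration
# (verify against the model card for the exact checkpoint you use).
N_LAYERS = 32
N_KV_HEADS = 8       # grouped-query attention: 8 key/value heads
HEAD_DIM = 128       # 4096 hidden size / 32 attention heads
BYTES_PER_VALUE = 2  # assumes a 16-bit KV cache


def kv_cache_gib(context_tokens: int) -> float:
    """Memory for the key/value cache at a given context length, in GiB."""
    # 2 tensors (K and V) per layer, each N_KV_HEADS * HEAD_DIM values per token
    per_token_bytes = 2 * N_LAYERS * N_KV_HEADS * HEAD_DIM * BYTES_PER_VALUE
    return context_tokens * per_token_bytes / 2**30


if __name__ == "__main__":
    for ctx in (2048, 4096, 8192):
        print(f"{ctx:>5} tokens of context -> {kv_cache_gib(ctx):.2f} GiB KV cache")
```

At Llama 3 8B's full 8,192-token context, the cache adds about 1 GiB on top of the weights, so a 4-bit 8B model still leaves headroom on a 10GB card. Serving several users at once is a different story: each concurrent sequence needs its own cache, and that memory adds up quickly.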

FAQs: Navigating the World of LLMs and Hardware

What are LLMs?

Large Language Models (LLMs) are advanced AI systems trained on massive amounts of text data. They can understand and generate human-like text, making them incredibly versatile for various tasks.

What does "quantization" mean?

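Quantization is a technique that reduces the memory footprint and computational demands of LLMs by storing the model's weights at lower precision, for example as 4-bit integers instead of 16-bit floating-point numbers. This makes the model smaller and usually faster, with some loss of accuracy.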

How do I choose the right hardware for my AI startup?

Start with the size of the LLM you'll be running and check that it fits in the GPU's memory at your chosen precision, then weigh the performance you need against your budget. Research different GPUs and CPUs to find the best fit for your specific needs.

What are the best resources for learning more about LLMs?

Start with the official documentation and model cards published by model developers (for example, the model cards released alongside Llama 3), then explore online forums and blogs. You can find valuable tutorials, articles, and communities dedicated to LLMs.
