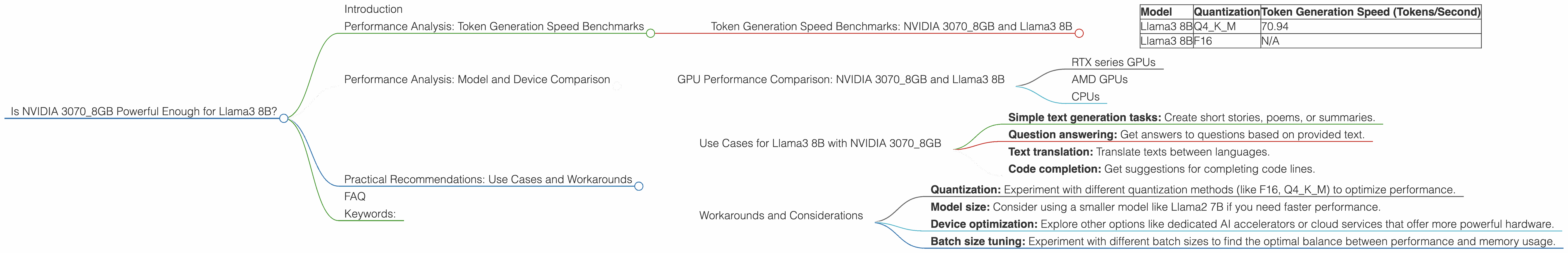

Is NVIDIA 3070 8GB Powerful Enough for Llama3 8B?

Introduction

Are you a developer, a data scientist, or just a tech enthusiast eager to explore Large Language Models (LLMs)? You probably want to know whether your NVIDIA 3070_8GB can handle Llama3 8B, a powerful and versatile LLM. This article looks at how Llama3 8B performs on the NVIDIA 3070_8GB, giving you the insights you need to decide if it's the right fit for your projects.

This deep dive will explore the speed at which your NVIDIA 3070_8GB can generate tokens using Llama3 8B, compare its performance with other devices and models, and offer practical recommendations for use cases and potential workarounds.

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: NVIDIA 3070_8GB and Llama3 8B

This table shows the token generation speed of Llama3 8B on the NVIDIA 3070_8GB.

| Model | Quantization | Token Generation Speed (Tokens/Second) |
|---|---|---|
| Llama3 8B | Q4_K_M | 70.94 |
| Llama3 8B | F16 | N/A |

What does this information tell us?

The NVIDIA 3070_8GB generates 70.94 tokens per second when running Llama3 8B with Q4_K_M quantization. That is a decent rate for interactive use, but it is a single data point for one quantization level, so keep the limitations of this specific setup in mind.
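To put the number in perspective, here is a minimal sketch that converts the measured rate into an estimated generation time. It assumes a constant rate and ignores prompt processing time; the function name `generation_time_seconds` and the response lengths are illustrative choices, not part of the benchmark.

```python
# Measured generation throughput from the table above (tokens per second).
TOKENS_PER_SECOND = 70.94


def generation_time_seconds(num_tokens: int,
                            tokens_per_second: float = TOKENS_PER_SECOND) -> float:
    """Estimate the time to generate num_tokens at a constant rate.

    ASSUMPTION: the rate stays constant and prompt processing is ignored.
    """
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


# Illustrative response lengths (hypothetical, chosen for the example).
for n in (100, 500, 2000):
    print(f"{n:>5} tokens: ~{generation_time_seconds(n):.1f} s")
```

At this rate, a 500-token answer takes roughly 7 seconds, which is comfortably faster than most people read.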

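Q4_K_M is a roughly 4-bit quantization format that shrinks the model so it needs less memory, which can improve performance on memory-constrained devices. F16, by contrast, is not really a quantization: it stores the weights as 16-bit floating-point values. We do not have data about the performance of Llama3 8B in F16 on this specific device. At 2 bytes per parameter, an 8B-parameter model needs about 16 GB for its weights alone, which is more than the card's 8 GB of VRAM.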
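As a rough check on why the format matters for an 8 GB card, the weight footprint can be estimated as parameters × bits per weight ÷ 8. The sketch below makes several assumptions: the parameter count is rounded to 8 billion, the average of about 4.85 bits per weight for Q4_K_M is an approximation rather than a measured value for this model file, and the KV cache and activations (which need additional memory) are ignored.

```python
def weight_memory_gib(num_params: float, bits_per_weight: float) -> float:
    """Approximate memory needed for model weights alone, in GiB."""
    return num_params * bits_per_weight / 8 / 1024**3


NUM_PARAMS = 8.0e9    # ASSUMPTION: Llama3 8B rounded to 8 billion parameters
VRAM_GIB = 8.0        # nominal VRAM of the NVIDIA 3070_8GB

formats = {
    "F16": 16.0,      # 16-bit floats: 2 bytes per weight
    "Q4_K_M": 4.85,   # ASSUMPTION: approximate average bits per weight
}

for name, bits in formats.items():
    size = weight_memory_gib(NUM_PARAMS, bits)
    verdict = "fits" if size < VRAM_GIB else "does not fit"
    print(f"{name:>7}: ~{size:.1f} GiB of weights, {verdict} in {VRAM_GIB:.0f} GiB")
```

Under these assumptions the Q4_K_M weights leave a few GiB of headroom for context, while the F16 weights would not fit on the card without offloading part of the model.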

Performance Analysis: Model and Device Comparison

GPU Performance Comparison: NVIDIA 3070_8GB and Llama3 8B

Unfortunately, we only have performance data for the NVIDIA 3070_8GB with Llama3 8B. This means we cannot compare it to other devices or models.

For a complete comparison, we need data for other devices like:

- Other RTX series GPUs

- AMD GPUs

- CPUs

Practical Recommendations: Use Cases and Workarounds

Use Cases for Llama3 8B with NVIDIA 3070_8GB

The NVIDIA 3070_8GB can be a suitable choice for use cases that involve:

- Simple text generation tasks: Create short stories, poems, or summaries.

- Question answering: Get answers to questions based on provided text.

- Text translation: Translate texts between languages.

- Code completion: Get suggestions for completing code lines.

Note: While the NVIDIA 3070_8GB can handle these tasks, keep in mind that this assessment rests on a single benchmark (token generation speed with Q4_K_M), so results may vary with the task.

Workarounds and Considerations

- Quantization: Experiment with different quantization levels (like Q4_K_M) to balance speed, memory use, and output quality. Note that F16 weights for an 8B model are larger than 8 GB of VRAM.

- Model size: Consider using a smaller model like Llama2 7B if you need faster performance.

- Device optimization: Explore other options like dedicated AI accelerators or cloud services that offer more powerful hardware.

- Batch size tuning: Experiment with different batch sizes to find the optimal balance between performance and memory usage.
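To compare settings such as quantization level or batch size, it helps to measure throughput the same way each time. Below is a minimal sketch of a timing harness. Both `measure_tokens_per_second` and `fake_generate` are hypothetical names, and `fake_generate` is a placeholder that only sleeps instead of calling a real model; replace it with a function that calls your own runtime and returns the number of tokens it produced.

```python
import time
from typing import Callable


def measure_tokens_per_second(generate: Callable[[str, int], int],
                              prompt: str,
                              max_tokens: int,
                              runs: int = 3) -> float:
    """Average tokens/second over several calls to generate(prompt, max_tokens).

    generate must return the number of tokens it actually produced.
    """
    total_tokens = 0
    total_seconds = 0.0
    for _ in range(runs):
        start = time.perf_counter()
        produced = generate(prompt, max_tokens)
        total_seconds += time.perf_counter() - start
        total_tokens += produced
    return total_tokens / total_seconds


def fake_generate(prompt: str, max_tokens: int) -> int:
    """PLACEHOLDER for a real model call: sleeps about 1 ms per token."""
    time.sleep(0.001 * max_tokens)
    return max_tokens


rate = measure_tokens_per_second(fake_generate, "Hello", max_tokens=50)
print(f"{rate:.1f} tokens/s")
```

Averaging over a few runs smooths out one-off delays such as model warm-up. Keep the prompt and `max_tokens` fixed when comparing two configurations so the numbers are comparable.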

FAQ

Q: What is quantization?

A: Quantization is a technique used to reduce the size of a model by representing its weights using a smaller number of bits. This can improve performance on devices with limited memory or processing power.
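To make the idea concrete, here is a toy sketch of symmetric 4-bit quantization on a handful of weights. This is not the actual Q4_K_M format, which is more elaborate; the function names and the sample weights are made up for illustration.

```python
def quantize_4bit(weights):
    """Map floats to integer codes in [-7, 7] plus one shared float scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [round(w / scale) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate float weights from the integer codes."""
    return [c * scale for c in codes]


# Hypothetical sample weights, chosen only for the example.
weights = [0.12, -0.70, 0.33, 0.04]
codes, scale = quantize_4bit(weights)
restored = dequantize(codes, scale)

for original, code, approx in zip(weights, codes, restored):
    print(f"{original:+.2f} -> code {code:+d} -> {approx:+.2f}")
```

Each weight is now stored as a small integer instead of a 16-bit or 32-bit float, at the cost of a rounding error of at most half the scale. That trade of a little accuracy for a much smaller model is what lets an 8B model run on an 8 GB card.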

Q: How can I further improve the performance of Llama3 8B on my NVIDIA 3070_8GB?

A: You can experiment with optimization techniques such as model parallelism (if you have more than one GPU), mixed precision inference, and code optimization.

Q: What are the limitations of running an LLM locally compared to using cloud-based services?

A: Local LLMs offer more control and privacy but may lack the resources and scalability of cloud-based services.

Keywords:

Llama3 8B, NVIDIA 3070_8GB, Token Generation Speed, Quantization, Performance Comparison, Use Cases, Workarounds, Large Language Models, LLM, GPU, GPU Performance, Local LLM, Text Generation, Question Answering, Text Translation, Code Completion.