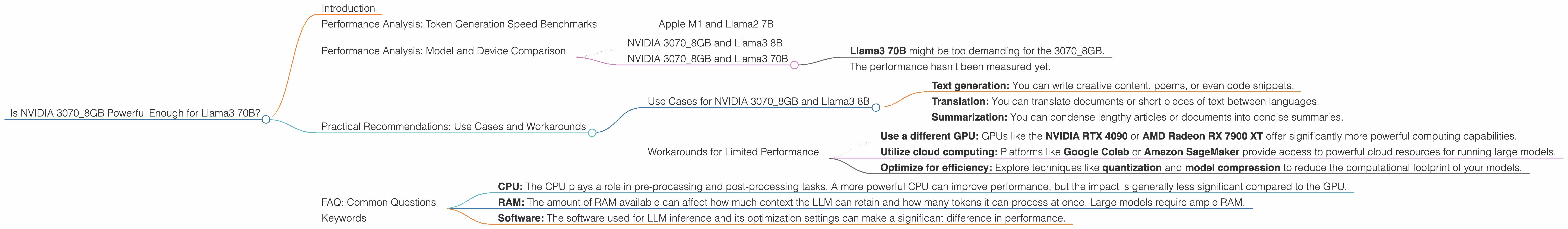

Is NVIDIA 3070 8GB Powerful Enough for Llama3 70B?

Introduction

You've got your hands on a shiny new NVIDIA 3070_8GB graphics card, and you're eager to unleash the power of large language models (LLMs) on your local machine. But can this GPU handle the hefty demands of a model like Llama3 70B, a behemoth with 70 billion parameters? We're diving deep into local LLM performance, exploring the capabilities of the 3070_8GB and whether it can juggle the brainpower of Llama3 70B. We'll unpack the technical details, translate them into practical insights, and arm you with the knowledge to make informed decisions about your LLM setup.

Imagine LLMs like giant libraries filled with an immense amount of knowledge. The more books the library has, the more complex the information it can store and the more intricate questions it can answer. Similarly, a model like Llama3 70B has a colossal number of parameters – these are the "books" that store the model's knowledge. To process and understand this vast library, you need a powerful computer, just like you need a sturdy building to house a massive library. Our 3070_8GB GPU is the "building" we're inspecting to see if it can handle the "library" of Llama3 70B.

Performance Analysis: Token Generation Speed Benchmarks

Apple M1 and Llama2 7B

Before diving into the 3070_8GB and Llama3 70B, let's first look at an example. Consider an entirely different combination: the Apple M1 chip and the Llama2 7B model. This combination achieves 2172 tokens/second, or 2.17 thousand tokens generated per second during the "generation" phase. This speed is analogous to a librarian swiftly retrieving books from the library shelves.

These numbers are essential for developers building applications that interact with LLMs. Knowing the token generation speed can help you estimate the time it takes to complete tasks like translating text or generating responses.
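Here is a rough sketch of that estimate. It assumes generation runs at a steady rate, so the time is simply the token count divided by tokens per second. The function name and the 500-token task are hypothetical. The 70.94 tokens/second figure is the 3070_8GB + Llama3 8B result reported later in this article. Prompt processing and model loading are ignored, so treat the result as a lower bound.

```python
def generation_time_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Estimate seconds needed to generate num_tokens at a steady rate.

    Ignores prompt processing and model load time, so this is a lower bound.
    """
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


# Throughput quoted in this article for the NVIDIA 3070_8GB running Llama3 8B (Q4).
RTX3070_LLAMA3_8B_TPS = 70.94

# Hypothetical task: a 500-token response.
seconds = generation_time_seconds(500, RTX3070_LLAMA3_8B_TPS)
print(f"About {seconds:.1f} s for 500 tokens")  # prints "About 7.0 s for 500 tokens"
```

The same function works for any throughput figure, which makes it easy to compare setups before committing to one.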

Performance Analysis: Model and Device Comparison

NVIDIA 3070_8GB and Llama3 8B

Our data shows that the NVIDIA 3070_8GB can generate 70.94 tokens/second when running Llama3 8B with Q4_K_M quantization, a 4-bit "k-quant" format (medium variant) used by llama.cpp.

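This speed might feel slow compared to the Apple M1 example. However, the two numbers aren't a like-for-like comparison because they come from different hardware running different models (Llama2 7B versus Llama3 8B). It's also important to note that Q4 quantization compresses the model by storing weights in roughly 4 bits instead of 16, which makes it smaller and faster. Think of it as compressing a library's books to fit more into the same space. This compression sacrifices some accuracy, but it allows for faster performance.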

NVIDIA 3070_8GB and Llama3 70B

Unfortunately, this performance analysis doesn't contain any numbers for Llama3 70B running on the 3070_8GB. We're missing data points for both Q4 quantization and F16 (16-bit half precision, the unquantized baseline).

This lack of data could highlight several possibilities:

- Llama3 70B might be too demanding for the 3070_8GB: its weights alone are far larger than 8GB, even at 4-bit precision.

- The performance hasn't been measured yet.

It's crucial to remember that these are just possibilities. Without benchmark data there's no definitive throughput figure, so we'll continue to seek out performance benchmarks for this specific combination.
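You can check the "too demanding" possibility with back-of-the-envelope arithmetic: weight memory is roughly parameters × bits per weight ÷ 8 bytes. The sketch below is an assumption-laden estimate, not a benchmark. It uses nominal parameter counts (8B and 70B) and treats 4 bits as an optimistic stand-in for Q4 formats, which also store per-block scales. It also counts only the weights, ignoring the KV cache and runtime overhead.

```python
GIB = 1024 ** 3


def weight_memory_gib(num_params: float, bits_per_weight: float) -> float:
    """GiB needed just to store the weights.

    Excludes the KV cache, activations, and runtime overhead,
    so real memory use is higher than this.
    """
    return num_params * bits_per_weight / 8 / GIB


VRAM_GIB = 8  # NVIDIA 3070_8GB

# Nominal parameter counts; 4 bits is an optimistic stand-in for Q4 formats,
# which also store per-block scales and end up somewhat larger.
for model, params in [("Llama3 8B", 8e9), ("Llama3 70B", 70e9)]:
    for fmt, bits in [("F16", 16), ("Q4", 4)]:
        need = weight_memory_gib(params, bits)
        verdict = "fits" if need < VRAM_GIB else "exceeds"
        print(f"{model:10s} {fmt:3s} {need:6.1f} GiB of weights -> {verdict} {VRAM_GIB} GiB VRAM")
```

Even in the optimistic 4-bit case, Llama3 70B needs roughly four times the card's memory for its weights alone (about 32.6 GiB versus 8 GiB). Llama3 8B at 4 bits, by contrast, fits with room to spare. This doesn't tell us the token speed, but it suggests why a fully on-GPU benchmark for this pairing would be hard to produce.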

Practical Recommendations: Use Cases and Workarounds

Use Cases for NVIDIA 3070_8GB and Llama3 8B

The 3070_8GB with Llama3 8B might be an excellent choice for tasks requiring moderate computational power. You could use this combo for:

- Text generation: You can write creative content, poems, or even code snippets.

- Translation: You can translate documents or short pieces of text between languages.

- Summarization: You can condense lengthy articles or documents into concise summaries.

Workarounds for Limited Performance

If you find the performance of the 3070_8GB to be lacking for Llama3 70B or other large models, consider these options:

- Use a GPU with more memory: cards like the NVIDIA RTX 4090 (24GB) or AMD Radeon RX 7900 XT (20GB) offer substantially more VRAM and compute than the 3070_8GB.

- Utilize cloud computing: Platforms like Google Colab or Amazon SageMaker provide access to powerful cloud resources for running large models.

- Optimize for efficiency: Explore techniques like quantization and model compression to reduce the computational footprint of your models.

FAQ: Common Questions

Q: What is quantization?

A: Quantization is a technique for compressing large models by storing each weight with fewer bits, for example 4 bits instead of 16. It's like converting a high-resolution image to a lower-resolution version: while you lose some detail, the file becomes smaller and faster to load. Quantization reduces the size and memory footprint of LLM weights, which usually speeds up inference as well.
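Here is a minimal sketch of the idea. It assumes a simple symmetric "absmax" scheme that keeps one float scale per block of weights and rounds each weight to a 4-bit integer. This illustrates 4-bit quantization in general, not the exact Q4_K_M layout llama.cpp uses, which adds more structure. The function names and the sample weights are hypothetical.

```python
def quantize_block_q4(weights: list[float]) -> tuple[float, list[int]]:
    """Symmetric 4-bit quantization of one block of weights.

    Each weight becomes an integer in [-8, 7]; one float scale is kept
    per block so the values can be approximately restored.
    """
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    q = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, q


def dequantize_block_q4(scale: float, q: list[int]) -> list[float]:
    """Approximately reconstruct the original weights."""
    return [scale * v for v in q]


block = [0.12, -0.50, 0.33, 0.07, -0.21, 0.44, -0.05, 0.29]
scale, q = quantize_block_q4(block)
restored = dequantize_block_q4(scale, q)
max_err = max(abs(a - b) for a, b in zip(block, restored))
print(q)                            # [2, -7, 5, 1, -3, 6, -1, 4]
print(f"max error: {max_err:.3f}")  # max error: 0.027
```

Stored this way, the eight weights need 8 × 4 = 32 bits plus one scale, compared with 8 × 16 = 128 bits at F16. The trade-off is the small rounding error printed at the end.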

Q: How do I choose the right GPU for an LLM?

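A: Consider the size of the model, the precision you plan to run it at, and the desired performance level. Smaller models like Llama2 7B can run on a 3070 8GB when quantized to around 4 bits. Larger models like Llama3 70B need far more memory: even at 4 bits their weights take roughly 35GB, more than a single RTX 4090's 24GB. They therefore typically call for multiple GPUs, offloading part of the model to system RAM, or cloud hardware.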
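To make the sizing question concrete, this sketch inverts the usual calculation: given a card's VRAM, it finds the largest average bits per weight a model can use and still fit. It holds back 1 GiB for the KV cache and runtime overhead. That reserve is an arbitrary, hypothetical choice, and the parameter counts are nominal. The VRAM sizes are the cards' advertised capacities.

```python
GIB = 1024 ** 3


def max_bits_per_weight(vram_gib: float, num_params: float, reserve_gib: float = 1.0) -> float:
    """Largest average bits/weight whose weights fit in VRAM.

    reserve_gib is memory held back for the KV cache and runtime overhead
    (an assumed value, not a measured one).
    """
    usable_bytes = max(vram_gib - reserve_gib, 0) * GIB
    return usable_bytes * 8 / num_params


gpus = {"RTX 3070 (8 GB)": 8, "RX 7900 XT (20 GB)": 20, "RTX 4090 (24 GB)": 24}
for gpu, vram in gpus.items():
    for model, params in [("Llama2 7B", 7e9), ("Llama3 70B", 70e9)]:
        bits = max_bits_per_weight(vram, params)
        print(f"{gpu:20s} {model:10s} <= {bits:5.2f} bits/weight")
```

Under these assumptions, the 3070 leaves room for about 8.6 bits per weight on a 7B model, so 4-bit fits comfortably. Even the 24 GB card, however, allows fewer than 3 bits per weight for a 70B model.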

Q: Are there any other factors affecting LLM performance?

A: Yes, several factors can influence LLM performance, including:

- CPU: The CPU plays a role in pre-processing and post-processing tasks. A more powerful CPU can improve performance, but the impact is generally less significant compared to the GPU.

- RAM and VRAM: The amount of memory available limits how large a model you can load and how much context the LLM can retain, because the attention key/value cache grows with every token in the context. Large models require ample memory.

- Software: The software used for LLM inference and its optimization settings can make a significant difference in performance.
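You can make the memory-versus-context point concrete with the attention key/value (KV) cache, which grows linearly with the number of tokens in context. The sketch below assumes the published Llama 3 architecture values: 32 layers for 8B and 80 for 70B, with 8 key/value heads of dimension 128 in both. It also assumes a 16-bit cache; runtimes that quantize the cache would need less.

```python
def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int,
                   context_tokens: int, bytes_per_value: int = 2) -> int:
    """Bytes of keys + values stored for every layer and every context token."""
    per_token = 2 * n_layers * n_kv_heads * head_dim * bytes_per_value  # 2 = K and V
    return per_token * context_tokens


MIB = 1024 ** 2
# Assumed architecture values, taken from the published Llama 3 configs.
llama3_8b = dict(n_layers=32, n_kv_heads=8, head_dim=128)
llama3_70b = dict(n_layers=80, n_kv_heads=8, head_dim=128)

for name, cfg in [("Llama3 8B", llama3_8b), ("Llama3 70B", llama3_70b)]:
    size = kv_cache_bytes(**cfg, context_tokens=8192)
    print(f"{name}: {size / MIB:.0f} MiB of KV cache for 8192 tokens (F16)")
```

At 8192 tokens, this works out to 1 GiB for Llama3 8B and 2.5 GiB for Llama3 70B, on top of the weights.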

Q: What is the future of LLMs on local devices?

A: LLMs are rapidly evolving, and the future holds exciting possibilities. As GPUs become more powerful, and software optimization improves, running larger and more complex LLMs locally will become increasingly feasible. We can expect to see even greater breakthroughs in both performance and accessibility.

Keywords

NVIDIA 3070_8GB, Llama3 70B, Llama3 8B, Q4 Quantization, F16 Quantization, Token Generation Speed, LLM Performance, Local LLMs, GPU, Model Size, Model Compression, Deep Dive, Performance Analysis, Practical Recommendations, Use Cases, Workarounds, Tokenization, GPU Benchmarking, LLMs, Large Language Models, AI, Machine Learning, Natural Language Processing