Is NVIDIA 3070 8GB a Good Investment for AI Startups?

Introduction: Navigating the World of LLMs and GPUs

The world of AI is buzzing with excitement, fueled by the rapid evolution of Large Language Models (LLMs). These powerful AI systems are revolutionizing industries, offering unprecedented capabilities in text generation, translation, code writing, and more. But tapping into this potential requires the right tools, and for developers and startups looking to run LLMs locally, a powerful GPU is crucial.

This article dives into the world of the NVIDIA GeForce RTX 3070 8GB, exploring its suitability for AI startups working with LLMs. We'll examine its performance with popular LLMs like Llama 3, considering different quantization levels and model sizes.

NVIDIA 3070 8GB: A Solid Choice for AI Startups

The NVIDIA GeForce RTX 3070 8GB, released in 2020, is a powerful graphics card capable of handling demanding tasks like gaming and video editing. But its capabilities extend beyond the realm of entertainment, making it a viable option for AI development.

Processing Power: A Detailed Look at Token Speed

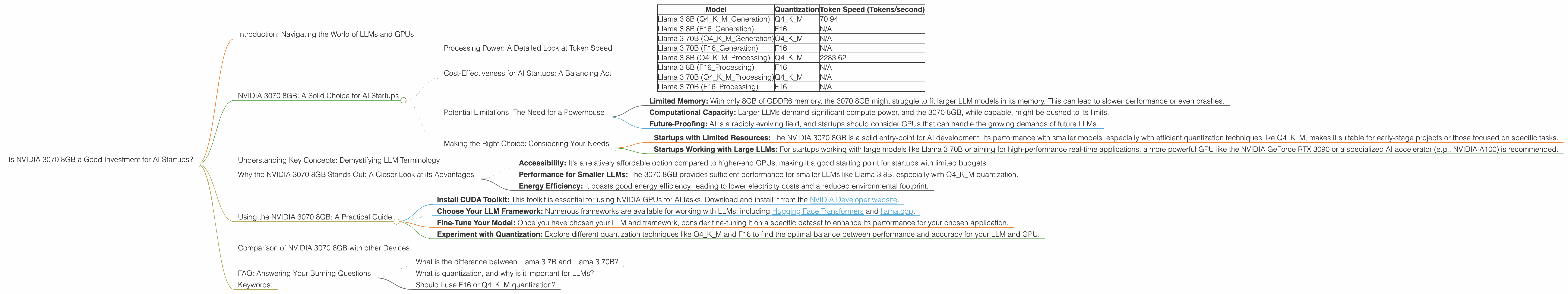

For AI startups, the real measure of a GPU's worth is how quickly it runs LLM inference, measured as token speed in tokens per second. Inference has two stages: processing (evaluating the input prompt) and generation (producing new tokens one at a time). Here's a breakdown of the NVIDIA 3070 8GB's performance with different Llama 3 models, based on data from the llama.cpp and GPU-Benchmarks-on-LLM-Inference repositories:

| Model | Quantization | Stage | Token Speed (tokens/second) |
|---|---|---|---|
| Llama 3 8B | Q4KM | Generation | 70.94 |
| Llama 3 8B | F16 | Generation | N/A |
| Llama 3 70B | Q4KM | Generation | N/A |
| Llama 3 70B | F16 | Generation | N/A |
| Llama 3 8B | Q4KM | Processing | 2283.62 |
| Llama 3 8B | F16 | Processing | N/A |
| Llama 3 70B | Q4KM | Processing | N/A |
| Llama 3 70B | F16 | Processing | N/A |
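A quick worked example shows how the two measured rates combine for a single request. This is a rough sketch that assumes both rates stay constant for the whole request (real runs vary with context length and batching, and model-loading time is ignored); the function name and the request sizes are illustrative.

```python
# Measured rates for Llama 3 8B at Q4KM on the RTX 3070 8GB (from the table).
PROMPT_TOKENS_PER_SEC = 2283.62  # "Processing" stage
GEN_TOKENS_PER_SEC = 70.94       # "Generation" stage


def estimate_latency_s(prompt_tokens: int, output_tokens: int,
                       prompt_tps: float = PROMPT_TOKENS_PER_SEC,
                       gen_tps: float = GEN_TOKENS_PER_SEC) -> float:
    """Rough time for one request: read the whole prompt, then generate.

    Assumes constant throughput in both stages; ignores model loading,
    context-length slowdown, and batching.
    """
    if prompt_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    return prompt_tokens / prompt_tps + output_tokens / gen_tps


if __name__ == "__main__":
    t = estimate_latency_s(prompt_tokens=1000, output_tokens=250)
    print(f"~{t:.1f} s for a 1000-token prompt and a 250-token reply")
```

For a 1000-token prompt and a 250-token reply this works out to roughly 4 seconds, of which under half a second is prompt processing: for interactive use, the generation rate is the number that matters most.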

Key Takeaways:

- Llama 3 8B: The NVIDIA 3070 8GB runs Llama 3 8B well at Q4KM, generating about 70.94 tokens/second and processing prompts at about 2283.62 tokens/second. Prompt processing is much faster because the whole prompt can be evaluated in parallel, while generation produces one token at a time.

- Llama 3 70B: There is no benchmark data for the NVIDIA 3070 8GB with Llama 3 70B, and the limiting factor is memory: even at 4 bits per weight, 70 billion parameters take about 35 GB, more than four times the card's 8 GB, so the model cannot be loaded entirely onto the GPU.

- Quantization: The table contains no F16 results at all. At 16 bits (2 bytes) per weight, Llama 3 8B needs about 16 GB for its weights alone, twice the card's memory, so only the Q4KM version fits. Q4KM shrinks the weights by more than a factor of three, which is what makes the 8B model usable on this GPU in the first place.
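The memory arithmetic behind these takeaways can be sketched in a few lines. This assumes nominal parameter counts (8 and 70 billion), nominal bit widths (real Q4KM files are somewhat larger because some tensors keep more bits), and a hypothetical 1 GiB reserve for the KV cache and runtime; the helper names are illustrative, not part of any library.

```python
GIB = 1024 ** 3
VRAM_GIB = 8.0  # RTX 3070 8GB


def weight_gib(n_params: float, bits_per_weight: float) -> float:
    """Size of the model weights alone, in GiB (ignores file metadata)."""
    return n_params * bits_per_weight / 8 / GIB


def fits_in_vram(n_params: float, bits_per_weight: float,
                 vram_gib: float = VRAM_GIB, reserve_gib: float = 1.0) -> bool:
    """True if the weights fit after reserving room for KV cache and runtime.

    reserve_gib is a placeholder assumption; the real overhead grows with
    context length and depends on the inference framework.
    """
    return weight_gib(n_params, bits_per_weight) + reserve_gib <= vram_gib


if __name__ == "__main__":
    models = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}  # nominal sizes
    formats = {"Q4 (nominal 4 bits)": 4, "F16": 16}
    for name, n in models.items():
        for fmt, bits in formats.items():
            size = weight_gib(n, bits)
            print(f"{name:12} {fmt:20} {size:6.1f} GiB  "
                  f"fits: {fits_in_vram(n, bits)}")
```

Under these assumptions the 8B model at 4 bits needs about 3.7 GiB and fits, while the same model at F16 needs about 14.9 GiB and does not; neither 70B variant comes close.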

Think of it like this: Imagine your GPU as a highway. Smaller models like Llama 3 8B are like compact cars that zoom along smoothly. Larger models like Llama 3 70B are like massive trucks that require a wider highway and more power to move efficiently.

Cost-Effectiveness for AI Startups: A Balancing Act

The NVIDIA 3070 8GB offers a good balance between performance and price. It's a cost-effective option for startups looking to build and experiment with smaller LLMs. Its performance with the Llama 3 8B model, particularly for Q4KM quantization, makes it suitable for projects with a focus on efficiency and speed.

However, for startups working with larger models or demanding applications like real-time text generation, the 3070 8GB might not be powerful enough.

Potential Limitations: The Need for a Powerhouse

The limitations of the NVIDIA 3070 8GB are evident with larger LLMs, highlighting the need for more powerful GPUs. Here are some reasons why startups might consider a higher-end GPU:

- Limited Memory: With only 8GB of GDDR6 memory, the 3070 8GB cannot hold the weights of larger LLMs. A model that does not fit either fails to load with an out-of-memory error or has to offload some of its layers to system RAM, which makes inference much slower.

- Computational Capacity: Larger LLMs demand significant compute power, and the 3070 8GB, while capable, might be pushed to its limits.

- Future-Proofing: AI is a rapidly evolving field, and startups should consider GPUs that can handle the growing demands of future LLMs.
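When the weights do not fit, tools such as llama.cpp can keep only some layers on the GPU (its `--n-gpu-layers` option) and run the rest on the CPU, which is the slow path described above. The sketch below estimates how many layers fit, assuming equally sized layers, about 32.6 GiB of weights for Llama 3 70B (70 billion weights at a nominal 4 bits each), its 80 transformer layers, and a hypothetical 1 GiB reserve; `max_gpu_layers` is an illustrative helper, not a llama.cpp function.

```python
import math


def max_gpu_layers(model_gib: float, n_layers: int,
                   vram_gib: float = 8.0, reserve_gib: float = 1.0) -> int:
    """Estimate how many transformer layers fit on the GPU.

    Assumes every layer is the same size and ignores embedding and output
    tensors, so treat the result as a starting point for tuning only.
    """
    if n_layers <= 0 or model_gib <= 0:
        raise ValueError("model size and layer count must be positive")
    per_layer_gib = model_gib / n_layers
    budget_gib = max(vram_gib - reserve_gib, 0.0)
    return min(n_layers, math.floor(budget_gib / per_layer_gib))


if __name__ == "__main__":
    # Llama 3 70B at a nominal 4 bits: only a fraction of layers fit.
    print("70B @ 4-bit:", max_gpu_layers(32.6, 80), "of 80 layers")
    # Llama 3 8B at a nominal 4 bits (~3.7 GiB, 32 layers): all layers fit.
    print("8B @ 4-bit:", max_gpu_layers(3.7, 32), "of 32 layers")
```

With these assumptions only about 17 of the 80 layers fit in 8 GB, so most of the 70B model would run on the CPU, which explains why the 3070 8GB is a poor fit for that model size.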

Making the Right Choice: Considering Your Needs

Here's a simple guide to help you choose the right GPU for your AI project:

- Startups with Limited Resources: The NVIDIA 3070 8GB is a solid entry point for AI development. Its performance with smaller models, especially with efficient quantization techniques like Q4KM, makes it suitable for early-stage projects or those focused on specific tasks.

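- Startups Working with Large LLMs: For startups working with large models like Llama 3 70B or aiming for high-performance real-time applications, a GPU with much more memory is recommended, such as the NVIDIA GeForce RTX 3090 (24 GB) or a specialized AI accelerator like the NVIDIA A100 (40 GB or 80 GB). Even at 4 bits, Llama 3 70B needs about 35 GB for its weights, so it still exceeds a single 24 GB card and calls for multiple GPUs, partial CPU offloading, or an 80 GB-class accelerator.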

Remember: Choosing the right GPU is a delicate balancing act. It's essential to consider your project's specific needs, available budget, and long-term goals for AI development.

Understanding Key Concepts: Demystifying LLM Terminology

Before diving deeper into the benefits of the NVIDIA 3070 8GB, let's quickly define some key terms used throughout the article:

LLMs: Large Language Models (LLMs) are powerful AI systems trained on massive datasets of text and code. They can understand and generate human-like text, translate languages, write code, and perform various other tasks.

Quantization: This technique is akin to compressing a file to save space. In the context of LLMs, quantization reduces the precision of the model's weights, making it smaller and faster.

Q4KM: llama.cpp's Q4_K_M quantization format, which stores most weights at about 4 bits of precision while keeping a few tensors at higher precision. This technique is particularly effective for smaller GPUs, as it significantly reduces memory usage and boosts performance.

F16: Half-precision (16-bit floating-point) weights. F16 is usually treated as the unquantized baseline: it preserves accuracy best but needs 2 bytes per weight, more than three times the memory of Q4KM.
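To make the precision trade-off concrete, here is a simplified sketch that round-trips a few sample weights through F16 and through a basic symmetric 4-bit scheme (one scale per block, one signed 4-bit code per weight). The 4-bit part is a generic illustration, not llama.cpp's actual Q4_K_M layout, and the weight values are made up.

```python
import struct


def to_f16(x: float) -> float:
    """Round-trip a value through IEEE 754 half precision (what F16 stores)."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def quantize_4bit(block: list[float]) -> tuple[float, list[int]]:
    """Simplified symmetric 4-bit quantization of one block of weights.

    Stores one float scale per block plus a signed 4-bit code (-8..7) per
    weight. NOT llama.cpp's Q4_K_M format; only the basic idea.
    """
    amax = max(abs(w) for w in block)
    scale = amax / 7 if amax > 0 else 1.0
    codes = [max(-8, min(7, round(w / scale))) for w in block]
    return scale, codes


def dequantize_4bit(scale: float, codes: list[int]) -> list[float]:
    """Recover approximate weights from the scale and 4-bit codes."""
    return [scale * c for c in codes]


if __name__ == "__main__":
    weights = [0.12, -0.53, 0.07, 0.91, -0.88, 0.33, -0.02, 0.45]
    scale, codes = quantize_4bit(weights)
    q4 = dequantize_4bit(scale, codes)
    f16 = [to_f16(w) for w in weights]
    err_q4 = max(abs(a - b) for a, b in zip(weights, q4))
    err_f16 = max(abs(a - b) for a, b in zip(weights, f16))
    print("4-bit codes:", codes)
    print(f"max error  4-bit: {err_q4:.4f}   F16: {err_f16:.6f}")
```

In this sample the worst 4-bit error is about 0.06, while F16 stays below 0.001: 4-bit storage trades some accuracy for a much smaller footprint. Real formats use larger blocks than the eight weights shown here, which keeps the per-block scale overhead small.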

Why the NVIDIA 3070 8GB Stands Out: A Closer Look at its Advantages

The NVIDIA 3070 8GB offers several advantages for AI startups working with LLMs:

- Accessibility: It's a relatively affordable option compared to higher-end GPUs, making it a good starting point for startups with limited budgets.

- Performance for Smaller LLMs: The 3070 8GB provides sufficient performance for smaller LLMs like Llama 3 8B, especially with Q4KM quantization.

- Energy Efficiency: Its 220 W rated board power is modest next to higher-end cards such as the RTX 3090 (350 W), which helps keep electricity costs and the environmental footprint down.

Think of this: The NVIDIA 3070 8GB is like a reliable, compact car. It might not be the fastest on the road, but it's cost-effective, efficient, and perfectly capable of handling most daily tasks.

Using the NVIDIA 3070 8GB: A Practical Guide

Here's a step-by-step guide to get started with your NVIDIA 3070 8GB and explore the world of LLMs:

1. Install CUDA Toolkit: This toolkit, together with an up-to-date NVIDIA driver, lets AI software use NVIDIA GPUs. Download and install it from the NVIDIA Developer website.

2. Choose Your LLM Framework: Numerous frameworks are available for working with LLMs, including Hugging Face Transformers and llama.cpp.

3. Fine-Tune Your Model: Once you have chosen your LLM and framework, consider fine-tuning it on a specific dataset to improve its performance for your application. Keep memory in mind: full fine-tuning of an 8B model will not fit in 8 GB, since its F16 weights alone take about 16 GB.

4. Experiment with Quantization: Try different quantization formats like Q4KM and F16 to find the best balance between performance and accuracy for your LLM and GPU.
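After installing the driver and toolkit, a quick sanity check is to confirm that the card and its 8 GB are visible. A minimal sketch, assuming `nvidia-smi` (installed with the NVIDIA driver) is on the PATH; `parse_gpu_csv` and `query_gpus` are hypothetical helpers, and the sample line in the docstring is illustrative.

```python
import shutil
import subprocess


def parse_gpu_csv(text: str) -> list[tuple[str, int]]:
    """Parse output of
    `nvidia-smi --query-gpu=name,memory.total --format=csv,noheader,nounits`.

    Each line looks like "NVIDIA GeForce RTX 3070, 8192" (memory in MiB).
    """
    gpus = []
    for line in text.strip().splitlines():
        # Split on the last comma so GPU names containing commas still parse.
        name, mem = (part.strip() for part in line.rsplit(",", 1))
        gpus.append((name, int(mem)))
    return gpus


def query_gpus() -> list[tuple[str, int]]:
    """List visible NVIDIA GPUs; returns [] if nvidia-smi is not installed."""
    if shutil.which("nvidia-smi") is None:
        return []
    out = subprocess.run(
        ["nvidia-smi", "--query-gpu=name,memory.total",
         "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    ).stdout
    return parse_gpu_csv(out)


if __name__ == "__main__":
    gpus = query_gpus()
    if not gpus:
        print("No NVIDIA GPU found: check the driver installation.")
    for name, mib in gpus:
        print(f"{name}: {mib / 1024:.1f} GiB")
```

Keeping the parsing separate from the subprocess call makes the logic testable on machines without a GPU.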

Don't be afraid to experiment! The world of LLMs is constantly evolving, and new tools and techniques are emerging all the time.

Comparison of NVIDIA 3070 8GB with Other Devices

While the NVIDIA 3070 8GB is a good option for many AI startups, it's important to compare it with other devices to make an informed decision.

No benchmark data for other devices was available for this article, so it focuses on the NVIDIA 3070 8GB alone.

FAQ: Answering Your Burning Questions

What is the difference between Llama 3 8B and Llama 3 70B?

The difference lies in the size and complexity of the models. Llama 3 8B is a smaller model with 8 billion parameters, while Llama 3 70B is a much larger model with 70 billion parameters. Larger models like Llama 3 70B offer more capabilities but require significantly more computational resources (e.g., a powerful GPU and more memory).

What is quantization, and why is it important for LLMs?

Think of quantization like compressing a file. It reduces the precision of the model's weights, making it smaller and faster. Quantization techniques like Q4KM are particularly useful for smaller GPUs as they significantly reduce memory usage and boost performance.

Should I use F16 or Q4KM quantization?

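The choice between F16 and Q4KM depends on your specific needs. Q4KM offers a significant performance boost and a much smaller memory footprint but can slightly reduce accuracy. F16 keeps accuracy closest to the original model but needs about 2 bytes per parameter; for Llama 3 8B that is about 16 GB, which rules it out on an 8 GB card like the 3070, leaving Q4KM as the practical choice there.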

Keywords:

NVIDIA 3070 8GB, AI Startups, Large Language Models (LLMs), Llama 3, GPU, Quantization, Q4KM, F16, Token Speed, Performance, Cost-Effectiveness, AI Development, LLM Inference, GPU Benchmarks