Is It Worth Buying Apple M3 Pro for Machine Learning Projects?

Introduction

The world of Machine Learning (ML) is exploding with new and exciting possibilities. You may have heard of Large Language Models (LLMs), which have taken the world by storm. But what if you want to run these powerful models locally on your own machine? That's where the Apple M3 Pro comes in.

This powerful chip, built for performance, is a popular choice for developers and data scientists. But how does the M3 Pro handle the demanding task of running LLMs? Today, we'll dive deep into the performance of the Apple M3 Pro with various LLMs, especially the popular Llama 2 models. Whether you're a seasoned developer or just starting your journey into the world of LLMs, this article will help you understand if the M3 Pro is the right chip for your machine learning projects.

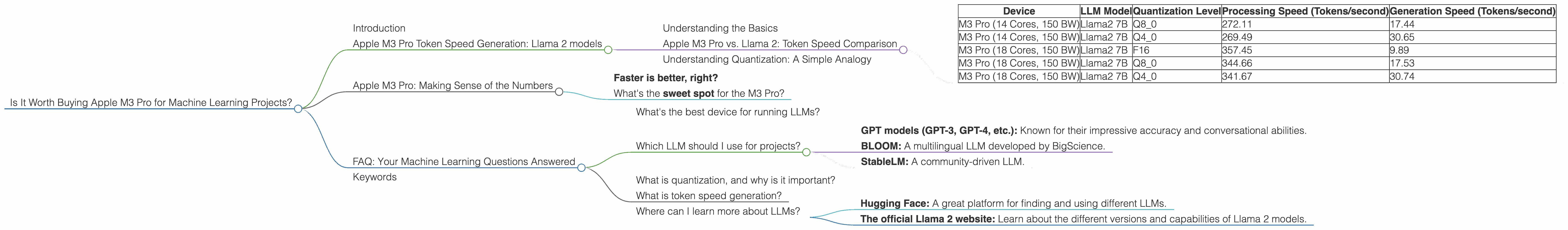

Apple M3 Pro Token Speed Generation: Llama 2 models

Understanding the Basics

Imagine LLMs as incredibly smart robots. They can understand and generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But how do they do it? They process information in the form of "tokens" which are basically small pieces of words or punctuation marks.

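The "token speed generation" refers to how many of these tokens your machine can handle per second (tokens/second). Two figures matter: processing speed, which is how fast the model reads your prompt, and generation speed, which is how fast it writes new text. The higher the numbers, the sooner your LLM starts answering and the faster the answer appears.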
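To make tokens per second more concrete, here is a minimal sketch that converts a generation speed into waiting time. The 500-token answer length and the two speeds are made-up illustration values, not measurements.

```python
def seconds_to_generate(num_tokens: int, tokens_per_second: float) -> float:
    """Return the seconds needed to generate num_tokens at a steady rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


# Hypothetical 500-token answer at two illustrative generation speeds.
for speed in (10.0, 30.0):
    wait = seconds_to_generate(500, speed)
    print(f"{speed:.1f} tokens/s -> {wait:.1f} s")
```

Tripling the generation speed cuts the wait to a third, which is why the generation column matters most for interactive use.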

Apple M3 Pro vs. Llama 2: Token Speed Comparison

Let's get down to the nitty-gritty. We'll focus on the performance of the Apple M3 Pro with Llama 2 7B at three precision levels:

- F16: 16-bit floating point, the highest precision of the three and the largest model file. It's like using a super detailed blueprint for the LLM, but generation is the slowest.

- Q8_0: 8-bit quantization, like using a less detailed blueprint. It generates faster but might compromise some accuracy.

- Q4_0: 4-bit quantization, the lowest precision here. It's the fastest for generation but can sacrifice even more accuracy.

We'll compare tokens per second for both prompt processing and text generation:

| Device | LLM Model | Quantization Level | Processing Speed (tokens/second) | Generation Speed (tokens/second) |
|---|---|---|---|---|
| M3 Pro (14 GPU cores, 150 GB/s) | Llama 2 7B | Q8_0 | 272.11 | 17.44 |
| M3 Pro (14 GPU cores, 150 GB/s) | Llama 2 7B | Q4_0 | 269.49 | 30.65 |
| M3 Pro (18 GPU cores, 150 GB/s) | Llama 2 7B | F16 | 357.45 | 9.89 |
| M3 Pro (18 GPU cores, 150 GB/s) | Llama 2 7B | Q8_0 | 344.66 | 17.53 |
| M3 Pro (18 GPU cores, 150 GB/s) | Llama 2 7B | Q4_0 | 341.67 | 30.74 |

Things to consider:

- Data limitations: The table has no F16 result for the 14-core M3 Pro, so any F16 comparison below uses the 18-core chip only.

- Bandwidth (BW): Memory bandwidth in GB/s, meaning how fast the chip can read the model's weights from its unified memory. Both M3 Pro variants offer 150 GB/s, and this matters most for generation speed.

- Cores: The number of GPU cores (14 or 18). More cores offer more compute, which mainly helps prompt processing.
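A common rule of thumb is that generating one token requires reading roughly all of the model's weights from memory once, so bandwidth divided by model size gives a rough ceiling on generation speed. The sketch below applies that rule under stated assumptions: a parameter count of about 6.74 billion for Llama 2 7B, and bits-per-weight figures (16, 8.5, 4.5) that match llama.cpp-style block formats, which the names Q8_0 and Q4_0 suggest but the benchmark source does not confirm.

```python
# Assumed bits stored per weight. The Q8_0 and Q4_0 figures include a
# per-block scale, as in llama.cpp-style formats with 32 weights per block.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

PARAMS_LLAMA2_7B = 6.74e9  # assumed parameter count for Llama 2 7B
BANDWIDTH_GB_S = 150.0     # memory bandwidth listed in the table


def model_size_gb(params: float, quant: str) -> float:
    """Approximate size of the weights in GB (1 GB = 1e9 bytes)."""
    return params * BITS_PER_WEIGHT[quant] / 8 / 1e9


def generation_ceiling(params: float, quant: str, bandwidth_gb_s: float) -> float:
    """Rough upper bound on tokens/second if every token reads all weights once."""
    return bandwidth_gb_s / model_size_gb(params, quant)


for quant in BITS_PER_WEIGHT:
    size = model_size_gb(PARAMS_LLAMA2_7B, quant)
    ceiling = generation_ceiling(PARAMS_LLAMA2_7B, quant, BANDWIDTH_GB_S)
    print(f"{quant}: ~{size:.2f} GB, ceiling ~{ceiling:.1f} tokens/s")
```

Under these assumptions, the measured generation speeds in the table stay below the estimated ceilings and follow the same ordering, which is what you would expect if generation is limited by memory bandwidth rather than by core count.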

Key Takeaways:

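- Q8_0 vs. Q4_0:

  - Processing speed is nearly identical. Q8_0 is ahead by about 1% on both chips (272.11 vs. 269.49 tokens/second with 14 cores, 344.66 vs. 341.67 with 18 cores).

  - Q4_0 is about 75% faster for generation on both chips (30.65 vs. 17.44 tokens/second with 14 cores, 30.74 vs. 17.53 with 18 cores).

- F16 (measured only on the 18-core M3 Pro) is the fastest for processing at 357.45 tokens/second but the slowest for generation at 9.89 tokens/second, roughly a third of the Q4_0 speed.

- The M3 Pro with 18 cores delivers about 27% higher processing speed than the 14-core version. Generation speed is almost the same on both, which is consistent with the two chips sharing the same 150 GB/s bandwidth.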
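The comparisons in the list above can be checked directly against the table. The sketch below copies the table's figures into a dictionary and prints the percentage differences; the `RESULTS` layout and the `percent_gain` helper are names chosen for this illustration.

```python
# (GPU cores, quantization) -> (processing, generation) in tokens/second,
# copied from the benchmark table.
RESULTS = {
    (14, "Q8_0"): (272.11, 17.44),
    (14, "Q4_0"): (269.49, 30.65),
    (18, "F16"): (357.45, 9.89),
    (18, "Q8_0"): (344.66, 17.53),
    (18, "Q4_0"): (341.67, 30.74),
}


def percent_gain(new: float, old: float) -> float:
    """Percentage change going from old to new."""
    return (new / old - 1) * 100


# Q4_0 relative to Q8_0 on each chip.
for cores in (14, 18):
    p8, g8 = RESULTS[(cores, "Q8_0")]
    p4, g4 = RESULTS[(cores, "Q4_0")]
    print(f"{cores} cores, Q4_0 vs Q8_0: processing {percent_gain(p4, p8):+.1f}%, "
          f"generation {percent_gain(g4, g8):+.1f}%")

# 18 cores relative to 14 cores for each quantization measured on both chips.
for quant in ("Q8_0", "Q4_0"):
    p14, g14 = RESULTS[(14, quant)]
    p18, g18 = RESULTS[(18, quant)]
    print(f"{quant}, 18 vs 14 cores: processing {percent_gain(p18, p14):+.1f}%, "
          f"generation {percent_gain(g18, g14):+.1f}%")
```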

Understanding Quantization: A Simple Analogy

Think of it like this: Imagine trying to understand a new language. You could learn every single word (F16), which is very accurate but takes a long time. You could learn a smaller vocabulary and stretch each word to cover several meanings (Q8_0), which is faster. Or, you could learn only the most basic words and phrases (Q4_0) and use them to communicate, which is the fastest but least accurate.

Quantization is like choosing different levels of "language learning" for your LLM. Lower precision (Q8_0, Q4_0) makes the model smaller and faster at generating text but might compromise accuracy, while higher precision (F16) is more accurate but slower.
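Beyond the analogy, the precision loss can be seen in a few lines of code. The sketch below is a simplified block-wise symmetric quantizer: it stores one scale per block plus small integers, then measures the rounding error after converting back. It illustrates the general idea only and is not the exact Q8_0 or Q4_0 format; the 32 random "weights" are made-up test data.

```python
import random


def quantize_block(values, bits):
    """Symmetric quantization of one block: a float scale plus small integers."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    largest = max(abs(v) for v in values)
    scale = largest / qmax if largest > 0 else 1.0
    quants = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate floats from the stored scale and integers."""
    return [q * scale for q in quants]


def mean_abs_error(values, bits):
    """Average absolute difference between original and restored values."""
    scale, quants = quantize_block(values, bits)
    restored = dequantize_block(scale, quants)
    return sum(abs(a - b) for a, b in zip(values, restored)) / len(values)


random.seed(0)
block = [random.gauss(0.0, 1.0) for _ in range(32)]  # made-up "weights"

for bits in (8, 4):
    print(f"{bits}-bit mean absolute error: {mean_abs_error(block, bits):.4f}")
```

With fewer bits there are fewer integer levels to round to, so the 4-bit version loses more detail than the 8-bit one. That is the accuracy cost paid for the smaller, faster model.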

Apple M3 Pro: Making Sense of the Numbers

Faster is better, right?

Not always. In the world of LLMs, there's a balancing act between speed and accuracy. This is where quantization comes in. If speed is your top priority, you might choose a lower precision level like Q8_0 or Q4_0. But if you need the most accuracy, F16 will be your best bet.

What's the sweet spot for the M3 Pro?

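It depends on your needs. F16 on the 18-core M3 Pro offers the highest processing speed (357.45 tokens/second) but the slowest generation (9.89 tokens/second). If you prioritize quick generation, Q4_0 is the better option on either chip at roughly 30 tokens/second, and the 18-core model adds about 27% more processing speed on top.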

FAQ: Your Machine Learning Questions Answered

What's the best device for running LLMs?

The "best" device depends on your budget, the LLM you're using, and your specific needs. The Apple M3 Pro is a great option for local LLM inference, especially if you need fast processing speeds.

Which LLM should I use for projects?

That depends on your application! Llama 2 is a popular choice, but other great LLMs are available, including:

- GPT models (GPT-3, GPT-4, etc.): Known for their impressive accuracy and conversational abilities, but available through OpenAI's API rather than as models you can run locally.

- BLOOM: A multilingual LLM developed by BigScience.

- StableLM: An open-source LLM family from Stability AI.

What is quantization, and why is it important?

Quantization is a technique that reduces the size of LLMs by storing their weights with fewer bits, which makes them faster and more memory-efficient, especially for local inference. It's like using smaller building blocks to build something, but you might lose some detail.

What is token speed generation?

Token speed generation is the number of tokens (small pieces of words or punctuation marks) that a device can process per second. The higher the number, the faster the LLM will generate text, answer questions, and complete tasks.

Where can I learn more about LLMs?

There are lots of resources online! Here are a few:

- Hugging Face: A great platform for finding and using different LLMs.

- The official Llama 2 website: Learn about the different versions and capabilities of Llama 2 models.

Keywords

Apple M3 Pro, Machine Learning, LLMs, Large Language Models, Llama 2, Token Speed Generation, Quantization, F16, Q8_0, Q4_0, Processing Speed, Generation Speed, Bandwidth, GPU Cores, Performance, GPU Benchmarks, Local Inference, Inference, Model Size, Accuracy, Project, Development, Data Science, AI, Artificial Intelligence, Hugging Face, GPT, BLOOM, StableLM.