Is It Worth Buying Apple M3 Max for Machine Learning Projects?

Introduction

The world of large language models (LLMs) is booming, with new models and applications popping up every day. But running these models locally can be a challenge, requiring powerful hardware and some understanding of the technical details. For those looking to explore LLMs on their own machines, Apple's M3 Max chip is a tempting option. With its integrated GPU and fast unified memory, it promises to deliver the speed and efficiency needed to run demanding models locally.

But is the M3 Max worth the investment for machine learning projects? In this article, we'll dive deep into the performance of the Apple M3 Max with various LLM models, comparing it to other popular options and analyzing the impact of different quantization levels.

Apple M3 Max: A Powerhouse for LLMs?

The M3 Max is one of Apple's highest-end chips, designed to deliver exceptional performance for demanding tasks like video editing, 3D rendering, and, yes, you guessed it, machine learning! In its top configuration it offers 40 GPU cores, 400 GB/s of memory bandwidth, and up to 128 GB of unified memory shared between the CPU and GPU. This translates to impressive speeds when processing and generating text, making it a potential game-changer for local LLM enthusiasts.

Performance Breakdown: Apple M3 Max vs. LLM Models

We'll be looking at the performance of the Apple M3 Max using the "llama.cpp" framework, a popular choice for running LLMs locally.

To get a clearer picture, we'll analyze the performance of the M3 Max with several LLM models. For each one we'll look at two numbers: processing speed (how quickly the prompt is read) and generation speed (how quickly new tokens are produced). We'll also explore how both change with different quantization levels.

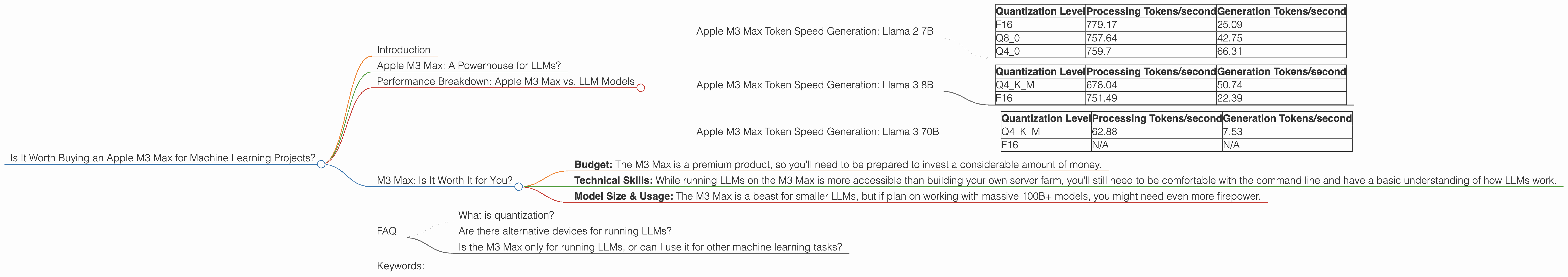

Apple M3 Max Token Speed Generation: Llama 2 7B

Let's start with the Llama 2 7B model. This model is a popular choice for local experimentation due to its size and impressive performance.

The Apple M3 Max delivers impressive speeds with the Llama 2 7B model, both in terms of processing and generation:

| Quantization Level | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|
| F16 | 779.17 | 25.09 |
| Q8_0 | 757.64 | 42.75 |
| Q4_0 | 759.7 | 66.31 |

As you can see, quantized formats like Q8_0 and Q4_0 give the M3 Max a significant boost in generation speed, while processing speed stays roughly the same. Think of quantization as a way to compress the model to make it less resource-hungry. It's like packing a smaller backpack for a hike: you carry less weight and move faster, but you leave some gear behind. The same goes for LLM quantization: you sacrifice a bit of accuracy to gain significant speed.
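To put a number on that boost, here is a minimal sketch that divides each row of the Llama 2 7B table by the F16 row. The figures are copied from the table; the function and variable names are made up for this illustration.

```python
# Throughput figures copied from the Llama 2 7B table above (tokens/second).
results = {
    "F16":  {"processing": 779.17, "generation": 25.09},
    "Q8_0": {"processing": 757.64, "generation": 42.75},
    "Q4_0": {"processing": 759.70, "generation": 66.31},
}

def speedup_vs_f16(results, metric):
    """Return each format's throughput divided by the F16 throughput."""
    baseline = results["F16"][metric]
    return {name: round(row[metric] / baseline, 2) for name, row in results.items()}

generation_speedup = speedup_vs_f16(results, "generation")
processing_speedup = speedup_vs_f16(results, "processing")

for name in results:
    print(f"{name}: generation x{generation_speedup[name]:.2f}, "
          f"processing x{processing_speedup[name]:.2f}")
```

On these numbers, generation runs roughly 1.7 times faster with Q8_0 and roughly 2.6 times faster with Q4_0, while processing speed stays within a few percent of F16.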

Apple M3 Max Token Speed Generation: Llama 3 8B

Now, let's move to the Llama 3 8B model. This model is a bit larger and more sophisticated than its 7B counterpart, offering even more exciting possibilities for local exploration.

Here's how the M3 Max handles the Llama 3 8B:

| Quantization Level | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|
| Q4_K_M | 678.04 | 50.74 |
| F16 | 751.49 | 22.39 |

While the numbers are slightly lower than the Llama 2 7B, the M3 Max still delivers impressive speeds with this larger model. The Q4_K_M quantization level is especially interesting: it more than doubles generation speed compared to F16 (50.74 vs. 22.39 tokens/second), typically at a small cost in accuracy.
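Tokens per second can feel abstract, so the sketch below turns the Llama 3 8B figures into a rough waiting time for a single response. The workload (a 1,000-token prompt and a 300-token answer) is a hypothetical example, and the estimate ignores model load time and other overhead.

```python
def estimate_response_seconds(prompt_tokens, output_tokens,
                              processing_tps, generation_tps):
    """Rough wall-clock estimate: prompt ingestion time plus generation time."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps

# Llama 3 8B figures from the table above (tokens/second).
LLAMA3_8B = {
    "Q4_K_M": {"processing": 678.04, "generation": 50.74},
    "F16":    {"processing": 751.49, "generation": 22.39},
}

# Hypothetical workload: a 1,000-token prompt and a 300-token answer.
for name, tps in LLAMA3_8B.items():
    seconds = estimate_response_seconds(1000, 300,
                                        tps["processing"], tps["generation"])
    print(f"{name}: about {seconds:.1f} s")
```

Under these assumptions the quantized model answers in roughly 7 seconds and the F16 model in roughly 15, with almost all of the difference coming from generation rather than prompt processing.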

Apple M3 Max Token Speed Generation: Llama 3 70B

Now, things get interesting with the Llama 3 70B model. This is a behemoth of an LLM, pushing the boundaries of local experimentation.

| Quantization Level | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|
| Q4_K_M | 62.88 | 7.53 |
| F16 | N/A | N/A |

Unfortunately, we don't have data for Llama 3 70B in F16 (the unquantized 16-bit format), but the numbers for the Q4_K_M level are still impressive, considering the massive size of this model. Think of this like running a marathon with a heavy backpack: it's definitely doable, but you'll need more stamina and time. The M3 Max proves it can handle the load, just at a slower pace.
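The benchmark data doesn't say why the F16 row is empty, but a back-of-the-envelope memory estimate suggests a likely reason. The sketch below multiplies the parameter count by the bits stored per weight. The 70 billion parameter count is a nominal figure, and the 4.85 bits per weight used for Q4_K_M is an assumed average, not an exact value.

```python
# Approximate storage cost per weight, in bits. F16 is exactly 16 bits.
# The Q4_K_M figure is an assumed average (the format mixes block types).
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}

def weight_size_gb(n_params, bits_per_weight):
    """Size of the weights alone in GB (10^9 bytes).

    Ignores the KV cache, activations, and runtime overhead.
    """
    return n_params * bits_per_weight / 8 / 1e9

N_PARAMS_70B = 70e9  # nominal parameter count, assumed for illustration

for fmt, bits in BITS_PER_WEIGHT.items():
    print(f"{fmt}: ~{weight_size_gb(N_PARAMS_70B, bits):.0f} GB")
```

By this estimate the F16 weights alone come to about 140 GB, which is more than the unified memory available on an M3 Max, while the Q4_K_M weights need roughly 42 GB.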

M3 Max: Is It Worth It for You?

The M3 Max is a powerful machine, no doubt about it. But does it make sense for everyone diving into the LLM world? Let's break down the key things to consider:

- Budget: The M3 Max is a premium product, so you'll need to be prepared to invest a considerable amount of money.

- Technical Skills: While running LLMs on the M3 Max is more accessible than building your own server farm, you'll still need to be comfortable with the command line and have a basic understanding of how LLMs work.

- Model Size & Usage: The M3 Max is a beast for smaller LLMs, but if you plan on working with massive 100B+ models, you might need even more firepower.

FAQ

What is quantization?

Imagine you have a map of your city. You can have a super detailed map with every single street and building or a simplified version with only the main roads and landmarks. Quantization works similarly with LLMs. It reduces the size of the model by simplifying the numbers within it. This allows for faster processing but can slightly reduce the model's accuracy.
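For the curious, here is a toy sketch of the idea: a group of weights is stored as small integers plus one shared scale factor, then multiplied back out when needed. This is a simplified illustration with made-up weight values, not the exact scheme llama.cpp uses for formats like Q8_0 or Q4_0.

```python
def quantize_block(values, bits=8):
    """Map floats to signed integers sharing one scale (simplified, symmetric)."""
    qmax = 2 ** (bits - 1) - 1              # 127 for 8 bits, 7 for 4 bits
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    quants = [round(v / scale) for v in values]
    return scale, quants

def dequantize_block(scale, quants):
    """Recover approximate floats from the stored integers and scale."""
    return [scale * q for q in quants]

# Made-up example weights, for illustration only.
weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.44, 0.05]

for bits in (8, 4):
    scale, quants = quantize_block(weights, bits)
    restored = dequantize_block(scale, quants)
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"{bits}-bit: worst-case error {worst:.4f}")
```

Fewer bits mean a coarser grid of representable values, so the 4-bit version takes less space but reconstructs the weights less precisely than the 8-bit version. That is the accuracy-for-size trade-off described above.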

Are there alternative devices for running LLMs?

Yes! Several options are available, including powerful GPUs from Nvidia and AMD, as well as specialized chips designed for machine learning, like Google's Tensor Processing Units (TPUs).

Is the M3 Max only for running LLMs, or can I use it for other machine learning tasks?

It's not limited to LLMs. The M3 Max is a versatile machine that can handle various machine learning tasks, including training and deploying models, working with datasets, and experimenting with new algorithms.

Keywords:

Apple M3 Max, Machine Learning, Large Language Models (LLMs), Llama 2, Llama 3, Token Speed, GPU, Quantization, Performance Benchmark, Local LLMs