Is It Worth Buying Apple M3 for Machine Learning Projects?

Introduction

The world of artificial intelligence, particularly large language models (LLMs), is booming. These models can generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way, making them incredibly useful for a vast range of applications. Running these models locally on your machine offers benefits like faster response times, data privacy, and the ability to experiment without relying on cloud services.

If you're a developer or a tech enthusiast interested in exploring local LLMs, you might be considering purchasing new hardware to power your projects. The Apple M3 chip, known for its powerful performance, is a tempting choice. But is it really worth the investment for machine learning? Let's dive into the data and find out!

Apple M3 for Llama 2: Speed and Efficiency

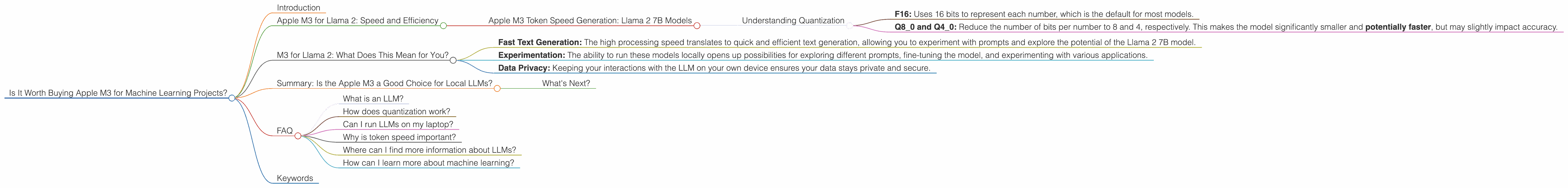

This analysis focuses on the Apple M3 chip's performance with the Llama 2 family of LLMs. We'll explore the speed at which M3 can generate tokens for different Llama 2 models and quantization settings.

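Apple M3 Token Generation Speed: Llama 2 7B Models

The table below shows the speed in tokens per second (tokens/s) for various configurations of Llama 2 7B models on the Apple M3 chip. "Processing" rows measure how quickly the model reads the prompt, and "Generation" rows measure how quickly it produces new output tokens.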

| Configuration | Speed (tokens/s) |
|---|---|
| Llama 2 7B F16 Processing | N/A |
| Llama 2 7B F16 Generation | N/A |
| Llama 2 7B Q8_0 Processing (quantization) | 187.52 |
| Llama 2 7B Q8_0 Generation (quantization) | 12.27 |
| Llama 2 7B Q4_0 Processing (quantization) | 186.75 |
| Llama 2 7B Q4_0 Generation (quantization) | 21.34 |
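To make the table more concrete, here is a minimal sketch that turns those speeds into a rough response time. The function name `estimate_latency_seconds` and the example prompt and reply lengths are assumptions chosen for illustration, and the estimate ignores model load time and any other overhead.

```python
# Speeds copied from the table above (tokens/s, Llama 2 7B on Apple M3).
SPEEDS = {
    "Q8_0": {"processing": 187.52, "generation": 12.27},
    "Q4_0": {"processing": 186.75, "generation": 21.34},
}


def estimate_latency_seconds(quant: str, prompt_tokens: int, output_tokens: int) -> float:
    """Rough total time: read the whole prompt, then generate the reply."""
    speed = SPEEDS[quant]
    prompt_time = prompt_tokens / speed["processing"]
    generation_time = output_tokens / speed["generation"]
    return prompt_time + generation_time


if __name__ == "__main__":
    # Hypothetical workload: a 500-token prompt and a 200-token reply.
    for quant in SPEEDS:
        seconds = estimate_latency_seconds(quant, prompt_tokens=500, output_tokens=200)
        print(f"{quant}: about {seconds:.1f} s")
```

For a workload like this, generation dominates the total time, which is why the difference between Q8_0 and Q4_0 generation speed matters more than the nearly identical processing speeds.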

Understanding Quantization

Quantization is like compressing a file to make it smaller. It reduces the size of the model (think of it as the blueprint for generating text) by storing each of its numbers with fewer bits, without compromising too much on accuracy.

- F16: Uses 16 bits to represent each number, which is the default for most models.

- Q8_0 and Q4_0: Reduce the number of bits per number to roughly 8 and 4, respectively. This makes the model significantly smaller and potentially faster, but may slightly impact accuracy.
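The effect of the bit widths listed above on model size can be sketched with simple arithmetic. This is a back-of-the-envelope estimate that assumes a nominal 7 billion parameters and exactly 16, 8, and 4 bits per weight; real model files tend to be somewhat larger because quantized formats also store scaling metadata. The function name `approx_weight_size_gb` is hypothetical.

```python
def approx_weight_size_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate storage for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


N_PARAMS = 7e9  # assumption: nominal parameter count for a "7B" model

if __name__ == "__main__":
    for name, bits in [("F16", 16), ("8-bit", 8), ("4-bit", 4)]:
        size = approx_weight_size_gb(N_PARAMS, bits)
        print(f"{name}: about {size:.1f} GB")
```

Halving the bits per weight halves the memory needed for the weights, which is the main reason quantized models fit on machines where the F16 version would not.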

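Key Observation: On the Llama 2 7B model, the Apple M3 processes prompts at roughly 187 tokens/s with both Q8_0 and Q4_0 quantization. Generation is slower, at 12.27 tokens/s for Q8_0 and 21.34 tokens/s for Q4_0, so Q4_0 generates about 1.7 times faster. No F16 figures are available, so the quantized results cannot be compared against full precision here.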

M3 for Llama 2: What Does This Mean for You?

Think of token speed like the speed of a typing contest. The higher the tokens/s, the faster your LLM can "type" out its response. Now, let's connect these numbers to real-world scenarios:

Fast Text Generation: At 21.34 tokens/s with Q4_0, the model writes its reply at a pace that should feel responsive for interactive use, and the prompt processing speed of roughly 187 tokens/s keeps the wait before the first word short. That makes it practical to experiment with prompts and explore the potential of the Llama 2 7B model.

Experimentation: The ability to run these models locally opens up possibilities for exploring different prompts, fine-tuning the model, and experimenting with various applications.

Data Privacy: Running the LLM on your own device means your prompts and responses never have to leave your machine.

Important Note: While the M3 performs well with Llama 2 7B, data for other sizes and models (e.g., Llama 2 13B, Llama 2 70B) is currently unavailable.

Summary: Is the Apple M3 a Good Choice for Local LLMs?

Buying an Apple M3 machine to run the Llama 2 7B model, especially with Q8_0 or Q4_0 quantization, seems like a worthwhile investment. Prompt processing reaches about 187 tokens/s and generation reaches up to 21.34 tokens/s with Q4_0, which is well suited to local inference and experimentation with this model.

However, the lack of data for other LLMs on the M3 chip makes it difficult to say definitively whether it's the best choice for every LLM enthusiast.

What's Next?

Stay tuned for updates and benchmarks as more data becomes available. Testing different hardware configurations is crucial for finding the sweet spot between price, performance, and the specific LLM model you aim to run locally.

FAQ

What is an LLM?

An LLM, or Large Language Model, is a type of artificial intelligence that excels at understanding and generating human-like text. It learns patterns from a huge amount of text data, and can then use this knowledge to write stories, translate languages, write code, and much more.

How does quantization work?

Think of it like compressing a file. It's a way to make the model smaller without completely losing its abilities. The smaller model uses less memory and often runs faster, but the trade-off is a slight potential decrease in accuracy.
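As a toy illustration of that trade-off, the sketch below rounds a handful of made-up weights to 8-bit and 4-bit integers with one shared scale factor and then restores them. It is a simplified scheme for intuition only, not the exact method behind the Q8_0 and Q4_0 formats, and the function names and sample values are hypothetical.

```python
def quantize_block(values, bits=8):
    """Symmetric quantization: map floats to signed integers with one shared scale."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    quantized = [round(v / scale) for v in values]
    return quantized, scale


def dequantize_block(quantized, scale):
    """Recover approximate floats from the integers and the shared scale."""
    return [q * scale for q in quantized]


def max_roundtrip_error(values, bits):
    """Largest absolute difference between original and restored values."""
    quantized, scale = quantize_block(values, bits)
    restored = dequantize_block(quantized, scale)
    return max(abs(a - b) for a, b in zip(values, restored))


if __name__ == "__main__":
    weights = [0.12, -0.5, 0.33, 0.9, -0.07]  # made-up example values
    for bits in (8, 4):
        print(f"{bits}-bit max error: {max_roundtrip_error(weights, bits):.4f}")
```

With fewer bits there are fewer integer levels to round to, so the restored values drift further from the originals. That rounding error is the source of the accuracy loss.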

Can I run LLMs on my laptop?

Depending on your laptop's hardware, you can run some LLMs locally. The benchmarks above show that a machine with an Apple M3 chip can run the quantized Llama 2 7B model at usable speeds.

Why is token speed important?

The token speed of an LLM is like its typing speed. The higher the token speed, the faster it can read your prompt and write out its response.

Where can I find more information about LLMs?

A great resource is the website Hugging Face, which hosts a large collection of pre-trained LLMs. The documentation for the Llama 2 model provides detailed information about its features.

How can I learn more about machine learning?

Google AI and Stanford University's Machine Learning Course offer excellent introductory resources for machine learning.

Keywords

Apple M3, Llama 2, LLM, Large Language Model, Token Speed, Quantization, Machine Learning, Local Inference, Token/s, GPU, AI, Deep Learning