Is It Worth Buying Apple M2 Pro for Machine Learning Projects?

Introduction

The world of machine learning is exploding, and with it, the demand for powerful hardware. Large language models (LLMs), like the popular Llama 2 series, are revolutionizing how we interact with computers, and their computational requirements are insatiable. Apple's M2 Pro chip, with its impressive performance and energy efficiency, has emerged as a potential game-changer for LLM enthusiasts. But is it truly worth the investment for your machine learning projects? Let's dive into the details to find out!

Apple M2 Pro: A Glimpse into the Beast

Before we dive into the performance numbers, let's get a grasp on the M2 Pro chip itself. It's a powerful beast, offering a substantial upgrade over its predecessor, the M1 Pro. This chip is aimed at demanding tasks like video editing, 3D rendering, and yes, even machine learning. It comes with a 10- or 12-core CPU and a 16- or 19-core GPU, plus 200 GB/s of unified memory bandwidth, and the benchmarks below cover both GPU configurations.

Benchmarking the M2 Pro for LLMs

To truly understand the potential of the M2 Pro for machine learning, we need to dig into some hard numbers. We will focus on the Llama 2 model, a popular choice for developers, and analyze its performance on the M2 Pro chip using various quantization levels (think of it as compressing the model to make it run faster). We will be looking at two main aspects:

- Processing: How fast the chip can process the input text (think of this as the reading speed of the LLM).

- Generation: How fast the chip can generate the output text (think of this as the writing speed of the LLM).

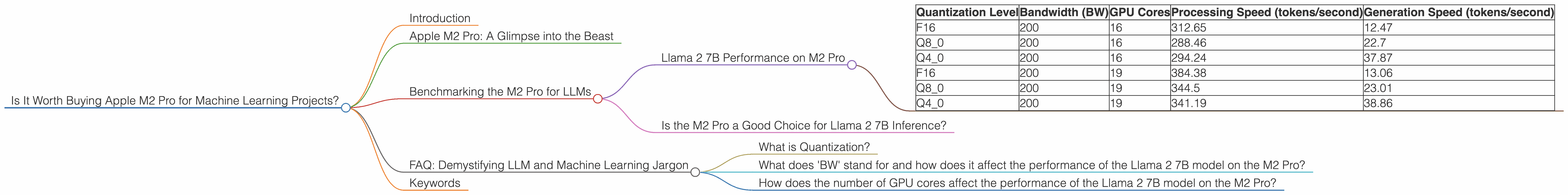

Llama 2 7B Performance on M2 Pro

Let's start with the Llama 2 7B model, a smaller and more manageable LLM that's perfect for experimenting and developing smaller projects. Here's what we found:

| Quantization Level | Memory Bandwidth (BW, GB/s) | GPU Cores | Processing Speed (tokens/second) | Generation Speed (tokens/second) |
|---|---|---|---|---|
| F16 | 200 | 16 | 312.65 | 12.47 |
| Q8_0 | 200 | 16 | 288.46 | 22.7 |
| Q4_0 | 200 | 16 | 294.24 | 37.87 |
| F16 | 200 | 19 | 384.38 | 13.06 |
| Q8_0 | 200 | 19 | 344.5 | 23.01 |
| Q4_0 | 200 | 19 | 341.19 | 38.86 |

Note: These results cover the 16-core and 19-core GPU configurations of the M2 Pro; we don't have data for any other configuration.

Key Observations from the Llama 2 7B Data:

- Processing: The M2 Pro processes prompts for the Llama 2 7B model at roughly 290 to 310 tokens/second on the 16-core GPU and 340 to 385 tokens/second on the 19-core GPU, depending on the quantization level. This determines how quickly the model gets through your input text before it starts responding.

- Generation: Generation speed ranges from about 12 to 13 tokens/second with F16 up to about 38 to 39 tokens/second with Q4_0. At Q4_0 speeds, real-time interactions and responses become feasible.

- Quantization Impact: The choice of quantization level affects generation speed far more than processing speed. Q4_0 generates about three times faster than F16 (37.87 vs. 12.47 tokens/second on 16 GPU cores), while F16 leads in processing by only about 6 to 13 percent over the quantized variants. Quantization can also cost some accuracy, so the right level depends on your project's specific needs.
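To make the quantization trade-off concrete, here is a small sketch that expresses each row of the table relative to F16 on the same GPU configuration. The numbers are copied from the table; the dictionary layout and the function name `relative_to_f16` are just illustrative choices.

```python
# Speeds from the table: (quantization, GPU cores) -> (processing, generation), tokens/second
RESULTS = {
    ("F16", 16): (312.65, 12.47),
    ("Q8_0", 16): (288.46, 22.70),
    ("Q4_0", 16): (294.24, 37.87),
    ("F16", 19): (384.38, 13.06),
    ("Q8_0", 19): (344.50, 23.01),
    ("Q4_0", 19): (341.19, 38.86),
}


def relative_to_f16(cores):
    """Return {quant: (processing_ratio, generation_ratio)} versus F16 on the same core count."""
    base_processing, base_generation = RESULTS[("F16", cores)]
    ratios = {}
    for (quant, row_cores), (processing, generation) in RESULTS.items():
        if row_cores == cores:
            ratios[quant] = (processing / base_processing, generation / base_generation)
    return ratios


if __name__ == "__main__":
    for cores in (16, 19):
        for quant, (pp_ratio, tg_ratio) in relative_to_f16(cores).items():
            print(f"{cores} cores, {quant}: processing x{pp_ratio:.2f}, generation x{tg_ratio:.2f}")
```

A ratio above 1 means faster than F16, below 1 means slower. The generation ratios are the ones that move a lot; the processing ratios stay close to 1.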

Analogy: Think of it like a fast reader who is also a fast writer. The M2 Pro with the Llama 2 7B model "reads" your text (processing) and "writes" its reply (generation) pretty fast!

Is the M2 Pro a Good Choice for Llama 2 7B Inference?

Based on the numbers, the M2 Pro does a commendable job with the Llama 2 7B model. With Q4_0 quantization it generates close to 40 tokens/second, which makes it a solid option for developing and deploying smaller LLM applications.

However, remember that the optimal choice depends on your specific needs. If you prioritize speed and efficiency for text generation tasks, then Q4_0 quantization might be your best bet. On the other hand, if your workload is dominated by processing long inputs, or you want to avoid any accuracy loss from quantization, F16 might be the way to go, though its processing lead is modest and its generation speed is about a third of Q4_0's.
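One way to weigh processing speed against generation speed is a rough end-to-end time estimate for a typical request. The sketch below assumes a simple model (time to read the prompt plus time to write the reply) and ignores model loading and other overheads. The 1000-token prompt and 200-token reply are made-up example values, and `estimate_seconds` is a hypothetical helper, not part of any benchmark tool.

```python
def estimate_seconds(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Rough request time: process the prompt, then generate the reply."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# 19-core GPU rows from the table: quantization -> (processing, generation), tokens/second
SPEEDS_19_CORES = {
    "F16": (384.38, 13.06),
    "Q8_0": (344.50, 23.01),
    "Q4_0": (341.19, 38.86),
}

if __name__ == "__main__":
    prompt_tokens, output_tokens = 1000, 200  # assumed example workload
    for quant, (processing_tps, generation_tps) in SPEEDS_19_CORES.items():
        seconds = estimate_seconds(prompt_tokens, output_tokens, processing_tps, generation_tps)
        print(f"{quant}: about {seconds:.1f} s")
```

For this example workload the estimate is roughly 18 seconds with F16 and roughly 8 seconds with Q4_0, because generation dominates the total. Only with very long prompts and very short replies does F16's processing lead start to matter.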

FAQ: Demystifying LLM and Machine Learning Jargon

What is Quantization?

Quantization stores the model's weights with fewer bits each (for example, 8 or 4 bits instead of 16), which reduces file size and memory use and usually speeds up text generation. The catch is that the lower precision can sometimes affect the accuracy of the model.

Imagine you have a huge library of books, but you want to move them to a smaller space. Quantization is like using a special machine to shrink the books, making them smaller but potentially losing some details in the process!
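To see the "shrinking" in action, here is a minimal sketch of 8-bit block quantization in plain Python. It is a simplified illustration in the spirit of schemes like Q8_0 (one shared scale per small block of weights), not the actual implementation used by any inference library. The block size of 32 and the function names are assumptions made for the example.

```python
import random

BLOCK_SIZE = 32  # assumption: weights are quantized in blocks of 32 values


def quantize_block(values):
    """Map floats to integers in [-127, 127] plus one shared scale for the block."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quants = [round(v / scale) for v in values]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate floats from the integers and the shared scale."""
    return [q * scale for q in quants]


if __name__ == "__main__":
    random.seed(0)
    weights = [random.uniform(-1, 1) for _ in range(BLOCK_SIZE)]  # stand-in for real weights
    scale, quants = quantize_block(weights)
    restored = dequantize_block(scale, quants)
    max_error = max(abs(w - r) for w, r in zip(weights, restored))
    print(f"scale={scale:.5f}, max rounding error={max_error:.5f}")
```

Each weight now fits in one byte instead of two, and the rounding error per weight is at most half the scale. That small error is the "lost detail" from the book analogy.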

What does 'BW' stand for and how does it affect the performance of the Llama 2 7B model on the M2 Pro?

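'BW' stands for Bandwidth, here the chip's memory bandwidth in GB/s: how quickly data can be moved between memory and the processing cores. Every row in the table uses the M2 Pro's 200 GB/s. Bandwidth matters most for generation, because producing each new token means reading the model's weights from memory, so a smaller (more heavily quantized) model generates faster on the same bandwidth.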

Think of it like a superhighway versus a narrow road. Higher bandwidth is like a superhighway, allowing for faster movement of data, while lower bandwidth would be like taking a narrow road, slowing down the process.
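A back-of-the-envelope calculation shows why bandwidth matters so much for generation. If every generated token requires reading all of the model's weights from memory once, then bandwidth divided by model size gives a rough upper limit on tokens per second. The sketch below rests on several assumptions: about 7 billion weights, and approximate storage costs of 16, 8.5, and 4.5 bits per weight for F16, Q8_0, and Q4_0 (the quantized figures include per-block scale overhead). Treat it as an estimate, not a measurement.

```python
BANDWIDTH_GB_PER_S = 200  # the "BW" column from the table
PARAMETER_COUNT = 7e9     # assumption: roughly 7 billion weights in Llama 2 7B

# Assumption: approximate bits stored per weight, including per-block scales
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

# Measured generation speeds on the 19-core GPU, from the table (tokens/second)
MEASURED_GENERATION = {"F16": 13.06, "Q8_0": 23.01, "Q4_0": 38.86}


def generation_ceiling(quant):
    """Rough upper bound on tokens/second if each token reads every weight once."""
    model_bytes = PARAMETER_COUNT * BITS_PER_WEIGHT[quant] / 8
    return BANDWIDTH_GB_PER_S * 1e9 / model_bytes


if __name__ == "__main__":
    for quant, measured in MEASURED_GENERATION.items():
        ceiling = generation_ceiling(quant)
        print(f"{quant}: ceiling about {ceiling:.1f} tokens/s, measured {measured} tokens/s")
```

Under these assumptions the measured generation speeds sit below the estimated ceiling in every row, and they rise as the model gets smaller, which is what you would expect if generation is limited mainly by memory bandwidth.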

How does the number of GPU cores affect the performance of the Llama 2 7B model on the M2 Pro?

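GPU cores serve as the processing powerhouses of the chip. More GPU cores mean more simultaneous calculations, which helps most with the compute-heavy prompt processing step: going from 16 to 19 cores raises F16 processing from 312.65 to 384.38 tokens/second. Generation benefits much less (12.47 to 13.06 tokens/second for F16), because it is limited mainly by memory bandwidth rather than by raw compute.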

Imagine a team of workers trying to complete a complex task. More workers mean more tasks can be completed at the same time, leading to a faster overall completion time!
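To check how well the "more workers" picture holds up, the following sketch compares the 19-core and 16-core rows of the table against the increase in core count (19/16, or about 1.19x). The data comes from the table; the function name `core_scaling` is an illustrative choice.

```python
CORE_RATIO = 19 / 16  # about 1.19x more GPU cores

# From the table: quantization -> (processing, generation), tokens/second
SPEEDS_16_CORES = {"F16": (312.65, 12.47), "Q8_0": (288.46, 22.70), "Q4_0": (294.24, 37.87)}
SPEEDS_19_CORES = {"F16": (384.38, 13.06), "Q8_0": (344.50, 23.01), "Q4_0": (341.19, 38.86)}


def core_scaling(quant):
    """Return (processing_speedup, generation_speedup) going from 16 to 19 GPU cores."""
    processing_16, generation_16 = SPEEDS_16_CORES[quant]
    processing_19, generation_19 = SPEEDS_19_CORES[quant]
    return processing_19 / processing_16, generation_19 / generation_16


if __name__ == "__main__":
    print(f"core count ratio: x{CORE_RATIO:.2f}")
    for quant in SPEEDS_16_CORES:
        processing_speedup, generation_speedup = core_scaling(quant)
        print(f"{quant}: processing x{processing_speedup:.2f}, generation x{generation_speedup:.2f}")
```

Processing speed grows roughly in line with the core count, while generation improves by less than 5 percent in every row. Extra workers help with the reading, but the writing is held back by how fast data arrives.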

Keywords

Machine learning, Large language models, Apple M2 Pro, Llama 2, Llama 2 7B, LLMs, inference, quantization, GPU cores, bandwidth, token speed, processing speed, generation speed, performance, efficiency, developers