Is It Worth Buying Apple M2 Max for Machine Learning Projects?

Introduction

The world of Large Language Models (LLMs) is exploding, and everyone wants to get their hands on the latest and greatest. But with so many different models and devices available, it can be tough to know where to start.

One popular option for running LLMs locally is the Apple M2 Max chip, a powerhouse of a processor. But is it worth the investment for machine learning projects? Let's dive into the numbers and find out!

Understanding LLMs and Their Needs

Imagine a super-smart AI that can understand and generate human-like text. That's the essence of an LLM, and they're becoming increasingly popular for tasks like writing, translating, and summarizing information.

But these AI brains are hungry for resources, especially processing power and memory. That's where the Apple M2 Max comes in, a powerful chip designed to handle complex calculations and crunch huge amounts of data.

Apple M2 Max: A Powerful Chip for LLMs?

In its top configuration, the Apple M2 Max has 38 GPU cores, which handle the highly parallel arithmetic that LLM inference relies on.

To put it in perspective, think of it like this: while a CPU is like a road with a few fast lanes, a GPU is like a freeway with many lanes, capable of handling a large number of simple tasks simultaneously.

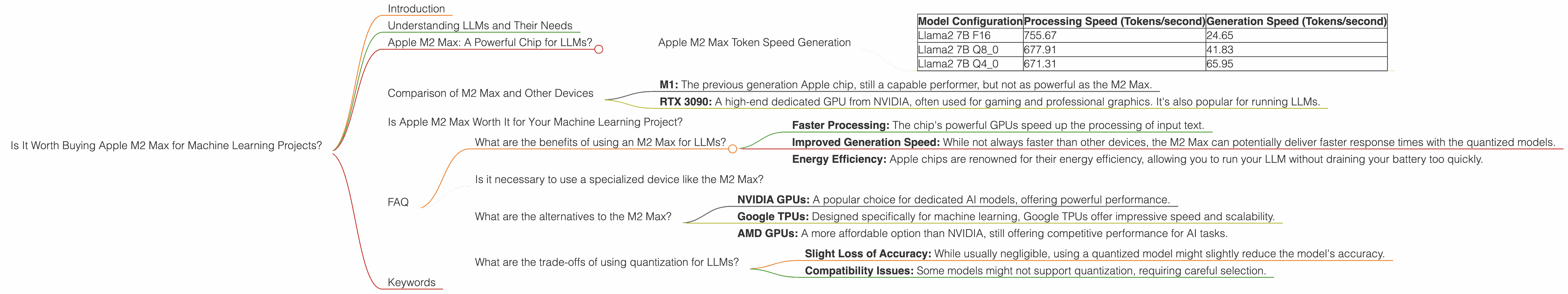

Apple M2 Max Token Generation Speed

We'll focus on Llama 2 7B, a popular open-source LLM, and measure its performance on the M2 Max using different quantization levels:

F16: The "full" model, using 16-bit precision. This generally offers the highest accuracy but requires more resources.

Q8_0: A "quantized" model, using 8-bit precision. Think of it like a compressed version of the model, using less storage and memory – but potentially sacrificing a tiny bit of accuracy.

Q4_0: An even more compressed version using only 4 bits. It needs the least memory and tends to generate fastest, at a somewhat larger potential cost in accuracy.
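To make "less storage and memory" concrete, here is a rough sketch of the weight size at each level. It assumes about 6.74 billion parameters for Llama 2 7B and llama.cpp-style block formats, where Q8_0 and Q4_0 store one 16-bit scale per block of 32 weights. Neither figure comes from the benchmark data, and real model files differ slightly because not every tensor is stored the same way.

```python
# Approximate weight memory for Llama 2 7B at each quantization level.
# Assumptions (not from the benchmark data): ~6.74e9 parameters, and
# llama.cpp-style block formats with one 16-bit scale per 32 weights.
PARAMS = 6.74e9

BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q8_0": 8.0 + 16 / 32,  # 8.5 bits per weight including the scale
    "Q4_0": 4.0 + 16 / 32,  # 4.5 bits per weight including the scale
}


def weight_gib(bits_per_weight: float, params: float = PARAMS) -> float:
    """Return the approximate size of the weights in GiB."""
    return params * bits_per_weight / 8 / 2**30


sizes = {name: weight_gib(bpw) for name, bpw in BITS_PER_WEIGHT.items()}

for name, gib in sizes.items():
    print(f"{name}: ~{gib:.1f} GiB")
```

Under these assumptions, F16 needs roughly 12.6 GiB for the weights alone, Q8_0 about 6.7 GiB, and Q4_0 about 3.5 GiB.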

| Model Configuration | Processing Speed (tokens/second) | Generation Speed (tokens/second) |
|---|---|---|
| Llama 2 7B F16 | 755.67 | 24.65 |
| Llama 2 7B Q8_0 | 677.91 | 41.83 |
| Llama 2 7B Q4_0 | 671.31 | 65.95 |

Observations:

Processing is fast: Across all quantization levels, the M2 Max processes prompts at more than 670 tokens/second, so the input text for the LLM is ingested quickly. Note that F16 is actually the fastest here (755.67 tokens/second); the quantized models process prompts roughly 10 to 11% slower.

Generation speed depends heavily on quantization: F16 generates only about 25 tokens/second, while Q8_0 reaches about 42 and Q4_0 nearly 66 tokens/second. This is a big deal, as it means you get results faster, at the cost of the accuracy trade-offs that come with the more compressed models.
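To quantify these observations, the short sketch below expresses each configuration's throughput as a multiple of the F16 result. The numbers are copied from the table; the function and variable names are only illustrative.

```python
# Throughput figures copied from the table above (tokens/second).
RESULTS = {
    "F16": {"processing": 755.67, "generation": 24.65},
    "Q8_0": {"processing": 677.91, "generation": 41.83},
    "Q4_0": {"processing": 671.31, "generation": 65.95},
}


def relative_to_f16(results: dict) -> dict:
    """Return each configuration's throughput as a multiple of F16."""
    base = results["F16"]
    return {
        name: {phase: speed / base[phase] for phase, speed in row.items()}
        for name, row in results.items()
    }


ratios = relative_to_f16(RESULTS)

for name, row in ratios.items():
    print(
        f"{name}: processing x{row['processing']:.2f}, "
        f"generation x{row['generation']:.2f}"
    )
```

On these numbers, Q4_0 generates roughly 2.7 times as fast as F16 and Q8_0 roughly 1.7 times as fast, while prompt processing drops by about 10 to 11%.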

But why is generation so much slower than processing? The prompt is known in full up front, so its tokens can be processed in parallel, which keeps the GPU cores busy. Generation is sequential: each new token depends on the ones before it, and producing it means reading the model's weights from memory again. Generation therefore tends to be limited by memory bandwidth rather than raw compute, which is also why the smaller quantized models generate faster.
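One way to sanity-check the generation numbers is a simple bandwidth ceiling: if every generated token requires streaming all of the weights from memory once, then memory bandwidth divided by model size bounds the tokens per second. The sketch below assumes 400 GB/s of memory bandwidth (Apple's published figure for the M2 Max), about 6.74 billion parameters, and llama.cpp-style bits per weight. None of these are part of the benchmark data, and the model ignores the KV cache and other overheads.

```python
# Rough ceiling on generation speed if each token streams all weights once.
# Assumptions (not from the benchmark data): 400 GB/s memory bandwidth,
# ~6.74e9 parameters, llama.cpp-style bits per weight.
BANDWIDTH_BYTES_PER_S = 400e9
PARAMS = 6.74e9
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

# Measured generation speeds from the table (tokens/second).
MEASURED = {"F16": 24.65, "Q8_0": 41.83, "Q4_0": 65.95}


def bandwidth_bound(bits_per_weight: float) -> float:
    """Return the upper bound on tokens/second for a given weight width."""
    model_bytes = PARAMS * bits_per_weight / 8
    return BANDWIDTH_BYTES_PER_S / model_bytes


bounds = {name: bandwidth_bound(bpw) for name, bpw in BITS_PER_WEIGHT.items()}

for name, bound in bounds.items():
    measured = MEASURED[name]
    print(
        f"{name}: ceiling ~{bound:.0f} tok/s, measured {measured} tok/s "
        f"({measured / bound:.0%} of ceiling)"
    )
```

Under these assumptions the ceilings come out near 30, 56, and 106 tokens/second, and every measured value sits below its ceiling, which is consistent with generation being bandwidth-bound.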

Comparison of M2 Max and Other Devices

Ideally, we would compare these results with other popular devices used for local LLM inference:

M1: The previous generation Apple chip, still a capable performer, but not as powerful as the M2 Max.

RTX 3090: A high-end dedicated GPU from NVIDIA, often used for gaming and professional graphics. It's also popular for running LLMs.

Note: We lack the data for the M1, RTX 3090, or other Llama 2 model sizes in the provided dataset, so we cannot offer a direct comparison.

Is Apple M2 Max Worth It for Your Machine Learning Project?

The Apple M2 Max delivers impressive performance for running the Llama 2 7B model. It processes prompts quickly at every quantization level, and the quantized models reach good generation speeds.
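To see what these speeds mean for a single request, the sketch below estimates the total response time as prompt time plus generation time. The 512-token prompt and 256-token answer are hypothetical sizes chosen for illustration; the throughput figures come from the table.

```python
# Throughput figures from the benchmark table (tokens/second).
RESULTS = {
    "F16": {"processing": 755.67, "generation": 24.65},
    "Q8_0": {"processing": 677.91, "generation": 41.83},
    "Q4_0": {"processing": 671.31, "generation": 65.95},
}

# Hypothetical request size, chosen only for illustration.
PROMPT_TOKENS = 512
OUTPUT_TOKENS = 256


def response_seconds(prompt_tokens: int, output_tokens: int, row: dict) -> float:
    """Estimate total latency: time to read the prompt plus time to generate."""
    return prompt_tokens / row["processing"] + output_tokens / row["generation"]


estimates = {
    name: response_seconds(PROMPT_TOKENS, OUTPUT_TOKENS, row)
    for name, row in RESULTS.items()
}

for name, seconds in estimates.items():
    print(f"{name}: ~{seconds:.1f} s")
```

For this request size the estimate is about 11 seconds with F16, just under 7 seconds with Q8_0, and under 5 seconds with Q4_0. Generation dominates in every case, so the quantization level matters far more than the small differences in processing speed.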

However, the speed comparisons are limited to the provided dataset, and we lack information about other LLM models and devices. It's crucial to consider other factors, such as cost, power consumption, and compatibility with other tools before making a decision.

FAQ

What are the benefits of using an M2 Max for LLMs?

The Apple M2 Max offers several advantages for running LLMs. These include:

- Fast Processing: The chip's GPU cores speed up the processing of input text.

- Improved Generation Speed: With quantized models, the M2 Max generates noticeably faster than with F16, reaching nearly 66 tokens/second with Q4_0 in our data.

- Energy Efficiency: Apple chips are renowned for their energy efficiency, allowing you to run your LLM without draining your battery too quickly.

Is it necessary to use a specialized device like the M2 Max?

Not necessarily. You can run many smaller LLMs on a standard laptop or even a smartphone. However, if you're working with larger LLMs or require faster processing speeds, a machine built around a chip like the M2 Max can be beneficial.

What are the alternatives to the M2 Max?

Other devices suitable for running LLMs include:

- NVIDIA GPUs: A popular choice for AI workloads, offering powerful performance.

- Google TPUs: Designed specifically for machine learning, Google TPUs offer impressive speed and scalability.

- AMD GPUs: Often a more affordable option than NVIDIA, while still offering competitive performance for many AI tasks.

What are the trade-offs of using quantization for LLMs?

Quantization can be beneficial for improving performance, but it also comes with a few trade-offs:

- Slight Loss of Accuracy: While usually negligible, using a quantized model might slightly reduce the model's accuracy.

- Compatibility Issues: Not every model is available in every quantized format, and not every tool supports every format, so careful selection is required.

Keywords

Apple M2 Max, Machine Learning, LLMs, Large Language Models, Llama 2, Token Speed, Quantization, GPU, Performance, Inference, Processing, Generation, F16, Q8_0, Q4_0, NVIDIA, RTX 3090, AMD, Google TPUs, CPU