Is It Worth Buying Apple M1 Ultra for Machine Learning Projects?

Introduction

Machine learning, and large language models (LLMs) in particular, has exploded in popularity. But how do you run these complex models locally? That's where powerful hardware like the Apple M1 Ultra comes in. This article asks a simple question: is the M1 Ultra worth it for your machine learning endeavors? We'll analyze its performance on Llama 2 7B at several quantization levels, using real-world benchmarks. Get ready to explore the exciting world of local LLM inference!

Apple M1 Ultra: A Beast of a Chip

The Apple M1 Ultra is a powerhouse chip with impressive performance. But how does it stack up in the world of LLMs? To analyze that, let's understand some basic terms:

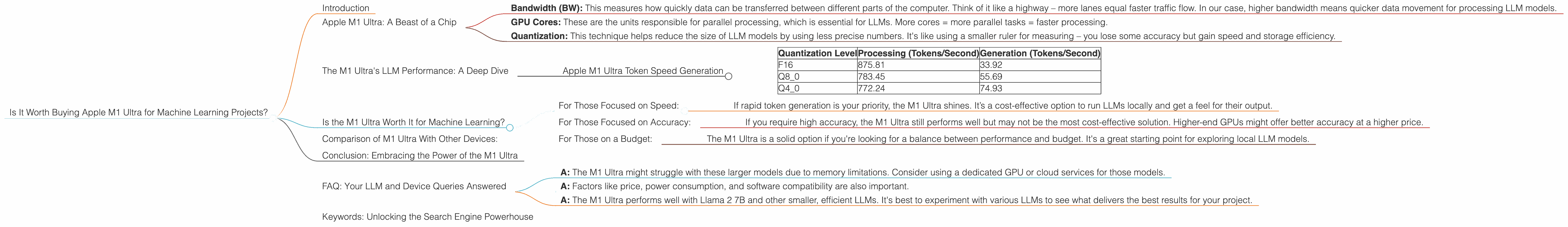

- Bandwidth (BW): This measures how quickly data can be transferred between different parts of the computer, such as between memory and the GPU. Think of it like a highway: more lanes equal faster traffic flow. In our case, higher bandwidth means model weights reach the processing units more quickly.

- GPU Cores: These are the units responsible for parallel processing, which is essential for LLMs. More cores = more parallel tasks = faster processing.

- Quantization: This technique reduces the size of LLMs by storing their weights as less precise numbers. It's like measuring with a ruler that has coarser markings: you lose some accuracy but gain speed and storage efficiency.
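To make the quantization idea concrete, here is a minimal sketch in Python. It is a simplified illustration, not the exact storage format behind the benchmark numbers in this article: the function names and the example weights are made up, and it uses one shared scale per block of values.

```python
def quantize_block(values, bits):
    """Symmetric quantization: one shared scale plus small signed integers."""
    qmax = 2 ** (bits - 1) - 1              # 127 for 8 bits, 7 for 4 bits
    largest = max(abs(v) for v in values)
    scale = largest / qmax if largest else 1.0
    codes = [round(v / scale) for v in values]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floating-point values from the integer codes."""
    return [scale * c for c in codes]


def max_abs_error(values, bits):
    """Largest difference between the original and the restored values."""
    scale, codes = quantize_block(values, bits)
    restored = dequantize_block(scale, codes)
    return max(abs(a - b) for a, b in zip(values, restored))


# Made-up example weights, for illustration only.
weights = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88, 0.45, 0.02]

for bits in (8, 4):
    print(f"{bits}-bit max error: {max_abs_error(weights, bits):.4f}")
```

With fewer bits there are fewer integer codes to choose from, so the restored values drift further from the originals. That is the accuracy cost you pay for the smaller size.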

The M1 Ultra's LLM Performance: A Deep Dive

Apple M1 Ultra Token Generation Speed

We'll focus on the Llama 2 7B model, a popular choice for local experimentation, and explore its performance with different quantization levels on the M1 Ultra.

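The following table summarizes the results. “Tokens/Second” is the number of tokens handled per second, a key metric for LLM speed: the Processing column covers reading the input prompt, and the Generation column covers producing new output tokens.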

| Quantization Level | Processing (Tokens/Second) | Generation (Tokens/Second) |
|---|---|---|
| F16 | 875.81 | 33.92 |
| Q8_0 | 783.45 | 55.69 |
| Q4_0 | 772.24 | 74.93 |
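To see how much each quantization level changes things relative to F16, you can divide the numbers in the table. The short sketch below does just that; the dictionary layout and the helper name `relative_to_f16` are only illustrative choices.

```python
# Tokens/second figures copied from the table above.
results = {
    "F16":  {"processing": 875.81, "generation": 33.92},
    "Q8_0": {"processing": 783.45, "generation": 55.69},
    "Q4_0": {"processing": 772.24, "generation": 74.93},
}


def relative_to_f16(level, metric):
    """Ratio of a quantized level's speed to the F16 speed for one metric."""
    return results[level][metric] / results["F16"][metric]


for level in ("Q8_0", "Q4_0"):
    proc = relative_to_f16(level, "processing")
    gen = relative_to_f16(level, "generation")
    print(f"{level}: processing x{proc:.2f}, generation x{gen:.2f}")
```

Generation comes out roughly 1.6 times faster for Q8_0 and 2.2 times faster for Q4_0, while processing is roughly 11 to 12 percent slower than F16.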

Explanation:

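- F16: This is 16-bit (half-precision) floating point, the unquantized baseline in this comparison. It offers the best accuracy of the three levels but requires the most memory.

- Q8_0: This is a type of quantization that reduces the model's size by using 8-bit numbers. Compared to F16, processing speed drops slightly (about 11%) while generation speed rises significantly (about 64%). A likely reason is that every generated token requires reading the model's weights from memory, so smaller weights mean less data to move.

- Q4_0: Even more drastic quantization using 4-bit numbers. Generation speed improves further, to about 2.2 times the F16 rate, but with a larger trade-off in accuracy.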

Key Takeaways:

- The M1 Ultra shows strong performance with the Llama 2 7B model, particularly in processing speed.

- Quantization clearly raises generation speed, at the cost of some accuracy and a small drop in processing speed.

- For those prioritizing speed over accuracy, Q8_0 and Q4_0 provide significant boosts in token generation.
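What do these numbers mean for a real request? A rough estimate is the time to read the prompt plus the time to generate the reply. The sketch below assumes a hypothetical workload of a 512-token prompt and a 256-token reply, and it assumes throughput stays constant regardless of context length, which is a simplification.

```python
# Tokens/second figures copied from the benchmark table in this article.
results = {
    "F16":  {"processing": 875.81, "generation": 33.92},
    "Q8_0": {"processing": 783.45, "generation": 55.69},
    "Q4_0": {"processing": 772.24, "generation": 74.93},
}


def estimate_seconds(level, prompt_tokens, output_tokens):
    """Prompt-reading time plus generation time, at constant throughput."""
    speeds = results[level]
    return (prompt_tokens / speeds["processing"]
            + output_tokens / speeds["generation"])


# Hypothetical workload, chosen only for illustration.
PROMPT_TOKENS = 512
OUTPUT_TOKENS = 256

for level in results:
    seconds = estimate_seconds(level, PROMPT_TOKENS, OUTPUT_TOKENS)
    print(f"{level}: about {seconds:.1f} s")
```

For a workload like this, generation dominates the total, which is why the lower processing speed of the quantized levels barely matters in practice.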

Is the M1 Ultra Worth It for Machine Learning?

The answer is not simple. It depends on your specific needs.

For Those Focused on Speed:

- If rapid token generation is your priority, the M1 Ultra shines. It’s a cost-effective option to run LLMs locally and get a feel for their output.

For Those Focused on Accuracy:

- If you require high accuracy, stay at F16, where the M1 Ultra still generates 33.92 tokens per second. Accuracy comes from the model and its quantization level rather than from the chip, so a higher-end GPU might run F16 faster, but at a higher price.

For Those on a Budget:

- The M1 Ultra is a solid option if you're looking for a balance between performance and budget. It's a great starting point for exploring local LLMs.

Analogy:

Imagine you're training a dog. The M1 Ultra is like a super-fast, dedicated trainer who can teach your dog a lot of tricks quickly. However, the tricks might not be as polished or complex as what a more expensive, specialized trainer could teach.

Comparison of M1 Ultra With Other Devices:

Unfortunately, we cannot compare the M1 Ultra with other devices in this instance. We only have data for the M1 Ultra. Keep an eye out for future comparisons!

Conclusion: Embracing the Power of the M1 Ultra

The Apple M1 Ultra is a powerful tool for local LLM experimentation. It offers a compelling blend of performance and affordability, making it a worthy contender for your machine learning projects. Experiment with the different quantization levels to find the sweet spot between speed and accuracy that best suits your needs.

FAQ: Your LLM and Device Queries Answered

Q: What if I want to run larger LLMs like Llama 2 13B or 70B?

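- A: Whether these models fit depends on how much unified memory your M1 Ultra has and which quantization level you pick. A model's weights take up roughly its parameter count times the bits per weight, so a 70B model at F16 is far more demanding than the same model at Q4_0. We have no benchmarks for these sizes, so if a model doesn't fit or runs too slowly, consider using a dedicated GPU or cloud services.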
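The memory question can be roughed out with simple arithmetic before you buy anything. The sketch below rests on several assumptions that do not come from our benchmarks: the bits-per-weight values for Q8_0 and Q4_0 follow the llama.cpp block layout (32 weights sharing one 16-bit scale), the parameter counts are the nominal ones from the model names, and the memory sizes and the 8 GB of headroom are placeholder figures.

```python
# Approximate bits stored per weight. The Q8_0 and Q4_0 values assume the
# llama.cpp block layout; treat them as assumptions, not measurements.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

# Nominal parameter counts taken from the model names.
PARAMS = {"7B": 7e9, "13B": 13e9, "70B": 70e9}


def weight_gb(model, level):
    """Approximate size of the weights alone, in gigabytes (10**9 bytes)."""
    return PARAMS[model] * BITS_PER_WEIGHT[level] / 8 / 1e9


def fits(model, level, memory_gb, headroom_gb=8.0):
    """True if the weights plus an arbitrary headroom fit in memory_gb.

    The headroom stands in for the operating system, context cache, and
    other overhead; 8 GB is a placeholder, not a measured value.
    """
    return weight_gb(model, level) + headroom_gb <= memory_gb


# Hypothetical unified-memory sizes to check against.
for memory_gb in (64, 128):
    for model in ("13B", "70B"):
        for level in BITS_PER_WEIGHT:
            verdict = "fits" if fits(model, level, memory_gb) else "too big"
            size = weight_gb(model, level)
            print(f"{memory_gb} GB | {model} {level}: {size:.1f} GB -> {verdict}")
```

Treat the result as a first filter only: fitting in memory says nothing about how fast the model will run.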

Q: What are the other factors to consider besides performance?

- A: Factors like price, power consumption, and software compatibility are also important.

Q: Are there any specific LLMs that perform better on the M1 Ultra?

- A: The M1 Ultra performs well with Llama 2 7B and other smaller, efficient LLMs. It's best to experiment with various LLMs to see what delivers the best results for your project.
