Is Apple M3 Pro Powerful Enough for Llama2 7B?

Introduction

The world of large language models (LLMs) is booming, and with it comes the need for hardware that can handle their processing demands. LLMs like Llama2 7B, with their impressive text generation capabilities, need substantial compute and memory bandwidth to deliver smooth and efficient performance. But can a chip like the Apple M3 Pro, known for its efficient design and strong performance, handle the heavy lifting? Let's dive into the performance analysis and see how the M3 Pro fares with Llama2 7B.

Performance Analysis: Token Generation Speed Benchmarks

Token generation speed is a crucial metric for evaluating the performance of an LLM. Faster token generation translates to quicker responses, making the model more interactive and user-friendly. Let's examine the token generation speed benchmarks for different configurations of Llama2 7B on the Apple M3 Pro.

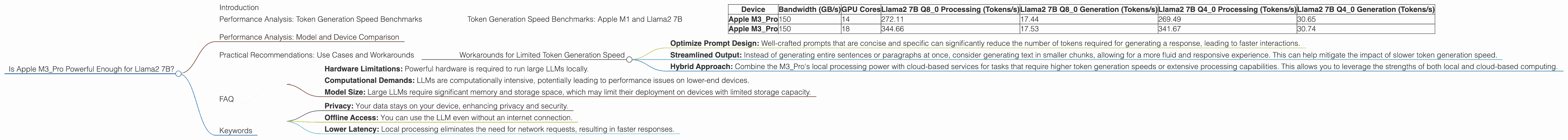

Token Generation Speed Benchmarks: Apple M3 Pro and Llama2 7B

| Device | Bandwidth (GB/s) | GPU Cores | Llama2 7B Q8_0 Processing (Tokens/s) | Llama2 7B Q8_0 Generation (Tokens/s) | Llama2 7B Q4_0 Processing (Tokens/s) | Llama2 7B Q4_0 Generation (Tokens/s) |
|---|---|---|---|---|---|---|
| Apple M3 Pro | 150 | 14 | 272.11 | 17.44 | 269.49 | 30.65 |
| Apple M3 Pro | 150 | 18 | 344.66 | 17.53 | 341.67 | 30.74 |

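Q8_0 and Q4_0 represent quantization levels. Quantization compresses a model by storing its weights with fewer bits, which shrinks the model and usually speeds up inference. Essentially, it's like using a shorter dictionary to represent the same information. In this case, Q8_0 stores weights with about 8 bits each, while Q4_0 uses about 4 bits. The smaller Q4_0 model generates tokens faster in the benchmarks above, at some cost in precision.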
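To make the precision trade-off concrete, here is a minimal sketch of symmetric uniform quantization in plain Python. It is a simplified illustration only: it is not the actual Q8_0 or Q4_0 storage format, and the `weights` list and the helper names are made up for the example.

```python
def quantize(values, bits):
    """Map floats to signed integer codes that fit in `bits` bits."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    codes = [round(v / scale) for v in values]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate floats from integer codes."""
    return [c * scale for c in codes]


def max_error(values, bits):
    """Largest absolute difference after a quantize/dequantize round trip."""
    codes, scale = quantize(values, bits)
    restored = dequantize(codes, scale)
    return max(abs(a - b) for a, b in zip(values, restored))


# Hypothetical weights, chosen only for illustration.
weights = [0.82, -0.41, 0.13, -0.97, 0.05, 0.66, -0.29, 0.38]

err8 = max_error(weights, 8)
err4 = max_error(weights, 4)
print(f"8-bit max round-trip error: {err8:.4f}")
print(f"4-bit max round-trip error: {err4:.4f}")
```

With fewer bits there are fewer representable levels, so the 4-bit round-trip error comes out larger than the 8-bit one. That is the precision given up in exchange for a smaller model.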

As we can see, the Apple M3 Pro processes prompts for Llama2 7B quickly, while its generation speed depends heavily on the quantization level. Let's break down the results further:

- Llama2 7B Q8_0 Processing: At 272 to 344 tokens per second, depending on the GPU core count, the M3 Pro shows strong performance when processing prompts with the Llama2 7B model. This means the model can quickly read and encode prompts, paving the way for swift responses.

- Llama2 7B Q8_0 Generation: While the processing speed is impressive, token generation is far slower. At around 17 tokens per second, text generation might feel slightly sluggish for some applications, and moving from 14 to 18 GPU cores barely changes it (17.44 vs. 17.53 tokens per second).

- Llama2 7B Q4_0 Processing: Processing remains strong with Q4_0, at 269 to 341 tokens per second, essentially the same as Q8_0. Halving the bits per weight does not speed up prompt processing here, which suggests this phase is limited by compute rather than by model size.

- Llama2 7B Q4_0 Generation: Unlike Q8_0, Q4_0 generates around 30 tokens per second, roughly 1.75 times faster. This illustrates the trade-off between speed and accuracy across quantization levels.
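The relationships in the table can be checked with a few lines of arithmetic. The sketch below uses only the values from the benchmark table; the dictionary layout and variable names are illustrative choices.

```python
# Tokens per second, copied from the benchmark table, keyed by GPU core count.
benchmarks = {
    14: {"q8_proc": 272.11, "q8_gen": 17.44, "q4_proc": 269.49, "q4_gen": 30.65},
    18: {"q8_proc": 344.66, "q8_gen": 17.53, "q4_proc": 341.67, "q4_gen": 30.74},
}

low, high = benchmarks[14], benchmarks[18]

core_ratio = 18 / 14                               # increase in GPU cores
proc_scaling = high["q8_proc"] / low["q8_proc"]    # prompt processing gain
gen_scaling = high["q8_gen"] / low["q8_gen"]       # generation gain
q4_vs_q8_gen = low["q4_gen"] / low["q8_gen"]       # effect of quantization

print(f"GPU core ratio (18 vs 14):      {core_ratio:.2f}x")
print(f"Q8_0 processing scaling:        {proc_scaling:.2f}x")
print(f"Q8_0 generation scaling:        {gen_scaling:.2f}x")
print(f"Q4_0 vs Q8_0 generation (14c):  {q4_vs_q8_gen:.2f}x")
```

Prompt processing scales almost in step with the GPU core count, while generation barely moves. One plausible reading, offered here as an interpretation rather than something the benchmark proves, is that generation is limited by memory bandwidth, which is the same 150 GB/s in both configurations.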

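To put these numbers in perspective, imagine generating a 1,000-word article. Assuming roughly 1.3 tokens per word (a rough rule of thumb for English text; the real ratio depends on the tokenizer and the content), that is about 1,300 tokens. At the measured Q4_0 speed of about 30.7 tokens per second, generation would take roughly 42 seconds; at the Q8_0 speed of about 17.4 tokens per second, it would take about 75 seconds. This might be fine for short interactions, but could feel slow for more intensive text generation tasks.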
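The same back-of-the-envelope estimate can be written as a small helper. This is a sketch under one stated assumption: the default of 1.3 tokens per word is a rough rule of thumb, not a measured value for the Llama2 tokenizer, and the function name is made up for the example.

```python
def estimate_seconds(words, tokens_per_second, tokens_per_word=1.3):
    """Estimate generation time for a text of `words` words.

    tokens_per_word is an assumed average; adjust it for your tokenizer.
    """
    tokens = words * tokens_per_word
    return tokens / tokens_per_second


# Generation speeds from the 14-core row of the benchmark table.
q4_seconds = estimate_seconds(1000, 30.65)
q8_seconds = estimate_seconds(1000, 17.44)

print(f"Q4_0: about {q4_seconds:.0f} s for 1,000 words")
print(f"Q8_0: about {q8_seconds:.0f} s for 1,000 words")
```

Changing `tokens_per_word` shows how sensitive the estimate is to that assumption: at exactly one token per word, the Q4_0 figure drops to about 33 seconds.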

Performance Analysis: Model and Device Comparison

The Apple M3 Pro is a formidable candidate for running LLMs, but how does it stack up against other devices and models? Unfortunately, due to the lack of available data, we can't offer a direct comparison with other devices in this article.

However, based on the numbers we have, the Apple M3 Pro emerges as a strong contender in the realm of local LLM deployment.

Practical Recommendations: Use Cases and Workarounds

The Apple M3 Pro, paired with Llama2 7B, opens up a world of possibilities for developers seeking to implement local LLM applications. Here are some potential use cases and strategies to optimize the performance of your applications:

- Chatbots and Conversational AI: The M3 Pro's prompt processing speed is well-suited for building responsive conversational AI systems. However, the token generation speed may limit the length and complexity of responses, especially with Q8_0 quantization, which generates at only around 17 tokens per second.

- Text Summarization and Analysis: For tasks that involve analyzing large chunks of text and extracting key information, the M3 Pro's performance shines. These tasks are dominated by prompt processing, so you can leverage its processing speed to quickly analyze and summarize lengthy documents, making it a good fit for content creation tools or knowledge management systems.

- Code Generation and Assistance: The M3 Pro's capability in processing code snippets and generating code completions can be a valuable asset to developers. While the token generation speed might not be optimal for generating large blocks of code, it can still enhance the coding experience through short suggestions and auto-completion.

Workarounds for Limited Token Generation Speed

To address the limitations of token generation speed, you can explore these strategies:

- Optimize Prompt Design: Well-crafted prompts that are concise and specific can significantly reduce the number of tokens required for generating a response, leading to faster interactions.

- Streamed Output: Instead of waiting for an entire response before showing it, display the text in small chunks as it is generated, allowing for a more fluid and responsive experience. This doesn't make generation faster, but it helps mitigate the perceived impact of slower token generation speed.

- Hybrid Approach: Combine the M3 Pro's local processing power with cloud-based services for tasks that require higher token generation speeds or extensive processing capabilities. This allows you to leverage the strengths of both local and cloud-based computing.
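The streaming idea can be sketched with a Python generator. Note that `fake_token_stream` is a hypothetical stand-in for a local model, not a real inference API: it emits one word at a time at a fixed rate so that the example runs on its own.

```python
import sys
import time


def fake_token_stream(text, tokens_per_second):
    """Hypothetical model stand-in: yields one word at a time at a fixed rate."""
    delay = 1.0 / tokens_per_second if tokens_per_second > 0 else 0.0
    for word in text.split():
        time.sleep(delay)  # simulate the time needed to generate a token
        yield word + " "


def stream_to_user(token_iter, out=sys.stdout):
    """Write each piece as soon as it arrives and return the full text."""
    pieces = []
    for piece in token_iter:
        out.write(piece)
        out.flush()  # show the piece immediately instead of buffering it
        pieces.append(piece)
    return "".join(pieces)


reply = stream_to_user(
    fake_token_stream("Local models can stream their output.", tokens_per_second=30)
)
```

The total generation time is unchanged, but the user sees the first words almost immediately instead of waiting for the whole response.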

FAQ

Q: What does "quantization" mean in the context of LLMs? A: Quantization is a technique that compresses an LLM by storing the model's weights with fewer bits. This can significantly improve inference speed, because there is less data to store and move through memory for each token. Think of it like using a smaller dictionary to represent the same words: you can access the information faster, but you might lose some precision.

Q: How do I choose the right quantization level for my application? A: The choice of quantization level depends on the specific requirements of your application. If you prioritize speed and a smaller memory footprint, Q4_0 might be the best choice. But if accuracy is paramount, Q8_0 might be more suitable, since it keeps more precision per weight. You'll need to experiment with different quantization levels to find the best balance for your needs.

Q: What are the limitations of local LLM deployment? A: Local LLM deployment can face challenges related to:

- Hardware Limitations: Powerful hardware is required to run large LLMs locally.

- Computational Demands: LLMs are computationally intensive, potentially leading to performance issues on lower-end devices.

- Model Size: Large LLMs require significant memory and storage space, which may limit their deployment on devices with limited storage capacity.
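As a rough illustration of the model size point, the following sketch estimates the weight storage for a 7B model at different precisions. It assumes a nominal 7 billion parameters and nominal bit widths, and it ignores per-block scaling data and runtime memory such as the context cache, so real files and memory use will be somewhat larger.

```python
def model_size_gb(n_params, bits_per_weight):
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


N_PARAMS = 7e9  # nominal "7B"; an assumption, not an exact parameter count

size_fp16 = model_size_gb(N_PARAMS, 16)
size_8bit = model_size_gb(N_PARAMS, 8)
size_4bit = model_size_gb(N_PARAMS, 4)

for label, size in [("16-bit", size_fp16), ("8-bit", size_8bit), ("4-bit", size_4bit)]:
    print(f"{label}: about {size:.1f} GB of weights")
```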

Q: What are the benefits of using a local LLM? A: Deploying an LLM locally offers several advantages:

- Privacy: Your data stays on your device, enhancing privacy and security.

- Offline Access: You can use the LLM even without an internet connection.

- Lower Latency: Local processing eliminates network round trips, which can reduce the time before a response starts to appear.

Keywords

Apple M3 Pro, Llama2 7B, Large Language Model, LLM, Token Generation Speed, Performance Analysis, Quantization, Q8_0, Q4_0, Bandwidth, GPU Cores, Local LLM Deployment, Use Cases, Workarounds, Chatbot, Conversational AI, Text Summarization, Code Generation, Offline Processing, Privacy, Latency, Hardware Limitations