Is Apple M3 Pro Good Enough for AI Development?

Introduction: The Rise of Local AI and the Role of Apple M3 Pro

The world of Artificial Intelligence (AI) is booming, with tools built on Large Language Models (LLMs), such as ChatGPT, taking the world by storm. But what if you want to run these models locally on your own computer? This is where the Apple M3 Pro chip enters the picture.

As developers and data scientists increasingly seek to leverage the power of LLMs directly on their machines, questions arise about the suitability of hardware for this task. The Apple M3 Pro, known for its powerful graphics capabilities, has sparked curiosity among AI enthusiasts. This article delves into the performance of the Apple M3 Pro chip for AI development, specifically focusing on its ability to run local LLMs. We'll dissect the raw performance numbers, analyze the implications, and guide you through the world of LLMs and quantization.

Diving into the Data: Apple M3 Pro's Performance on Llama 2 7B

To understand the Apple M3 Pro's suitability for AI development, we need to look at the numbers. We'll focus on the popular Llama 2 7B model, the smallest and fastest member of the Llama 2 family, which also includes 13B and 70B variants.

The data we'll analyze comes from two sources:

- ggerganov's llama.cpp performance test: This benchmark measures how fast the llama.cpp library runs different LLMs on various devices.

- XiongjieDai's GPU Benchmarks on LLM Inference: This benchmark provides a comprehensive look at GPU performance for LLM inference.

Important Note: We only report figures that appear in these two sources. If no data exists for a specific model and device combination, we leave it out of the analysis.

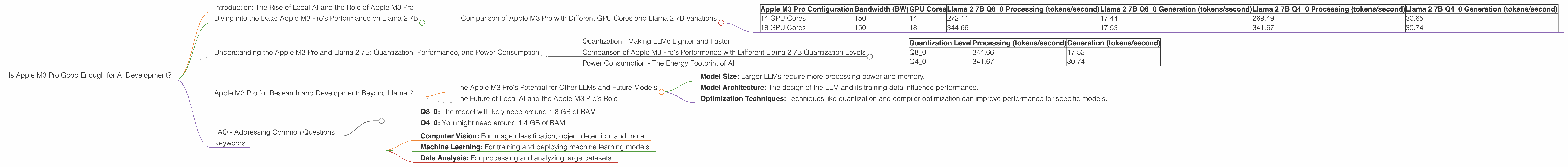

Comparison of Apple M3 Pro with Different GPU Cores and Llama 2 7B Variations

The Apple M3 Pro comes in configurations with 14 and 18 GPU cores. Let's see how they compare in terms of token speed for Llama 2 7B.

| Apple M3 Pro Configuration | Bandwidth (GB/s) | GPU Cores | Llama 2 7B Q8_0 Processing (tokens/second) | Llama 2 7B Q8_0 Generation (tokens/second) | Llama 2 7B Q4_0 Processing (tokens/second) | Llama 2 7B Q4_0 Generation (tokens/second) |
|---|---|---|---|---|---|---|
| 14 GPU Cores | 150 | 14 | 272.11 | 17.44 | 269.49 | 30.65 |
| 18 GPU Cores | 150 | 18 | 344.66 | 17.53 | 341.67 | 30.74 |

Key Observations:

- Processing Speed: The Apple M3 Pro with 18 GPU cores clearly outperforms the 14 GPU core configuration, processing prompts about 27% faster at both quantization levels (344.66 vs. 272.11 tokens per second for Q8_0). This makes it the better choice for developers who work with long prompts.

- Quantization Impact: Q8_0 is 8-bit block quantization and Q4_0 is 4-bit block quantization; the "_0" suffix names the llama.cpp variant that stores a single scale per block of weights. Processing speed is nearly identical for the two (under 1% apart), whereas Q4_0 generates tokens roughly 75% faster than Q8_0.

- Generation Speed: Generation speed is almost identical across the two GPU configurations (17.44 vs. 17.53 tokens per second for Q8_0) but differs sharply between quantization levels. Both configurations share the same 150 GB/s of memory bandwidth, which suggests that generation is limited by how fast the weights can be read from memory rather than by GPU core count.
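The relative differences in the table can be recomputed directly from the published throughput figures. The short Python sketch below does only that arithmetic; the dictionary layout and the helper name `pct_gain` are illustrative choices, not part of either benchmark.

```python
# Throughput figures (tokens/second) copied from the table above.
# Keys: GPU core count -> quantization -> phase.
RESULTS = {
    14: {"Q8_0": {"processing": 272.11, "generation": 17.44},
         "Q4_0": {"processing": 269.49, "generation": 30.65}},
    18: {"Q8_0": {"processing": 344.66, "generation": 17.53},
         "Q4_0": {"processing": 341.67, "generation": 30.74}},
}


def pct_gain(new: float, old: float) -> float:
    """Percentage by which `new` exceeds `old`."""
    return (new / old - 1.0) * 100.0


# Effect of going from 14 to 18 GPU cores, per quantization and phase.
for quant in ("Q8_0", "Q4_0"):
    for phase in ("processing", "generation"):
        gain = pct_gain(RESULTS[18][quant][phase], RESULTS[14][quant][phase])
        print(f"18 vs 14 cores, {quant} {phase}: {gain:+.1f}%")

# Effect of going from Q8_0 to Q4_0 on the 18-core chip.
for phase in ("processing", "generation"):
    gain = pct_gain(RESULTS[18]["Q4_0"][phase], RESULTS[18]["Q8_0"][phase])
    print(f"Q4_0 vs Q8_0, 18 cores, {phase}: {gain:+.1f}%")
```

Extra GPU cores help prompt processing but barely move generation, while the quantization level does the opposite.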

What Does this Mean for AI Developers?

The Apple M3 Pro's prompt processing speed for Llama 2 7B, roughly 270 to 345 tokens per second, is strong for a laptop-class chip. That helps most in tasks that feed the model a lot of input, such as summarizing documents or completing code with a large context. Generation is slower, at about 17.5 tokens per second for Q8_0 and about 31 for Q4_0, but still fast enough for interactive use.

Understanding the Apple M3 Pro and Llama 2 7B: Quantization, Performance, and Power Consumption

Quantization - Making LLMs Lighter and Faster

Imagine trying to fit a giant elephant into a small car. Impossible, right? LLMs pose a similar problem: they demand a lot of memory, compute power, and energy. Quantization is a technique that shrinks the model so that it fits, at the cost of storing each weight a little less precisely.

Instead of storing each weight as a 16-bit or 32-bit floating-point number, quantization stores it in a smaller format, such as an 8-bit or 4-bit integer, together with a scale factor shared by a group of weights. This compression reduces the memory footprint, and because less data has to be read from memory for every token, it usually speeds up generation as well.
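To make the idea concrete, here is a minimal Python sketch of symmetric block quantization: each block of weights is stored as small signed integers plus one scale. It is a simplified illustration, not the exact Q8_0 or Q4_0 storage layout used by llama.cpp; the block size of 32, the function names, and the randomly generated example weights are all assumptions made for the example.

```python
import random

BLOCK_SIZE = 32  # assumption: number of weights that share one scale


def quantize_block(weights, bits):
    """Quantize one block to signed integers of the given width plus a scale."""
    qmax = 2 ** (bits - 1) - 1              # 127 for 8-bit, 7 for 4-bit
    amax = max(abs(w) for w in weights)
    scale = amax / qmax if amax > 0 else 1.0
    # Round each weight to the nearest integer step and clamp to the range.
    quants = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate floating-point weights from a quantized block."""
    return [scale * q for q in quants]


def max_abs_error(weights, bits):
    """Largest round-trip error for one block at the given bit width."""
    scale, quants = quantize_block(weights, bits)
    restored = dequantize_block(scale, quants)
    return max(abs(w - r) for w, r in zip(weights, restored))


# Hypothetical example weights: small values drawn from a normal distribution.
random.seed(0)
block = [random.gauss(0.0, 0.02) for _ in range(BLOCK_SIZE)]

for bits in (8, 4):
    print(f"{bits}-bit max round-trip error: {max_abs_error(block, bits):.6f}")
```

The 4-bit version has far fewer levels to choose from, so its round-trip error is larger. That is the accuracy cost paid for the smaller footprint.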

Comparison of Apple M3 Pro's Performance with Different Llama 2 7B Quantization Levels

Let's take a closer look at how different quantization levels influence the performance of the Apple M3 Pro with 18 GPU cores for Llama 2 7B.

| Quantization Level | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|
| Q8_0 | 344.66 | 17.53 |
| Q4_0 | 341.67 | 30.74 |

Key Observations:

- Q8_0 Edges Ahead in Processing: Q8_0 processes prompts at 344.66 tokens per second, less than 1% faster than Q4_0 at 341.67. In practice the two are equivalent for prompt processing.

- Q4_0 Favors Generation: Q4_0 generates 30.74 tokens per second against 17.53 for Q8_0, about 75% faster. The 4-bit weights take up roughly half the memory, so far less data has to be read for each generated token.
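A common rule of thumb explains the generation gap. If every generated token requires reading all model weights from memory once, generation speed cannot exceed memory bandwidth divided by model size. The Python sketch below applies that rule to the 150 GB/s figure from the first table. The model sizes are approximations that are not taken from the benchmark data, and the rule ignores the KV cache and other overheads, so treat the result as a rough ceiling rather than a prediction.

```python
BANDWIDTH_GB_S = 150.0  # memory bandwidth from the first table

# Assumption: approximate size of the Llama 2 7B weights in GB (10^9 bytes).
MODEL_SIZE_GB = {"Q8_0": 7.2, "Q4_0": 3.8}

# Measured generation speed on the 18-core M3 Pro (tokens/second).
MEASURED_TG = {"Q8_0": 17.53, "Q4_0": 30.74}


def generation_ceiling(bandwidth_gb_s: float, size_gb: float) -> float:
    """Upper bound on tokens/second if each token reads all weights once."""
    return bandwidth_gb_s / size_gb


for quant, size_gb in MODEL_SIZE_GB.items():
    ceiling = generation_ceiling(BANDWIDTH_GB_S, size_gb)
    share = MEASURED_TG[quant] / ceiling
    print(f"{quant}: ceiling ~{ceiling:.1f} tok/s, "
          f"measured {MEASURED_TG[quant]:.2f} tok/s ({share:.0%} of ceiling)")
```

Under these assumptions both measured values fall below their ceilings and track them closely, which is consistent with generation being bandwidth-bound on this chip.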

Trade-offs and Considerations:

While quantization provides speed and resource efficiency, it comes with trade-offs:

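- Accuracy: A smaller representation (like 4-bit) sacrifices some accuracy compared to the original higher-precision floating-point weights. The loss is usually small at 8 bits and more noticeable at 4 bits.

- Fine-tuning: The formats used here are applied after training and need no re-tuning, but more aggressive quantization schemes may require fine-tuning to recover lost quality.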

Power Consumption - The Energy Footprint of AI

Running powerful AI models requires a lot of energy. The benchmarks above do not measure power draw, but the Apple M3 Pro is a laptop-class chip and generally uses far less power than a desktop workstation with a discrete GPU. This is an advantage for developers who want to run AI models on battery or without a large electricity bill.

Analogy: Think of two cars: a large truck and a compact car. The truck can move heavy loads but consumes a lot of fuel. The compact car is smaller, consumes less fuel, and is still capable of getting you around town. The Apple M3 Pro is like the compact car: efficient and powerful for its size.

Apple M3 Pro for Research and Development: Beyond Llama 2

The Apple M3 Pro's performance with Llama 2 7B is just the tip of the iceberg. It's crucial to remember that AI development is a dynamic field, constantly evolving.

The Apple M3 Pro's Potential for Other LLMs and Future Models

The Apple M3 Pro's powerful GPU and optimized architecture make it a potential candidate for running various LLM models, beyond the Llama 2 7B we discussed earlier. The key factors influencing performance are:

- Model Size: Larger LLMs require more processing power and memory.

- Model Architecture: The design of the LLM, such as its layer count, attention variant, and context length, influences both speed and memory use.

- Optimization Techniques: Techniques like quantization and compiler optimization can improve performance for specific models.

The Future of Local AI and the Apple M3 Pro's Role

As AI models continue to grow more sophisticated, the demand for powerful hardware capable of running them locally will only increase. The Apple M3 Pro, with its balance of performance, efficiency, and accessibility, stands as a promising contender in this evolving landscape.

FAQ - Addressing Common Questions

Q: What are LLMs and why are they so popular?

A: LLMs are large language models, trained on massive amounts of text data. They can generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way. They are popular because they offer amazing capabilities and have the potential to revolutionize how we interact with computers.

Q: How much memory does my M3 Pro need for Llama 2 7B?

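A: The memory requirement depends on the quantization level. For the model weights alone:

- Q8_0: The model needs around 6.7 GiB of RAM.

- Q4_0: The model needs around 3.6 GiB of RAM.

Allow extra headroom for the context (KV cache), the operating system, and your other applications.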
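A rough way to sanity-check weight memory for any quantization level is to multiply the parameter count by the effective bits per weight. The Python sketch below assumes about 6.74 billion parameters for Llama 2 7B, and 8.5 and 4.5 bits per weight for Q8_0 and Q4_0 (32 quantized values plus one 16-bit scale per block). Real model files can differ slightly because some tensors are stored in other formats, and the estimate excludes the KV cache.

```python
PARAMS_LLAMA2_7B = 6.74e9  # assumption: approximate parameter count

# Effective bits per weight, including one 16-bit scale per 32-weight block.
BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q8_0": (32 * 8 + 16) / 32,   # 8.5
    "Q4_0": (32 * 4 + 16) / 32,   # 4.5
}


def weight_memory_gib(n_params: float, bits_per_weight: float) -> float:
    """Estimated size of the model weights in GiB (2^30 bytes)."""
    return n_params * bits_per_weight / 8 / 2**30


for fmt, bits in BITS_PER_WEIGHT.items():
    gib = weight_memory_gib(PARAMS_LLAMA2_7B, bits)
    print(f"{fmt}: ~{gib:.1f} GiB for weights")
```

Under these assumptions the estimate comes to roughly 12.6 GiB for F16, 6.7 GiB for Q8_0, and 3.5 GiB for Q4_0.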

Q: Will the Apple M3 Pro work for other AI tasks?

A: Yes, the Apple M3 Pro's capabilities extend beyond LLMs. It can be used for various AI tasks, including:

- Computer Vision: For image classification, object detection, and more.

- Machine Learning: For training and deploying machine learning models.

- Data Analysis: For processing and analyzing large datasets.

Q: Is it possible to run larger LLMs like Llama 2 13B or 70B on the M3 Pro?

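A: It depends on the model and on how much unified memory your machine has. The M3 Pro ships with 18 GB or 36 GB. A 4-bit Llama 2 13B needs roughly 7 to 8 GB for its weights, so it should fit on either configuration, although it will run slower than the 7B model. Llama 2 70B needs close to 40 GB even at 4 bits, which is more than the M3 Pro offers. Running it would require more aggressive quantization or splitting the model between memory and disk, with a significant cost in quality or speed.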
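The fit question can be estimated with simple arithmetic. The Python sketch below compares approximate 4-bit weight sizes against the two M3 Pro memory configurations. The parameter counts are approximations, and `usable_fraction` is a hypothetical allowance for the share of unified memory that the model can realistically occupy; it is not a documented limit, so adjust it to match your own system.

```python
M3_PRO_MEMORY_GB = (18, 36)   # unified memory configurations
Q4_0_BITS_PER_WEIGHT = 4.5    # 32 four-bit values plus a 16-bit scale per block

# Assumption: approximate parameter counts for the larger Llama 2 models.
MODEL_PARAMS = {"Llama 2 13B": 13.0e9, "Llama 2 70B": 69.0e9}


def weights_gb(n_params: float, bits_per_weight: float) -> float:
    """Estimated weight size in GB (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def fits(n_params: float, memory_gb: float, usable_fraction: float = 0.7) -> bool:
    """True if the Q4_0 weights fit in the usable share of memory.

    usable_fraction is an illustrative assumption, not a measured value.
    """
    needed = weights_gb(n_params, Q4_0_BITS_PER_WEIGHT)
    return needed <= memory_gb * usable_fraction


for name, n_params in MODEL_PARAMS.items():
    size = weights_gb(n_params, Q4_0_BITS_PER_WEIGHT)
    for memory_gb in M3_PRO_MEMORY_GB:
        verdict = "fits" if fits(n_params, memory_gb) else "does not fit"
        print(f"{name} Q4_0 (~{size:.1f} GB) on {memory_gb} GB: {verdict}")
```

With these assumptions the 13B model fits on both configurations and the 70B model fits on neither, even before the KV cache is counted.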

Keywords

Apple M3 Pro, AI development, LLM, Llama 2, token speed, quantization, GPU, processing, generation, performance, power consumption, research, development, local AI, model size, model architecture, future of AI, memory, computer vision, machine learning, data analysis.