Is Apple M3 Powerful Enough for Llama2 7B?

Introduction: Exploring the Local LLM Landscape

The world of Large Language Models (LLMs) is evolving rapidly, with new models and hardware constantly pushing the boundaries of what's possible. But for many developers, the question remains: how can you run these models effectively on your own machine? Running an LLM locally is an alternative to relying on cloud-based services.

Today, we're looking at how well the Apple M3 handles Llama2 7B, a popular yet demanding model. We'll analyze token generation speed benchmarks and compare quantized model configurations to understand how quantization affects performance. Let's get started!

Performance Analysis: Token Generation Speed Benchmarks (Apple M3 and Llama2 7B)

Understanding Token Generation Speed

Token generation speed is the rate at which a model produces new text, usually measured in tokens per second. Think of tokens as the building blocks of language: words, punctuation, and even parts of words. The faster your model generates tokens, the more responsive your application feels.
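To make the metric concrete, here is a minimal sketch of how tokens per second can be measured: count the tokens a stream yields and divide by the elapsed time. The `fake_stream` generator is a hypothetical stand-in for a real model's output, and its delay is arbitrary, so the printed rate says nothing about the M3.

```python
import time
from typing import Callable, Iterable, Iterator


def tokens_per_second(stream: Callable[[], Iterable[str]]) -> float:
    """Consume a token stream and return tokens emitted per second."""
    start = time.perf_counter()
    count = sum(1 for _ in stream())
    elapsed = time.perf_counter() - start
    return count / elapsed if elapsed > 0 else float("inf")


def fake_stream(n_tokens: int = 20, delay_s: float = 0.005) -> Iterator[str]:
    """Hypothetical stand-in for a model: yields one token every delay_s."""
    for i in range(n_tokens):
        time.sleep(delay_s)
        yield f"tok{i}"


rate = tokens_per_second(fake_stream)
print(f"{rate:.1f} tokens/second")
```

With a real runtime you would pass its streaming call in place of `fake_stream` and time prompt processing and generation separately, since the two rates differ widely.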

Llama2 7B: Quantization and Performance Trade-offs

The Llama2 7B model can be run at several quantization levels. Quantization stores each weight with fewer bits, which makes the model smaller and usually faster to run. It's a bit like using a lower-resolution image: you lose some detail but gain speed and efficiency.
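The idea can be sketched in a few lines. The example below is a simplified symmetric 4-bit block quantizer: each block of weights shares one scale, and every weight is rounded to a small integer. It is an illustration only, not the exact Q4_0 layout used by any particular runtime, and the block size and sample weights are assumptions.

```python
from typing import List, Tuple

BLOCK_SIZE = 32  # weights per block (assumption, chosen for illustration)


def quantize_block(weights: List[float]) -> Tuple[float, List[int]]:
    """Map floats to signed 4-bit codes in [-8, 7] plus one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7.0 if max_abs > 0 else 1.0
    codes = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale: float, codes: List[int]) -> List[float]:
    """Recover approximate weights from the codes and the shared scale."""
    return [scale * c for c in codes]


# Synthetic weights in roughly [-0.32, 0.31]; real model weights would differ.
weights = [((i * 37) % 64 - 32) / 100.0 for i in range(BLOCK_SIZE)]
scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(f"scale={scale:.4f}, worst-case error={max_error:.4f}")
```

The rounding error per weight is bounded by half the scale, which is the "lost detail" in the image analogy.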

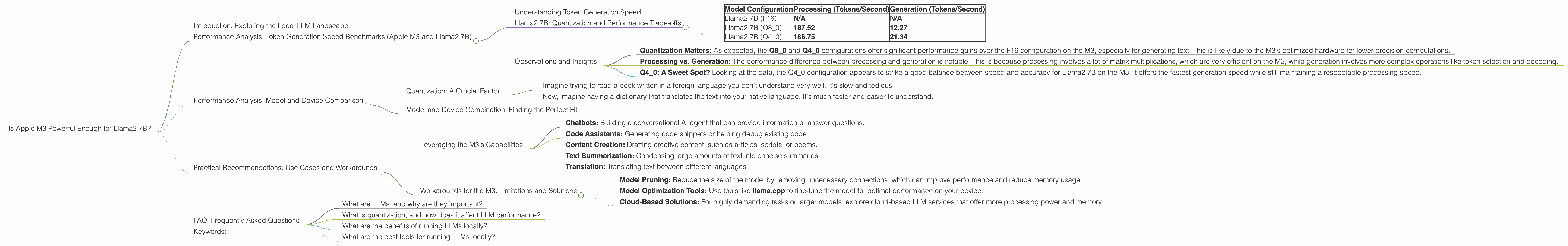

Here's a breakdown of how quantization affects Llama2 7B performance on the Apple M3:

| Model Configuration | Processing (Tokens/Second) | Generation (Tokens/Second) |
|---|---|---|
| Llama2 7B (F16) | N/A | N/A |
| Llama2 7B (Q8_0) | 187.52 | 12.27 |
| Llama2 7B (Q4_0) | 186.75 | 21.34 |

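Important Note: Data for the F16 configuration is not available. The benchmark doesn't say why, so the missing numbers alone don't prove that the M3 can't run it. A likely reason is memory: at 16 bits per weight, a 7B model needs roughly 13 to 14 GB for the weights alone, which does not fit on an 8 GB M3. Note also that F16 is half precision, not full (32-bit) precision.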
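A rough estimate of weight memory helps explain why the F16 row may be empty. The sketch below assumes about 6.74 billion parameters for Llama2 7B and effective sizes of 8.5 and 4.5 bits per weight for Q8_0 and Q4_0 (32-weight blocks that each carry one 16-bit scale). These are approximations, and real model files add some overhead.

```python
PARAMS = 6.74e9  # approximate Llama2 7B parameter count (assumption)

# Effective bits per weight. The quantized figures assume 32-weight blocks
# with one 16-bit scale per block; treat them as estimates.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def weight_gib(bits_per_weight: float, params: float = PARAMS) -> float:
    """Approximate size of the weights alone, in GiB."""
    return params * bits_per_weight / 8 / 2**30


for name, bits in BITS_PER_WEIGHT.items():
    print(f"{name}: ~{weight_gib(bits):.1f} GiB of weights")
```

The estimate covers weights only. The context cache, the runtime, and the operating system all need memory on top of it.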

Observations and Insights

- Quantization Matters: Moving from Q8_0 to Q4_0 raises generation speed from 12.27 to 21.34 tokens/second, about 1.7x, while processing speed stays essentially flat (187.52 vs. 186.75). No F16 result is available, so the table can't show how either one compares with F16. The gain most likely comes from memory traffic: each generated token requires reading the full set of weights, and Q4_0 weights are roughly half the size.

- Processing vs. Generation: Prompt processing is roughly 9 to 15 times faster than generation. Prompt tokens are processed together in batches, so the work consists of large matrix multiplications that keep the hardware busy. Generation produces one token at a time, and each step has to read all the weights again, so it tends to be limited by memory bandwidth rather than by compute.

- Q4_0: A Sweet Spot? On speed, Q4_0 is the best configuration measured: it offers the fastest generation at practically the same processing speed as Q8_0. The benchmark does not measure output quality, so the accuracy cost of 4-bit weights has to be checked separately for your task.
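One way to sanity-check these observations is to compare the measured generation speeds with a simple memory-bandwidth ceiling: if every token requires reading all the weights once, the speed can't exceed bandwidth divided by model size. The sketch assumes 100 GB/s of memory bandwidth for a base M3, about 6.74 billion parameters, and 8.5 and 4.5 effective bits per weight. All three are assumptions rather than values taken from the benchmark.

```python
BANDWIDTH_BYTES_PER_S = 100e9  # assumed memory bandwidth of a base M3
PARAMS = 6.74e9                # approximate Llama2 7B parameter count

BITS_PER_WEIGHT = {"Q8_0": 8.5, "Q4_0": 4.5}  # estimates, see lead-in
MEASURED_TPS = {"Q8_0": 12.27, "Q4_0": 21.34}  # from the table above


def bandwidth_ceiling_tps(bits_per_weight: float) -> float:
    """Upper bound on tokens/second if each token reads all weights once."""
    model_bytes = PARAMS * bits_per_weight / 8
    return BANDWIDTH_BYTES_PER_S / model_bytes


for name, measured in MEASURED_TPS.items():
    ceiling = bandwidth_ceiling_tps(BITS_PER_WEIGHT[name])
    print(f"{name}: measured {measured:.2f}, ceiling ~{ceiling:.1f} tokens/s "
          f"({measured / ceiling:.0%} of ceiling)")

speedup = MEASURED_TPS["Q4_0"] / MEASURED_TPS["Q8_0"]
print(f"Q4_0 vs Q8_0 generation speedup: {speedup:.2f}x")
```

Under these assumptions both measured speeds sit just below their ceilings, which is consistent with generation being limited by memory bandwidth.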

Performance Analysis: Model and Device Comparison

Quantization: A Crucial Factor

The M3 is a powerful chip, but quantization determines how much of that power a 7B model can actually use. In the benchmark above, moving from Q8_0 to Q4_0 raised generation speed from 12.27 to 21.34 tokens/second.

Consider this:

- Imagine trying to read a book written in a foreign language you don't understand very well. It's slow and tedious.

- Now, imagine having a dictionary that translates the text into your native language. It's much faster and easier to understand.

Quantization plays a similar role for the M3, with one caveat: it doesn't translate anything. It stores each weight in fewer bits, so the chip moves less data for every token it generates, at the cost of some precision.

Model and Device Combination: Finding the Perfect Fit

The M3 paired with the Q4_0 configuration of Llama2 7B seems to be a solid choice for running the model locally. This is especially relevant for developers who are looking to build chatbots, code assistants, or other applications that rely on the LLM's ability to generate text.
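To judge whether a configuration fits an application, it helps to turn the two benchmark rates into an end-to-end response time: prompt tokens divided by processing speed, plus output tokens divided by generation speed. The prompt and reply lengths below are illustrative assumptions, and the estimate ignores model load time and other overhead.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Benchmark:
    processing_tps: float  # prompt processing, tokens/second
    generation_tps: float  # text generation, tokens/second


# Rates from the benchmark table in this article (Apple M3).
M3_RESULTS = {
    "Q8_0": Benchmark(processing_tps=187.52, generation_tps=12.27),
    "Q4_0": Benchmark(processing_tps=186.75, generation_tps=21.34),
}


def response_seconds(bench: Benchmark, prompt_tokens: int, output_tokens: int) -> float:
    """Estimated time to read the prompt and then write the reply."""
    return prompt_tokens / bench.processing_tps + output_tokens / bench.generation_tps


# Illustrative chatbot turn: 500-token prompt, 150-token reply (assumption).
for name, bench in M3_RESULTS.items():
    print(f"{name}: ~{response_seconds(bench, 500, 150):.1f} s per reply")
```

For short prompts the generation term dominates, which is why the Q4_0 advantage in generation speed matters more than the nearly identical processing speeds.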

Practical Recommendations: Use Cases and Workarounds

Leveraging the M3's Capabilities

The following are some key use cases that can benefit from the M3's power and the Llama2 7B model:

- Chatbots: Building a conversational AI agent that can provide information or answer questions.

- Code Assistants: Generating code snippets or helping debug existing code.

- Content Creation: Drafting creative content, such as articles, scripts, or poems.

- Text Summarization: Condensing large amounts of text into concise summaries.

- Translation: Translating text between different languages.

Workarounds for the M3: Limitations and Solutions

While the M3 can handle Llama2 7B with quantization, you may encounter limitations. Here are some workarounds to consider:

- Model Pruning: Reduce the size of the model by removing unnecessary connections, which can improve performance and reduce memory usage.

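- Model Optimization Tools: Use an inference runtime like llama.cpp to run quantized models and tune runtime settings such as context size, thread count, and GPU offload for your device. This is tuning how the model runs, not fine-tuning its weights.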

- Cloud-Based Solutions: For highly demanding tasks or larger models, explore cloud-based LLM services that offer more processing power and memory.
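As a sketch of what tuning run settings can look like, the helper below assembles a llama.cpp command line without executing it. It assumes a build that provides the `llama-cli` binary (older builds name it `main`). The model path is hypothetical, and the default values are starting points to experiment with, not recommendations.

```python
import shlex
from typing import List


def build_llama_cli_command(
    model_path: str,
    prompt: str,
    max_new_tokens: int = 128,
    context_size: int = 2048,
    gpu_layers: int = 99,
    threads: int = 4,
) -> List[str]:
    """Assemble llama.cpp CLI arguments; nothing is executed here."""
    return [
        "llama-cli",                  # assumed binary name
        "-m", model_path,             # quantized model file
        "-p", prompt,                 # prompt text
        "-n", str(max_new_tokens),    # number of tokens to generate
        "-c", str(context_size),      # context window in tokens
        "-ngl", str(gpu_layers),      # layers to offload to the GPU
        "-t", str(threads),           # CPU threads
    ]


# Hypothetical model path; replace with the location of your own file.
cmd = build_llama_cli_command(
    "models/llama-2-7b.Q4_0.gguf",
    "Explain quantization in one sentence.",
)
print(shlex.join(cmd))
```

Reducing the context size lowers memory use, which can matter on machines with little unified memory.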

FAQ: Frequently Asked Questions

What are LLMs, and why are they important?

LLMs are complex AI models that can understand and generate human-like text. They're important because they can be applied in many areas, including customer service, content creation, and research.

What is quantization, and how does it affect LLM performance?

Quantization is a technique that reduces the precision of the model's weights, making it smaller and potentially faster. It's like downsampling an image - you lose some detail but gain speed.

What are the benefits of running LLMs locally?

Running LLMs locally provides more control, privacy, and potentially lower latency. You can avoid relying on cloud-based services and their associated costs.

What are the best tools for running LLMs locally?

Popular tools include llama.cpp and Hugging Face Transformers. OpenAI's API, by contrast, is a cloud service and does not run models on your own machine.

Keywords:

Apple M3, Llama2 7B, LLM, Local LLM, Token Generation Speed, Quantization, F16, Q8_0, Q4_0, Performance Analysis, Model Comparison, Use Cases, Workarounds, Chatbots, Code Assistants, Content Creation, Text Summarization, Translation, Model Pruning, Model Optimization, Cloud-Based LLMs