Is Apple M3 Max Powerful Enough for Llama3 8B?

Introduction

The world of large language models (LLMs) is exploding, and with it, the demand for powerful hardware to run these complex neural networks. LLMs like Llama 2 and Llama 3 offer incredible capabilities, from generating creative text formats to answering intricate questions. But can your device handle the computational demands?

This article focuses on a specific question that's on every developer's mind: is the Apple M3 Max powerful enough to run Llama 3 8B efficiently? We'll delve into the performance benchmarks, discuss the factors that affect speed, and provide practical recommendations for various use cases.

Performance Analysis: Token Generation Speed Benchmarks

One of the key metrics for evaluating LLM performance is token generation speed. Think of tokens as the building blocks of text. Faster token generation translates to quicker responses and a more responsive experience.
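As a rough illustration of how such a number can be obtained, the sketch below times a stream of tokens and divides the count by the elapsed time. The `fake_token_stream` generator is a hypothetical stand-in for a real model and is not part of any library; the benchmarks in this article were not necessarily measured this way.

```python
import time
from typing import Iterable, Iterator, Tuple


def measure_generation_tps(token_stream: Iterable[str]) -> Tuple[int, float]:
    """Consume a token stream and return (token_count, tokens_per_second)."""
    start = time.perf_counter()
    count = 0
    for _ in token_stream:
        count += 1
    elapsed = time.perf_counter() - start
    # Guard against a zero-length interval on extremely fast streams.
    tps = count / elapsed if elapsed > 0 else float("inf")
    return count, tps


def fake_token_stream(n_tokens: int, delay_s: float) -> Iterator[str]:
    """Hypothetical stand-in for a model: yields one token every delay_s seconds."""
    for i in range(n_tokens):
        time.sleep(delay_s)
        yield f"tok{i}"


if __name__ == "__main__":
    count, tps = measure_generation_tps(fake_token_stream(20, 0.01))
    print(f"{count} tokens at {tps:.1f} tokens/s")
```

With a real model, the same function could wrap whatever iterator the inference library exposes for streamed output.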

Apple M3 Max and Llama2 7B: A Look at the Numbers

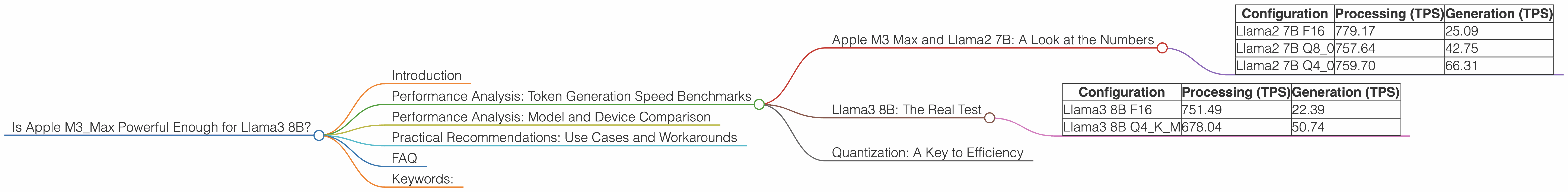

Let's start with a familiar LLM, Llama2 7B, and see how it performs on an M3 Max. The table below reports two speeds in tokens per second (TPS): processing, which is how fast the model reads the input prompt, and generation, which is how fast it produces new output tokens.

| Configuration | Processing (TPS) | Generation (TPS) |
|---|---|---|
| Llama2 7B F16 | 779.17 | 25.09 |
| Llama2 7B Q8_0 | 757.64 | 42.75 |
| Llama2 7B Q4_0 | 759.70 | 66.31 |

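As you can see, the M3 Max handles Llama2 7B with impressive speed, especially in the processing phase. This is due to the powerful GPU cores and high memory bandwidth of this chip: prompt tokens can be processed in parallel, which keeps the GPU busy. Generation is much slower because output tokens are produced one at a time, and each new token requires reading the model weights from memory. That is also why quantization raises generation speed substantially (from 25.09 TPS at F16 to 66.31 TPS at Q4_0) while leaving processing speed almost unchanged.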
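To see what these two numbers mean for a single request, a simple estimate is to add the time spent reading the prompt to the time spent generating the reply. The sketch below uses the Llama2 7B figures from the table; the prompt and output lengths (512 and 256 tokens) are arbitrary assumptions chosen for illustration, and the estimate ignores model load time and other overhead.

```python
def estimate_response_seconds(
    prompt_tokens: int,
    output_tokens: int,
    processing_tps: float,
    generation_tps: float,
) -> float:
    """Estimate end-to-end latency as prompt time plus generation time."""
    prompt_time = prompt_tokens / processing_tps
    generation_time = output_tokens / generation_tps
    return prompt_time + generation_time


# (processing TPS, generation TPS) taken from the Llama2 7B table above.
LLAMA2_7B = {
    "F16": (779.17, 25.09),
    "Q8_0": (757.64, 42.75),
    "Q4_0": (759.70, 66.31),
}

if __name__ == "__main__":
    for name, (processing, generation) in LLAMA2_7B.items():
        seconds = estimate_response_seconds(512, 256, processing, generation)
        print(f"{name}: about {seconds:.1f} s for 512 prompt + 256 output tokens")
```

For typical chat-style requests the generation term dominates, which is why generation TPS matters more than processing TPS for perceived responsiveness.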

Llama3 8B: The Real Test

Now, let's move on to the star of the show: Llama3 8B. With roughly 8 billion parameters instead of 7 billion, plus a much larger vocabulary, this model is somewhat more resource-intensive than Llama2 7B. Here are the processing and generation speeds:

| Configuration | Processing (TPS) | Generation (TPS) |
|---|---|---|
| Llama3 8B F16 | 751.49 | 22.39 |
| Llama3 8B Q4_K_M | 678.04 | 50.74 |

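Llama3 8B also performs well on the M3 Max, though a bit slower than Llama2 7B: at F16, generation drops from 25.09 to 22.39 TPS. The lower generation speed is likely due to the larger parameter count, since there are more weights to read for every generated token. The M3 Max still delivers respectable performance, particularly in the Q4_K_M configuration, which more than doubles generation speed compared with F16 (50.74 vs. 22.39 TPS) at the cost of somewhat slower processing (678.04 vs. 751.49 TPS).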
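One rough way to sanity-check these generation numbers is a memory-bandwidth ceiling: if every generated token requires reading all the weights once, generation speed cannot exceed bandwidth divided by model size. The sketch below rests on assumptions that are not part of the benchmark data: approximate parameter counts of 6.74 billion (Llama2 7B) and 8.03 billion (Llama3 8B), and a memory bandwidth of 400 GB/s, which is the figure for the top M3 Max configuration (the article does not state which variant was tested). It also ignores KV-cache reads and compute time, so it is an upper bound, not a prediction.

```python
def generation_tps_ceiling(
    n_params: float, bytes_per_weight: float, bandwidth_gb_s: float
) -> float:
    """Upper bound on tokens/s if each token reads every weight exactly once."""
    model_bytes = n_params * bytes_per_weight
    return bandwidth_gb_s * 1e9 / model_bytes


# Assumed values (not from the benchmark tables).
ASSUMED_BANDWIDTH_GB_S = 400.0
ASSUMED_PARAMS = {"Llama2 7B": 6.74e9, "Llama3 8B": 8.03e9}
# Measured F16 generation speeds from the tables above.
MEASURED_F16_TPS = {"Llama2 7B": 25.09, "Llama3 8B": 22.39}

if __name__ == "__main__":
    for model, n_params in ASSUMED_PARAMS.items():
        # F16 stores each weight in 2 bytes.
        ceiling = generation_tps_ceiling(n_params, 2.0, ASSUMED_BANDWIDTH_GB_S)
        measured = MEASURED_F16_TPS[model]
        print(f"{model} F16: ceiling {ceiling:.1f} TPS, measured {measured} TPS")
```

Under these assumptions the measured F16 speeds sit slightly below the ceiling for both models, which is consistent with generation being limited mainly by memory bandwidth.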

Quantization: A Key to Efficiency

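Quantization is a technique used to reduce the size of an LLM by using fewer bits to represent its weights. This makes the model less memory-intensive and usually faster at generation. In the tables above, we see F16 (16-bit floating point), Q8_0 (8-bit integers stored in small blocks, each block sharing one scale factor), Q4_0 (the 4-bit counterpart of Q8_0), and Q4_K_M (a 4-bit "k-quant" format in its medium variant, which keeps some tensors at higher precision to preserve quality).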

Think of quantization as a diet plan for your LLM. It sheds those extra bytes (like fat) to make it more efficient.

The key takeaway: Using quantization techniques like Q4_K_M can significantly improve generation speed, especially for larger models like Llama3 8B.
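To make the idea concrete, here is a minimal sketch of block-wise 8-bit quantization in the spirit of Q8_0: weights are split into blocks of 32, and each block stores one scale plus 32 small integers. This is a simplified illustration in pure Python, not the actual llama.cpp implementation; the function names are made up for this example.

```python
from typing import List, Tuple

BLOCK_SIZE = 32  # weights per block, as in the Q8_0 and Q4_0 formats


def quantize_block(weights: List[float]) -> Tuple[float, List[int]]:
    """Map one block of floats to (scale, signed 8-bit integer values)."""
    max_abs = max(abs(w) for w in weights)
    # The largest magnitude in the block maps to 127; an all-zero block uses scale 1.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quants = [max(-127, min(127, round(w / scale))) for w in weights]
    return scale, quants


def dequantize_block(scale: float, quants: List[int]) -> List[float]:
    """Recover approximate floats from a quantized block."""
    return [scale * q for q in quants]


def bits_per_weight(value_bits: int, scale_bits: int = 16,
                    block_size: int = BLOCK_SIZE) -> float:
    """Average storage cost per weight, including the shared per-block scale."""
    return (value_bits * block_size + scale_bits) / block_size


if __name__ == "__main__":
    block = [(i - 16) / 10.0 for i in range(BLOCK_SIZE)]
    scale, quants = quantize_block(block)
    restored = dequantize_block(scale, quants)
    worst = max(abs(a - b) for a, b in zip(block, restored))
    print(f"scale={scale:.5f}, worst-case error={worst:.5f}")
    print(f"8-bit blocks: {bits_per_weight(8)} bits/weight")
    print(f"4-bit blocks: {bits_per_weight(4)} bits/weight")
```

The per-block scale is why these formats cost slightly more than their nominal bit width: 8.5 bits per weight for 8-bit blocks and 4.5 for 4-bit blocks, compared with 16 for F16.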

Performance Analysis: Model and Device Comparison

To understand how the M3 Max stacks up against other devices, we would ideally compare the performance of Llama3 8B across platforms. Unfortunately, we don't have Llama 3 8B data for other devices.

This information gap highlights the need for more comprehensive benchmarking data for various LLM models and devices.

Practical Recommendations: Use Cases and Workarounds

Now let's translate this technical analysis into practical recommendations:

1. Llama2 7B: A reliable workhorse

If you're looking for fast processing and decent generation speed, Llama2 7B on the M3 Max is an excellent choice. It's a great option for general-purpose tasks like text summarization, translation, and creative writing.

2. Llama3 8B: Power through complex tasks

While Llama3 8B may be slower, it's capable of handling more complex tasks like generating code, writing complex articles, and answering challenging questions. If you need greater accuracy and nuanced responses, it's worth the trade-off in speed.

3. Quantization: Your performance booster

Experiment with different quantization configurations to find the sweet spot between speed, output quality, and model size. Q4_K_M, for example, more than doubles generation speed over F16 for Llama3 8B on the M3 Max (50.74 vs. 22.39 TPS).

4. Explore alternative hardware:

If you need even faster generation speeds, consider data-center GPUs such as the NVIDIA A100 or H100. These processors are designed for the most demanding LLM workloads, although we have no benchmark data for them here.

5. Optimization best practices:

Always utilize the latest libraries and optimize your code for performance. Techniques like caching and batching requests can further enhance efficiency.
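As one example of the caching idea, repeated prompts can be answered from memory instead of running the model again. The sketch below uses Python's standard `functools.lru_cache`; `run_model` is a hypothetical placeholder for a slow inference call, not a real library function. Caching like this only makes sense when decoding is deterministic (for example, temperature 0), since otherwise the same prompt may legitimately produce different outputs.

```python
from functools import lru_cache


def run_model(prompt: str) -> str:
    """Hypothetical placeholder for a slow local-inference call."""
    run_model.calls += 1  # count real invocations so cache hits are visible
    return f"response to: {prompt}"


run_model.calls = 0


@lru_cache(maxsize=256)
def cached_generate(prompt: str) -> str:
    """Return a cached response when the exact same prompt was seen before."""
    return run_model(prompt)


if __name__ == "__main__":
    for prompt in ["Summarize this.", "Translate that.", "Summarize this."]:
        cached_generate(prompt)
    print(f"model calls: {run_model.calls}")
    print(cached_generate.cache_info())
```

The `maxsize` value bounds memory use by evicting the least recently used prompts; 256 is an arbitrary choice for this sketch.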

FAQ

Q: What are LLMs, and why are they important?

A: LLMs are large neural networks trained on massive datasets of text and code. They excel at tasks that involve understanding and generating human language, making them crucial in fields like natural language processing, content creation, and software development.

Q: What does "token generation speed" measure?

A: It measures how many tokens (the basic units of text in an LLM) your device can process and generate per second. Faster token generation means quicker responses and a more responsive experience.

Q: What does "quantization" mean in the context of LLMs?

A: It's a method for reducing the size of an LLM by representing its weights using fewer bits. This makes the model smaller, faster, and less memory-intensive.

Q: How can I learn more about LLMs and their use cases?

A: There are many great resources available online, including the official documentation of popular LLM frameworks and articles on websites like Towards Data Science and Analytics Vidhya.

Keywords:

Apple M3 Max, Llama 3 8B, LLM, token generation speed, quantization, Q4_K_M, performance benchmarks, processing, generation, GPU, hardware, use cases, recommendations, optimization, efficiency, AI, natural language processing, deep learning, developers, software, coding.