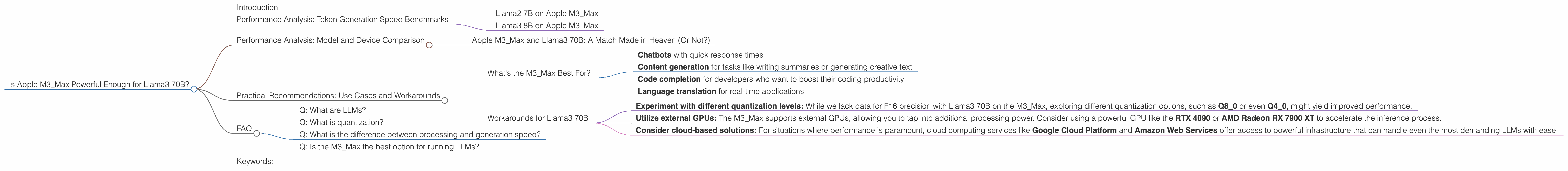

Is Apple M3 Max Powerful Enough for Llama3 70B?

Introduction

The world of large language models (LLMs) is buzzing with excitement, and for good reason. These powerful AI systems have the potential to revolutionize various industries, from content creation to scientific research. But running LLMs locally can be a challenge, because their computational and memory demands call for powerful hardware. In this article, we'll dive into the performance of Apple's M3 Max chip when paired with the formidable Llama3 70B LLM. We'll analyze token generation benchmarks, compare how the device handles different models, and explore practical recommendations for use cases.

Performance Analysis: Token Generation Speed Benchmarks

Llama2 7B on Apple M3 Max

Let's start with a commonly used LLM, Llama2 7B. When running Llama2 7B on the M3 Max, we see promising results. With F16 precision, the M3 Max achieves a token generation speed of 25.09 tokens per second. This increases to 42.75 tokens per second with Q8_0 quantization and reaches 66.31 tokens per second with Q4_0 quantization.

These numbers tell us that the M3 Max can handle Llama2 7B fairly efficiently, especially with quantization. Quantization is like putting the model on a diet: it stores the weights as lower-precision numbers, which shrinks the model and makes it faster to run, at the cost of some accuracy.
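To make the "diet" idea concrete, here is a minimal Python sketch of block-wise absmax quantization. It is an illustration only, loosely in the spirit of formats such as Q8_0, and not the actual llama.cpp implementation. The function names and the sample weights are made up for this example.

```python
def quantize_block(weights, bits=8):
    """Map a block of floats to signed integers sharing one scale factor."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    absmax = max(abs(w) for w in weights)
    scale = absmax / qmax if absmax > 0 else 1.0
    return scale, [round(w / scale) for w in weights]


def dequantize_block(scale, quantized):
    """Recover approximate floats from the stored integers and scale."""
    return [scale * q for q in quantized]


def max_error(weights, bits):
    """Largest absolute difference after a quantize/dequantize round trip."""
    scale, quantized = quantize_block(weights, bits)
    restored = dequantize_block(scale, quantized)
    return max(abs(a - b) for a, b in zip(weights, restored))


# Hypothetical sample weights, not taken from any real model.
sample = [0.12, -0.5, 0.33, 0.9, -0.07, 0.0, -0.81, 0.45]
for bits in (8, 4):
    print(f"{bits}-bit max round-trip error: {max_error(sample, bits):.4f}")
```

Fewer bits mean smaller integers to store and move through memory, which is where the speedup comes from, but the rounding error grows as the bit width shrinks.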

Llama3 8B on Apple M3 Max

Moving on to the slightly larger Llama3 8B model, the M3 Max still holds its own. Using Q4_K_M quantization, the token generation speed is 50.74 tokens per second. With F16 precision, the speed drops to 22.39 tokens per second.

These stats show that the M3 Max can effectively handle Llama3 8B, making it a viable option for tasks requiring a medium-sized LLM.
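The benefit of quantization in the figures above is easier to see as a ratio against the F16 speed. The short Python sketch below does that arithmetic using only the numbers quoted in this article. The `benchmarks` dictionary and the `speedups` helper are names invented for the example.

```python
# Token generation speeds (tokens per second) quoted in this article.
benchmarks = {
    "Llama2 7B": {"F16": 25.09, "Q8_0": 42.75, "Q4_0": 66.31},
    "Llama3 8B": {"F16": 22.39, "Q4_K_M": 50.74},
}


def speedups(results, baseline="F16"):
    """Return each format's speed relative to the baseline format."""
    base = results[baseline]
    return {fmt: tps / base for fmt, tps in results.items() if fmt != baseline}


for model, results in benchmarks.items():
    for fmt, ratio in speedups(results).items():
        print(f"{model} {fmt}: {ratio:.2f}x the F16 speed")
```

On these numbers, Q8_0 is roughly 1.7 times and Q4_0 roughly 2.6 times as fast as F16 for Llama2 7B, and Q4_K_M is about 2.3 times as fast as F16 for Llama3 8B.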

Performance Analysis: Model and Device Comparison

Apple M3 Max and Llama3 70B: A Match Made in Heaven (Or Not?)

Now, let's address the elephant in the room: the Llama3 70B model. This behemoth of an LLM demands a massive amount of processing power and memory. Unfortunately, we do not have data for the performance of the M3 Max with Llama3 70B in F16 precision. However, the data for Q4_K_M quantization shows a token generation speed of 7.53 tokens per second, roughly 6.7 times slower than Llama3 8B at the same quantization (50.74 tokens per second) and far below the Llama2 7B results.

This drastic performance difference suggests that the M3 Max, despite being a powerful chip, may not be the ideal choice for running Llama3 70B, especially if you need fast response times. Think of it this way: using the M3 Max with Llama3 70B might be like trying to fit a jumbo jet into a small garage. It might technically fit, but it's not going to be a comfortable or efficient experience.
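To put the response-time concern into seconds, the sketch below divides a reply length by the measured generation speeds. The 300-token reply is a hypothetical figure chosen for illustration, and the estimate ignores the time spent processing the prompt.

```python
def generation_seconds(output_tokens, tokens_per_second):
    """Time to generate a reply, ignoring prompt processing."""
    return output_tokens / tokens_per_second


# Generation speeds quoted in this article (tokens per second).
speeds = {"Llama3 8B Q4_K_M": 50.74, "Llama3 70B Q4_K_M": 7.53}

REPLY_TOKENS = 300  # assumed reply length, not a measured value

for name, tps in speeds.items():
    print(f"{name}: about {generation_seconds(REPLY_TOKENS, tps):.0f} s")
```

Under that assumption, the 70B model needs about 40 seconds for a reply that the 8B model produces in about 6 seconds.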

Practical Recommendations: Use Cases and Workarounds

What's the M3 Max Best For?

The M3 Max is a solid choice for running smaller LLMs like Llama2 7B and Llama3 8B, especially if you prioritize fast token generation speeds. This makes it an excellent option for developers working on projects that require efficient local inference, including:

- Chatbots with quick response times

- Content generation for tasks like writing summaries or generating creative text

- Code completion for developers who want to boost their coding productivity

- Language translation for real-time applications

Workarounds for Llama3 70B

If you're set on using Llama3 70B on your M3 Max, there are a few workarounds you can try:

- Experiment with different quantization levels: While we lack data for F16 precision with Llama3 70B on the M3 Max, other quantization options change the trade-off between speed and quality. Judging by the Llama2 7B results, Q4_0 might run somewhat faster, while Q8_0 keeps more precision but is likely to be slower and needs more memory.

- Offload to a separate GPU machine: Apple silicon Macs, including those with the M3 Max, do not support external GPUs, so a card like the RTX 4090 or AMD Radeon RX 7900 XT cannot be attached to the Mac directly. If you have access to a PC or server with such GPUs, you can run inference there and connect to it from your Mac.

- Consider cloud-based solutions: For situations where performance is paramount, cloud computing services like Google Cloud Platform and Amazon Web Services offer access to powerful infrastructure that can handle even the most demanding LLMs with ease.
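For the quantization workaround in the list above, a rough memory estimate helps decide which formats are worth trying. The Python sketch below multiplies a parameter count by an approximate number of bits per weight. The bits-per-weight values are approximations (the Q4_K_M figure in particular is a rough average), the 70-billion parameter count is the nominal model size, and `MEMORY_GB` is an assumed machine configuration, not something stated in the benchmarks.

```python
# Approximate bits stored per weight for each format (assumed values).
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_K_M": 4.85, "Q4_0": 4.5}

N_PARAMS = 70e9   # nominal parameter count for a 70B model
MEMORY_GB = 128   # assumed unified memory; the largest M3 Max option


def weight_size_gb(n_params, bits_per_weight):
    """Estimate the size of the model weights in gigabytes."""
    return n_params * bits_per_weight / 8 / 1e9


for fmt, bits in BITS_PER_WEIGHT.items():
    size = weight_size_gb(N_PARAMS, bits)
    verdict = "fits" if size < MEMORY_GB else "does not fit"
    print(f"{fmt}: ~{size:.0f} GB of weights, {verdict} in {MEMORY_GB} GB")
```

The estimate covers weights only. The context cache and the operating system need memory too, so a format that barely fits on paper may still be impractical. It also hints at why F16 numbers for the 70B model are hard to come by: about 140 GB of weights exceeds even the largest M3 Max memory configuration.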

FAQ

Q: What are LLMs?

A: LLMs, or Large Language Models, are a type of artificial intelligence that can understand and generate human-like text. They are trained on massive datasets of text and code, enabling them to perform various tasks like writing, translating, and summarizing information.

Q: What is quantization?

A: Quantization is a technique used to reduce the size of a model by using less precise numbers. Think of it like using a smaller ruler to measure something – you lose some accuracy, but the tool is lighter and easier to use.

Q: What is the difference between processing and generation speed?

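A: Processing speed refers to how quickly the model can read and process the input text (the prompt) before it starts answering. Generation speed measures how fast the model can produce output text, one token at a time. The benchmarks in this article are generation speeds.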
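The two speeds combine into the delay a user actually experiences. The sketch below is a simplified model of that, assuming the prompt is fully processed before the first output token appears. The generation speed is the Llama3 70B figure from this article. The processing speed and token counts are placeholders, because this article does not report prompt processing measurements.

```python
def response_times(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Return (seconds until the first token, seconds until the reply is done)."""
    time_to_first_token = prompt_tokens / processing_tps
    total = time_to_first_token + output_tokens / generation_tps
    return time_to_first_token, total


first, total = response_times(
    prompt_tokens=1000,     # placeholder prompt length
    output_tokens=100,      # placeholder reply length
    processing_tps=100.0,   # placeholder, NOT a measured value
    generation_tps=7.53,    # Llama3 70B Q4_K_M generation speed from this article
)
print(f"first token after {first:.1f} s, full reply after {total:.1f} s")
```

A long prompt mostly affects the wait before the first token, while a long reply mostly affects the time spent generating.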

Q: Is the M3 Max the best option for running LLMs?

A: The M3 Max is a powerful chip, but its performance depends on the specific LLM you're using. For smaller LLMs, it's a great choice. For larger LLMs like Llama3 70B, you might need to consider alternative solutions, such as a separate machine with dedicated GPUs or cloud-based services.

Keywords:

Llama3 70B, Apple M3 Max, LLM, Large Language Model, token generation speed, performance, quantization, F16, Q4_K_M, Q8_0, Q4_0, model, device, benchmark, use case, chatbot, content generation, code completion, language translation, external GPUs, cloud computing, Google Cloud Platform, Amazon Web Services.