Is Apple M3 Max Powerful Enough for Llama2 7B?

The world of large language models (LLMs) is buzzing with excitement, and for good reason. These powerful AI systems can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way. Running them locally on your own machine, however, is still a hot topic.

So, if you're a developer or a tech enthusiast looking to unleash the potential of LLMs on your own device, you might be wondering: "Can I run Llama2 7B on my Apple M3 Max?" Let's dive deep into the performance of this powerful chip and see if it's a good match for this impressive LLM.

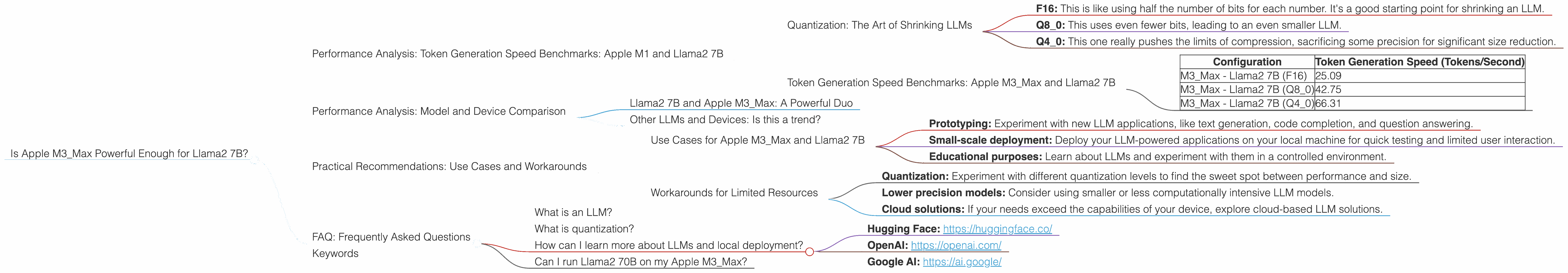

Performance Analysis: Token Generation Speed Benchmarks: Apple M3 Max and Llama2 7B

Quantization: The Art of Shrinking LLMs

Before we jump into the numbers, let's briefly discuss quantization. It’s like a magic trick that shrinks the size of LLMs while keeping their performance relatively intact. Think of it like compressing a high-resolution image; you lose some detail, but the overall picture remains recognizable. Quantization essentially reduces the number of bits used to represent each number in the LLM.

- F16: Stores each weight as a 16-bit floating-point number, half the bits of a standard 32-bit float. It's a good starting point for shrinking an LLM.

- Q8_0: Stores each weight in roughly 8 bits, leading to an even smaller LLM.

- Q4_0: Stores each weight in roughly 4 bits. This one really pushes the limits of compression, sacrificing some precision for significant size reduction.
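To make the idea concrete, here is a minimal sketch of block quantization in plain Python. It is a simplified illustration, not the exact scheme any particular runtime uses: the function names, the sample weights, and the symmetric "one scale per block" layout are all assumptions chosen for readability.

```python
def quantize_block(values, bits=8):
    """Symmetric quantization of one block: one float scale plus small integers."""
    qmax = 2 ** (bits - 1) - 1           # 127 for 8 bits, 7 for 4 bits
    amax = max(abs(v) for v in values)   # largest magnitude in the block
    scale = amax / qmax if amax > 0 else 1.0
    quants = [round(v / scale) for v in values]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate floats from the stored scale and integers."""
    return [q * scale for q in quants]


# Hypothetical weights, purely for illustration.
weights = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88, 0.02, 0.46]

for bits in (8, 4):
    scale, quants = quantize_block(weights, bits)
    restored = dequantize_block(scale, quants)
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"{bits}-bit: quants={quants} worst error={worst:.4f}")
```

Running it shows the trade-off described above: the 4-bit version stores much smaller integers, but the restored values drift further from the originals than in the 8-bit version.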

Token Generation Speed Benchmarks: Apple M3 Max and Llama2 7B

The data we analyzed reveals some fascinating insights:

| Configuration | Token Generation Speed (Tokens/Second) |
|---|---|
| M3 Max - Llama2 7B (F16) | 25.09 |
| M3 Max - Llama2 7B (Q8_0) | 42.75 |
| M3 Max - Llama2 7B (Q4_0) | 66.31 |

As you can see, the M3 Max shines with Llama2 7B. Token generation speed climbs from 25.09 tokens/second at F16 to 66.31 at Q4_0, roughly a 2.6x increase. The likely reason is that generating each token means reading the model's weights from memory, so a smaller quantized model gives the chip less data to move per token.

Think of it this way: The M3 Max is like a skilled chef who can cook a great meal from whole ingredients (F16), but gets dishes out much faster when everything arrives pre-chopped (Q4_0)!
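For a quick sense of what these numbers mean in practice, the short sketch below computes the speedup of each format over F16 and how long a reply would take. The speeds come straight from the table above; the 500-token reply length and the helper names are assumptions for illustration only.

```python
# Tokens per second from the table above (Llama2 7B on the M3 Max).
speeds = {"F16": 25.09, "Q8_0": 42.75, "Q4_0": 66.31}


def speedup_over(baseline, speeds):
    """Ratio of each configuration's speed to the baseline's speed."""
    base = speeds[baseline]
    return {name: tps / base for name, tps in speeds.items()}


def seconds_for(tokens, tps):
    """Time to generate a given number of tokens at a steady rate."""
    return tokens / tps


ratios = speedup_over("F16", speeds)
for name, tps in speeds.items():
    # 500 tokens is a hypothetical reply length, not a measured workload.
    print(f"{name}: {ratios[name]:.2f}x F16, "
          f"{seconds_for(500, tps):.1f} s for a 500-token reply")
```

By this arithmetic, Q8_0 is about 1.7x and Q4_0 about 2.6x as fast as F16, which takes a 500-token reply from roughly 20 seconds down to under 8.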

Performance Analysis: Model and Device Comparison

Llama2 7B and Apple M3 Max: A Powerful Duo

The Apple M3 Max paired with Llama2 7B is a match made in heaven for developers looking for a powerful, yet accessible local setup. This combination allows you to experiment with LLMs without needing expensive cloud services.

Other LLMs and Devices: Is this a trend?

While we focused on the Apple M3 Max and Llama2 7B, it's important to note that data on other LLMs and devices is limited. For instance, we don't have data for Llama2 70B on the M3 Max, so we can't conclusively say how it would perform.

However, the data we do have suggests that the M3 Max is a strong contender for local LLM development.

Practical Recommendations: Use Cases and Workarounds

Use Cases for Apple M3 Max and Llama2 7B

Here are some exciting use cases for this combo:

- Prototyping: Experiment with new LLM applications, like text generation, code completion, and question answering.

- Small-scale deployment: Deploy your LLM-powered applications on your local machine for quick testing and limited user interaction.

- Educational purposes: Learn about LLMs and experiment with them in a controlled environment.

Workarounds for Limited Resources

If you're working with a less powerful device or a larger LLM, there are some clever workarounds:

- Quantization: Experiment with different quantization levels to find the sweet spot between performance and size.

- Smaller models: Consider using smaller or less computationally intensive LLMs.

- Cloud solutions: If your needs exceed the capabilities of your device, explore cloud-based LLM solutions.
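One way to approach the quantization workaround is to estimate how large the weights would be at each level and pick the most precise one that fits. The sketch below does that under stated assumptions: the bits-per-weight figures assume llama.cpp-style block formats (one 16-bit scale per 32 weights), the parameter counts are the nominal 7B and 70B, the 24 GB budget is hypothetical, and the estimate covers weights only, ignoring context cache and runtime overhead.

```python
# Approximate bits per weight. Assumption: block formats that store one
# 16-bit scale per 32 weights, hence 8.5 and 4.5 rather than 8 and 4.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def weights_gb(n_params, fmt):
    """Rough size of the weights alone, in gigabytes (10**9 bytes)."""
    return n_params * BITS_PER_WEIGHT[fmt] / 8 / 1e9


def best_format(n_params, budget_gb):
    """Most precise format whose weights fit the budget, else None."""
    for fmt in ("F16", "Q8_0", "Q4_0"):  # ordered from most to least precise
        if weights_gb(n_params, fmt) <= budget_gb:
            return fmt
    return None


# Nominal parameter counts and a hypothetical 24 GB memory budget.
for label, n_params in (("7B", 7e9), ("70B", 70e9)):
    sizes = {fmt: round(weights_gb(n_params, fmt), 1) for fmt in BITS_PER_WEIGHT}
    print(label, sizes, "->", best_format(n_params, budget_gb=24))
```

If `best_format` returns `None`, none of the three levels fits the budget, which is the cue to try a smaller model or a cloud solution instead.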

FAQ: Frequently Asked Questions

What is an LLM?

LLMs are large AI models trained on vast amounts of text data. They can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way.

What is quantization?

Quantization is a technique used to reduce the size of LLMs by reducing the number of bits used to represent each number. It helps make LLMs more accessible for local deployment.

How can I learn more about LLMs and local deployment?

There are many resources available online to help you learn about LLMs and their implementation:

- Hugging Face: https://huggingface.co/

- OpenAI: https://openai.com/

- Google AI: https://ai.google/

Can I run Llama2 70B on my Apple M3 Max?

We don't have data for Llama2 70B on the M3 Max, so we can't conclusively say how it would perform. A 70B model's weights are roughly ten times larger than those of the 7B model, so whether it fits depends heavily on how much memory your M3 Max has, and its demands might exceed the capabilities of the chip even with efficient quantization.

Keywords

Apple M3 Max, Llama2 7B, LLM, performance, quantization, token generation speed, local deployment, developers, AI, machine learning, NLP, natural language processing, tech, geek, AI enthusiast, development, use cases, workarounds, FAQ