Is Apple M3 Max Good Enough for AI Development?

Introduction: The Rise of Local LLMs and the Significance of Powerful Hardware

The world of Artificial Intelligence (AI) is buzzing with excitement about Large Language Models (LLMs) like ChatGPT and Bard. These models can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But what if you could run these powerful models directly on your own computer? Enter the realm of local LLMs, where you harness the power of your device to unleash AI magic without relying on cloud services.

Here's the catch: running LLMs locally requires serious computational muscle. That's where powerful hardware comes in, and the Apple M3 Max chip is a prime contender. But is it up to the task of handling the demands of AI development? Let's dive into the details and see how this powerful chip performs in the world of local LLMs.

Apple M3 Max: A Chip Designed for Performance

The Apple M3 Max is a beastly chip designed for demanding tasks like video editing, 3D rendering, and, yes, AI development. It offers up to 16 CPU cores and 40 GPU cores, up to 128 GB of unified memory shared between the CPU and GPU, and a dedicated 16-core Neural Engine for accelerating machine learning workloads.

But can these specs translate into real-world AI performance? We'll focus on how the M3 Max runs models from the Llama 2 and Llama 3 families, mainly Llama 2 7B and Llama 3 8B, with Llama 3 70B as a stress test. The smaller models are popular for local use because they offer a good balance of capability and size.

M3 Max Token Speed: A Deep Dive into Performance Metrics

To understand how well the M3 Max handles local LLMs, we'll look at token speed: how many individual units of text (tokens) the chip can handle per second. Two numbers matter. Processing speed is how fast the model reads your prompt, and generation speed is how fast it writes the reply. Higher token speed means faster model execution and faster results.
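To make the metric concrete, here is a minimal sketch of how a tokens-per-second figure can be computed. The helper names `tokens_per_second` and `measure_generation_speed` are hypothetical, and `generate` stands in for whatever local model call you use; it is assumed to return the list of generated tokens.

```python
import time


def tokens_per_second(token_count: int, elapsed_seconds: float) -> float:
    """Token speed is simply tokens handled divided by wall-clock time."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return token_count / elapsed_seconds


def measure_generation_speed(generate, prompt: str) -> float:
    """Time one call to `generate` (a stand-in for a real model call).

    `generate` is assumed to take a prompt string and return a list of tokens.
    """
    start = time.perf_counter()
    tokens = generate(prompt)
    elapsed = time.perf_counter() - start
    return tokens_per_second(len(tokens), elapsed)
```

For example, 500 tokens produced in 10 seconds works out to 50 tokens per second. Real benchmarks usually average several runs and report prompt processing and generation separately.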

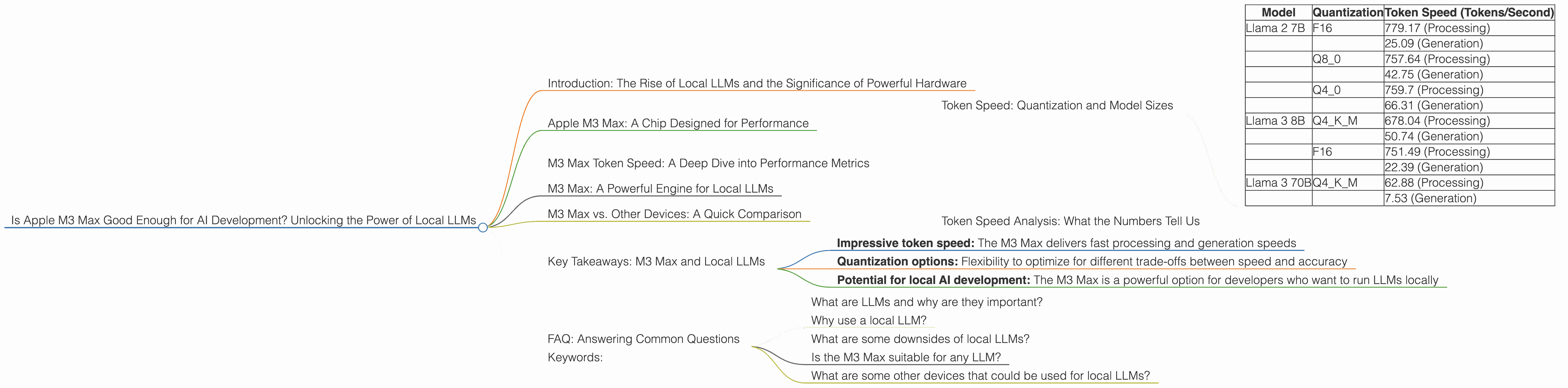

Token Speed: Quantization and Model Sizes

There are a few factors that influence token speed:

- Model Size: Larger models, like Llama 3 70B, require more resources and can take longer to process than smaller models like Llama 2 7B.

- Quantization: This technique reduces the size of the model by using fewer bits to represent each weight. This can speed up generation but can also slightly reduce the accuracy of the model. Think of it like compressing a photo: you save space, but you might lose some visual detail.
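A rough way to see how both factors interact is to estimate the size of the weights as parameters times bits per weight. The sketch below assumes nominal parameter counts (7 billion for a "7B" model) and bits-per-weight values for the block formats commonly used for these quantization labels (32 weights sharing one 16-bit scale, giving 8.5 and 4.5 bits). Treat both as assumptions; real files also carry some extra overhead.

```python
# Assumed bits per weight, including per-block scale overhead for Q8_0 and Q4_0.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def weight_size_gb(params_billions: float, quantization: str) -> float:
    """Approximate weight size in GB (1 GB = 1e9 bytes)."""
    bits = BITS_PER_WEIGHT[quantization]
    # billions of parameters * bits / 8 bits-per-byte = billions of bytes
    return params_billions * bits / 8


if __name__ == "__main__":
    for quant in BITS_PER_WEIGHT:
        print(f"7B at {quant}: ~{weight_size_gb(7, quant):.1f} GB")
```

Under these assumptions a 7B model shrinks from about 14 GB at F16 to roughly 4 GB at Q4_0, which is why quantized models are so much easier to fit in memory.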

Let's look at the token speed of the M3 Max for different Llama models in our handy table:

| Model | Quantization | Processing (Tokens/Second) | Generation (Tokens/Second) |
|---|---|---|---|
| Llama 2 7B | F16 | 779.17 | 25.09 |
| Llama 2 7B | Q8_0 | 757.64 | 42.75 |
| Llama 2 7B | Q4_0 | 759.7 | 66.31 |
| Llama 3 8B | Q4_K_M | 678.04 | 50.74 |
| Llama 3 8B | F16 | 751.49 | 22.39 |
| Llama 3 70B | Q4_K_M | 62.88 | 7.53 |

Note: No data was available for Llama 3 70B at F16 precision on the M3 Max.
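To compare quantization levels directly, the figures from the table can be loaded into a small data structure and the generation speedup over F16 computed for each model. This is only a sketch over the numbers reported above; the function name `generation_speedup_vs_f16` is made up for illustration.

```python
# (model, quantization, processing tokens/s, generation tokens/s), from the table
BENCHMARKS = [
    ("Llama 2 7B", "F16", 779.17, 25.09),
    ("Llama 2 7B", "Q8_0", 757.64, 42.75),
    ("Llama 2 7B", "Q4_0", 759.7, 66.31),
    ("Llama 3 8B", "Q4_K_M", 678.04, 50.74),
    ("Llama 3 8B", "F16", 751.49, 22.39),
    ("Llama 3 70B", "Q4_K_M", 62.88, 7.53),
]


def generation_speedup_vs_f16(rows):
    """Return {(model, quantization): speedup} relative to the same model at F16.

    Models without an F16 row (Llama 3 70B here) are skipped.
    """
    baseline = {model: gen for model, quant, _, gen in rows if quant == "F16"}
    speedups = {}
    for model, quant, _, gen in rows:
        if quant != "F16" and model in baseline:
            speedups[(model, quant)] = gen / baseline[model]
    return speedups


if __name__ == "__main__":
    for (model, quant), factor in generation_speedup_vs_f16(BENCHMARKS).items():
        print(f"{model} {quant}: {factor:.2f}x faster generation than F16")
```

On these numbers, 4-bit quantization roughly doubles generation speed or better (about 2.6x for Llama 2 7B and 2.3x for Llama 3 8B), while processing speed barely changes.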

Token Speed Analysis: What the Numbers Tell Us

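As you can see, the M3 Max delivers impressive token speeds, especially for the smaller models. Prompt processing is fastest at F16 (779.17 tokens/second for Llama 2 7B), but generation, which determines how quickly a reply appears, is fastest with Q4_0 (66.31 tokens/second) and slowest at F16 (25.09 tokens/second). The quantized 7B and 8B models generate text well above typical reading speed, making them suitable for real-time applications like chatbots and interactive AI. Llama 3 70B, at 7.53 tokens/second, is usable but noticeably slower.

Let's put the M3 Max's token speed into perspective: imagine a book of 1 million tokens. At 779.17 tokens/second, the M3 Max would need about 21 minutes just to read it as a prompt (ignoring context-window limits), and generating that much text at 66.31 tokens/second would take a little over four hours. For everyday prompts of a few hundred tokens, though, processing takes well under a second.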
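What these rates mean for a chat-style exchange can be estimated by adding the time to read the prompt to the time to write the reply. The sketch below uses the processing and generation rates from the table and assumes they stay constant regardless of prompt length, with the model already loaded; the function name and the 500/200 token counts are illustrative only.

```python
def response_time_seconds(prompt_tokens: int, output_tokens: int,
                          processing_tps: float, generation_tps: float) -> float:
    """Estimated time to read the prompt plus time to generate the reply."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


if __name__ == "__main__":
    # Hypothetical exchange: 500-token prompt, 200-token reply.
    cases = {
        "Llama 2 7B Q4_0": (759.7, 66.31),
        "Llama 2 7B F16": (779.17, 25.09),
        "Llama 3 70B Q4_K_M": (62.88, 7.53),
    }
    for name, (proc, gen) in cases.items():
        seconds = response_time_seconds(500, 200, proc, gen)
        print(f"{name}: ~{seconds:.1f} s")
```

Because generation is far slower than processing, the reply length dominates the total, which is why the quantized models feel so much snappier in interactive use.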

M3 Max: A Powerful Engine for Local LLMs

The M3 Max shows its potential as a powerful tool for local LLM development. Its impressive token speed and the availability of different quantization options provide flexibility for different use cases.

M3 Max vs. Other Devices: A Quick Comparison

We're not directly comparing the M3 Max to other devices in this article, so the numbers above can't tell you how it ranks against GPUs from NVIDIA or Intel. What does stand out is its large pool of unified memory, which lets it load models that would not fit in the memory of many consumer graphics cards.

Key Takeaways: M3 Max and Local LLMs

- Impressive token speed: The M3 Max delivers fast processing and generation speeds

- Quantization options: Flexibility to optimize for different trade-offs between speed and accuracy

- Potential for local AI development: The M3 Max is a powerful option for developers who want to run LLMs locally

FAQ: Answering Common Questions

What are LLMs and why are they important?

LLMs, or Large Language Models, are advanced AI models capable of understanding and generating text, making them useful for various tasks like chatbots, text summarization, translation, and creative writing.

Why use a local LLM?

Local LLMs offer advantages like privacy, reduced latency, and greater control over your data. They enable running LLMs on your own device without relying on internet connectivity or cloud services.

What are some downsides of local LLMs?

Local LLMs often require powerful hardware and can be resource-intensive. Also, you might need to manage updates and maintenance yourself compared to using cloud services.

Is the M3 Max suitable for any LLM?

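The M3 Max is suitable for running various LLMs, including popular models like Llama 2 and Llama 3. However, larger models need more memory and run more slowly: in the table above, Llama 3 70B at Q4_K_M generates 7.53 tokens/second, compared with 50.74 tokens/second for Llama 3 8B at the same quantization.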
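A quick way to judge whether a given model is realistic is to compare its estimated weight size with the memory configurations the M3 Max ships in. The sketch below is built on assumptions: the `usable_fraction` of 0.75 (memory left for the model after the OS and other apps) and the bits-per-weight values are rough guesses, and the function names are hypothetical.

```python
# Unified memory configurations offered for the M3 Max, in GB.
M3_MAX_MEMORY_OPTIONS_GB = (36, 48, 64, 96, 128)


def weights_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate weight size in GB (1 GB = 1e9 bytes)."""
    return params_billions * bits_per_weight / 8


def smallest_fitting_config(params_billions: float, bits_per_weight: float,
                            usable_fraction: float = 0.75):
    """Return the smallest memory option that holds the weights, or None.

    usable_fraction is an assumed share of memory available to the model.
    """
    needed = weights_gb(params_billions, bits_per_weight)
    for total_gb in M3_MAX_MEMORY_OPTIONS_GB:
        if needed <= total_gb * usable_fraction:
            return total_gb
    return None


if __name__ == "__main__":
    print(smallest_fitting_config(8, 16))   # 8B at F16
    print(smallest_fitting_config(70, 5))   # 70B at roughly 5 bits (assumed)
    print(smallest_fitting_config(70, 16))  # 70B at F16
```

Under these assumptions, a 70B model at F16 needs about 140 GB for its weights alone, more than the largest 128 GB configuration. That is one plausible reason no F16 result exists for Llama 3 70B, though the source does not say so.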

What are some other devices that could be used for local LLMs?

Various hardware options exist for running LLMs locally, including GPUs from NVIDIA and Intel, as well as other Apple chips like the M1 and M2.
