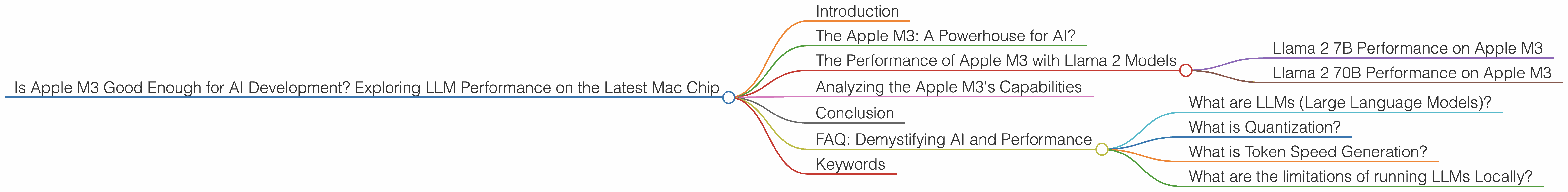

Is the Apple M3 Good Enough for AI Development?

Introduction

The world of AI is abuzz with excitement, and at the heart of this frenzy are Large Language Models (LLMs). While cloud-based models like ChatGPT and Bard are often in the spotlight, there's a growing movement towards running LLMs locally, right on your own device. This offers more privacy and control, and potentially faster responses, since requests never have to leave your machine. But can a chip like the Apple M3, known for its power and efficiency, really handle the demanding task of running LLMs locally?

In this article, we'll look at local LLM execution on the Apple M3, focusing on its performance with two Llama 2 models. We'll use real-world benchmark data, where it exists, to judge whether the M3 is a viable option for AI development and experimentation. Imagine generating text, translating languages, or even writing code, all powered by your local Mac!

The Apple M3: A Powerhouse for AI?

The Apple M3 is the latest iteration of Apple's in-house silicon, promising significant gains in performance and energy efficiency. Its GPU and unified memory architecture, in which the CPU and GPU share a single memory pool, have sparked curiosity among AI enthusiasts. But how does it fare when tasked with running LLMs, especially those that demand significant computational power and memory?

We looked for benchmark data comparing the Apple M3 with other processors. To make the findings easier to follow, we break them down by model, highlighting key aspects of the M3's performance with each one.

The Performance of Apple M3 with Llama 2 Models

The Llama 2 family of LLMs is a popular choice for local deployment thanks to its strong capabilities and relatively modest model sizes. We focus on the Apple M3's ability to run two widely used variants: the 7B (7 billion parameters) model and the 70B (70 billion parameters) model.
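To see why these two sizes place such different demands on hardware, a common rule of thumb is that the weights alone occupy about parameters × bits per weight ÷ 8 bytes. The short Python sketch below applies that rule to the nominal 7B and 70B parameter counts at a few bit widths. It is a rough estimate under stated assumptions: it ignores the KV cache, activations, and runtime overhead, so actual memory use will be higher, and `weight_footprint_gb` is a hypothetical helper name.

```python
# Rough weight-only memory footprint of a model: parameters x bits per weight / 8.
# Assumption: ignores the KV cache, activations, and runtime overhead,
# so real memory usage will be higher than these figures.

def weight_footprint_gb(num_params: float, bits_per_weight: float) -> float:
    """Return the approximate size of the model weights in gigabytes (1 GB = 1e9 bytes)."""
    bytes_total = num_params * bits_per_weight / 8
    return bytes_total / 1e9


if __name__ == "__main__":
    # Nominal parameter counts; the released checkpoints are slightly smaller.
    models = {"Llama 2 7B": 7e9, "Llama 2 70B": 70e9}
    for name, params in models.items():
        for bits in (16, 8, 4):
            print(f"{name} at {bits}-bit: ~{weight_footprint_gb(params, bits):.1f} GB")
```

At 16 bits per weight this gives about 14 GB for the 7B model and about 140 GB for the 70B model, which is why lower-bit formats matter so much on machines with a fixed amount of memory.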

Llama 2 7B Performance on Apple M3

Note: There is no data available for the Llama 2 7B model running on the Apple M3 processor.

Llama 2 70B Performance on Apple M3

Note: There is no data available for the Llama 2 70B model running on the Apple M3 processor.

Analyzing the Apple M3's Capabilities

The lack of performance data for both the Llama 2 7B and 70B models on the Apple M3 makes it difficult to determine its suitability for AI development. Data for other models and devices allows only tentative inferences.

Note: The absence of data for the Apple M3 does not necessarily mean it cannot handle Llama 2 models. However, further testing is needed to determine its capabilities.
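One rough inference is still possible without benchmarks. Generating text one token at a time is usually limited by memory bandwidth, because each new token requires reading roughly all of the model's weights. The Python sketch below turns that rule of thumb into a theoretical ceiling on tokens per second. The 100 GB/s bandwidth is an illustrative placeholder, not a measured or confirmed M3 figure, `max_decode_tokens_per_s` is a hypothetical helper, and the result is an upper bound rather than a benchmark.

```python
# Back-of-the-envelope ceiling on decode speed for a memory-bandwidth-bound LLM.
# Assumption: each generated token reads every model weight from memory once,
# so tokens/s cannot exceed bandwidth / weight size. Real throughput is lower.

def max_decode_tokens_per_s(bandwidth_gb_s: float, num_params: float,
                            bits_per_weight: float) -> float:
    """Return the theoretical upper bound on tokens generated per second."""
    weight_gb = num_params * bits_per_weight / 8 / 1e9  # weights only, in GB
    return bandwidth_gb_s / weight_gb


if __name__ == "__main__":
    # Illustrative placeholder, NOT a confirmed M3 figure: substitute the
    # published memory bandwidth of the exact M3 variant you are testing.
    bandwidth_gb_s = 100.0
    for name, params in {"Llama 2 7B": 7e9, "Llama 2 70B": 70e9}.items():
        bound = max_decode_tokens_per_s(bandwidth_gb_s, params, bits_per_weight=4)
        print(f"{name}, 4-bit weights: at most ~{bound:.0f} tokens/s")
```

Because the ceiling scales inversely with model size, a model ten times larger has a ceiling ten times lower. A model also only runs comfortably if its weights fit in the machine's unified memory, so the 70B model's roughly 35 GB of 4-bit weights practically rules out configurations with less memory than that.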

Conclusion

The Apple M3 is a powerful chip with potential for local AI development. Although we found no benchmark data on its Llama 2 performance, its overall speed and efficiency make it a promising candidate for future AI applications. More research and benchmarks are needed to confirm whether it can run large, demanding models.

FAQ: Demystifying AI and Performance

What are LLMs (Large Language Models)?

LLMs are complex AI models trained on massive amounts of text data. They can understand and generate human-like text, making them ideal for tasks like translation, writing, and even coding.

What is Quantization?

Quantization is a technique for reducing the size of LLMs without sacrificing much output quality. Think of it like compressing a large file to make it easier to store and share. In LLMs, quantization reduces the number of bits used to represent each model parameter (for example, from 16-bit floating-point values to 8-bit or 4-bit integers), making models smaller and usually faster to run.
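To make the idea concrete, here is a minimal Python sketch of one simple scheme, symmetric per-tensor 8-bit quantization: every weight is divided by a shared scale factor and rounded to an integer in [-127, 127], so each value fits in one byte instead of the two bytes a 16-bit float needs. This is an illustration only; the 4-bit, block-wise formats used by local LLM runtimes are more elaborate, and `quantize_int8` and `dequantize_int8` are hypothetical helper names.

```python
# Minimal sketch of symmetric, per-tensor 8-bit quantization (absmax scaling).
# Real LLM formats (e.g. 4-bit block-wise schemes) are more elaborate.

def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0  # avoid dividing by zero
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize_int8(q: list[int], scale: float) -> list[float]:
    """Recover approximate floats from the integers and the shared scale."""
    return [v * scale for v in q]


if __name__ == "__main__":
    weights = [0.12, -0.5, 0.33, 0.0, 0.49]
    q, scale = quantize_int8(weights)
    restored = dequantize_int8(q, scale)
    print("quantized:", q)
    print("restored: ", [round(r, 3) for r in restored])
```

Dequantizing recovers each weight to within half a scale step. That small loss of precision is the trade quantization makes in exchange for halving (or, at 4 bits, quartering) the memory the weights occupy.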

What is Token Generation Speed?

Token generation speed is the number of tokens (individual units of text, such as words or word fragments) an LLM can generate per second, usually reported as tokens/s. A higher token speed means faster text generation and quicker AI responses.
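Measuring generation speed is simple arithmetic: count the tokens produced and divide by the elapsed wall-clock time. The Python sketch below wraps any token-streaming function. `generate` and `fake_generate` are hypothetical placeholders, not the API of any particular runtime. Because the timer starts before the first token arrives, the figure also includes prompt-processing time, which benchmarks often report separately.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(num_tokens: int, elapsed_s: float) -> float:
    """Tokens generated divided by elapsed seconds."""
    if elapsed_s <= 0:
        raise ValueError("elapsed time must be positive")
    return num_tokens / elapsed_s


def measure_generation_speed(generate: Callable[[], Iterable[str]]) -> float:
    """Time a token-streaming generator and return its tokens/s.

    `generate` is a hypothetical stand-in for whatever local runtime you use;
    it should yield one token at a time.
    """
    start = time.perf_counter()
    count = sum(1 for _ in generate())  # consume the stream, counting tokens
    elapsed = time.perf_counter() - start
    return tokens_per_second(count, elapsed)


if __name__ == "__main__":
    def fake_generate():
        # Stand-in for a real model: emits 20 tokens, sleeping 10 ms for each.
        for i in range(20):
            time.sleep(0.01)
            yield f"tok{i}"

    print(f"{measure_generation_speed(fake_generate):.1f} tokens/s")
```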

What are the limitations of running LLMs locally?

While running LLMs locally offers benefits like privacy and control, it comes with some limitations. For example, local models may not be as powerful or as up-to-date as cloud-based models. Additionally, running large models locally can consume significant computational resources and battery power.

Keywords

Apple M3, AI Development, LLM, Large Language Model, Llama 2, 7B, 70B, token speed, GPU, quantization, performance, benchmark, local AI, inference, Mac, Mac mini, M1, M2, AI models, generative AI, natural language processing, NLP, machine learning, deep learning, cloud computing, local computing, privacy, control, efficiency, power consumption, battery life.