Is Apple M2 Ultra Powerful Enough for Llama3 8B?

Introduction

The world of large language models (LLMs) is exploding, with new models constantly emerging and pushing the boundaries of what's possible. One of the latest contenders is Llama3 8B, a capable and relatively compact model released by Meta. But can your hardware keep up? In this deep dive, we'll explore how Llama3 8B performs on the Apple M2 Ultra chip, looking at both its capabilities and its limitations.

This article will be a goldmine for developers and enthusiasts who are eager to experiment with LLMs in real-world applications. We'll decode performance benchmarks, compare different model and device configurations, and provide practical recommendations for using Llama3 8B effectively on the M2 Ultra.

Performance Analysis: Token Generation Speed Benchmarks

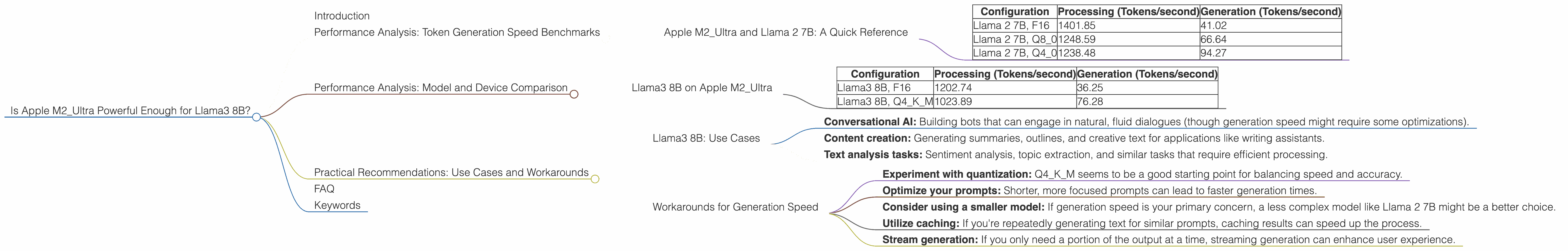

Apple M2 Ultra and Llama 2 7B: A Quick Reference

Before we dive into Llama3 8B, let's take a peek at the performance of the M2 Ultra with Llama 2 7B. This will set the stage for our analysis and highlight the differences when we move to the larger Llama3 8B model.

| Configuration | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|
| Llama 2 7B, F16 | 1401.85 | 41.02 |
| Llama 2 7B, Q8_0 | 1248.59 | 66.64 |
| Llama 2 7B, Q4_0 | 1238.48 | 94.27 |

Key Takeaways:

- Processing speed refers to how fast the model ingests input text (the prompt). The M2 Ultra processes about 1,400 tokens per second for Llama 2 7B in F16 precision.

- Generation speed, on the other hand, measures how fast the model produces output text, one token at a time. This speed is far lower, at only 41.02 tokens per second for the same configuration.

- Quantization (Q8_0 and Q4_0) reduces the model's size and memory requirements. In these benchmarks it raises generation speed substantially (from 41.02 to 94.27 tokens per second with Q4_0) while lowering processing speed by roughly 11 to 12%.
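To make the takeaways concrete, here is a small sketch that expresses each configuration's throughput as a multiple of the F16 figure. The numbers are copied from the table; the dictionary layout and the helper name `relative_to_f16` are just illustrative choices.

```python
# Throughput figures copied from the Llama 2 7B table (tokens/second).
llama2_7b = {
    "F16": {"processing": 1401.85, "generation": 41.02},
    "Q8_0": {"processing": 1248.59, "generation": 66.64},
    "Q4_0": {"processing": 1238.48, "generation": 94.27},
}


def relative_to_f16(results, metric):
    """Return each configuration's throughput as a multiple of the F16 figure."""
    baseline = results["F16"][metric]
    return {name: round(values[metric] / baseline, 2) for name, values in results.items()}


generation_ratio = relative_to_f16(llama2_7b, "generation")
processing_ratio = relative_to_f16(llama2_7b, "processing")

for name in llama2_7b:
    print(f"{name}: generation x{generation_ratio[name]}, processing x{processing_ratio[name]}")
```

On these figures, Q4_0 generates about 2.3 times as fast as F16 while processing at about 0.88 times the F16 rate.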

Performance Analysis: Model and Device Comparison

Llama3 8B on Apple M2 Ultra

Now, let's get to the heart of the matter: Llama3 8B on the Apple M2 Ultra.

| Configuration | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|
| Llama3 8B, F16 | 1202.74 | 36.25 |
| Llama3 8B, Q4_K_M | 1023.89 | 76.28 |

Observations:

- The M2 Ultra can handle Llama3 8B reasonably well.

- In F16 precision, Llama3 8B is about 14% slower than Llama 2 7B at processing (1202.74 vs. 1401.85 tokens per second) and about 12% slower at generation (36.25 vs. 41.02), likely due to the increased model size and complexity.

- Quantization (Q4_K_M) looks like the better choice for Llama3 8B: generation speed roughly doubles compared to F16 (76.28 vs. 36.25 tokens per second), while processing speed drops by about 15%.

Think of it like this:

- Processing speed is like a fast car on the highway: It can process text quickly and smoothly.

- Generation speed is like a slow-moving train: It takes time to generate output, even with a powerful engine.
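The two speeds combine into a rough response time. The sketch below assumes a deliberately simple model: total time is prompt tokens divided by processing speed plus output tokens divided by generation speed. It ignores model loading, sampling overhead, and any slowdown at long context lengths, and the 1,000-token prompt with a 250-token reply is a hypothetical workload, not a measured one.

```python
def estimate_latency_seconds(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Estimate end-to-end time as prompt processing time plus generation time."""
    prompt_time = prompt_tokens / processing_tps
    generation_time = output_tokens / generation_tps
    return prompt_time + generation_time


# Llama3 8B figures from the table above: (processing, generation) in tokens/second.
configs = {
    "F16": (1202.74, 36.25),
    "Q4_K_M": (1023.89, 76.28),
}

# Hypothetical workload: a 1,000-token prompt and a 250-token reply.
for name, (processing_tps, generation_tps) in configs.items():
    total = estimate_latency_seconds(1000, 250, processing_tps, generation_tps)
    print(f"{name}: about {total:.1f} s")
```

Under these assumptions the estimate is about 7.7 seconds for F16 and about 4.3 seconds for Q4_K_M, and in both cases generation accounts for most of the wait.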

Practical Recommendations: Use Cases and Workarounds

Llama3 8B: Use Cases

Llama3 8B on M2 Ultra is well-suited for:

- Conversational AI: Building bots that can engage in natural, fluid dialogues (though generation speed might require some optimizations).

- Content creation: Generating summaries, outlines, and creative text for applications like writing assistants.

- Text analysis tasks: Sentiment analysis, topic extraction, and similar tasks that require efficient processing.

Workarounds for Generation Speed

If you're dealing with generation speed bottlenecks:

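- Experiment with quantization: Q4_K_M is a good starting point. In the benchmarks above it roughly doubles generation speed compared to F16, though quantization can cost some accuracy.

- Optimize your prompts: Shorter, more focused prompts take less time to process, so the first output token arrives sooner. Asking for shorter answers also reduces the number of tokens that have to be generated.

- Consider using a smaller model: If generation speed is your primary concern, a smaller model like Llama 2 7B might be a better choice. In the benchmarks above it generates 41.02 tokens per second in F16, compared to 36.25 for Llama3 8B.

- Utilize caching: If you repeatedly generate text for the same prompts, caching the results avoids running the model again.

- Stream generation: Streaming does not raise tokens per second, but it shows output as soon as it is produced, which makes the application feel more responsive.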
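The caching and streaming ideas above can be sketched in a few lines. This is a minimal illustration, not a real inference setup: `fake_token_stream` is a hypothetical stand-in for a model that yields one token at a time, and the cache only helps when the prompt is exactly identical and the output is meant to be reused as-is.

```python
from functools import lru_cache
import time


def fake_token_stream(prompt, delay_s=0.0):
    """Hypothetical stand-in for a model: yields one token at a time."""
    for word in f"echo: {prompt}".split():
        time.sleep(delay_s)  # simulates per-token generation time
        yield word + " "


@lru_cache(maxsize=256)
def cached_completion(prompt):
    """Return the full completion, reusing the stored result for repeated prompts."""
    return "".join(fake_token_stream(prompt)).strip()


def stream_completion(prompt):
    """Print tokens as they arrive instead of waiting for the full reply."""
    pieces = []
    for token in fake_token_stream(prompt):
        print(token, end="", flush=True)
        pieces.append(token)
    print()
    return "".join(pieces).strip()


first = cached_completion("summarize the report")   # computed by the stand-in model
second = cached_completion("summarize the report")  # served from the cache
print("cache hits:", cached_completion.cache_info().hits)
streamed = stream_completion("summarize the report")
```

With a real model, the body of `fake_token_stream` would be replaced by whatever streaming interface your inference library provides; the caching and printing logic around it stays the same.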

FAQ

1. What is Llama 3?

Llama 3 is a powerful and versatile large language model developed by Meta. It's known for its impressive performance on a wide range of tasks, including text generation, translation, summarization, and question answering.

2. What is quantization?

Quantization is a technique used to reduce the size of a model by converting its weights from high-precision floating-point numbers to lower-precision integers. This allows models to run faster on smaller devices, but it can sometimes impact accuracy.
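As a toy illustration of the idea, the sketch below applies symmetric 8-bit quantization with a single shared scale to a handful of made-up weights, then estimates weight memory from a nominal bit width. It is a simplification: formats such as Q8_0 and Q4_K_M quantize weights in small blocks and store extra scale data per block, so their real size is somewhat higher than the nominal figure, and the 8 billion parameter count is a round-number assumption.

```python
def quantize_int8(weights):
    """Symmetric 8-bit quantization: map floats to integers in [-127, 127] with one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [round(w / scale) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the integers and the scale."""
    return [q * scale for q in quantized]


def weight_memory_gb(num_params, bits_per_weight):
    """Nominal weight storage in gigabytes, ignoring per-block scale overhead."""
    return num_params * bits_per_weight / 8 / 1e9


weights = [0.12, -0.5, 0.33, 0.9, -0.07]  # made-up example values
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("quantized:", quantized)
print("largest rounding error:", max_error)
print("F16 weights (GB):", weight_memory_gb(8e9, 16))
print("nominal 4-bit weights (GB):", weight_memory_gb(8e9, 4))
```

The rounding error per weight is bounded by half the scale, which is where the possible accuracy loss comes from. The memory estimate shows why quantized models are easier to fit: about 16 GB of weights at 16 bits versus about 4 GB at a nominal 4 bits.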

3. How can I run Llama 3 on my own device?

You can use tools like llama.cpp (https://github.com/ggerganov/llama.cpp) to run Llama 3 locally. You'll need enough memory to hold the model weights, and a fast GPU or CPU to reach usable processing and generation speeds. Quantized versions lower both requirements.

4. Can I use Llama 3 for commercial purposes?

Llama 3 is distributed under the Meta Llama 3 Community License, which permits commercial use subject to conditions. Consult the official Meta documentation for the current terms before building a product on it.

5. What other devices are suitable for running Llama 3?

While the M2 Ultra is a strong contender, other high-performance GPUs and CPUs can also accommodate Llama 3, depending on the model size and configuration.

6. How can I improve the performance of my LLM on my device?

Besides the tips mentioned earlier, you can explore techniques like model parallelization, optimized inference libraries, and specialized hardware designed for AI workloads.

Keywords

Llama 3 8B, Apple M2 Ultra, LLM, large language model, token generation speed, performance, benchmark, quantization, processing, generation, use cases, workarounds, conversational AI, content creation, text analysis, FAQ, GPU, CPU, inference, optimization.