Is Apple M2 Ultra Powerful Enough for Llama3 70B?

Introduction

The world of large language models (LLMs) is evolving rapidly. These powerful AI systems are capable of generating human-like text, translating languages, writing different kinds of creative content, and answering your questions in an informative way. But running these LLMs locally on your own machine can be a challenge, especially for models with billions of parameters.

This article examines how well the Apple M2 Ultra chip handles the demanding Llama3 70B LLM, using the much smaller Llama2 7B as a baseline. We'll explore the performance benchmarks, analyze the results, and provide practical recommendations for use cases.

Performance Analysis: Token Generation Speed Benchmarks

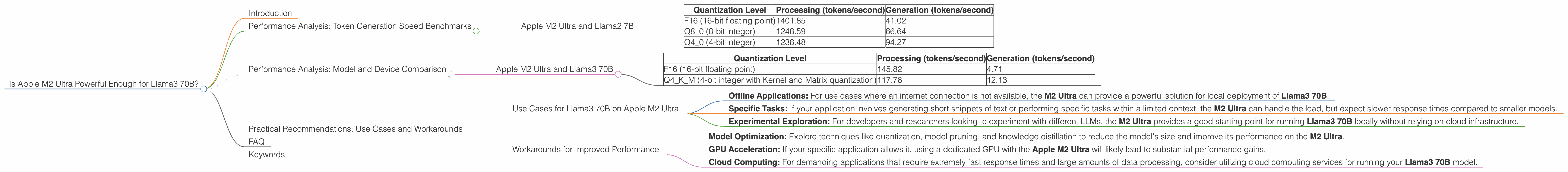

Apple M2 Ultra and Llama2 7B

The Apple M2 Ultra offers impressive performance for smaller LLMs like Llama2 7B. Here's a breakdown of token generation speed benchmarks for different quantization levels:

| Quantization Level | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|
| F16 (16-bit floating point) | 1401.85 | 41.02 |
| Q8_0 (8-bit integer) | 1248.59 | 66.64 |
| Q4_0 (4-bit integer) | 1238.48 | 94.27 |

Key Observations:

- Processing Speed: The M2 Ultra excels at processing tokens, exceeding 1,400 tokens per second for Llama2 7B at F16 precision. Quantization does not help here: Q8_0 and Q4_0 are both about 11 to 12% slower than F16.

- Generation Speed: Generation is far slower than processing, but it is still impressive. Llama2 7B generates over 90 tokens per second with Q4_0 quantization, about 2.3 times the F16 rate.
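The ratios behind these observations can be recomputed directly from the table. The snippet below is a minimal sketch: the throughput figures are copied from the table above, while the dictionary layout and the helper name `relative_to_f16` are illustrative choices, not part of any benchmark tool.

```python
# Throughput figures copied from the Llama2 7B table (tokens/second).
llama2_7b = {
    "F16":  {"processing": 1401.85, "generation": 41.02},
    "Q8_0": {"processing": 1248.59, "generation": 66.64},
    "Q4_0": {"processing": 1238.48, "generation": 94.27},
}

def relative_to_f16(table, metric):
    """Return each format's throughput as a multiple of the F16 figure."""
    baseline = table["F16"][metric]
    return {name: row[metric] / baseline for name, row in table.items()}

generation_speedup = relative_to_f16(llama2_7b, "generation")
processing_ratio = relative_to_f16(llama2_7b, "processing")

for name in llama2_7b:
    print(f"{name}: generation x{generation_speedup[name]:.2f}, "
          f"processing x{processing_ratio[name]:.2f}")
```

A value above 1 means the format is faster than F16 for that metric, and a value below 1 means it is slower.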

Practical Implications:

- The Apple M2 Ultra is a solid choice for local deployments of Llama2 7B. You can achieve fast processing speeds, even for demanding tasks like generating large amounts of text.

- Lower-precision quantization such as Q4_0 significantly improves generation speed (94.27 versus 41.02 tokens per second at F16), but it may reduce the model's accuracy and output quality. It's a trade-off that developers need to consider.

Performance Analysis: Model and Device Comparison

Apple M2 Ultra and Llama3 70B

Now, let's turn our attention to a much larger model, Llama3 70B. Here's how the Apple M2 Ultra performs:

| Quantization Level | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|
| F16 (16-bit floating point) | 145.82 | 4.71 |
| Q4_K_M (4-bit k-quant, medium variant) | 117.76 | 12.13 |

Key Observations:

- Significant Performance Drop: Compared to Llama2 7B at F16, processing drops from 1401.85 to 145.82 tokens per second and generation from 41.02 to 4.71 tokens per second, roughly a 9 to 10 times slowdown. This is expected, as Llama3 70B has about ten times as many parameters.

- Quantization Impact: The difference in generation speed between F16 and Q4_K_M is notable. The M2 Ultra generates over 12 tokens per second with Q4_K_M, but fewer than 5 tokens per second with F16. Processing speed moves the other way, from 145.82 down to 117.76 tokens per second.

Practical Implications:

- The Apple M2 Ultra can run Llama3 70B, but performance will be significantly slower than with smaller models. If response speed is crucial, it might not be the ideal choice for this specific LLM.

- Using Q4_K_M quantization offers a generation speed boost compared to F16, but it comes with a trade-off in terms of model accuracy. You'll need to carefully consider the right balance between performance and accuracy for your specific use case.
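To judge whether these speeds are acceptable for a given application, it helps to translate tokens per second into waiting time. The sketch below assumes the Processing column measures how fast the prompt is read and that throughput stays constant over the whole request, which is a simplification. The 500-token prompt and 300-token answer are hypothetical workload figures chosen for illustration.

```python
# Llama3 70B throughput on the M2 Ultra, copied from the table above (tokens/second).
PROCESSING_TOKENS_PER_SECOND = {"F16": 145.82, "Q4_K_M": 117.76}
GENERATION_TOKENS_PER_SECOND = {"F16": 4.71, "Q4_K_M": 12.13}

def response_time_seconds(fmt, prompt_tokens, output_tokens):
    """Estimate wall-clock time as prompt processing plus token generation."""
    prompt_time = prompt_tokens / PROCESSING_TOKENS_PER_SECOND[fmt]
    generation_time = output_tokens / GENERATION_TOKENS_PER_SECOND[fmt]
    return prompt_time + generation_time

# Hypothetical workload: a 500-token prompt and a 300-token answer.
for fmt in GENERATION_TOKENS_PER_SECOND:
    print(f"{fmt}: {response_time_seconds(fmt, 500, 300):.1f} s")
```

Under these assumptions the request takes roughly half a minute with Q4_K_M and over a minute with F16, and generation time dominates in both cases.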

Practical Recommendations: Use Cases and Workarounds

Use Cases for Llama3 70B on Apple M2 Ultra

The Apple M2 Ultra can still be a valuable tool for running Llama3 70B in certain scenarios:

- Offline Applications: For use cases where an internet connection is not available, the M2 Ultra can provide a powerful solution for local deployment of Llama3 70B.

- Specific Tasks: If your application involves generating short snippets of text or performing specific tasks within a limited context, the M2 Ultra can handle the load, but expect slower response times compared to smaller models.

- Experimental Exploration: For developers and researchers looking to experiment with different LLMs, the M2 Ultra provides a good starting point for running Llama3 70B locally without relying on cloud infrastructure.

Workarounds for Improved Performance

- Model Optimization: Explore techniques like quantization, model pruning, and knowledge distillation to reduce the model's size and improve its performance on the M2 Ultra.

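- GPU Acceleration: The M2 Ultra's GPU is integrated into the chip, and Apple silicon Macs do not support adding a dedicated GPU. Make sure your inference software actually uses the integrated GPU (for example, through Apple's Metal API) rather than running on the CPU alone.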

- Cloud Computing: For demanding applications that require extremely fast response times and large amounts of data processing, consider utilizing cloud computing services for running your Llama3 70B model.

FAQ

Q: What is Quantization?

A: Quantization is a technique used to reduce the size of a large language model (LLM) by converting its weights from high-precision floating-point numbers to lower-precision integers. It's like squeezing a large file into a smaller size, saving space and potentially speeding up processing.
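As a rough illustration of the idea, the sketch below quantizes a small block of made-up weights to signed integers using one shared scale factor, then converts them back. This is a simplified scheme written for this article, not the exact storage format behind Q8_0, Q4_0, or Q4_K_M, and the function names are hypothetical.

```python
def quantize_block(weights, bits=8):
    """Symmetric absmax quantization of one block of floats to signed integers."""
    max_level = 2 ** (bits - 1) - 1          # 127 for 8 bits, 7 for 4 bits
    largest = max(abs(w) for w in weights)
    scale = largest / max_level if largest else 1.0
    quantized = [round(w / scale) for w in weights]
    return quantized, scale

def dequantize_block(quantized, scale):
    """Recover approximate floats from the stored integers and the block scale."""
    return [q * scale for q in quantized]

weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.44, 0.02]  # made-up values

for bits in (8, 4):
    quantized, scale = quantize_block(weights, bits)
    restored = dequantize_block(quantized, scale)
    worst_error = max(abs(w - r) for w, r in zip(weights, restored))
    print(f"{bits}-bit: ints={quantized}, worst error={worst_error:.4f}")
```

With fewer bits there are fewer integer levels to choose from, so the restored values drift further from the originals. That drift is the accuracy cost of the smaller file.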

Q: What are the different quantization levels?

A: Quantization levels refer to the numeric precision used to store model weights. F16 (16-bit floating point) is effectively the unquantized baseline in these benchmarks: it preserves the most accuracy but requires the most memory and computational resources. Lower-precision formats, like Q4_K_M (a 4-bit "k-quant" format in its medium variant, as named in llama.cpp), are more space-efficient but may sacrifice some accuracy.
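The memory side of this trade-off can be estimated with simple arithmetic. The sketch below is a back-of-the-envelope calculation under stated assumptions: it treats the model as exactly 70 billion parameters, uses 8.5 bits per weight for Q8_0 (8-bit values plus one 16-bit scale per block of 32 weights), and uses roughly 4.85 bits per weight for Q4_K_M, which is an approximate average rather than an exact figure. It counts weights only and ignores the context cache and runtime overhead.

```python
PARAMETERS = 70e9  # nominal parameter count implied by the "70B" name (assumption)

# Approximate storage cost per weight, in bits (assumptions, see the text above).
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_K_M": 4.85}

def weight_size_gb(parameters, bits_per_weight):
    """Size of the weights alone in gigabytes (10^9 bytes)."""
    return parameters * bits_per_weight / 8 / 1e9

for fmt, bits in BITS_PER_WEIGHT.items():
    print(f"{fmt}: ~{weight_size_gb(PARAMETERS, bits):.0f} GB of weights")
```

By this estimate, F16 weights alone come to about 140 GB, which only fits the 192 GB configuration of the M2 Ultra, while a 4-bit version needs a little over 40 GB.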

Q: How does the Apple M2 Ultra compare to other chips?

A: The Apple M2 Ultra is a powerful chip, especially for its combination of CPU and GPU resources sharing one pool of unified memory. However, dedicated data-center GPUs like the NVIDIA A100 or H100 offer superior performance specifically for running large language models.

Q: Is the Apple M2 Ultra the best choice for all LLM use cases?

A: No, the Apple M2 Ultra is not the best choice for all LLM use cases. For massive models like Llama3 70B, performance may be limited, and cloud computing might be a better option. However, for smaller models and specific use cases, the M2 Ultra can provide a powerful and cost-effective solution.

Keywords

Apple M2 Ultra, Llama3 70B, LLM, large language model, token generation speed, quantization, performance benchmarks, GPU acceleration, cloud computing, model optimization, offline applications.