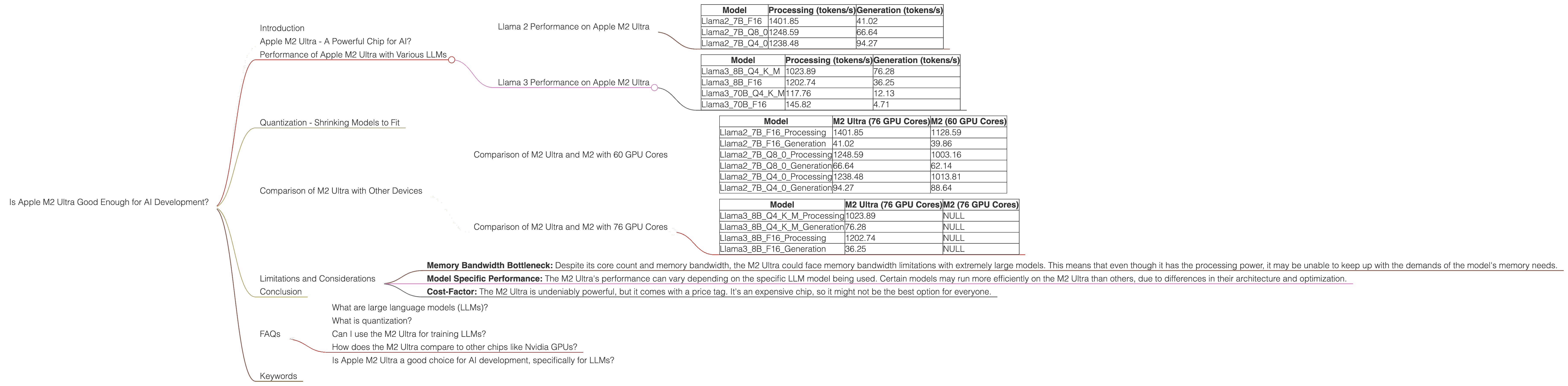

Is Apple M2 Ultra Good Enough for AI Development?

Introduction

The world of AI is booming, and it's not just about fancy chatbots anymore. Developers are building sophisticated models to tackle complex tasks, from generating realistic images to creating personalized music. But building these models requires powerful hardware, and that's where the Apple M2 Ultra comes in.

The M2 Ultra is Apple's top-of-the-line chip, boasting incredible processing power. But is it powerful enough to handle the demands of AI development, specifically large language models (LLMs)? Let's dive into the numbers to find out!

Apple M2 Ultra - A Powerful Chip for AI?

The Apple M2 Ultra is a beast of a chip, with a 24-core CPU, up to 76 GPU cores, and 800 GB/s of memory bandwidth. It's designed to handle demanding tasks like video editing and 3D rendering with ease. But can it handle the workload of training and running large language models?

To answer that question, we need to look at how the M2 Ultra performs on various AI workloads, particularly with different LLM models. We'll be focusing on Llama 2 and Llama 3 models, which are known for their impressive performance and flexibility.

Performance of Apple M2 Ultra with Various LLMs

To assess the M2 Ultra, we'll look at two speeds for each model: processing (how quickly it reads the input prompt) and generation (how quickly it produces new tokens). Both are measured in tokens per second (tokens/s); a higher rate means faster responses and, ultimately, a better user experience.

Llama 2 Performance on Apple M2 Ultra

Here's how the M2 Ultra fares with the Llama 2 7B model at three precisions: unquantized 16-bit floating point (F16) and the 8-bit (Q8_0) and 4-bit (Q4_0) quantized formats:

| Model | Processing (tokens/s) | Generation (tokens/s) |
|---|---|---|
| Llama 2 7B F16 | 1401.85 | 41.02 |
| Llama 2 7B Q8_0 | 1248.59 | 66.64 |
| Llama 2 7B Q4_0 | 1238.48 | 94.27 |

As the table shows, the M2 Ultra handles Llama 2 7B comfortably, especially in processing: it reads prompts at over 1,200 tokens/s at every precision and generates between 41 and 94 tokens/s. This translates to quick responses when using the model for tasks like text generation and translation.
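To see how the two speeds combine, here is a rough latency estimate for a single request using the Llama 2 7B numbers above. It's a sketch under simplifying assumptions: the prompt and reply lengths (512 and 128 tokens) are arbitrary illustrative choices, and throughput is treated as constant, although in practice generation slows somewhat as the context grows.

```python
"""Rough per-request latency from the Llama 2 7B benchmark numbers (illustrative only)."""

# Hypothetical request size, chosen for illustration (not part of the benchmark data).
PROMPT_TOKENS = 512
OUTPUT_TOKENS = 128

# (processing tokens/s, generation tokens/s) for Llama 2 7B on the 76-GPU-core M2 Ultra.
LLAMA2_7B_M2_ULTRA = {
    "F16": (1401.85, 41.02),
    "Q8_0": (1248.59, 66.64),
    "Q4_0": (1238.48, 94.27),
}


def request_latency(prompt_tokens: int, output_tokens: int,
                    processing_rate: float, generation_rate: float) -> tuple[float, float]:
    """Return (prompt-processing seconds, generation seconds), assuming constant throughput."""
    return prompt_tokens / processing_rate, output_tokens / generation_rate


if __name__ == "__main__":
    for fmt, (pp, tg) in LLAMA2_7B_M2_ULTRA.items():
        prefill, gen = request_latency(PROMPT_TOKENS, OUTPUT_TOKENS, pp, tg)
        print(f"{fmt:5s} prompt {prefill:.2f} s + generation {gen:.2f} s = {prefill + gen:.2f} s")
```

Under these assumptions, generation accounts for most of the wait at every precision, which is why the generation column matters most for interactive use.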

Llama 3 Performance on Apple M2 Ultra

Now let's check how the M2 Ultra handles Llama 3, in both its 8B and much larger 70B versions:

| Model | Processing (tokens/s) | Generation (tokens/s) |
|---|---|---|
| Llama 3 8B Q4_K_M | 1023.89 | 76.28 |
| Llama 3 8B F16 | 1202.74 | 36.25 |
| Llama 3 70B Q4_K_M | 117.76 | 12.13 |
| Llama 3 70B F16 | 145.82 | 4.71 |

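The 8B Llama 3 model still runs well. F16 gives the higher processing speed (1202.74 vs. 1023.89 tokens/s), while Q4_K_M generates about twice as fast (76.28 vs. 36.25 tokens/s). With the 70B model, performance drops sharply: processing falls to roughly one-eighth of the 8B figures, and F16 generation slows to 4.71 tokens/s. Generating each token means reading essentially all of the model's weights from memory, so for a model this large, memory bandwidth rather than compute is likely the main bottleneck.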
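The bandwidth explanation can be checked with back-of-the-envelope arithmetic. If generating one token requires reading roughly every weight once, generation speed can't exceed memory bandwidth divided by model size. The sketch below assumes Apple's quoted 800 GB/s peak bandwidth for the M2 Ultra, approximate parameter counts (6.74B for Llama 2 7B, 8.03B and 70.6B for Llama 3), and nominal bits per weight (16 for F16, 8.5 for Q8_0 and 4.5 for Q4_0, counting each block's scale). It ignores KV-cache traffic and tensors stored at other precisions, so treat the results as rough ceilings. The Q4_K_M rows are left out because that format mixes quantization types across tensors and has no single bits-per-weight figure.

```python
"""Bandwidth-limited ceiling on generation speed (rough estimate, stated assumptions)."""

# Assumption: Apple's quoted peak unified-memory bandwidth for the M2 Ultra.
PEAK_BANDWIDTH_GB_S = 800.0

# (label, approximate parameter count, nominal bits per weight, measured generation tokens/s)
MODELS = [
    ("Llama 2 7B F16", 6.74e9, 16.0, 41.02),
    ("Llama 2 7B Q8_0", 6.74e9, 8.5, 66.64),
    ("Llama 2 7B Q4_0", 6.74e9, 4.5, 94.27),
    ("Llama 3 8B F16", 8.03e9, 16.0, 36.25),
    ("Llama 3 70B F16", 70.6e9, 16.0, 4.71),
]


def model_size_gb(params: float, bits_per_weight: float) -> float:
    """Approximate weight footprint in gigabytes (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


def generation_ceiling(params: float, bits_per_weight: float,
                       bandwidth_gb_s: float = PEAK_BANDWIDTH_GB_S) -> float:
    """Upper bound on tokens/s if every weight is read once per generated token."""
    return bandwidth_gb_s / model_size_gb(params, bits_per_weight)


if __name__ == "__main__":
    for label, params, bpw, measured in MODELS:
        size = model_size_gb(params, bpw)
        ceiling = generation_ceiling(params, bpw)
        print(f"{label:16s} {size:7.2f} GB  ceiling {ceiling:7.2f} tok/s  "
              f"measured {measured:6.2f} ({measured / ceiling:.0%} of ceiling)")
```

Under these assumptions, Llama 3 70B at F16 needs about 141 GB of weights and tops out near 5.7 tokens/s, so the measured 4.71 tokens/s is already over 80 percent of the ceiling, consistent with generation being bandwidth-bound. The quantized 7B models land further below their ceilings, suggesting other overheads matter more once the weights get small.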

Quantization - Shrinking Models to Fit

Quantization makes a model smaller without sacrificing too much accuracy. Think of it like packing your luggage for a trip: you want to bring everything you need, but you also want to keep your bag within the weight limit.

In the case of LLMs, quantization reduces the model's size by using fewer bits to represent each weight. The model takes up less memory, and because fewer bytes have to be read for every generated token, it usually generates text faster as well.
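Here's a minimal sketch of the idea using simplified block-wise, symmetric "absmax" quantization: weights are split into blocks of 32, and each block stores one floating-point scale plus a small signed integer per weight. This mirrors the general shape of the Q8_0 and Q4_0 formats in the tables (which also use 32-weight blocks) but is not their exact encoding; the function names and the random test weights are illustrative.

```python
"""Simplified block-wise symmetric quantization (illustrative, not an exact file format)."""
import random

BLOCK_SIZE = 32  # weights per block, one shared scale per block


def quantize_block(block: list[float], bits: int) -> tuple[float, list[int]]:
    """Map floats to signed integers in [-qmax, qmax] with one scale per block."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    amax = max(abs(x) for x in block)
    scale = amax / qmax if amax > 0 else 1.0
    q = [max(-qmax, min(qmax, round(x / scale))) for x in block]
    return scale, q


def dequantize_block(scale: float, q: list[int]) -> list[float]:
    """Recover approximate floats from the stored scale and integers."""
    return [scale * v for v in q]


def max_roundtrip_error(weights: list[float], bits: int) -> float:
    """Largest absolute error after quantizing and dequantizing every block."""
    worst = 0.0
    for start in range(0, len(weights), BLOCK_SIZE):
        block = weights[start:start + BLOCK_SIZE]
        scale, q = quantize_block(block, bits)
        restored = dequantize_block(scale, q)
        worst = max(worst, max(abs(a - b) for a, b in zip(block, restored)))
    return worst


if __name__ == "__main__":
    random.seed(0)
    weights = [random.gauss(0.0, 0.02) for _ in range(1024)]  # toy stand-in for model weights
    for bits in (8, 4):
        print(f"{bits}-bit: max round-trip error {max_roundtrip_error(weights, bits):.6f}")
```

Stored compactly with a 16-bit scale, a 32-weight block takes 34 bytes at 8 bits and 18 bytes at 4 bits, versus 64 bytes at F16, which is where the memory savings come from; the trade-off is the larger rounding error at 4 bits.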

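On the M2 Ultra, lower precision has little effect on processing speed but speeds up generation substantially: Llama 2 7B generates about 2.3 times as fast at Q4_0 as at F16 (94.27 vs. 41.02 tokens/s). This means you can balance accuracy and performance based on your specific needs.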

Comparison of M2 Ultra with Other Devices

While the M2 Ultra is undeniably powerful, it's interesting to see how it compares to other devices. The benchmark data available here covers only M2 Ultra configurations, so the comparisons below are limited to those.

Comparison of M2 Ultra with 76 and 60 GPU Cores

The M2 Ultra is available with either 60 or 76 GPU cores. To understand the impact of the extra cores, let's compare the two configurations on the Llama 2 7B model:

| Benchmark | M2 Ultra, 76 GPU cores (tokens/s) | M2 Ultra, 60 GPU cores (tokens/s) |
|---|---|---|
| Llama 2 7B F16 processing | 1401.85 | 1128.59 |
| Llama 2 7B F16 generation | 41.02 | 39.86 |
| Llama 2 7B Q8_0 processing | 1248.59 | 1003.16 |
| Llama 2 7B Q8_0 generation | 66.64 | 62.14 |
| Llama 2 7B Q4_0 processing | 1238.48 | 1013.81 |
| Llama 2 7B Q4_0 generation | 94.27 | 88.64 |

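The 76-core configuration processes prompts roughly 22 to 24 percent faster, close to its 27 percent advantage in GPU cores, but generates tokens only 3 to 7 percent faster. That pattern fits the memory-bandwidth explanation: processing is compute-bound and scales with GPU cores, while generation is limited mostly by memory bandwidth, which is the same 800 GB/s on both M2 Ultra configurations.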
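A quick way to check this is to compare each speedup with the ratio of GPU cores. The snippet below only divides the numbers from the table; nothing beyond the table and the two core counts is assumed.

```python
"""Speedup of the 76-core M2 Ultra over the 60-core configuration (Llama 2 7B)."""

CORE_RATIO = 76 / 60  # about 1.27x more GPU cores

# (benchmark, 76-core tokens/s, 60-core tokens/s) from the comparison table
RESULTS = [
    ("F16 processing", 1401.85, 1128.59),
    ("F16 generation", 41.02, 39.86),
    ("Q8_0 processing", 1248.59, 1003.16),
    ("Q8_0 generation", 66.64, 62.14),
    ("Q4_0 processing", 1238.48, 1013.81),
    ("Q4_0 generation", 94.27, 88.64),
]


def speedups(results: list[tuple[str, float, float]]) -> dict[str, float]:
    """Return {benchmark: speedup}, where speedup = 76-core rate / 60-core rate."""
    return {name: fast / slow for name, fast, slow in results}


if __name__ == "__main__":
    print(f"GPU core ratio: {CORE_RATIO:.2f}x")
    for name, ratio in speedups(RESULTS).items():
        print(f"{name:16s} {ratio:.2f}x")
```

The processing speedups come out at roughly 1.22x to 1.24x, close to the 1.27x core ratio, while the generation speedups stay between about 1.03x and 1.07x.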

Comparison on Llama 3 8B

For the Llama 3 8B model, the available data only covers the 76-core M2 Ultra:

| Benchmark | M2 Ultra, 76 GPU cores (tokens/s) | Other M2 configurations |
|---|---|---|
| Llama 3 8B Q4_K_M processing | 1023.89 | No data |
| Llama 3 8B Q4_K_M generation | 76.28 | No data |
| Llama 3 8B F16 processing | 1202.74 | No data |
| Llama 3 8B F16 generation | 36.25 | No data |

Unfortunately, there is no Llama 3 8B data for any other M2 configuration, so a side-by-side comparison isn't possible yet.

Limitations and Considerations

While the M2 Ultra offers impressive performance, it's vital to acknowledge a few limitations and considerations.

- Memory Bandwidth Bottleneck: Every generated token requires reading the model's weights from memory, so even with 800 GB/s of bandwidth, very large models generate slowly; Llama 3 70B at F16 manages only 4.71 tokens/s. Memory capacity is a second ceiling: the M2 Ultra supports at most 192 GB of unified memory, which limits the largest model you can load.

- Model-Specific Performance: The M2 Ultra's performance can vary depending on the specific LLM being used. Some models run more efficiently on it than others because of differences in their architecture and optimization.

- Cost: The M2 Ultra is undeniably powerful, but it comes with a high price tag, so it might not be the best option for everyone.

Conclusion

The Apple M2 Ultra demonstrates impressive capabilities for AI development, especially for running LLMs locally (the benchmarks here measure inference, not training). Its GPU core count and memory bandwidth let it handle models up to 8B parameters with ease, and the range of precisions, from F16 down to 4-bit quantization, provides flexibility for balancing accuracy and performance.

However, it's important to remember that the M2 Ultra is not a magic solution for every AI need. Larger models such as Llama 3 70B run far more slowly because of memory bandwidth limits, and the cost might be a significant factor for some.

FAQs

What are large language models (LLMs)?

LLMs are a type of AI model that can process and understand human language. They're trained on massive datasets of text and code, allowing them to generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way.

What is quantization?

Quantization is a technique used to make AI models smaller and faster. It involves reducing the number of bits used to represent the model's weights. This helps to reduce the model's memory footprint and improves its performance on devices with limited resources.

Can I use the M2 Ultra for training LLMs?

The benchmarks in this article measure inference only, but yes, the M2 Ultra can be used to train LLMs. It is better suited to fine-tuning existing models than to training them from scratch, and its GPU cores and large, fast unified memory help speed up that work.

How does the M2 Ultra compare to other chips like Nvidia GPUs?

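The M2 Ultra and Nvidia GPUs are both powerful options for AI development. Nvidia GPUs are generally the standard choice for training LLMs, thanks to their raw compute and mature CUDA software ecosystem. The M2 Ultra's strengths are its large unified memory pool (up to 192 GB, shared by the CPU and GPU) and a more streamlined, integrated experience for Apple users.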

Is Apple M2 Ultra a good choice for AI development, specifically for LLMs?

The M2 Ultra is a powerful chip that can handle the demands of AI development, particularly for LLMs. However, its performance may be limited with larger models due to memory bandwidth limitations, and its cost might be a significant factor. It's important to consider your specific needs and budget when making a decision.
