Is Apple M2 Pro Good Enough for AI Development?

Introduction

The world of AI is booming, and with it comes the need for powerful hardware to run complex models. Large Language Models (LLMs) like Llama 2 are pushing the boundaries of what's possible, and developers are constantly searching for the best tools for the job. But can the Apple M2 Pro, a chip designed with creative workloads like video editing and graphic design in mind, also handle the computational demands of AI development?

In this article, we'll examine how the Apple M2 Pro performs when running Llama 2, looking at both its processing and generation speeds across different quantization levels. We'll walk through the data and give a clear picture of how this chip fares in the world of AI development.

Apple M2 Pro: Powerhouse for AI?

The Apple M2 Pro is a beast of a chip, available with a 16-core or 19-core GPU and offering 200 GB/s of unified memory bandwidth. But how does it stack up when it comes to AI development?

Let's put it to the test with Llama 2, one of the most popular open-source language models. We'll dive into the performance numbers and see how the M2 Pro tackles the demands of processing and generating text.

Apple M2 Pro Llama 2 Performance: A Deep Dive

Llama 2 7B: A Popular Choice

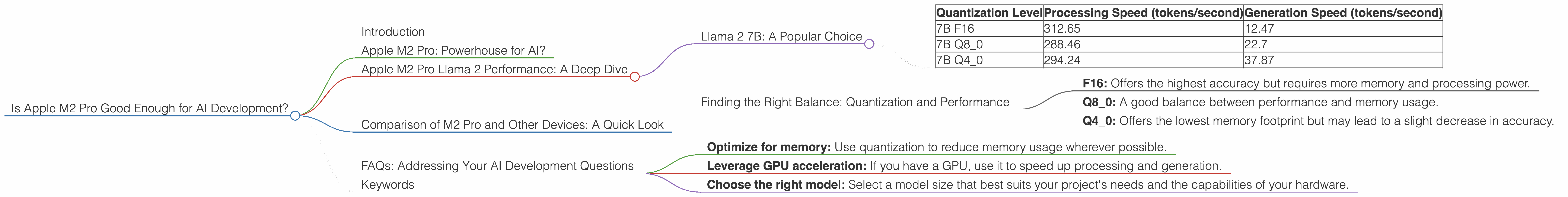

The Llama 2 7B model is a popular choice for developers, offering a good balance between capability and hardware requirements. Let's look at the M2 Pro's processing and generation speeds for this model at different precision and quantization levels:

| Model & Quantization | Processing Speed (tokens/s) | Generation Speed (tokens/s) |
|---|---|---|
| 7B F16 | 312.65 | 12.47 |
| 7B Q8_0 | 288.46 | 22.7 |
| 7B Q4_0 | 294.24 | 37.87 |

Understanding Quantization

Think of quantization as a way to "compress" the model without losing too much accuracy. F16 stores each weight as a 16-bit floating-point number, while Q8_0 and Q4_0 store weights as 8-bit and 4-bit integers, respectively, together with a small per-block scale factor. These lower-bit formats require less memory, making it possible to run the model on devices with limited resources. However, this compression usually comes at the cost of slightly reduced accuracy.
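To make the idea concrete, here is a minimal Python sketch of 8-bit block quantization, loosely modeled on the general layout of llama.cpp's Q8_0 format: weights are grouped into blocks of 32, and each block keeps one scale factor plus 32 signed 8-bit integers. It is a simplified illustration under that assumption, not llama.cpp's actual implementation, and the function names are hypothetical.

```python
# Simplified sketch of 8-bit block quantization, loosely modeled on the
# layout of llama.cpp's Q8_0 format (hypothetical helper names).
import math
from typing import List, Tuple

BLOCK_SIZE = 32  # weights per block, as in Q8_0


def quantize_block(block: List[float]) -> Tuple[float, List[int]]:
    """Map a block of floats to one scale factor plus signed 8-bit integers."""
    amax = max(abs(x) for x in block)
    scale = amax / 127 if amax > 0 else 0.0  # largest weight maps to +/-127
    inv = 1.0 / scale if scale else 0.0
    # Round to the nearest integer (Python's round() breaks ties to even).
    return scale, [int(round(x * inv)) for x in block]


def dequantize_block(scale: float, q: List[int]) -> List[float]:
    """Recover approximate float weights from a quantized block."""
    return [scale * v for v in q]


def quantize(weights: List[float]) -> List[Tuple[float, List[int]]]:
    """Split weights into blocks of BLOCK_SIZE and quantize each one."""
    return [quantize_block(weights[i:i + BLOCK_SIZE])
            for i in range(0, len(weights), BLOCK_SIZE)]


def dequantize(blocks: List[Tuple[float, List[int]]]) -> List[float]:
    """Concatenate the dequantized blocks back into one flat list."""
    out: List[float] = []
    for scale, q in blocks:
        out.extend(dequantize_block(scale, q))
    return out


if __name__ == "__main__":
    # Toy "weights": 64 small values, i.e. two blocks.
    weights = [0.05 * math.sin(0.37 * i) for i in range(64)]
    restored = dequantize(quantize(weights))
    max_err = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"max absolute error: {max_err:.6f}")
```

Each restored weight is off by at most half of its block's scale factor. If the scale is stored as a 16-bit float, a block takes 2 + 32 = 34 bytes for 32 weights, or about 8.5 bits per weight, which is why an 8-bit model is roughly half the size of its F16 counterpart.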

Observations

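- The M2 Pro shines in processing speed, staying in a narrow band of roughly 290 to 310 tokens per second across all three formats, with F16 the fastest at 312.65 tokens per second. Processing a prompt is largely compute-bound, so shrinking the weights does little to speed it up.

- Generation speed is much lower, especially for F16 (12.47 tokens per second), but it rises sharply for Q8_0 (22.7) and Q4_0 (37.87), with Q4_0 running roughly three times faster than F16. Generating each token requires reading essentially all of the model's weights from memory, so generation is limited by memory bandwidth, and smaller, compressed weights translate directly into more tokens per second.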
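A back-of-the-envelope check supports this reading. If every generated token requires streaming all of the weights from memory once, memory bandwidth divided by model size gives a rough ceiling on generation speed. The sketch below assumes about 6.74 billion parameters for Llama 2 7B, Apple's quoted 200 GB/s memory bandwidth for the M2 Pro, and about 8.5 and 4.5 bits per weight for Q8_0 and Q4_0 (an 8-bit or 4-bit value per weight plus one 16-bit scale per block of 32). It ignores the KV cache and any tensors stored in other formats, so treat the numbers as approximate.

```python
# Rough, memory-bandwidth-bound ceiling on generation speed (assumed figures).
PARAMS = 6.74e9        # approximate parameter count of Llama 2 7B (assumption)
BANDWIDTH_GBS = 200.0  # M2 Pro unified memory bandwidth in GB/s (assumption)
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}  # approximate
MEASURED_TG = {"F16": 12.47, "Q8_0": 22.7, "Q4_0": 37.87}  # tokens/s, from the table


def model_bytes(params: float, bpw: float) -> float:
    """Bytes needed to store all weights at `bpw` bits per weight."""
    return params * bpw / 8


def tg_ceiling(params: float, bpw: float, bw_gbs: float) -> float:
    """Tokens/s if each token must read every weight from memory once."""
    return bw_gbs * 1e9 / model_bytes(params, bpw)


for fmt, bpw in BITS_PER_WEIGHT.items():
    ceiling = tg_ceiling(PARAMS, bpw, BANDWIDTH_GBS)
    measured = MEASURED_TG[fmt]
    print(f"{fmt:5s} ceiling ~{ceiling:5.1f} tok/s, "
          f"measured {measured:5.2f} tok/s ({measured / ceiling:.0%} of ceiling)")
```

Under these assumptions the ceilings come out at roughly 14.8, 27.9, and 52.8 tokens per second for F16, Q8_0, and Q4_0, and the measured speeds land at roughly 70 to 85 percent of them, which is consistent with generation being bandwidth-bound.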

Finding the Right Balance: Quantization and Performance

The choice between quantization levels depends on your specific project and goals. Here's a breakdown to help you make the best decision:

- F16: Offers the highest accuracy but requires the most memory and memory bandwidth, which makes it the slowest of the three for text generation.

- Q8_0: A good balance between performance and memory usage.

- Q4_0: Offers the lowest memory footprint but may lead to a slight decrease in accuracy.
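To put the memory side of this trade-off in numbers, the sketch below estimates how much memory Llama 2 7B's weights occupy in each format. It assumes about 6.74 billion parameters and roughly 16, 8.5, and 4.5 bits per weight for F16, Q8_0, and Q4_0; real model files differ slightly because some tensors may be stored in other formats.

```python
# Approximate weight-storage footprint of Llama 2 7B per format (assumed figures).
PARAMS_7B = 6.74e9  # approximate parameter count (assumption)
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}  # approximate


def footprint_gib(params: float, bpw: float) -> float:
    """Size of the weights in GiB at `bpw` bits per weight."""
    return params * bpw / 8 / 2**30


for fmt, bpw in BITS_PER_WEIGHT.items():
    print(f"{fmt:5s} ~{footprint_gib(PARAMS_7B, bpw):.1f} GiB")
```

This prints approximately 12.6, 6.7, and 3.5 GiB, so F16 leaves little headroom on a 16 GB machine, while Q4_0 fits comfortably.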

Comparison of M2 Pro and Other Devices: A Quick Look

There are numerous devices available for AI development. While we're focused on the M2 Pro, it's helpful to compare its performance with other options.

Unfortunately, comparable benchmarks for the Llama 2 7B model are not readily available for every device, so we can't offer a comprehensive comparison yet.

FAQs: Addressing Your AI Development Questions

1. What are the differences between Llama 2 7B and Llama 2 70B?

The main difference is model size. Llama 2 7B is a smaller model, requiring less memory, making it more suitable for devices with limited resources. Llama 2 70B is a larger, more powerful model, able to handle more complex tasks but demanding more memory.
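As a rough illustration of the difference, the sketch below checks whether each model's Q4_0 weights fit in the unified memory of an M2 Pro, which Apple offers with 16 GB or 32 GB. The parameter counts (about 6.74 billion and 70 billion), the 4.5 bits per weight for Q4_0, and the 4 GiB reserved for the operating system, KV cache, and other overhead are assumptions for illustration, and the function names are hypothetical.

```python
# Rough check of whether Q4_0 weights fit in M2 Pro memory (assumed figures).
PARAMS = {"Llama 2 7B": 6.74e9, "Llama 2 70B": 70e9}  # approximate (assumption)
MEMORY_GIB = (16, 32)  # M2 Pro unified memory configurations
Q4_0_BPW = 4.5         # approximate bits per weight for Q4_0 (assumption)
OVERHEAD_GIB = 4.0     # hypothetical reserve for OS, KV cache, etc.


def weights_gib(params: float, bpw: float = Q4_0_BPW) -> float:
    """Size of the weights in GiB at `bpw` bits per weight."""
    return params * bpw / 8 / 2**30


def fits(params: float, mem_gib: float, bpw: float = Q4_0_BPW,
         overhead_gib: float = OVERHEAD_GIB) -> bool:
    """True if the weights plus the assumed overhead fit in `mem_gib`."""
    return weights_gib(params, bpw) + overhead_gib <= mem_gib


for name, p in PARAMS.items():
    for mem in MEMORY_GIB:
        verdict = "fits" if fits(p, mem) else "does not fit"
        print(f"{name} Q4_0 (~{weights_gib(p):.1f} GiB) {verdict} in {mem} GB")
```

Even at Q4_0, the 70B model's weights (about 36.7 GiB under these assumptions) exceed the M2 Pro's 32 GB maximum, so running it would call for more aggressive quantization or a machine with more memory.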

2. What is the importance of quantization for LLMs?

Quantization is crucial for reducing the memory footprint of LLMs, which lets them run on devices with limited memory. On hardware like the M2 Pro, where text generation is limited by memory bandwidth, it also speeds up generation, as the Q4_0 results above show.

3. What are the best practices for running AI models on an Apple M2 Pro?

- Optimize for memory: Use quantization to reduce memory usage wherever possible.

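- Leverage GPU acceleration: The M2 Pro's integrated GPU is available through Apple's Metal API, so use an inference framework that offloads the model to it (llama.cpp, for example, has a Metal backend) to speed up processing and generation.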

- Choose the right model: Select a model size that best suits your project's needs and the capabilities of your hardware.

Keywords

Apple M2 Pro, AI Development, Llama 2, Llama 2 7B, Large Language Models, LLM, Performance, Quantization, F16, Q8_0, Q4_0, Processing Speed, Generation Speed, GPU, Bandwidth, Token, Memory, Accuracy