Is Apple M2 Powerful Enough for Llama2 7B?

Introduction

The world of large language models (LLMs) is abuzz with excitement, and for good reason. These models can generate realistic text, translate languages, write many kinds of creative content, and answer your questions in an informative way. But running an LLM locally on your own device can be a challenge, especially with a model like Llama2 7B, which has 7 billion parameters.

This article dives into the performance of Llama2 7B on the Apple M2 chip, a popular choice for developers and enthusiasts. We'll explore the token generation speed benchmarks, compare different model and device configurations, and offer practical recommendations for use cases and workarounds. So buckle up, fellow AI enthusiasts, as we embark on this deep dive!

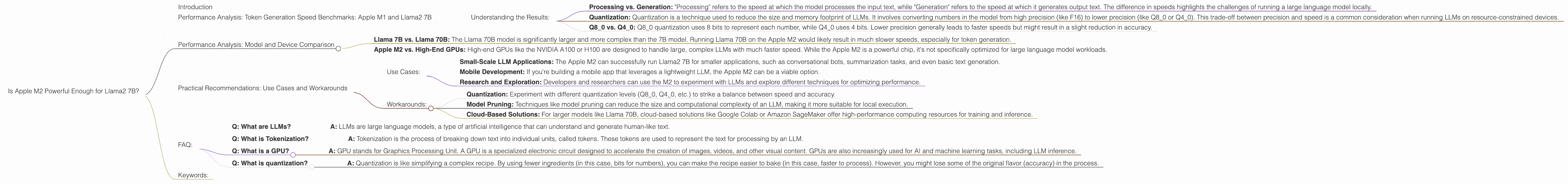

Performance Analysis: Token Generation Speed Benchmarks: Apple M2 and Llama2 7B

Token generation speed is a critical metric for evaluating LLM performance. It represents the number of tokens (words or subwords) a model can generate per second. Here's a breakdown of the Llama2 7B performance on Apple M2:

| Configuration | Speed (tokens/second) |
|---|---|
| Llama2 7B (F16 precision, Processing) | 201.34 |
| Llama2 7B (F16 precision, Generation) | 6.72 |
| Llama2 7B (Q8_0 quantization, Processing) | 181.40 |
| Llama2 7B (Q8_0 quantization, Generation) | 12.21 |
| Llama2 7B (Q4_0 quantization, Processing) | 179.57 |
| Llama2 7B (Q4_0 quantization, Generation) | 21.91 |

Note: The table above shows the token generation speeds specific to the Apple M2 chip.
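To get a feel for what these numbers mean in practice, here is a rough sketch that turns the table into an estimated response time. The function name `estimate_latency_seconds` and the 512-token prompt with a 256-token reply are assumptions made for illustration; the estimate also ignores model load time and assumes throughput stays constant, which is a simplification.

```python
# Throughput figures copied from the table above (tokens/second on Apple M2).
BENCHMARKS = {
    "F16": {"processing": 201.34, "generation": 6.72},
    "Q8_0": {"processing": 181.40, "generation": 12.21},
    "Q4_0": {"processing": 179.57, "generation": 21.91},
}


def estimate_latency_seconds(config, prompt_tokens, output_tokens):
    """Rough end-to-end time: read the prompt, then generate the reply.

    Hypothetical helper; it assumes constant throughput and no load time.
    """
    speeds = BENCHMARKS[config]
    prompt_time = prompt_tokens / speeds["processing"]
    generation_time = output_tokens / speeds["generation"]
    return prompt_time + generation_time


if __name__ == "__main__":
    # Prompt and reply lengths are illustrative assumptions.
    for name in BENCHMARKS:
        total = estimate_latency_seconds(name, prompt_tokens=512, output_tokens=256)
        print(f"{name}: about {total:.1f} s for a 512-token prompt and a 256-token reply")
```

Under these assumptions, most of the waiting time comes from generation rather than prompt processing, which is why the generation rows matter most for interactive use.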

Understanding the Results:

- Processing vs. Generation: "Processing" refers to the speed at which the model reads the input prompt, while "Generation" refers to the speed at which it produces output text. Prompt tokens can be processed in parallel, whereas output tokens are produced one at a time, which is why generation (6.72 to 21.91 tokens/second) is so much slower than processing (roughly 180 to 200 tokens/second).

- Quantization: Quantization is a technique used to reduce the size and memory footprint of LLMs. It involves converting the numbers in the model from higher precision (like F16) to lower precision (like Q8_0 or Q4_0). This trade-off between precision on one side and size and speed on the other is a common consideration when running LLMs on resource-constrained devices.

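- Q8_0 vs. Q4_0: Q8_0 quantization uses about 8 bits to represent each number, while Q4_0 uses about 4 bits. In the table above, lower precision speeds up generation (from 6.72 tokens/second at F16 to 21.91 at Q4_0) while processing speed dips slightly, and it might come with a slight reduction in accuracy.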
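To make the quantization bullets above more concrete, here is a minimal sketch of the general idea: store one floating-point scale per block of weights plus a small integer per weight. This is a simplified symmetric scheme written for illustration, not the exact storage layout used by the Q8_0 or Q4_0 formats, and the function names and sample weights are hypothetical.

```python
def quantize_block(values, bits):
    """Symmetric quantization of one block: one float scale plus small integers."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    largest = max(abs(v) for v in values)
    scale = largest / qmax if largest > 0 else 1.0
    # Round each value to the nearest integer step and clamp to the valid range.
    quants = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate float values from the scale and the integers."""
    return [q * scale for q in quants]


def max_abs_error(values, bits):
    """Largest round-trip error for one block at the given bit width."""
    scale, quants = quantize_block(values, bits)
    restored = dequantize_block(scale, quants)
    return max(abs(a - b) for a, b in zip(values, restored))


if __name__ == "__main__":
    # Made-up weights, only for illustration.
    weights = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88, 0.02, 0.64]
    for bits in (8, 4):
        print(f"{bits}-bit max round-trip error: {max_abs_error(weights, bits):.4f}")
```

With fewer bits there are fewer integer steps available, so the round-trip error grows. That is the accuracy side of the trade-off, paid for a smaller model.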

Consider this analogy: Imagine a team of workers building a house. "Processing" is like the speed at which workers can get the materials ready, and "Generation" is like the speed at which they build the house. Quantization is like using different sized tools: smaller tools might work faster but might not be as precise.

Performance Analysis: Model and Device Comparison

The table above highlights that the Apple M2 can handle Llama2 7B with impressive speed when processing the input (roughly 180 to 200 tokens/second), but generation is much slower (about 7 to 22 tokens/second, depending on quantization). Let's put this into context by comparing it to other models and devices:

- Llama2 7B vs. Llama2 70B: The Llama2 70B model has roughly ten times as many parameters as the 7B model. Running Llama2 70B on the Apple M2 would likely result in much slower speeds, especially for token generation.

- Apple M2 vs. High-End GPUs: High-end GPUs like the NVIDIA A100 or H100 are designed to handle large, complex LLMs with much faster speed. While the Apple M2 is a powerful chip, it's not specifically optimized for large language model workloads.
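One way to see why model size matters so much is to estimate how much memory the weights alone need. The sketch below is a back-of-the-envelope calculation under stated assumptions: nominal parameter counts of 7 and 70 billion, about 8.5 and 4.5 bits per weight for Q8_0 and Q4_0 (a per-block scale adds a little overhead on top of 8 and 4 bits), and a hypothetical 16 GB memory budget. It ignores the context cache, activations and everything else running on the machine.

```python
# Approximate bits stored per weight; the quantized values are assumptions
# that include a small per-block scale overhead.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def weight_memory_gb(num_params, fmt):
    """Memory needed for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    total_bytes = num_params * BITS_PER_WEIGHT[fmt] / 8
    return total_bytes / 1e9


def fits(num_params, fmt, memory_gb):
    """True if the weights alone fit in the given memory budget."""
    return weight_memory_gb(num_params, fmt) <= memory_gb


if __name__ == "__main__":
    budget_gb = 16  # hypothetical memory budget
    for label, params in (("7B", 7e9), ("70B", 70e9)):
        for fmt in BITS_PER_WEIGHT:
            size = weight_memory_gb(params, fmt)
            verdict = "fits" if fits(params, fmt, budget_gb) else "does not fit"
            print(f"{label} {fmt}: about {size:.1f} GB, {verdict} in {budget_gb} GB")
```

Under these assumptions, a 7B model fits comfortably once quantized, while a 70B model exceeds the budget even at 4 bits per weight.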

Practical Recommendations: Use Cases and Workarounds

Use Cases:

- Small-Scale LLM Applications: The Apple M2 can successfully run Llama2 7B for smaller applications, such as conversational bots, summarization tasks, and even basic text generation.

- Mobile Development: If you're building a mobile app that leverages a lightweight LLM, the Apple M2 can be a viable option.

- Research and Exploration: Developers and researchers can use the M2 to experiment with LLMs and explore different techniques for optimizing performance.

Workarounds:

- Quantization: Experiment with different quantization levels (Q8_0, Q4_0, etc.) to strike a balance between speed and accuracy.

- Model Pruning: Techniques like model pruning can reduce the size and computational complexity of an LLM, making it more suitable for local execution.

- Cloud-Based Solutions: For larger models like Llama2 70B, cloud-based solutions like Google Colab or Amazon SageMaker offer high-performance computing resources for training and inference.
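As a small illustration of the pruning idea mentioned above, here is a sketch of magnitude pruning, one common approach: the weights with the smallest absolute values are set to zero. The function name `magnitude_prune` and the sample weights are hypothetical, and a real model would be pruned layer by layer with a framework rather than with plain lists.

```python
def magnitude_prune(weights, sparsity):
    """Zero out the given fraction of weights with the smallest magnitude."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0 and 1")
    num_to_zero = int(len(weights) * sparsity)
    # Indices ordered from smallest to largest absolute value.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    drop = set(order[:num_to_zero])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


if __name__ == "__main__":
    # Made-up weights, only for illustration.
    layer = [0.9, -0.05, 0.4, 0.01, -0.7, 0.03]
    print(magnitude_prune(layer, sparsity=0.5))
```

Note that zeroing weights does not shrink the model by itself. The savings only show up when the zeros are stored or computed in a sparse form.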

FAQ:

- Q: What are LLMs?

- A: LLMs are large language models, a type of artificial intelligence that can understand and generate human-like text.

- Q: What is Tokenization?

- A: Tokenization is the process of breaking down text into individual units, called tokens. These tokens are used to represent the text for processing by an LLM.

- Q: What is a GPU?

- A: GPU stands for Graphics Processing Unit. A GPU is a specialized electronic circuit designed to accelerate the creation of images, videos, and other visual content. GPUs are also increasingly used for AI and machine learning tasks, including LLM inference.

- Q: What is quantization?

- A: Quantization is like simplifying a complex recipe. By using fewer ingredients (in this case, bits for numbers), you can make the recipe easier to bake (in this case, faster to process). However, you might lose some of the original flavor (accuracy) in the process.

Keywords:

Apple M2, Llama2 7B, LLM, Large Language Model, Token Generation Speed, Quantization, GPU, GPU Cores, Processing, Generation, F16, Q8_0, Q4_0, Model Pruning, Cloud-Based Solutions, AI, Machine Learning, Deep Dive.