Is Apple M2 Max Powerful Enough for Llama2 7B?

Introduction

Running large language models (LLMs) locally has become increasingly popular, offering the benefits of privacy, speed, and offline access. But with the ever-growing size and complexity of these models, choosing the right hardware is crucial. This article delves into the performance of Apple's M2 Max chip when running the Llama2 7B model, a popular choice for developers and researchers.

Imagine having the power of a cutting-edge AI assistant running entirely on your own machine, ready to answer your questions, generate creative content, or even help you code. That's the promise of local LLMs, and the Apple M2 Max, with its high memory bandwidth and capable GPU, is a strong candidate for the job.

Performance Analysis: Token Generation Speed Benchmarks: Apple M2 Max and Llama2 7B

Token generation speed is a crucial metric when evaluating LLM performance, because it determines how fast the model can read a prompt and produce text. Let's dive into the benchmarks for Llama2 7B on the Apple M2 Max, examining three formats (F16, Q8_0, and Q4_0) for both prompt processing and text generation.

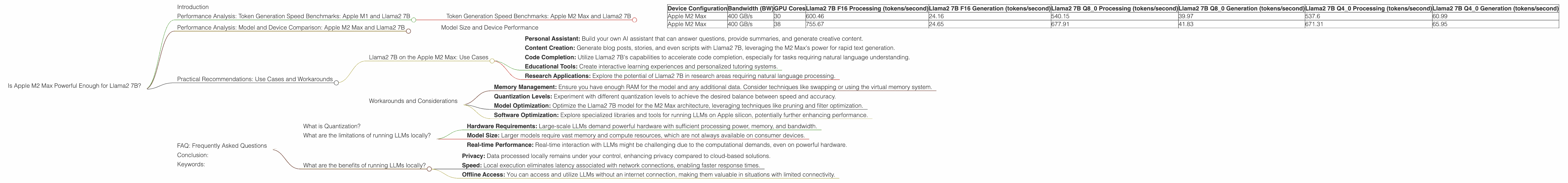

Token Generation Speed Benchmarks: Apple M2 Max and Llama2 7B

| Device Configuration | Bandwidth (BW) | GPU Cores | Llama2 7B F16 Processing (tokens/second) | Llama2 7B F16 Generation (tokens/second) | Llama2 7B Q8_0 Processing (tokens/second) | Llama2 7B Q8_0 Generation (tokens/second) | Llama2 7B Q4_0 Processing (tokens/second) | Llama2 7B Q4_0 Generation (tokens/second) |
|---|---|---|---|---|---|---|---|---|
| Apple M2 Max | 400 GB/s | 30 | 600.46 | 24.16 | 540.15 | 39.97 | 537.6 | 60.99 |
| Apple M2 Max | 400 GB/s | 38 | 755.67 | 24.65 | 677.91 | 41.83 | 671.31 | 65.95 |

- F16 (half-precision floating-point): This is the standard for most deep learning models, offering a balance between accuracy and performance.

- Q8_0 (8-bit quantization): Quantization reduces the memory footprint and computational requirements of the model, making it faster and more efficient.

- Q4_0 (4-bit quantization): This is the most aggressive quantization, pushing the limits of compression while still maintaining reasonable accuracy.

As the table shows, the Apple M2 Max exceeds 500 tokens per second for prompt processing at every quantization level, while generation ranges from about 24 tokens per second at F16 to between 61 and 66 tokens per second at Q4_0. Processing speed drops by roughly 10% when moving from F16 to Q8_0 and is nearly flat from Q8_0 to Q4_0, whereas generation speed rises sharply as quantization becomes more aggressive.
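The ratios behind these observations are easy to check. The following is a minimal sketch that only reuses the figures from the table above; the dictionary layout and function names are illustrative choices, not part of any benchmark tool.

```python
# Benchmark figures copied from the table above, in tokens/second.
# Each entry is (processing, generation), keyed by GPU core count and format.
BENCHMARKS = {
    30: {"F16": (600.46, 24.16), "Q8_0": (540.15, 39.97), "Q4_0": (537.6, 60.99)},
    38: {"F16": (755.67, 24.65), "Q8_0": (677.91, 41.83), "Q4_0": (671.31, 65.95)},
}


def generation_speedup(gpu_cores: int, fmt: str, baseline: str = "F16") -> float:
    """Generation speed of `fmt` divided by that of `baseline` on the same chip."""
    return BENCHMARKS[gpu_cores][fmt][1] / BENCHMARKS[gpu_cores][baseline][1]


def core_scaling(fmt: str, column: int) -> float:
    """38-core figure divided by the 30-core figure.

    column 0 is processing, column 1 is generation.
    """
    return BENCHMARKS[38][fmt][column] / BENCHMARKS[30][fmt][column]


if __name__ == "__main__":
    for fmt in ("Q8_0", "Q4_0"):
        print(f"{fmt} generation vs F16 (30 cores): {generation_speedup(30, fmt):.2f}x")
    print(f"F16 processing, 38 vs 30 cores: {core_scaling('F16', 0):.2f}x")
    print(f"F16 generation, 38 vs 30 cores: {core_scaling('F16', 1):.2f}x")
```

On these figures, Q4_0 generates about 2.5 times faster than F16, while the eight extra GPU cores lift processing by about a quarter and generation by only a few percent. One plausible reading is that generation is limited more by memory bandwidth, which is identical for both configurations, than by GPU compute.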

To put these numbers in perspective, assume the common rule of thumb of about 0.75 English words per token. Processing a prompt at 600 tokens per second then means reading roughly 450 words per second, so a multi-page document is ingested in a few seconds. Generation is slower: at about 61 tokens per second with Q4_0, the M2 Max writes roughly 45 words per second, which is still far faster than most people read.
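Processing and generation speeds combine into a single response time. The sketch below assumes the simplest possible model, in which the prompt is processed first at the processing rate and the answer is then produced at the generation rate; the function name and the example token counts are hypothetical, and real runs add model loading and sampling overhead.

```python
def response_time(prompt_tokens: int, output_tokens: int,
                  processing_tps: float, generation_tps: float) -> float:
    """Estimated seconds to read a prompt and then write a response.

    Assumes the two phases run back to back at constant rates.
    """
    if processing_tps <= 0 or generation_tps <= 0:
        raise ValueError("token rates must be positive")
    return prompt_tokens / processing_tps + output_tokens / generation_tps


if __name__ == "__main__":
    # Rates taken from the 30-core row of the table above.
    q4 = response_time(1000, 250, processing_tps=537.6, generation_tps=60.99)
    f16 = response_time(1000, 250, processing_tps=600.46, generation_tps=24.16)
    print(f"Q4_0: {q4:.1f} s, F16: {f16:.1f} s")
```

For a hypothetical 1,000-token prompt and 250-token answer, this gives roughly 6 seconds with Q4_0 and roughly 12 seconds with F16, with most of the time spent in generation rather than processing.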

Performance Analysis: Model and Device Comparison: Apple M2 Max and Llama2 7B

Model Size and Device Performance

It's important to understand the relationship between model size, device performance, and computational resources. Smaller models like Llama2 7B are well-suited for devices like the Apple M2 Max, offering a sweet spot between performance and efficiency. As we move to larger models like Llama2 70B, the demands on hardware increase significantly, requiring powerful GPUs and higher memory bandwidth.
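A rough way to see why memory bandwidth matters is to assume that generating each token requires reading every model weight from memory once. Under that assumption, bandwidth divided by model size gives an upper bound on generation speed. The sketch below is an estimate only: the parameter count (about 6.74 billion) and the bits-per-weight figures (16, 8.5, and 4.5, which include per-block scale overhead for the quantized formats) are assumptions not stated in this article.

```python
# Assumptions, not taken from the article: approximate parameter count for
# Llama2 7B and storage cost per weight for each format.
PARAMS = 6.74e9
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

BANDWIDTH_BYTES_PER_S = 400e9  # 400 GB/s, from the benchmark table

# Measured generation speeds for the 38-core M2 Max, from the benchmark table.
MEASURED_38_CORE = {"F16": 24.65, "Q8_0": 41.83, "Q4_0": 65.95}


def weight_bytes(fmt: str) -> float:
    """Approximate size of the model weights in bytes for a given format."""
    return PARAMS * BITS_PER_WEIGHT[fmt] / 8


def max_generation_tps(fmt: str) -> float:
    """Upper bound on tokens/second if every weight is read once per token."""
    return BANDWIDTH_BYTES_PER_S / weight_bytes(fmt)


if __name__ == "__main__":
    for fmt, measured in MEASURED_38_CORE.items():
        bound = max_generation_tps(fmt)
        print(f"{fmt}: bound {bound:.1f} tok/s, measured {measured} tok/s "
              f"({measured / bound:.0%} of bound)")
```

Under these assumptions the measured generation speeds all sit below the bound, and the same arithmetic suggests why a model ten times larger would generate roughly ten times slower on the same chip, provided it fits in memory at all.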

Practical Recommendations: Use Cases and Workarounds

Llama2 7B on the Apple M2 Max: Use Cases

The combination of the Apple M2 Max and Llama2 7B unlocks a range of exciting applications:

- Personal Assistant: Build your own AI assistant that can answer questions, provide summaries, and generate creative content.

- Content Creation: Generate blog posts, stories, and even scripts with Llama2 7B, leveraging the M2 Max's power for rapid text generation.

- Code Completion: Utilize Llama2 7B's capabilities to accelerate code completion, especially for tasks requiring natural language understanding.

- Educational Tools: Create interactive learning experiences and personalized tutoring systems.

- Research Applications: Explore the potential of Llama2 7B in research areas requiring natural language processing.

Workarounds and Considerations

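- Memory Management: Ensure you have enough RAM for the model weights and any additional data, such as the context cache. If the model does not fit, the system falls back on swap and virtual memory, which slows generation considerably, so a more aggressive quantization level is usually the better remedy.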

- Quantization Levels: Experiment with different quantization levels to achieve the desired balance between speed and accuracy.

- Model Optimization: Optimize the Llama2 7B model for the M2 Max architecture, leveraging techniques like pruning and filter optimization.

- Software Optimization: Explore specialized libraries and tools for running LLMs on Apple silicon, potentially further enhancing performance.
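The memory-management point can be turned into a quick pre-flight check. The sketch below assumes the Llama2 7B architecture has 32 layers and a hidden size of 4096, and that the key/value cache is stored in 16-bit values; the function names, the headroom default, and the example file sizes are hypothetical, and the model file size should be read from the actual file on disk.

```python
GIB = 1024 ** 3

# Assumed Llama2 7B architecture (not stated in this article).
N_LAYERS = 32
HIDDEN_SIZE = 4096


def kv_cache_bytes(context_tokens: int, bytes_per_value: int = 2) -> int:
    """Key/value cache size: one key and one value vector per layer per token."""
    return 2 * N_LAYERS * HIDDEN_SIZE * bytes_per_value * context_tokens


def fits_in_memory(model_file_gib: float, context_tokens: int,
                   available_gib: float, headroom_gib: float = 4.0) -> bool:
    """True if weights, cache, and headroom for the OS fit in available RAM.

    headroom_gib is a hypothetical safety margin, not a measured figure.
    """
    needed = model_file_gib + kv_cache_bytes(context_tokens) / GIB + headroom_gib
    return needed <= available_gib


if __name__ == "__main__":
    print(f"KV cache at 4096 tokens: {kv_cache_bytes(4096) / GIB:.1f} GiB")
    # Example file sizes below are placeholders; check your own model file.
    print(fits_in_memory(model_file_gib=3.6, context_tokens=4096, available_gib=32))
    print(fits_in_memory(model_file_gib=13.0, context_tokens=4096, available_gib=8))
```

The check is deliberately conservative: it ignores scratch buffers and other applications, so treat a result near the limit as a sign to pick a smaller quantization or a shorter context.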

FAQ: Frequently Asked Questions

What is Quantization?

Quantization is a technique for reducing the memory footprint and computational cost of a model by storing its parameters in lower-precision formats than 32-bit floating point (F32), such as F16, Q8_0, or Q4_0. Think of it as using fewer bits to represent approximately the same information, making the model smaller and faster at a small cost in accuracy.
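To make the idea concrete, here is a toy sketch of 8-bit block quantization: a block of weights shares one floating-point scale, and each weight is stored as a small signed integer. It is written in the spirit of formats like Q8_0 but is not the exact on-disk layout of any real format; the block size of 32 and the function names are assumptions for illustration.

```python
import random

BLOCK_SIZE = 32  # weights per block; an illustrative assumption


def quantize_block(values):
    """Map floats to integers in [-127, 127] with one shared scale per block."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    return scale, [round(v / scale) for v in values]


def dequantize_block(scale, quants):
    """Recover approximate floats from the stored integers and scale."""
    return [scale * q for q in quants]


if __name__ == "__main__":
    random.seed(0)
    weights = [random.gauss(0.0, 0.02) for _ in range(BLOCK_SIZE)]
    scale, quants = quantize_block(weights)
    restored = dequantize_block(scale, quants)
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"scale={scale:.6f}, worst-case error={worst:.6f}")
```

Each restored weight differs from the original by at most half the scale, which is why quantization error stays small when the values in a block have similar magnitudes. A 4-bit scheme follows the same pattern with a much smaller integer range, trading more accuracy for size.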

What are the limitations of running LLMs locally?

While running LLMs locally offers many advantages, it's essential to consider limitations:

- Hardware Requirements: Large-scale LLMs demand powerful hardware with sufficient processing power, memory, and bandwidth.

- Model Size: Larger models require vast memory and compute resources, which are not always available on consumer devices.

- Real-time Performance: Real-time interaction with LLMs might be challenging due to the computational demands, even on powerful hardware.

What are the benefits of running LLMs locally?

The benefits of running LLMs locally are significant:

- Privacy: Data processed locally remains under your control, enhancing privacy compared to cloud-based solutions.

- Speed: Local execution eliminates latency associated with network connections, enabling faster response times.

- Offline Access: You can access and utilize LLMs without an internet connection, making them valuable in situations with limited connectivity.

Conclusion:

The Apple M2 Max chip proves to be a powerful companion for running Llama2 7B locally. It delivers impressive token generation speeds across different quantization levels, making it a viable choice for various use cases. Despite the limitations of running large models locally, the M2 Max's performance paves the way for a more accessible future of AI, bringing the capabilities of these powerful models to the fingertips of developers, researchers, and anyone else who wants to explore the world of local LLMs.

Keywords:

Apple M2 Max, Llama2 7B, Local LLM, Token Generation Speed, Quantization, F16, Q8_0, Q4_0, Performance Benchmarks, GPU, Bandwidth, Use Cases, Workarounds, Developer Tools, AI, Natural Language Processing, Machine Learning.