Is Apple M1 Ultra Good Enough for AI Development?

Introduction

AI development is buzzing with excitement, fueled by the arrival of powerful large language models (LLMs). But the real question is: can your hardware keep up? If you're a developer eager to work with LLMs, a capable machine is crucial. Today, we're taking a closer look at the Apple M1 Ultra, a chip known for its graphics processing prowess, to see if it's the right tool for your AI journey.

Apple M1 Ultra: A Powerful Chip for AI

The Apple M1 Ultra is a powerhouse, built with impressive hardware for tackling demanding tasks. But how does it fare in the wild world of AI development? Let’s break down the key aspects:

Apple M1 Ultra Performance: Token Generation Speed

The M1 Ultra configuration covered here has 48 GPU cores (a 64-core variant also exists). LLM workloads consist largely of matrix multiplications, which run in parallel across these cores, so the GPU plays a crucial role in both training and running AI models.

For this analysis, we'll focus on the performance of the M1 Ultra when working with Llama 2, a popular choice for developers due to its capabilities and user-friendliness.

- Llama 2 7B Model: This is the smallest version of Llama 2, making it a great choice for those starting their AI development journey.

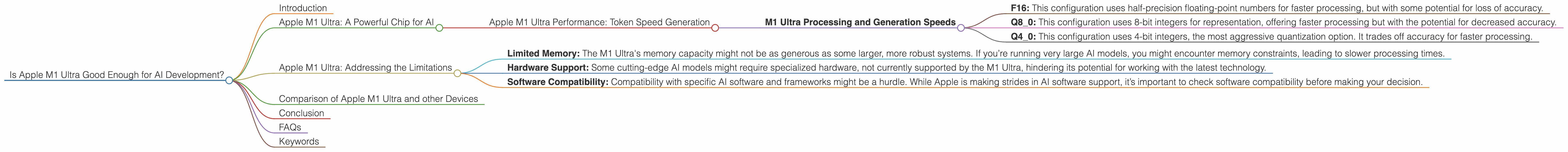

M1 Ultra Processing and Generation Speeds

To gauge the M1 Ultra's potential, let's look at token throughput for the Llama 2 7B model in various configurations. Processing speed is how quickly the model reads the input prompt, and generation speed is how quickly it produces new output tokens:

| Configuration | Processing Speed (tokens/second) | Generation Speed (tokens/second) |
|---|---|---|
| Llama 2 7B F16 | 875.81 | 33.92 |
| Llama 2 7B Q8_0 | 783.45 | 55.69 |
| Llama 2 7B Q4_0 | 772.24 | 74.93 |
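
To make the table's figures more concrete, here is a minimal sketch that turns them into a rough response time. It assumes total time is simply prompt tokens divided by processing speed plus output tokens divided by generation speed. The function name `estimate_latency` and the 512/256 token counts are illustrative choices, not part of any benchmark.

```python
# Throughput figures copied from the table above (tokens/second).
SPEEDS = {
    "F16": {"processing": 875.81, "generation": 33.92},
    "Q8_0": {"processing": 783.45, "generation": 55.69},
    "Q4_0": {"processing": 772.24, "generation": 74.93},
}


def estimate_latency(config, prompt_tokens, output_tokens):
    """Estimate seconds needed to read a prompt and write a reply.

    Assumption: the two phases run back to back at the table's rates,
    ignoring model load time and any other overhead.
    """
    speeds = SPEEDS[config]
    prompt_time = prompt_tokens / speeds["processing"]
    generation_time = output_tokens / speeds["generation"]
    return prompt_time + generation_time


if __name__ == "__main__":
    # Hypothetical workload: a 512-token prompt and a 256-token reply.
    for name in SPEEDS:
        seconds = estimate_latency(name, prompt_tokens=512, output_tokens=256)
        print(f"{name}: {seconds:.1f} s")
```

Under these assumptions the reply takes roughly 8 seconds with F16 and roughly 4 seconds with Q4_0, and almost all of that time is spent in generation rather than prompt processing.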

Let's break down these numbers:

- F16: This configuration stores each weight as a 16-bit (half-precision) floating-point number. It is the least compressed of the three, so it preserves the most accuracy but has the slowest generation speed (33.92 tokens/second).

- Q8_0: This configuration quantizes weights to 8-bit integers. Generation rises to 55.69 tokens/second, with a small potential loss of accuracy.

- Q4_0: This configuration uses 4-bit integers, the most aggressive quantization option of the three. It trades the most accuracy for the fastest generation (74.93 tokens/second).
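
The accuracy trade-off in the list above can be illustrated with a simplified sketch of block-wise integer quantization. This is not the exact llama.cpp storage format: it assumes symmetric rounding with one scale per block of 32 weights, and the function names are hypothetical.

```python
def quantize_block(weights, bits):
    """Quantize one block to signed integers with a single shared scale."""
    qmax = 2 ** (bits - 1) - 1          # 127 for 8-bit, 7 for 4-bit
    largest = max(abs(w) for w in weights)
    scale = largest / qmax if largest > 0 else 1.0
    codes = [round(w / scale) for w in weights]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate weights from the integer codes."""
    return [scale * c for c in codes]


def max_roundtrip_error(weights, bits, block_size=32):
    """Largest absolute error after quantizing and dequantizing."""
    worst = 0.0
    for start in range(0, len(weights), block_size):
        block = weights[start:start + block_size]
        scale, codes = quantize_block(block, bits)
        restored = dequantize_block(scale, codes)
        worst = max(worst, max(abs(a - b) for a, b in zip(block, restored)))
    return worst


if __name__ == "__main__":
    # Illustrative weights evenly spaced between -1.55 and 1.55.
    sample = [i / 10 - 1.55 for i in range(32)]
    for bits in (8, 4):
        print(f"{bits}-bit max error: {max_roundtrip_error(sample, bits):.4f}")
```

With fewer bits there are fewer integer levels to round to, so the worst-case error per weight grows. That is the accuracy cost paid for a smaller model.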

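Prompt processing stays above 770 tokens/second in every configuration, while generation speed more than doubles from F16 to Q4_0 (33.92 to 74.93 tokens/second). Quantization mainly helps generation; processing speed actually dips slightly as precision drops. Overall, the numbers suggest that the M1 Ultra is a capable platform for running a 7B model.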

Apple M1 Ultra: Addressing the Limitations

While the M1 Ultra is a powerful chip overall, let’s talk about the potential limitations for AI development:

- Limited Memory: The M1 Ultra is configurable with 64 GB or 128 GB of unified memory, shared between the CPU and GPU, and it cannot be expanded later. If you're running very large AI models, the weights may not fit comfortably, leading to slower processing or models that fail to load.

- Hardware Support: Some AI tooling targets specialized hardware that the M1 Ultra does not provide. For example, software written only for NVIDIA's CUDA will not run on Apple silicon, which can limit access to the latest models and techniques.

- Software Compatibility: Compatibility with specific AI software and frameworks might be a hurdle. While Apple is making strides in AI software support, it’s important to check software compatibility before making your decision.
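
As a rough illustration of the memory constraint in the list above, the sketch below estimates how much memory a model's weights need at each precision. It makes several assumptions: nominal parameter counts (7B, 13B, 70B), about 8.5 and 4.5 bits per weight for Q8_0 and Q4_0 (32-weight blocks plus one 16-bit scale each), and a hypothetical `headroom` fraction reserving memory for the OS, context, and other data.

```python
# Approximate bits stored per weight. The Q8_0 and Q4_0 values assume
# blocks of 32 weights plus one 16-bit scale per block.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def weights_gib(n_params, fmt):
    """Approximate size of the model weights in GiB."""
    return n_params * BITS_PER_WEIGHT[fmt] / 8 / 2**30


def fits(n_params, fmt, memory_gib, headroom=0.75):
    """Check whether the weights fit in a fraction of total memory.

    Assumption: `headroom` is an illustrative safety margin, not a
    measured limit of the M1 Ultra.
    """
    return weights_gib(n_params, fmt) <= memory_gib * headroom


if __name__ == "__main__":
    for label, n_params in (("7B", 7e9), ("13B", 13e9), ("70B", 70e9)):
        for fmt in BITS_PER_WEIGHT:
            size = weights_gib(n_params, fmt)
            print(f"{label} {fmt}: {size:.1f} GiB, "
                  f"fits in 64 GiB: {fits(n_params, fmt, 64)}, "
                  f"fits in 128 GiB: {fits(n_params, fmt, 128)}")
```

Under these assumptions a 7B model fits easily at any precision, while a 70B model at F16 exceeds even the 128 GB configuration and only becomes practical once quantized.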

Comparison of Apple M1 Ultra and other Devices

So, the Apple M1 Ultra is a good performer for AI development, but is it a good value? Answering that requires comparing it with other popular chips.

We don't currently have enough data for a comprehensive comparison of Llama 2 performance on the M1 Ultra against other devices. However, benchmark results for other hardware can be found in the llama.cpp repository and the GPU Benchmarks on LLM Inference repository.

Such data would show how the M1 Ultra stacks up against other popular devices, helping developers make informed decisions based on their specific needs and budget.

Conclusion

The Apple M1 Ultra is a powerful chip with intriguing potential for AI development. Its strong processing and generation speeds with Llama 2 7B make it a compelling option for those seeking a dedicated device for AI tasks.

However, it's essential to be aware of the limitations mentioned above. Remember, the choice of hardware depends on what you want to achieve with AI development and your individual budget.

FAQs

Q: What is the difference between processing and generation speed?

A: Processing speed refers to how fast the computer can read and analyze the input prompt before the model starts responding. Generation speed refers to how fast the computer can produce the actual output, one token at a time, like the text response from the model.

Q: Is the M1 Ultra good for training AI models?

A: The M1 Ultra can be used for training AI models, but its memory limitations might restrict its effectiveness for exceptionally large models. For more intensive training, a device with higher memory capacity might be a better choice.

Q: Should I choose the M1 Ultra for AI development?

A: The M1 Ultra is a good choice if you're working with smaller AI models like Llama 2 7B and prioritize speed and efficiency. You can use it for various AI tasks, including processing, generation, and basic training. However, if you are working with larger models or require intensive training, a more powerful device with higher memory capacity might be a better fit.

Keywords

Apple M1 Ultra, AI development, large language models (LLMs), Llama 2, token generation speed, processing speed, generation speed, quantization, F16, Q8_0, Q4_0, GPU cores, memory, hardware support, software compatibility.