Is Apple M1 Pro Good Enough for AI Development?

Introduction: Your AI Playground on the Go

Imagine having a machine learning powerhouse in your backpack, ready to run advanced AI models and analyze massive datasets. That's the promise the Apple M1 Pro chip holds for AI developers, particularly those working with large language models (LLMs). But the question remains: can the M1 Pro really handle the computational demands of cutting-edge AI development?

In this article, we'll dive deep into the performance of the Apple M1 Pro chip when it comes to running LLMs, focusing on the Llama 2 family. We'll analyze its capabilities, assess its strengths and limitations, and ultimately help you determine if it's the right tool for your AI projects.

Apple M1 Pro: A Quick Recap

The Apple M1 Pro is the chip inside the 2021 14-inch and 16-inch MacBook Pro. It pairs a capable CPU with a 14- or 16-core GPU optimized for parallel calculations, and both draw on the same pool of unified memory. These features make the M1 Pro a promising candidate for AI development, especially for tasks like running LLMs locally.

The Power of Quantization: Making LLMs More Manageable

Before we delve into the numbers, let's briefly touch on a key concept in running LLMs on limited resources: quantization. Think of it as a clever trick to compress the size of an LLM without significantly sacrificing performance. It's like fitting a full orchestra's sound into a smaller, more manageable music player.

*In the context of LLMs, quantization reduces the precision of the model's parameters, using fewer bits to store the data. This translates to smaller file sizes and faster processing times, making LLMs more suitable for devices with limited memory and processing power.*

For this analysis, we'll be looking at the performance of the M1 Pro chip when running Llama 2 models with different levels of quantization:

- F16: Half-precision floating point, using 16 bits per parameter. This is the unquantized baseline here: the most accurate but also the most resource-intensive.

- Q8_0: Quantized to 8 bits, sacrificing some accuracy for speed. Imagine it as a slightly blurry, but still recognizable, picture.

- Q4_0: Quantized to 4 bits, significantly smaller and faster but with a more noticeable loss in accuracy. Picture it as a more abstract representation of the original.
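To make the trade-off concrete, here is a minimal sketch of block-wise symmetric quantization in plain Python. It is a simplified illustration in the spirit of Q8_0 and Q4_0, not the exact format any particular library uses: the function names, the block size of 32, and the randomly generated "weights" are all assumptions made for this example.

```python
import random

def quantize_block(values, bits):
    """Quantize one block: a shared float scale plus small signed integers."""
    qmax = 2 ** (bits - 1) - 1              # 127 for 8 bits, 7 for 4 bits
    amax = max(abs(v) for v in values)      # largest magnitude in the block
    scale = amax / qmax if amax > 0 else 1.0
    quants = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, quants

def dequantize_block(scale, quants):
    """Recover approximate float values from the stored integers."""
    return [scale * q for q in quants]

def mean_abs_error(weights, bits, block_size=32):
    """Average round-trip error when quantizing block by block."""
    total = 0.0
    for start in range(0, len(weights), block_size):
        block = weights[start:start + block_size]
        scale, quants = quantize_block(block, bits)
        restored = dequantize_block(scale, quants)
        total += sum(abs(a - b) for a, b in zip(block, restored))
    return total / len(weights)

# Hypothetical stand-in for model weights: small values centered on zero.
random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(4096)]

err8 = mean_abs_error(weights, bits=8)
err4 = mean_abs_error(weights, bits=4)
print(f"8-bit mean abs error: {err8:.6f}")
print(f"4-bit mean abs error: {err4:.6f}")
```

Running this should show a noticeably larger round-trip error at 4 bits than at 8 bits, which is the accuracy cost that the blurry-picture analogy describes.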

Apple M1 Pro Token Generation Speed: Putting the Numbers to Work

Now, let's get our hands dirty and see how the M1 Pro performs with different Llama 2 models and quantization levels.

Note: The table below reports two speeds: processing (how fast the model reads your prompt) and generation (how fast it produces new tokens). We'll focus on generation speed, as it's a critical factor in determining the responsiveness of your AI application. Think of a token like a single word or a part of a word. The faster the token generation, the quicker your LLM can produce text.

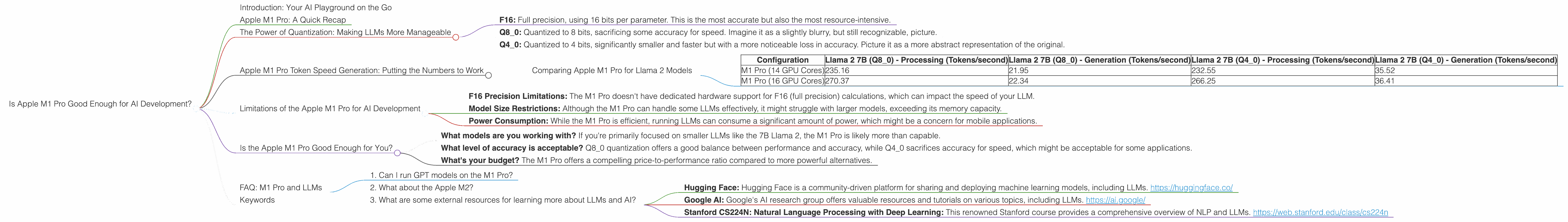

Comparing Apple M1 Pro for Llama 2 Models

| Configuration | Llama 2 7B Q8_0, processing (tokens/s) | Llama 2 7B Q8_0, generation (tokens/s) | Llama 2 7B Q4_0, processing (tokens/s) | Llama 2 7B Q4_0, generation (tokens/s) |
|---|---|---|---|---|
| M1 Pro (14 GPU Cores) | 235.16 | 21.95 | 232.55 | 35.52 |
| M1 Pro (16 GPU Cores) | 270.37 | 22.34 | 266.25 | 36.41 |

Key Takeaways:

- The M1 Pro chip is a capable performer for running Llama 2 7B at both Q8_0 and Q4_0 quantization.

- With the Q8_0 configuration, the 16-GPU-core M1 Pro processes prompts at up to 270.37 tokens per second, which is impressive considering its power efficiency.

- Generation is much slower than prompt processing: about 22 tokens per second at Q8_0 and about 36 at Q4_0, so Q4_0 generates roughly 1.6 times faster. That is still respectable, but your LLM will take longer to formulate responses than it would on more powerful hardware.
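To translate these throughput figures into a feel for responsiveness, the sketch below estimates end-to-end response time from the table's numbers. The helper name `estimate_latency` and the example workload (a 500-token prompt and a 200-token reply) are assumptions chosen for illustration, and the model ignores startup overhead and any slowdown at longer context lengths.

```python
# Throughput figures copied from the benchmark table (tokens/second).
BENCHMARKS = {
    ("14-core", "Q8_0"): {"processing": 235.16, "generation": 21.95},
    ("14-core", "Q4_0"): {"processing": 232.55, "generation": 35.52},
    ("16-core", "Q8_0"): {"processing": 270.37, "generation": 22.34},
    ("16-core", "Q4_0"): {"processing": 266.25, "generation": 36.41},
}

def estimate_latency(gpu, quant, prompt_tokens, output_tokens):
    """Rough seconds to read the prompt and then generate the reply."""
    rates = BENCHMARKS[(gpu, quant)]
    prompt_seconds = prompt_tokens / rates["processing"]
    generation_seconds = output_tokens / rates["generation"]
    return prompt_seconds + generation_seconds

# Hypothetical workload: 500-token prompt, 200-token answer.
for gpu, quant in BENCHMARKS:
    seconds = estimate_latency(gpu, quant, prompt_tokens=500, output_tokens=200)
    print(f"M1 Pro {gpu} GPU, {quant}: about {seconds:.1f} s")
```

Because generation is so much slower than prompt processing, the length of the reply dominates the total in this estimate, which is why the choice of quantization matters more than the GPU core count here.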

Remember: The numbers above represent a snapshot in time. Performance can vary depending on factors like the specific model you're using, the complexity of the task, and the specific configuration of your M1 Pro.

Limitations of the Apple M1 Pro for AI Development

While the M1 Pro shows promise for AI development, it's not without its limitations:

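- F16 Precision Limitations: F16 (half precision) stores 16 bits per parameter, so a 7B model needs roughly 14 GB for its weights alone. That leaves little headroom on a 16 GB M1 Pro, and because every generated token has to read all of the weights, generation is slower than with quantized models.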

- Model Size Restrictions: Although the M1 Pro handles 7B models effectively, larger models can exceed its unified memory, which tops out at 16 GB or 32 GB depending on the configuration.

- Power Consumption: While the M1 Pro is efficient, sustained LLM inference draws a significant amount of power, which can shorten battery life when you're working away from a charger.
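The memory limits above can be checked with simple arithmetic. The sketch below estimates weight sizes for the three Llama 2 sizes and tests them against the two M1 Pro memory configurations. Several things here are assumptions: the nominal parameter counts (7, 13, and 70 billion), the bits-per-weight figures for Q8_0 and Q4_0 (which assume blocks of 32 weights sharing one 16-bit scale), and the 4 GB of headroom reserved for the operating system and context cache. Treat the results as rough guidance, not exact file sizes.

```python
# Approximate storage cost per weight, in bits (assumed block layout:
# 32 weights share one 16-bit scale, adding 0.5 bits per weight).
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

# Nominal parameter counts for the Llama 2 family.
MODELS = {"7B": 7e9, "13B": 13e9, "70B": 70e9}

def weights_gb(n_params, quant):
    """Estimated size of the model weights in gigabytes."""
    return n_params * BITS_PER_WEIGHT[quant] / 8 / 1e9

def fits(n_params, quant, ram_gb, headroom_gb=4.0):
    """True if the weights plus an assumed headroom fit in memory."""
    return weights_gb(n_params, quant) + headroom_gb <= ram_gb

for name, n_params in MODELS.items():
    for quant in BITS_PER_WEIGHT:
        size = weights_gb(n_params, quant)
        verdict = ", ".join(
            f"{ram} GB: {'fits' if fits(n_params, quant, ram) else 'too big'}"
            for ram in (16, 32)
        )
        print(f"Llama 2 {name} {quant}: ~{size:.1f} GB ({verdict})")
```

Under these assumptions, quantized 7B models fit comfortably even on a 16 GB machine, while the 70B model does not fit on either configuration at any of the three precisions.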

Is the Apple M1 Pro Good Enough for You?

The decision depends on your specific requirements and priorities. Here are some questions to consider:

- What models are you working with? If you're primarily focused on smaller LLMs like the 7B Llama 2, the M1 Pro is likely more than capable.

- What level of accuracy is acceptable? Q8_0 quantization offers a good balance between performance and accuracy, while Q4_0 sacrifices accuracy for speed, which might be acceptable for some applications.

- What's your budget? The M1 Pro offers a compelling price-to-performance ratio compared to more powerful alternatives.

If you're looking for a powerful, portable device for running LLMs, the M1 Pro is a fantastic option. It's a great choice for developers who are starting with LLM development, experimenting with different models, or working with smaller, less resource-intensive tasks.

FAQ: M1 Pro and LLMs

1. Can I run GPT models on the M1 Pro?

You can run small open-weight GPT-style models, such as GPT-2, on the M1 Pro. GPT-3 and GPT-4 are a different matter: their weights are not publicly available, and models of that scale require far more processing power and memory than a laptop provides.

2. What about the Apple M2?

The M2 is a newer generation of Apple's silicon, but the base M2 is not a straightforward upgrade for LLM work: it has fewer GPU cores (up to 10) and half the memory bandwidth (100 GB/s versus 200 GB/s) of the M1 Pro. The M2 Pro and M2 Max are the more direct successors and a more suitable option for demanding AI tasks.

3. What are some external resources for learning more about LLMs and AI?

Here are some resources to get you started:

- Hugging Face: Hugging Face is a community-driven platform for sharing and deploying machine learning models, including LLMs. https://huggingface.co/

- Google AI: Google's AI research group offers valuable resources and tutorials on various topics, including LLMs. https://ai.google/

- Stanford CS224N: Natural Language Processing with Deep Learning: This renowned Stanford course provides a comprehensive overview of NLP and LLMs. https://web.stanford.edu/class/cs224n

Keywords

Apple M1 Pro, Llama 2, AI development, LLM, token speed, quantization, Q8_0, Q4_0, F16, GPU, CPU, AI inference, machine learning, NLP.