Is Apple M1 Powerful Enough for Llama3 8B?

Introduction

The world of Large Language Models (LLMs) is buzzing with excitement as researchers push the boundaries of artificial intelligence. LLMs are powerful tools that can translate languages, write code, create stories, and much more. But with their massive size and demanding computational requirements, running them locally can be a challenge. This article explores the performance of the Apple M1 chip when running the Llama3 8B model.

We’ll break down the numbers, compare different quantization levels, and see just how well this chip can handle this powerful language model. Whether you're a developer looking to build new applications or simply curious about the capabilities of your own devices, this deep dive will give you insights into the performance of LLMs on the Apple M1 and how to work around potential limitations.

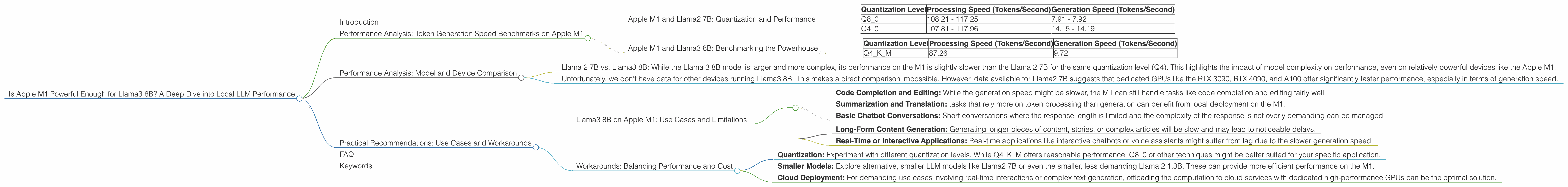

Performance Analysis: Token Generation Speed Benchmarks on Apple M1

Let’s start with the key performance metric: token speed. The benchmarks below report two separate rates. Prompt processing speed measures how quickly the model reads the input text (the prompt). Generation speed measures how quickly it produces new tokens. The faster both are, the smoother and more responsive your LLM application will be.
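Benchmark tools report these rates for you, but you can also measure generation speed yourself. The minimal sketch below times any iterator that yields tokens as they are produced. `fake_token_stream` is a hypothetical stand-in for a real model's streaming output, and both function names are illustrative, not part of any library.

```python
import time
from typing import Iterable, Iterator, Tuple


def measure_generation_speed(tokens: Iterable[str]) -> Tuple[int, float, float]:
    """Consume a token stream; return (token_count, elapsed_seconds, tokens_per_second)."""
    start = time.perf_counter()
    count = 0
    for _ in tokens:  # each item is one generated token
        count += 1
    elapsed = time.perf_counter() - start
    # Avoid dividing by zero if the stream finished instantly.
    rate = count / elapsed if elapsed > 0 else 0.0
    return count, elapsed, rate


def fake_token_stream(n: int, delay_s: float) -> Iterator[str]:
    """Hypothetical stand-in for a model that emits one token every delay_s seconds."""
    for i in range(n):
        time.sleep(delay_s)
        yield f"tok{i}"


if __name__ == "__main__":
    # A 0.1 s delay per token mimics a generation speed of roughly 10 tokens/s.
    count, elapsed, rate = measure_generation_speed(fake_token_stream(5, 0.1))
    print(f"{count} tokens in {elapsed:.2f} s -> {rate:.1f} tokens/s")
```

With a real model, the wait before the first token also includes prompt processing. Time that part separately if you want to keep the two rates apart.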

Apple M1 and Llama2 7B: Quantization and Performance

The base Apple M1 chip has either 7 or 8 GPU cores, depending on the Mac model. These cores share unified memory with the CPU and can deliver solid performance, but only when the software uses them effectively. Here's how the Apple M1 fares with the Llama2 7B model:

| Quantization Level | Prompt Processing Speed (tokens/s) | Generation Speed (tokens/s) |
|---|---|---|
| Q8_0 | 108.21 - 117.25 | 7.91 - 7.92 |
| Q4_0 | 107.81 - 117.96 | 14.15 - 14.19 |

Observations:

- Quantization Matters: For Llama2 7B, Q4_0 nearly doubles generation speed compared to Q8_0 (about 14.2 vs. 7.9 tokens/second), while prompt processing speed stays essentially the same. Q4_0 stores weights as 4-bit values (plus a small per-block scale) instead of 8-bit values. The model is therefore about half the size, and there is half as much data to move from memory for every generated token. Think of it like packing a smaller suitcase: there is less to carry on every trip.

- Processing vs. Generation: The Apple M1 processes prompt tokens for Llama2 7B at over 100 tokens/second, but generates new text at only 8-14 tokens/second. Prompt processing handles many tokens in parallel, so each weight read from memory is reused across the whole batch and the GPU cores stay busy. Generation produces one token at a time and must read all of the model's weights for every token. It is therefore limited mainly by memory bandwidth rather than by compute.
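A back-of-the-envelope estimate shows why quantization mainly helps generation. If every generated token streams all of the weights from memory once, generation speed cannot exceed memory bandwidth divided by model size.

This sketch rests on the following assumptions:

- The base M1's unified-memory bandwidth is about 68.25 GB/s.
- Llama 2 7B has about 6.74 billion parameters.
- llama.cpp's block layouts give 4.5 bits per weight for Q4_0 and 8.5 for Q8_0 (a 16-bit scale per block of 32 weights).

It ignores the few tensors a real model file stores at other precisions, so treat the output as a ceiling rather than a prediction.

```python
# Rough ceiling on generation speed for a memory-bandwidth-bound decoder.
# Assumptions (not measurements): ~68.25 GB/s for the base M1, ~6.74e9 parameters
# for Llama 2 7B, and llama.cpp block sizes of 4.5 (Q4_0) / 8.5 (Q8_0) bits per weight.

M1_BANDWIDTH_GBPS = 68.25
LLAMA2_7B_PARAMS = 6.74e9
BITS_PER_WEIGHT = {"Q4_0": 4.5, "Q8_0": 8.5}

# Measured generation speeds from the table above (midpoints of the reported ranges).
MEASURED_TG = {"Q4_0": (14.15 + 14.19) / 2, "Q8_0": (7.91 + 7.92) / 2}


def model_size_gb(params: float, bits_per_weight: float) -> float:
    """Approximate weight size in gigabytes (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


def generation_ceiling(bandwidth_gbps: float, size_gb: float) -> float:
    """Upper bound on tokens/s if every token streams all weights from memory once."""
    return bandwidth_gbps / size_gb


for quant, bpw in BITS_PER_WEIGHT.items():
    size = model_size_gb(LLAMA2_7B_PARAMS, bpw)
    ceiling = generation_ceiling(M1_BANDWIDTH_GBPS, size)
    print(f"{quant}: ~{size:.2f} GB, ceiling ~{ceiling:.1f} tok/s, "
          f"measured {MEASURED_TG[quant]:.2f} tok/s")
```

Under these assumptions, the ceilings come out near 18 tokens/second for Q4_0 and 9.5 for Q8_0. Both measured speeds sit below their ceilings. The measured Q4_0/Q8_0 speed ratio (about 1.8x) is also close to the 8.5/4.5 ≈ 1.9x ratio in model size.

Prompt processing reuses each weight across many tokens, so it is limited by GPU compute instead. That is why the two quantization levels process prompts at similar speeds.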

Apple M1 and Llama3 8B: Benchmarking the Powerhouse

The Llama3 8B model is a step up from the Llama2 7B, pushing the boundaries of LLM capabilities. Let's see how the Apple M1 handles this larger model:

| Quantization Level | Prompt Processing Speed (tokens/s) | Generation Speed (tokens/s) |
|---|---|---|
| Q4_K_M | 87.26 | 9.72 |

Observations:

- No F16 Data: There is no data for the Apple M1 running Llama3 8B at F16 (unquantized 16-bit weights). The likely reason is memory. M1 Macs ship with 8 GB or 16 GB of unified memory. At 2 bytes per weight, the roughly 8 billion parameters of Llama3 8B need about 16 GB for the weights alone, before the operating system, the KV cache, and runtime buffers are counted.

- Processing vs. Generation: As with Llama2 7B, prompt processing (87.26 tokens/second) is about nine times faster than generation (9.72 tokens/second) on Llama3 8B. The M1 can handle the computational load of an 8B model, but generation, which is bound by memory bandwidth, remains the bottleneck.
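The memory argument for the missing F16 result is easy to check with arithmetic. The sketch below rests on these assumptions:

- Llama 3 8B has about 8.03 billion parameters.
- F16 uses 16 bits per weight.
- Q4_K_M averages roughly 4.9 bits per weight, because it mixes several block types.
- Apple's "8 GB" and "16 GB" configurations are binary gigabytes (GiB).

It counts weights only.

```python
# Weights-only memory estimate for Llama 3 8B on the two M1 memory configurations.
# Assumptions: ~8.03e9 parameters; F16 = 16 bits per weight; Q4_K_M ~4.9 bits per
# weight on average (it mixes several block types); Mac "8 GB"/"16 GB" = GiB.

LLAMA3_8B_PARAMS = 8.03e9
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.9}
M1_MEMORY_GIB = (8, 16)


def weights_gib(params: float, bits_per_weight: float) -> float:
    """Size of the weights alone in GiB (2**30 bytes)."""
    return params * bits_per_weight / 8 / 2**30


for fmt, bpw in BITS_PER_WEIGHT.items():
    need = weights_gib(LLAMA3_8B_PARAMS, bpw)
    for ram in M1_MEMORY_GIB:
        # Headroom left for macOS, the KV cache, and runtime buffers (negative = no fit).
        headroom = ram - need
        print(f"{fmt} on {ram} GB M1: weights ~{need:.1f} GiB, headroom {headroom:+.1f} GiB")
```

F16 weights come to roughly 15 GiB. That does not fit on an 8 GB machine, and on a 16 GB machine it leaves only about 1 GiB for everything else. Q4_K_M leaves several GiB of headroom even on an 8 GB machine.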

Performance Analysis: Model and Device Comparison

To further understand the performance of the Apple M1, let's compare it with other devices and models. Remember, this is a point-in-time snapshot, and performance benchmarks can change.

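Model Comparison:

* Llama 2 7B vs. Llama3 8B: Llama3 8B is noticeably slower on the M1 than Llama 2 7B at a comparable 4-bit quantization:
  * Generation drops from about 14.2 to 9.72 tokens/second (roughly 31% slower).
  * Prompt processing drops from 108-118 to 87.26 tokens/second (roughly 19-26% slower).

  The two runs are not perfectly matched, since Q4_K_M keeps some tensors at higher precision than Q4_0. Still, the larger model (about 8.0 billion parameters versus 6.7 billion) means more weights to read for every token. This highlights the impact of model size on performance, even on relatively capable devices like the Apple M1.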
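To put numbers on this comparison, the sketch below computes how much slower Llama 3 8B (Q4_K_M) is than Llama 2 7B (Q4_0) on the M1, using the figures from the two tables. The variable names are illustrative. The Llama 2 7B figures stay as ranges because that is how they were reported.

```python
# Compare the two Q4-family results on the M1 using the numbers from the tables.
llama2_7b_q4_0 = {"pp": (107.81, 117.96), "tg": (14.15, 14.19)}  # ranges as reported
llama3_8b_q4_k_m = {"pp": 87.26, "tg": 9.72}


def percent_slower(new: float, baseline: float) -> float:
    """How much lower `new` is than `baseline`, as a percentage of the baseline."""
    return (baseline - new) / baseline * 100


for metric in ("pp", "tg"):  # pp = prompt processing, tg = token generation
    low, high = llama2_7b_q4_0[metric]
    new = llama3_8b_q4_k_m[metric]
    # Report a range because the Llama 2 7B baseline is itself a range.
    print(f"{metric}: Llama 3 8B is {percent_slower(new, low):.0f}-"
          f"{percent_slower(new, high):.0f}% slower")
```

Generation shows the larger gap (about 31%), which fits the fact that generation is the memory-bound step and the Llama 3 8B file is larger.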

Device Comparison:

* Unfortunately, we don't have data for other devices running Llama3 8B, so a direct comparison is impossible. However, data available for Llama2 7B suggests that dedicated GPUs like the RTX 3090, RTX 4090, and A100 offer significantly faster performance, especially in terms of generation speed.

Practical Recommendations: Use Cases and Workarounds

Llama3 8B on Apple M1: Use Cases and Limitations

Given the performance analysis, here's how to approach using Llama3 8B on your Apple M1:

Suitable Use Cases:

* Code Completion and Editing: Completions are usually short, so the M1 handles them fairly well despite the slower generation speed.
* Summarization and Translation: Summarization reads a long input and writes a short output, so it leans on the fast prompt-processing path. Translation produces roughly as much text as it reads, so it works best on short passages.
* Basic Chatbot Conversations: Short conversations with brief, not overly demanding responses are manageable.

Limitations:

* Long-Form Content Generation: At under 10 tokens/second, generating long stories or complex articles will be slow, with noticeable delays.
* Real-Time or Interactive Applications: Applications that must respond at length in real time, such as voice assistants or highly interactive chatbots, may lag because of the slower generation speed.
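A rough latency model shows how these use cases and limits play out. Total response time is approximately the prompt length divided by prompt-processing speed, plus the reply length divided by generation speed. The sketch below plugs in the measured Llama3 8B Q4_K_M rates (87.26 and 9.72 tokens/second). The workload token counts are hypothetical examples. The model also ignores the slowdown that longer contexts usually cause, so its estimates are optimistic.

```python
# Back-of-the-envelope response time for Llama 3 8B Q4_K_M on the M1.
# Prompt-processing speed tends to drop as the context grows, so these are optimistic.

PROMPT_TPS = 87.26  # measured prompt processing, tokens/s
GEN_TPS = 9.72      # measured generation, tokens/s


def response_seconds(prompt_tokens: int, output_tokens: int,
                     prompt_tps: float = PROMPT_TPS, gen_tps: float = GEN_TPS) -> float:
    """Time to read the prompt plus time to generate the reply, in seconds."""
    return prompt_tokens / prompt_tps + output_tokens / gen_tps


# Hypothetical workloads as (prompt tokens, output tokens); counts are illustrative.
workloads = {
    "code completion": (300, 30),
    "summarize a long document": (2000, 150),
    "short chat reply": (100, 60),
    "long-form article": (200, 1500),
}

for name, (prompt, output) in workloads.items():
    print(f"{name}: ~{response_seconds(prompt, output):.0f} s")
```

Under these assumptions, a short completion or chat reply takes under ten seconds. A 1,500-token article takes well over two minutes, almost all of it spent generating.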

Workarounds: Balancing Performance and Cost

If you need more performance or have resource constraints, consider these approaches:

* Quantization: Experiment with different quantization levels. Q4_K_M offers reasonable speed. Q8_0 preserves more accuracy but, as the Llama2 7B numbers show, roughly halves generation speed. Lower-bit formats trade further accuracy for speed and memory.

* Smaller Models: Explore smaller models such as Llama2 7B, which generates noticeably faster than Llama3 8B on the M1, or even smaller, less demanding models in the 1B to 3B parameter range. These can run more efficiently on the M1.

* Cloud Deployment: For demanding use cases involving real-time interactions or complex text generation, offloading the computation to cloud services with dedicated high-performance GPUs can be the optimal solution.
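One way to choose a quantization level is to pick the highest-precision format whose weights fit a memory budget. The sketch below is a hypothetical helper, not part of any tool. It rests on these assumptions:

- The bits-per-weight figures are approximate. They are exact for the Q8_0 and Q4_0 block layouts and a rough average for the mixed K-quant Q4_K_M.
- The 0.75 usable-memory fraction is an assumed safety margin for macOS, the KV cache, and runtime buffers.

```python
from typing import Optional

# Approximate bits per weight, highest precision first.
# Q8_0 and Q4_0 follow llama.cpp's block layouts; Q4_K_M is a rough average (assumption).
QUANT_BITS = [("F16", 16.0), ("Q8_0", 8.5), ("Q4_K_M", 4.9), ("Q4_0", 4.5)]


def pick_quantization(params: float, ram_gib: float,
                      usable_fraction: float = 0.75) -> Optional[str]:
    """Return the most precise format whose weights fit in the usable share of RAM.

    usable_fraction is a hypothetical safety margin; tune it for your machine.
    """
    budget_bytes = ram_gib * 2**30 * usable_fraction
    for name, bits in QUANT_BITS:
        if params * bits / 8 <= budget_bytes:
            return name
    return None  # nothing fits: consider a smaller model


print(pick_quantization(8.03e9, 8))   # Llama 3 8B (~8.03e9 params) on an 8 GB M1
print(pick_quantization(8.03e9, 16))  # Llama 3 8B on a 16 GB M1
```

Under these assumptions, an 8 GB M1 lands on Q4_K_M for Llama3 8B. A 16 GB M1 has room for Q8_0 if you prefer accuracy over speed.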

FAQ

Q: What is quantization, and how does it affect LLM performance? A: Quantization is a technique that reduces the size of an LLM by storing its weights with fewer bits. Think of it as compressing a file to save space. Smaller weights mean less data to move through memory, which usually makes the model faster, but it can cost some accuracy. Lower-bit quantization levels like Q4_0 shrink the model the most and typically speed it up the most, with correspondingly larger accuracy trade-offs.
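Here is a simplified sketch of block-wise 4-bit quantization in the spirit of Q4_0. Weights are split into blocks of 32. Each block stores one scale plus a 4-bit integer per weight, and dequantization multiplies back. This is not llama.cpp's exact Q4_0 code, which among other details packs two 4-bit values per byte and uses a slightly different scale rule. It only shows where the size savings and the small accuracy loss come from.

```python
import random
from typing import List, Tuple

BLOCK_SIZE = 32  # Q4_0 also groups weights into blocks of 32


def quantize_block(block: List[float]) -> Tuple[float, List[int]]:
    """Map a block of floats to one scale and 4-bit signed integers in [-8, 7]."""
    max_abs = max(abs(x) for x in block)
    scale = max_abs / 7 if max_abs > 0 else 1.0  # largest weight maps to +/-7
    codes = [max(-8, min(7, round(x / scale))) for x in block]
    return scale, codes


def dequantize_block(scale: float, codes: List[int]) -> List[float]:
    """Recover approximate floats from the scale and the 4-bit codes."""
    return [scale * c for c in codes]


if __name__ == "__main__":
    random.seed(0)
    weights = [random.gauss(0.0, 0.02) for _ in range(BLOCK_SIZE)]
    scale, codes = quantize_block(weights)
    restored = dequantize_block(scale, codes)
    max_err = max(abs(a - b) for a, b in zip(weights, restored))
    # Rounding error per weight is at most half a quantization step.
    print(f"max abs error {max_err:.5f} (bound: scale/2 = {scale / 2:.5f})")
    # Storage if the scale is kept as a 16-bit float, as Q4_0 does.
    quantized_bits = BLOCK_SIZE * 4 + 16
    print(f"{quantized_bits / BLOCK_SIZE} bits per weight")
```

The 4.5 bits per weight in the last line, compared with 16 bits in F16, is why a Q4_0 model is a little over a quarter the size of the same model stored in F16.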

Q: What are other factors that impact LLM performance besides the device and model size? A: Several factors contribute to LLM performance:

* Quantization: We discussed this above.
* Memory Bandwidth: How much data the device can move between its memory and processor per second. This is the main limit on generation speed, because every generated token requires reading all of the model's weights.
* Software and Libraries: Optimizations in the software used to run the LLM (like llama.cpp) can significantly impact performance.

Q: Are there any other devices or alternatives to Apple M1 for running LLMs locally? A: Yes, there are many options, including:

* Nvidia GPUs: The RTX 3090 and RTX 4090 are popular consumer choices, and the A100 is a data-center GPU for high-performance computing.
* AMD GPUs: Cards such as the RX 6900 XT and Radeon VII can be budget-friendly options for local deployments. llama.cpp, for example, supports AMD GPUs through its ROCm/HIP and Vulkan backends.
* Google Colab: Not a local option, but cloud-based platforms like Google Colab offer access to powerful hardware without needing to purchase equipment.

Q: What are some resources for learning more about LLMs and their performance? A: Here are some resources to explore:

* The llama.cpp repository on GitHub: https://github.com/ggerganov/llama.cpp
* LLMs in Action, blogs and tutorials: https://huggingface.co/blog, https://www.datasciencecentral.com/profiles/blogs/a-practical-introduction-to-large-language-models-llms

Keywords

Apple M1, Llama 3 8B, Llama 2 7B, LLM, Large Language Model, Token Generation Speed, Quantization, Q4_K_M, Q8_0, F16, GPU Cores, Performance Benchmarks, Local Deployment, Cloud Deployment, Use Cases, Practical Recommendations, AI, Machine Learning, Natural Language Processing, NLP, Deep Learning.