Is Apple M1 Powerful Enough for Llama3 70B?

Introduction

The world of large language models (LLMs) is rapidly evolving, with new models and architectures emerging at breakneck speed. One of the most exciting trends in this arena is the move towards running these models locally on personal devices. Running locally avoids network round trips, keeps your data on your own machine, and lets you leverage the full power of a device's resources. But a question often arises: Can your hardware handle the demands of these massive models?

In this article, we'll be diving deep into the performance of the popular Apple M1 chip, a powerful processor often found in Macs and iPads, when tasked with running the latest Llama 3 70B model. We'll explore the key factors impacting performance, such as quantization and token generation speeds, and provide practical recommendations for using these models on your M1 device.

So, buckle up, fellow developers, and get ready to unleash the power of LLMs on your local machine!

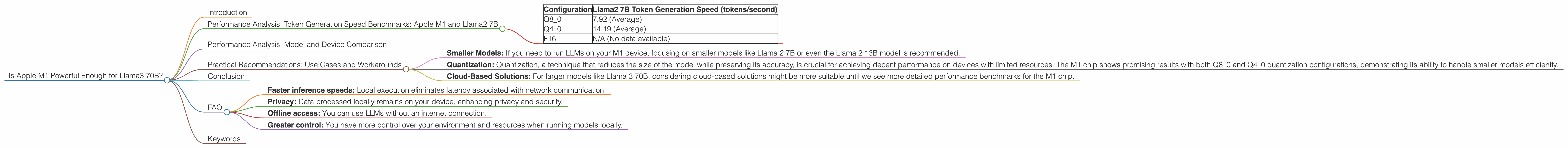

Performance Analysis: Token Generation Speed Benchmarks: Apple M1 and Llama2 7B

To truly understand the capabilities of the Apple M1, we need to dive into the numbers. The table below showcases the token generation speeds for various configurations of the Llama 2 7B model on the M1 chip:

| Configuration | Llama2 7B Token Generation Speed (tokens/second) |
|---|---|
| Q8_0 | 7.92 (Average) |
| Q4_0 | 14.19 (Average) |
| F16 | N/A (No data available) |

Note: The F16 configuration is missing because, as pointed out by the source, the Apple M1 has yet to be tested with this setting on Llama2 7B.

It's important to remember that these benchmarks are only for the Llama2 7B model. However, they provide valuable insights into the general performance capabilities of the M1 chip for running LLMs.
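To make the table's numbers more tangible, here is a minimal sketch that converts them into waiting time and a relative speedup. The two speeds come straight from the table; the 500-token response length is a hypothetical value chosen only for illustration, and the calculation assumes a steady generation rate.

```python
# Average token generation speeds from the table above (tokens/second).
SPEEDS = {"Q8_0": 7.92, "Q4_0": 14.19}


def seconds_for(tokens: int, tokens_per_second: float) -> float:
    """Time to generate `tokens` tokens at a steady generation rate."""
    return tokens / tokens_per_second


def speedup(fast: str, slow: str) -> float:
    """How many times faster one configuration generates tokens than another."""
    return SPEEDS[fast] / SPEEDS[slow]


if __name__ == "__main__":
    response_tokens = 500  # hypothetical response length, not from the benchmark
    for name, tps in SPEEDS.items():
        wait = seconds_for(response_tokens, tps)
        print(f"{name}: {wait:.1f} s for {response_tokens} tokens")
    print(f"Q4_0 is {speedup('Q4_0', 'Q8_0'):.2f}x faster than Q8_0")
```

On these figures, halving the bits per weight comes close to doubling the generation speed, which is why the choice of quantization matters so much on this chip.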

Performance Analysis: Model and Device Comparison

While the Llama 2 7B model demonstrates reasonable performance on the M1, the question remains: Can it handle the even larger Llama 3 70B model?

Unfortunately, the answer is unclear. Based on the data we have, the Apple M1 has not been tested with the Llama 3 70B model. It's crucial to remember that Llama 3 70B has roughly ten times as many parameters as Llama 2 7B, so it needs far more memory and compute.
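In the absence of a benchmark, a back-of-the-envelope estimate of the memory needed just to hold the weights gives a sense of the gap. The sketch below is an estimate, not a measurement. It assumes nominal parameter counts of 7 and 70 billion, llama.cpp's block layout for Q4_0 and Q8_0 (32 weights plus one 16-bit scale per block), and the base M1, which shipped with 8 GB or 16 GB of unified memory. It ignores the KV cache and operating system overhead, so real requirements would be higher.

```python
# Approximate storage cost per weight, in bits.
# Q4_0 and Q8_0: 32 weights per block plus one 16-bit scale (llama.cpp layout).
BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q8_0": (32 * 8 + 16) / 32,  # 8.5 bits per weight
    "Q4_0": (32 * 4 + 16) / 32,  # 4.5 bits per weight
}


def weight_gb(n_params: float, fmt: str) -> float:
    """Approximate size of the weights alone, in gigabytes (10^9 bytes)."""
    return n_params * BITS_PER_WEIGHT[fmt] / 8 / 1e9


if __name__ == "__main__":
    m1_max_memory_gb = 16  # assumption: largest memory option on the base M1
    # Nominal parameter counts; the exact counts differ slightly.
    for model, n_params in [("Llama 2 7B", 7e9), ("Llama 3 70B", 70e9)]:
        for fmt in ("F16", "Q8_0", "Q4_0"):
            size = weight_gb(n_params, fmt)
            note = "exceeds" if size > m1_max_memory_gb else "below"
            print(f"{model} {fmt}: ~{size:.1f} GB of weights "
                  f"({note} {m1_max_memory_gb} GB, before KV cache and OS overhead)")
```

Under these assumptions, even the Q4_0 weights of a 70B model come to roughly 39 GB, well beyond 16 GB, while the quantized 7B variants stay comfortably below it.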

Practical Recommendations: Use Cases and Workarounds

Despite the lack of concrete numbers for Llama 3 70B, we can still draw some practical recommendations based on the data available for Llama 2 7B:

- Smaller Models: If you need to run LLMs on your M1 device, focusing on smaller models like Llama 2 7B or even the Llama 2 13B model is recommended.

- Quantization: Quantization, a technique that reduces the size of the model while preserving most of its accuracy, is crucial for achieving decent performance on devices with limited resources. The M1 chip shows promising results with both Q8_0 and Q4_0 quantization configurations, demonstrating its ability to handle smaller models efficiently.

- Cloud-Based Solutions: For larger models like Llama 3 70B, considering cloud-based solutions might be more suitable until we see more detailed performance benchmarks for the M1 chip.

Here's an analogy: Imagine a small car trying to tow a huge trailer. While it might manage, it'll struggle to move efficiently. The M1 chip is like that small car – it can handle smaller models smoothly, but might struggle with colossal ones like the Llama 3 70B.

Conclusion

This deep dive into the M1 chip's performance with LLMs has revealed that while the Apple M1 is capable of running smaller models effectively, its ability to handle larger models like Llama 3 70B remains unclear. Until more testing and data are available, it's best to stick to more manageable models or opt for cloud-based solutions when dealing with LLMs of that scale. However, the promising performance we've observed with smaller models and quantization suggests that the M1 chip has significant potential in the future of local LLM deployment.

FAQ

Q: What is quantization?

Quantization is a technique used to reduce the size of a model by representing its weights with fewer bits. This process can significantly improve performance on devices with limited resources like mobile phones and laptops. It's like turning a high-resolution image into a lower resolution one – you lose some detail but gain in file size and loading speed.
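The idea can be sketched in a few lines. The example below is a simplified illustration of symmetric 8-bit quantization with one shared scale per block, similar in spirit to Q8_0 but not llama.cpp's actual implementation. The function names and the sample weights are made up for this example.

```python
def quantize_block(weights: list[float]) -> tuple[float, list[int]]:
    """Map a block of floats to signed 8-bit integers plus one shared scale."""
    max_abs = max(abs(w) for w in weights)
    # The largest magnitude maps to 127; an all-zero block gets a dummy scale.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    return scale, [round(w / scale) for w in weights]


def dequantize_block(scale: float, quants: list[int]) -> list[float]:
    """Recover approximate floats from the integers and the shared scale."""
    return [q * scale for q in quants]


if __name__ == "__main__":
    block = [0.12, -0.50, 0.33, 0.07]  # made-up weights for illustration
    scale, quants = quantize_block(block)
    restored = dequantize_block(scale, quants)
    worst = max(abs(a - b) for a, b in zip(block, restored))
    print("integers:", quants)
    print(f"largest rounding error: {worst:.4f}")
```

Each weight now takes 8 bits instead of 16 or 32, and the rounding error per weight is at most half the scale. That is the "lost detail" from the image analogy.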

Q: How do I get started with running LLMs locally?

There are several open-source projects like llama.cpp that enable you to run LLMs locally on your device. These projects focus on inference, providing tools and libraries for loading quantized models and generating text. Start with the llama.cpp documentation and explore their tutorials for a step-by-step guide.
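Once you have a local runtime working, you may want to measure tokens per second on your own machine. The sketch below is a minimal timing harness under stated assumptions: `fake_generate` is a hypothetical stand-in that yields tokens with an artificial delay, not a real llama.cpp API. In practice you would replace it with whatever streaming function your runtime exposes.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(generate: Callable[[str], Iterable[str]], prompt: str) -> float:
    """Consume a token stream and return the average generation rate."""
    start = time.perf_counter()
    count = sum(1 for _ in generate(prompt))
    elapsed = time.perf_counter() - start
    return count / elapsed if elapsed > 0 else float("inf")


def fake_generate(prompt: str) -> Iterable[str]:
    """Hypothetical stand-in for a real runtime: one token every 10 ms."""
    for word in ("local", "models", "are", "fun"):
        time.sleep(0.01)
        yield word


if __name__ == "__main__":
    rate = tokens_per_second(fake_generate, "Hello")
    print(f"{rate:.1f} tokens/second")
```

Note that this simple timer measures the whole call, so with a real model the result also includes the time spent processing the prompt.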

Q: What are the advantages of running LLMs locally?

Running LLMs locally offers various advantages over cloud-based solutions, including:

- Lower latency: Local execution eliminates the latency associated with network communication, although generation speed itself still depends on your hardware.

- Privacy: Data processed locally remains on your device, enhancing privacy and security.

- Offline access: You can use LLMs without an internet connection.

- Greater control: You have more control over your environment and resources when running models locally.

Keywords

Apple M1, Llama 3 70B, Llama2 7B, LLM, Large Language Model, Token Generation Speed, Quantization, Performance, Device, Local Inference, Cloud-Based Solutions, Hardware, GPU, GPU Cores, BW, Processing, Generation, F16, Q8_0, Q4_0