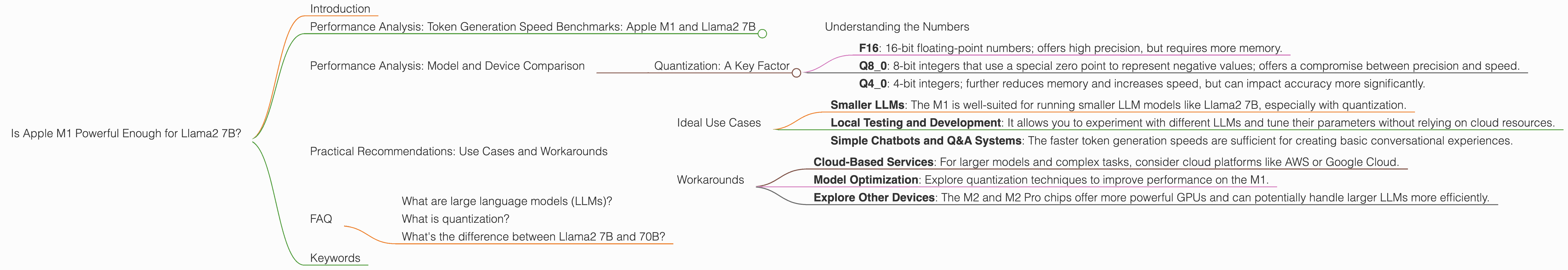

Is Apple M1 Powerful Enough for Llama2 7B?

Introduction

The world of large language models (LLMs) is buzzing with excitement, and for good reason! These powerful AI systems can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in a comprehensive and informative way. But running these models locally, on your own computer, requires some serious horsepower.

Enter the Apple M1 chip. This powerful processor has taken the tech world by storm, promising incredible performance for tasks like video editing and gaming. But can it handle the computational demands of LLMs like Llama2 7B?

This article dives deep into the performance of Llama2 7B on the Apple M1 chip, analyzing its token generation speeds and exploring the limitations of this powerful yet budget-friendly device. We'll also look at different quantization techniques and how they affect performance. So, grab your coffee, and let's dive into the world of local LLM performance!

Performance Analysis: Token Generation Speed Benchmarks: Apple M1 and Llama2 7B

The speed at which an LLM can generate tokens is a crucial benchmark for its practical usability. Token generation is the step in which the model produces its output one token at a time, where a token is a unit of text such as a word or part of a word. (Splitting input text into those units is a separate step called tokenization.)

Here's a table summarizing the token generation speed of Llama2 7B running on the Apple M1 chip:

| Quantization | Generation Speed (tokens/second) |
|---|---|
| Q8_0 | 7.92 |
| Q4_0 | 14.19 |

Important Note: The data for Llama2 7B on the Apple M1 chip is limited and does not include all quantization levels (like F16). This means we can't make a complete comparison for all possible scenarios.

Understanding the Numbers

These numbers reveal some interesting insights. With Q8_0 quantization, the model generates 7.92 tokens/second, or roughly 8 tokens per second. Q4_0 quantization raises that to 14.19 tokens/second, about 1.8 times faster.

Imagine a conversation with a chatbot. At 8 tokens per second, replies can feel a bit slow for a natural dialogue, especially since a token is often only part of a word. At 14 tokens per second, however, the conversation becomes more fluid and enjoyable.
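To make these rates concrete, here is a small sketch that converts them into waiting time for a reply. The speeds come from the table above; the 200-token reply length is a hypothetical figure chosen only for illustration, and the calculation ignores prompt processing time.

```python
# Generation speeds reported in the table above (tokens/second).
SPEEDS = {"Q8_0": 7.92, "Q4_0": 14.19}

# Assumption: a hypothetical reply length, not a measured value.
REPLY_TOKENS = 200


def seconds_to_generate(num_tokens: int, tokens_per_second: float) -> float:
    """Time to emit num_tokens at a steady rate (prompt processing excluded)."""
    return num_tokens / tokens_per_second


for name, speed in SPEEDS.items():
    wait = seconds_to_generate(REPLY_TOKENS, speed)
    print(f"{name}: {wait:.1f} s for {REPLY_TOKENS} tokens")

# Relative speed of the two quantization levels.
speedup = SPEEDS["Q4_0"] / SPEEDS["Q8_0"]
print(f"Q4_0 is {speedup:.2f}x faster than Q8_0")
```

Under these assumptions, a 200-token reply takes about 25 seconds with Q8_0 and about 14 seconds with Q4_0.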

Remember: These benchmarks are just a snapshot and do not encompass all the factors that can impact performance, such as the complexity of the prompt, the model's architecture, and the specific implementation.
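Because results vary with the setup, it can be worth measuring on your own machine. The sketch below shows one way to time a token stream. `fake_generate` is a hypothetical stand-in, not a real library call; in practice you would replace it with whatever streaming interface your inference tool provides.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Count the tokens yielded by generate() and divide by elapsed wall time."""
    start = time.perf_counter()
    count = sum(1 for _ in generate())
    elapsed = time.perf_counter() - start
    return count / elapsed


def fake_generate() -> Iterable[str]:
    """Hypothetical stand-in for a model: yields 20 tokens with a small delay."""
    for i in range(20):
        time.sleep(0.005)  # simulated per-token compute time
        yield f"tok{i}"


rate = tokens_per_second(fake_generate)
print(f"{rate:.1f} tokens/second")
```

Note that this simple timer includes the wait for the first token, so with a real model it would mix prompt processing into the generation rate unless the two are timed separately.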

Performance Analysis: Model and Device Comparison

While our analysis focuses on the Apple M1, it's helpful to compare its performance with other devices. However, there is not enough data available to directly compare the M1 with other devices for Llama2 7B.

Quantization: A Key Factor

Quantization is the process of reducing the number of bits used to represent model weights. This can significantly decrease the memory footprint and improve inference speed. However, it can also lead to a slight drop in accuracy.
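As a rough illustration of the idea, the sketch below quantizes a handful of made-up weights to 8 and 4 bits using one shared scale, then measures how far the restored values drift from the originals. It is a simplified example of symmetric quantization in general, not the exact routine any particular inference library uses.

```python
def quantize_block(weights, bits):
    """Symmetric quantization: one shared scale, integers in [-qmax, qmax]."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    quants = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate weights from the scale and the stored integers."""
    return [scale * q for q in quants]


def max_error(weights, bits):
    """Largest absolute difference between original and restored weights."""
    scale, quants = quantize_block(weights, bits)
    restored = dequantize_block(scale, quants)
    return max(abs(w - r) for w, r in zip(weights, restored))


# Made-up example values, not real model weights.
weights = [0.12, -0.53, 0.98, -0.09, 0.44, -0.88, 0.30, 0.05]

print(f"8-bit max error: {max_error(weights, 8):.4f}")
print(f"4-bit max error: {max_error(weights, 4):.4f}")
```

The 4-bit version has far fewer integer levels to choose from, so its rounding error is larger. That is the accuracy cost paid for the smaller footprint.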

Let's break down the different quantization levels:

- F16: 16-bit floating-point numbers; offers high precision, but requires more memory.

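- Q8_0: 8-bit integers stored in small blocks, each with a shared scale factor (in the GGML/llama.cpp naming these labels come from, the "_0" suffix means scale only, with no offset); offers a compromise between precision and speed.

- Q4_0: 4-bit integers stored in blocks with a shared scale factor; further reduces memory and increases speed, but can impact accuracy more significantly.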

By comparing the performance of different quantization levels on the same hardware, we can understand the trade-offs between accuracy and speed. In our case, Q4_0 is about 1.8 times faster than Q8_0 on the Apple M1, while Q8_0 keeps more precision. The benchmark data covers speed only, so the accuracy side of the trade-off has to be judged separately.
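The memory side of the trade-off can be estimated with simple arithmetic. The sketch below assumes a nominal 7 billion weights and the GGML-style block layout, in which 32 quantized weights share one 16-bit scale. Both are assumptions for illustration: the real parameter count and file sizes differ somewhat, and the estimate covers the weights only, not the context cache or runtime overhead.

```python
# Assumption: "7B" taken as a nominal 7 billion weights.
NOMINAL_PARAMS = 7e9

# Effective bits per weight. For the quantized formats this assumes blocks of
# 32 weights that share one 16-bit scale (GGML-style layout).
BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q8_0": (32 * 8 + 16) / 32,  # 8.5 bits per weight
    "Q4_0": (32 * 4 + 16) / 32,  # 4.5 bits per weight
}


def weight_gigabytes(params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in decimal gigabytes."""
    return params * bits_per_weight / 8 / 1e9


for name, bits in BITS_PER_WEIGHT.items():
    print(f"{name}: ~{weight_gigabytes(NOMINAL_PARAMS, bits):.1f} GB")
```

Under these assumptions the weights need roughly 14 GB at F16, 7.4 GB at Q8_0, and 3.9 GB at Q4_0, which matters on M1 machines, where the CPU and GPU share one pool of memory.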

Practical Recommendations: Use Cases and Workarounds

The Apple M1 chip might not be a powerhouse for running massive LLMs like Llama2 70B, but it can handle smaller models like Llama2 7B quite well.

Ideal Use Cases

- Smaller LLMs: The M1 is well-suited for running smaller models like Llama2 7B, especially with quantization.

- Local Testing and Development: It allows you to experiment with different LLMs and tune their parameters without relying on cloud resources.

- Simple Chatbots and Q&A Systems: Generation speeds of roughly 8 to 14 tokens/second are sufficient for creating basic conversational experiences.

Workarounds

- Cloud-Based Services: For larger models and complex tasks, consider cloud platforms like AWS or Google Cloud.

- Model Optimization: Explore quantization techniques to improve performance on the M1.

- Explore Other Devices: The M2 and M2 Pro chips offer more powerful GPUs and can potentially handle larger LLMs more efficiently.

FAQ

What are large language models (LLMs)?

LLMs are a type of artificial intelligence that can understand and generate human-like text. They are trained on massive datasets and can perform various tasks, including translation, summarization, and question answering.

What is quantization?

Quantization is a technique used to reduce the precision of model weights, which can lead to smaller memory footprints and faster inference speeds. Imagine representing a number with fewer digits, potentially sacrificing some accuracy in exchange for efficiency.

What's the difference between Llama2 7B and 70B?

The number after "Llama2" refers to the approximate number of parameters in the model, in billions: about 7 billion for Llama2 7B and about 70 billion for Llama2 70B. A model with more parameters is larger and can potentially perform more complex tasks but demands more computational resources. Llama2 7B is smaller and less demanding than Llama2 70B.

Keywords

Apple M1, Llama2 7B, Local LLM, Token Generation Speed, Quantization, Q8_0, Q4_0, GPU, Performance, Benchmarks, Use Cases, Workarounds, Cloud Computing, Model Optimization, AI, Machine Learning, Natural Language Processing, Chatbot, Q&A, Inference.