Is Apple M1 Max Powerful Enough for Llama3 8B?

Introduction

The rise of large language models (LLMs) has revolutionized natural language processing, opening up new possibilities for developers and enthusiasts alike. But these powerful AI brains crave computational muscle, and choosing the right hardware platform is crucial for unleashing their full potential.

This article delves into the performance of the Apple M1 Max, a popular and powerful chip, when running the Llama3 8B LLM. We'll explore token generation speeds, compare different quantization techniques, and provide practical recommendations for use cases. So, if you're considering building a local LLM setup, this guide will help you make an informed decision.

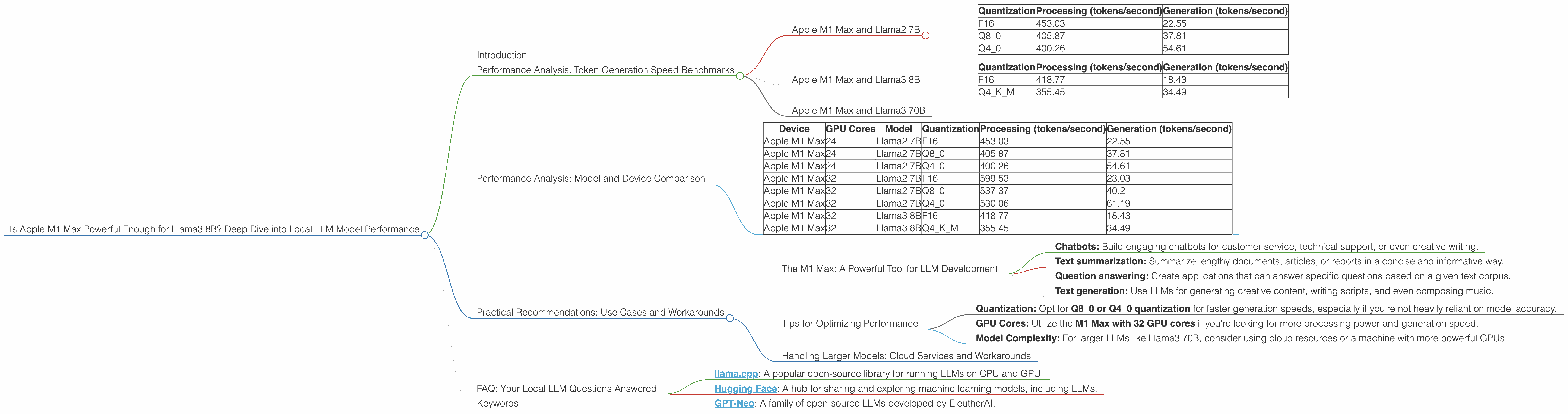

Performance Analysis: Token Generation Speed Benchmarks

Apple M1 Max and Llama2 7B

Let's start with the token generation speed benchmarks for the Apple M1 Max with different configurations:

| Quantization | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|
| F16 | 453.03 | 22.55 |
| Q8_0 | 405.87 | 37.81 |
| Q4_0 | 400.26 | 54.61 |

As the table shows, the M1 Max performs well with the smaller Llama2 7B model. The 24-GPU-core variant reaches 453.03 tokens/second for prompt processing and 22.55 tokens/second for generation at F16. Moving down to Q8_0 and Q4_0, processing speed drops slightly (to 405.87 and 400.26 tokens/second), while generation speed rises substantially, to 37.81 and 54.61 tokens/second.
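To put numbers on that trade-off, the short sketch below computes each quantization's speed relative to F16, using only the figures from the table above. The dictionary and function names are illustrative and not part of any benchmark tool.

```python
# Llama2 7B on the 24-core M1 Max, copied from the table above:
# quantization -> (processing tokens/s, generation tokens/s)
LLAMA2_7B_24_CORE = {
    "F16": (453.03, 22.55),
    "Q8_0": (405.87, 37.81),
    "Q4_0": (400.26, 54.61),
}


def relative_to_f16(results):
    """Return each quantization's speeds as a multiple of the F16 speeds."""
    base_processing, base_generation = results["F16"]
    return {
        name: (processing / base_processing, generation / base_generation)
        for name, (processing, generation) in results.items()
    }


if __name__ == "__main__":
    for name, (proc, gen) in relative_to_f16(LLAMA2_7B_24_CORE).items():
        print(f"{name}: processing x{proc:.2f}, generation x{gen:.2f}")
```

By this measure, Q4_0 gives up roughly 12% of prompt-processing speed and generates about 2.4 times faster than F16.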

Apple M1 Max and Llama3 8B

Now, let's move on to the real star of the show – the Llama3 8B model. Here's a breakdown of its performance on the M1 Max:

| Quantization | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|
| F16 | 418.77 | 18.43 |
| Q4_K_M | 355.45 | 34.49 |

The M1 Max handles Llama3 8B well, processing 418.77 tokens/second at F16 and 355.45 tokens/second at Q4_K_M. Generation runs at 18.43 tokens/second at F16 and 34.49 tokens/second at Q4_K_M. That is still usable, but slower than Llama2 7B: at F16, generation falls from roughly 23 to 18.43 tokens/second with the larger 8B model.
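As a rough way to read these figures, the sketch below estimates how long a single request would take, assuming prompt tokens are consumed at the processing rate and output tokens are produced at the generation rate. The function name and the 512-token prompt with a 256-token reply are illustrative assumptions; model loading and sampling overhead are ignored.

```python
def estimate_seconds(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Estimate request time: prompt processing plus token generation."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Llama3 8B on the M1 Max, from the table above:
# quantization -> (processing tokens/s, generation tokens/s)
LLAMA3_8B = {
    "F16": (418.77, 18.43),
    "Q4_K_M": (355.45, 34.49),
}

if __name__ == "__main__":
    for name, (processing, generation) in LLAMA3_8B.items():
        seconds = estimate_seconds(512, 256, processing, generation)
        print(f"{name}: about {seconds:.1f} s for a 512-token prompt and 256-token reply")
```

In both cases the generation phase dominates the total, which is why the generation column matters most for interactive use.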

Apple M1 Max and Llama3 70B

Unfortunately, we have no benchmarks for Llama3 70B on the M1 Max; the data used here does not include that combination. Running it would likely be a major challenge: the model is nearly nine times larger than Llama3 8B, and its weights would have to fit in the M1 Max's unified memory alongside everything else.
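A back-of-the-envelope estimate supports that assumption. The sketch below multiplies a nominal parameter count by the bits stored per weight. The 16, 8.5, and 4.5 bits-per-weight figures are those of llama.cpp's F16, Q8_0, and Q4_0 formats (the quantized formats store one 16-bit scale per block of 32 weights), and 64 GB is the largest unified-memory configuration Apple offered for the M1 Max. The estimate counts weights only, so the KV cache and runtime overhead come on top.

```python
# Bits stored per weight, including the per-block scale for quantized formats.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

M1_MAX_MAX_MEMORY_GB = 64  # largest M1 Max configuration


def weights_gb(parameters, bits_per_weight):
    """Approximate size of the model weights in gigabytes (10**9 bytes)."""
    return parameters * bits_per_weight / 8 / 1e9


if __name__ == "__main__":
    # Nominal parameter counts; the real models differ slightly.
    for label, parameters in (("8B", 8e9), ("70B", 70e9)):
        for quant, bits in BITS_PER_WEIGHT.items():
            size = weights_gb(parameters, bits)
            fits = "fits" if size < M1_MAX_MAX_MEMORY_GB else "does not fit"
            print(f"{label} {quant}: ~{size:.1f} GB of weights, {fits} in 64 GB")
```

Under these assumptions, 70B weights come to about 140 GB at F16 and about 74 GB at Q8_0, both beyond any M1 Max. Only a 4-bit build (about 39 GB) would fit, and then only on the 64 GB configuration.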

Performance Analysis: Model and Device Comparison

Let's compare the M1 Max's performance with other devices and LLM models:

| Device | GPU Cores | Model | Quantization | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|---|---|---|
| Apple M1 Max | 24 | Llama2 7B | F16 | 453.03 | 22.55 |
| Apple M1 Max | 24 | Llama2 7B | Q8_0 | 405.87 | 37.81 |
| Apple M1 Max | 24 | Llama2 7B | Q4_0 | 400.26 | 54.61 |
| Apple M1 Max | 32 | Llama2 7B | F16 | 599.53 | 23.03 |
| Apple M1 Max | 32 | Llama2 7B | Q8_0 | 537.37 | 40.2 |
| Apple M1 Max | 32 | Llama2 7B | Q4_0 | 530.06 | 61.19 |
| Apple M1 Max | 32 | Llama3 8B | F16 | 418.77 | 18.43 |
| Apple M1 Max | 32 | Llama3 8B | Q4_K_M | 355.45 | 34.49 |

This table shows how both GPU core count and quantization affect performance. With Llama2 7B at F16, the 32-core M1 Max processes 599.53 tokens/second, compared to 453.03 tokens/second with 24 cores, while generation speed barely changes (23.03 versus 22.55 tokens/second). Quantization, on the other hand, drives generation speed: Q4_0 delivers the fastest generation on both chip variants.
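The core-count effect can be quantified directly from the Llama2 7B rows of the table. The sketch below compares the 24-core and 32-core results; the names are illustrative.

```python
# Llama2 7B rows from the comparison table:
# quantization -> {gpu_cores: (processing tokens/s, generation tokens/s)}
LLAMA2_7B = {
    "F16": {24: (453.03, 22.55), 32: (599.53, 23.03)},
    "Q8_0": {24: (405.87, 37.81), 32: (537.37, 40.2)},
    "Q4_0": {24: (400.26, 54.61), 32: (530.06, 61.19)},
}


def core_scaling(rows):
    """Return the 32-core/24-core speed ratio for processing and generation."""
    (proc_24, gen_24), (proc_32, gen_32) = rows[24], rows[32]
    return proc_32 / proc_24, gen_32 / gen_24


if __name__ == "__main__":
    print(f"core ratio: x{32 / 24:.2f}")
    for quant, rows in LLAMA2_7B.items():
        proc, gen = core_scaling(rows)
        print(f"{quant}: processing x{proc:.2f}, generation x{gen:.2f}")
```

Processing speed rises by about 32% in every row, close to the 33% increase in GPU cores, while generation speed improves by only 2% to 12%. That pattern is consistent with prompt processing being compute-bound and token generation being limited mainly by memory bandwidth, though the benchmark data alone does not prove it.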

Practical Recommendations: Use Cases and Workarounds

The M1 Max: A Powerful Tool for LLM Development

The Apple M1 Max is a powerful chip that's well-suited for running smaller LLMs like Llama2 7B or Llama3 8B. Its performance is impressive, and the M1 Max can be a great choice for experimentation, prototyping, and smaller-scale LLM-powered applications like:

- Chatbots: Build engaging chatbots for customer service, technical support, or even creative writing.

- Text summarization: Summarize lengthy documents, articles, or reports in a concise and informative way.

- Question answering: Create applications that can answer specific questions based on a given text corpus.

- Text generation: Use LLMs for generating creative content, writing scripts, and even composing music.

Tips for Optimizing Performance

- Quantization: Opt for Q8_0 or Q4_0 quantization for faster generation speeds, especially if a small loss in model accuracy is acceptable.

- GPU Cores: Choose the M1 Max with 32 GPU cores if you want faster prompt processing; generation speed improves only modestly.

- Model Complexity: For larger LLMs like Llama3 70B, consider using cloud resources or a machine with more powerful GPUs.

Handling Larger Models: Cloud Services and Workarounds

If you're looking to run larger LLMs like Llama3 70B, cloud services provide a viable alternative to local hardware. Platforms like Amazon SageMaker, Google Cloud AI Platform, and Microsoft Azure Machine Learning offer dedicated GPU instances and optimized environments for running LLMs.

For local execution, techniques such as knowledge distillation (training a smaller model to imitate a larger one), optionally followed by fine-tuning the smaller model for your task, can reduce the memory footprint and computational demands of large LLMs. These methods can compromise accuracy, but they can be effective for optimizing models for resource-constrained devices.

FAQ: Your Local LLM Questions Answered

Q: What is quantization and how does it affect performance?

A: Quantization is a technique used to reduce the memory footprint of LLMs. It converts the model's weights from floating-point formats (F32, F16) to lower-precision formats such as Q8_0 or Q4_0. A smaller model means less data to read from memory for each generated token, which likely explains why generation speed rose in the benchmarks above even though prompt processing speed dropped slightly. However, quantization can also affect model accuracy, so it's a trade-off you need to consider.
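To make the idea concrete, here is a minimal sketch of block-wise 8-bit quantization in plain Python. It follows the general scheme behind formats like Q8_0 (a block of weights shares one scale, and each weight is stored as a small integer), but it is a simplified illustration, not llama.cpp's actual implementation. The function names and the `block_size` parameter are assumptions made for the example.

```python
def quantize_block(weights):
    """Quantize one block of floats to int8-range values plus a shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [round(w / scale) for w in weights]  # each value lies in [-127, 127]
    return scale, quantized


def dequantize_block(scale, quantized):
    """Recover approximate floats from the stored integers and scale."""
    return [q * scale for q in quantized]


def quantize(weights, block_size=32):
    """Split the weights into blocks and quantize each block independently."""
    return [
        quantize_block(weights[start:start + block_size])
        for start in range(0, len(weights), block_size)
    ]


if __name__ == "__main__":
    # Made-up example weights, quantized in blocks of 4 to keep the output short.
    weights = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88, 0.002, 0.45]
    for scale, quantized in quantize(weights, block_size=4):
        restored = dequantize_block(scale, quantized)
        print(quantized, [round(r, 3) for r in restored])
```

Each restored value differs from the original by at most half the block's scale, which is the accuracy cost paid for storing roughly one byte per weight instead of two.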

Q: Why is generation speed important for LLMs?

A: Generation speed determines how quickly an LLM can produce text output. A faster generation speed is crucial for interactive applications like chatbots, where users expect near real-time responses.

Q: Is the Apple M1 Max a viable option for running LLMs?

A: The Apple M1 Max is a good choice for developers and enthusiasts looking to run smaller to medium-sized LLMs like Llama2 7B or Llama3 8B. However, for larger and more complex models like Llama3 70B, it's best to consider cloud services or more powerful GPU hardware.

Q: What are some of the best resources for learning more about local LLM development?

A: Here are a few excellent resources:

- llama.cpp: A popular open-source library for running LLMs on CPU and GPU.

- Hugging Face: A hub for sharing and exploring machine learning models, including LLMs.

- GPT-Neo: A family of open-source LLMs developed by EleutherAI.

Keywords

Apple M1 Max, Llama3 8B, LLM, Large Language Model, Token Generation Speed, Quantization, F16, Q8_0, Q4_0, GPU Cores, Generation Speed, Local Inference, Performance Benchmarks, Cloud Services, Use Cases, Chatbots, Text Summarization, Question Answering, Text Generation, Model Distillation, Fine-tuning, Knowledge Distillation, Developer, Geek, AI, Natural Language Processing, Machine Learning