Is Apple M1 Max Powerful Enough for Llama3 70B?

Introduction

The world of large language models (LLMs) is evolving rapidly, with new models like Llama 3 offering remarkable capabilities. But can your hardware keep up? This article examines the performance of the Apple M1_Max chip running the Llama 3 70B model and answers the key question: is the M1_Max a good fit for running this LLM locally?

Whether you're a developer tinkering with LLMs or simply curious about the latest technological advancements, this deep dive will shed light on the potential and limitations of running Llama 3 70B on the Apple M1_Max.

Performance Analysis

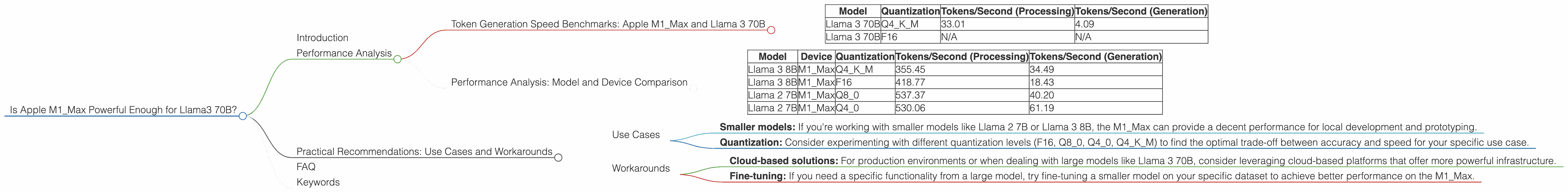

Token Generation Speed Benchmarks: Apple M1_Max and Llama 3 70B

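The key performance metric here is throughput in tokens per second, measured in two phases: prompt processing (how quickly the model reads the input prompt) and generation (how quickly it produces new output tokens). Higher numbers mean faster responses. Generation speed is usually what you notice most in interactive use, because output tokens are produced one at a time. Here are the numbers for Llama 3 70B on the M1_Max: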

| Model | Quantization | Processing (tokens/s) | Generation (tokens/s) |
|---|---|---|---|
| Llama 3 70B | Q4_K_M | 33.01 | 4.09 |
| Llama 3 70B | F16 | N/A | N/A |

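Important Note: No benchmark data is available for Llama 3 70B in F16 on the M1_Max. This is expected: at 16 bits per weight, 70 billion parameters need about 140 GB for the weights alone, more than the 64 GB maximum unified memory of any M1_Max configuration.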
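A back-of-the-envelope estimate shows how much memory the weights alone need at each quantization level: parameters times bits per weight, divided by 8. The sketch below is an approximation under stated assumptions: the 70B parameter count is nominal, the bits-per-weight figures are approximate (Q8_0 and Q4_0 include one 16-bit scale per block of 32 weights, and about 4.85 for Q4_K_M is an assumed average for a mixed format), and the KV cache and other runtime overhead are ignored.

```python
# Rough weight-memory estimate: parameters x bits per weight / 8.
# Bits-per-weight values are approximations (assumption), not exact file sizes.
BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q8_0": 8.5,     # 8-bit codes + one 16-bit scale per 32 weights
    "Q4_0": 4.5,     # 4-bit codes + one 16-bit scale per 32 weights
    "Q4_K_M": 4.85,  # mixed format; approximate average, varies by model
}

M1_MAX_MAX_MEMORY_GB = 64  # largest unified-memory configuration of the M1 Max


def weight_memory_gb(n_params: float, quant: str) -> float:
    """Approximate size of the model weights alone, in gigabytes (1e9 bytes)."""
    return n_params * BITS_PER_WEIGHT[quant] / 8 / 1e9


for quant in ("F16", "Q8_0", "Q4_K_M"):
    size = weight_memory_gb(70e9, quant)  # nominal 70B parameters
    fits = size < M1_MAX_MAX_MEMORY_GB
    print(f"Llama 3 70B {quant}: ~{size:.0f} GB of weights, fits in 64 GB: {fits}")
```

Under these assumptions only the 4-bit variant (about 42 GB) fits within 64 GB; F16 (about 140 GB) and even Q8_0 (about 74 GB) do not.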

Performance Analysis: Model and Quantization Comparison

To put the 70B results in context, here is how the same M1_Max handles smaller models at different quantization levels:

| Model | Device | Quantization | Processing (tokens/s) | Generation (tokens/s) |
|---|---|---|---|---|
| Llama 3 8B | M1_Max | Q4_K_M | 355.45 | 34.49 |
| Llama 3 8B | M1_Max | F16 | 418.77 | 18.43 |
| Llama 2 7B | M1_Max | Q8_0 | 537.37 | 40.20 |
| Llama 2 7B | M1_Max | Q4_0 | 530.06 | 61.19 |

Observations:

- Smaller models are dramatically faster: at the same Q4_K_M quantization, Llama 3 8B generates about 8.4 times as many tokens per second as Llama 3 70B (34.49 vs. 4.09) and processes prompts about 10.8 times faster (355.45 vs. 33.01).

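- Quantization mainly affects generation speed: Llama 3 8B generates about 1.9 times faster at Q4_K_M than at F16 (34.49 vs. 18.43), and Llama 2 7B about 1.5 times faster at Q4_0 than at Q8_0 (61.19 vs. 40.20), likely because generation is largely limited by how much weight data must be read per token. Prompt processing barely benefits; for Llama 3 8B, F16 is actually faster (418.77 vs. 355.45). The fastest generation in these tests is Llama 2 7B at Q4_0.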

- The M1_Max struggles with larger models: Llama 3 70B at Q4_K_M runs, but at about 4 tokens per second it is far slower than the smaller models, so the M1_Max, while powerful, may not be the ideal platform for LLMs of this size.
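The ratios quoted in these observations can be checked directly against the benchmark numbers. This short sketch only copies the figures from the tables above and divides them; it performs no measurements of its own.

```python
# Throughput figures (tokens/second) copied from the M1_Max tables above.
PROCESSING, GENERATION = 0, 1

BENCH = {
    ("Llama 3 70B", "Q4_K_M"): (33.01, 4.09),
    ("Llama 3 8B", "Q4_K_M"): (355.45, 34.49),
    ("Llama 3 8B", "F16"): (418.77, 18.43),
    ("Llama 2 7B", "Q8_0"): (537.37, 40.20),
    ("Llama 2 7B", "Q4_0"): (530.06, 61.19),
}


def speedup(a, b, column):
    """Ratio of config a's throughput to config b's (above 1 means a is faster)."""
    return BENCH[a][column] / BENCH[b][column]


comparisons = [
    ("8B vs 70B (Q4_K_M)", ("Llama 3 8B", "Q4_K_M"), ("Llama 3 70B", "Q4_K_M")),
    ("Q4_K_M vs F16 (8B)", ("Llama 3 8B", "Q4_K_M"), ("Llama 3 8B", "F16")),
    ("Q4_0 vs Q8_0 (7B)", ("Llama 2 7B", "Q4_0"), ("Llama 2 7B", "Q8_0")),
]

for label, a, b in comparisons:
    pp = speedup(a, b, PROCESSING)
    tg = speedup(a, b, GENERATION)
    print(f"{label}: processing {pp:.2f}x, generation {tg:.2f}x")
```

The generation ratios come out at about 8.43, 1.87 and 1.52, while the processing ratios for the two quantization comparisons stay below 1.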

Practical Recommendations: Use Cases and Workarounds

Use Cases

- Smaller models: If you're working with smaller models such as Llama 2 7B or Llama 3 8B, the M1_Max delivers decent performance for local development and prototyping (roughly 18 to 61 tokens per second for generation in the benchmarks above).

- Quantization: Experiment with different quantization levels (F16, Q8_0, Q4_0, Q4_K_M) to find the best trade-off between accuracy and speed for your use case. Lower-bit formats generally generate faster and use less memory, but can lose some accuracy.
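To compare the speed side of that trade-off for a concrete workload, you can combine both throughput columns into an end-to-end estimate: prompt tokens divided by processing speed, plus output tokens divided by generation speed. The sketch below assumes a hypothetical request of 1,000 prompt tokens and 300 output tokens, and treats the benchmark speeds as constant, although real throughput usually drops as the context grows.

```python
# Hypothetical workload (assumption): a 1,000-token prompt and a 300-token answer.
PROMPT_TOKENS = 1000
OUTPUT_TOKENS = 300

# (processing tokens/s, generation tokens/s) from the M1_Max tables above.
SPEEDS = {
    "Llama 3 70B Q4_K_M": (33.01, 4.09),
    "Llama 3 8B Q4_K_M": (355.45, 34.49),
    "Llama 3 8B F16": (418.77, 18.43),
    "Llama 2 7B Q8_0": (537.37, 40.20),
    "Llama 2 7B Q4_0": (530.06, 61.19),
}


def request_seconds(processing_tps, generation_tps, prompt_tokens, output_tokens):
    """Prompt-processing time plus generation time, assuming constant throughput."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Rank configurations from fastest to slowest for this workload.
ranked = sorted(
    SPEEDS.items(),
    key=lambda item: request_seconds(*item[1], PROMPT_TOKENS, OUTPUT_TOKENS),
)
for name, (pp, tg) in ranked:
    seconds = request_seconds(pp, tg, PROMPT_TOKENS, OUTPUT_TOKENS)
    print(f"{name:<20} ~{seconds:.1f} s")
```

Under these assumptions the 70B model takes roughly 104 seconds per request, while the smaller configurations range from about 7 seconds (Llama 2 7B, Q4_0) to about 19 seconds (Llama 3 8B, F16).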

Workarounds

- Cloud-based solutions: For production environments or when dealing with large models like Llama 3 70B, consider leveraging cloud-based platforms that offer more powerful infrastructure.

- Fine-tuning: If you need a specific capability from a large model, consider fine-tuning a smaller model on your own dataset; it may approach the larger model's quality on that task while running much faster on the M1_Max.

FAQ

Q: How does quantization affect LLM performance?

A: Quantization is a technique that reduces the precision of numbers used in the LLM's calculations. This can lead to faster processing and reduced memory usage, but sometimes at the cost of slightly reduced accuracy.
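To make this concrete, here is a simplified block quantizer in plain Python. It is an illustration in the spirit of llama.cpp's Q8_0 and Q4_0 formats (blocks of 32 weights sharing one 16-bit scale), not their actual implementation; the symmetric rounding scheme and the random example weights are assumptions for demonstration. It shows both sides of the trade-off: fewer bits per weight, and a rounding error that grows as precision drops.

```python
import random

BLOCK_SIZE = 32  # weights per block, as in llama.cpp's Q8_0 and Q4_0 formats
SCALE_BITS = 16  # each block stores one 16-bit scale (counted here, not emulated)


def quantize_block(values, bits):
    """Symmetric quantization: one shared scale, one small signed integer per value."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    amax = max(abs(v) for v in values)
    scale = amax / qmax if amax > 0 else 1.0
    codes = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, codes


def dequantize_block(scale, codes):
    """Map integer codes back to approximate floating-point weights."""
    return [c * scale for c in codes]


def bits_per_weight(bits):
    """Storage cost per weight, including the block's shared scale."""
    return (bits * BLOCK_SIZE + SCALE_BITS) / BLOCK_SIZE


random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(BLOCK_SIZE)]  # toy example weights

for bits in (8, 4):
    scale, codes = quantize_block(weights, bits)
    restored = dequantize_block(scale, codes)
    max_err = max(abs(w - r) for w, r in zip(weights, restored))
    print(f"{bits}-bit: {bits_per_weight(bits):.1f} bits/weight vs 16.0 for F16, "
          f"max abs error {max_err:.5f}")
```

Each restored weight is off by at most half a quantization step (half the block's scale), so the 4-bit version saves more memory but rounds each weight more coarsely.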

Q: What are the best tools for running LLMs on the M1_Max?

A: Tools such as llama.cpp (which supports Apple silicon GPUs through Metal) and Hugging Face transformers can run LLMs on the M1_Max.

Q: Are there any other devices that might be better for running Llama 3 70B?

A: Devices with more powerful GPUs, such as the NVIDIA A100 or H100, are generally better suited to larger models.

Keywords

Apple M1_Max, Llama 3, Llama 3 70B, Llama 2, LLM, Large Language Model, quantization, token generation speed, processing, generation, performance, GPU, cloud computing, fine-tuning, device comparison, practical recommendations.