Is Apple M1 Max Powerful Enough for Llama2 7B?

Introduction

Large language models (LLMs) can generate text, translate between languages, draft creative content, and answer questions. Running them locally, however, is resource-intensive and requires capable hardware. This article looks at how the Apple M1 Max chip, a popular choice for developers and creative professionals, performs when running the Llama2 7B LLM.

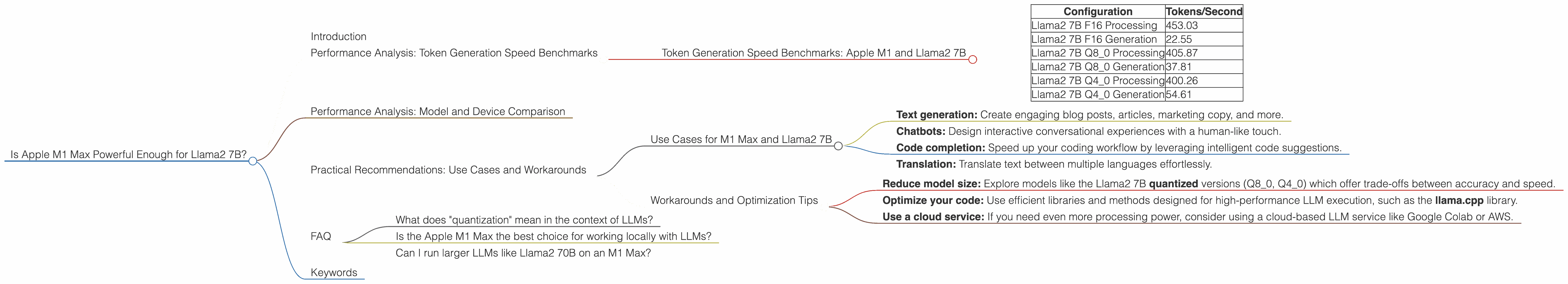

Performance Analysis: Token Generation Speed Benchmarks

Let's start by understanding the key metric for LLM performance: token generation speed. This measure reflects how quickly the model processes and generates text - the higher the number of tokens per second, the faster your LLM runs.

Token Generation Speed Benchmarks: Apple M1 Max and Llama2 7B

| Configuration | Tokens/Second |
|---|---|
| Llama2 7B F16 Processing | 453.03 |
| Llama2 7B F16 Generation | 22.55 |
| Llama2 7B Q8_0 Processing | 405.87 |
| Llama2 7B Q8_0 Generation | 37.81 |
| Llama2 7B Q4_0 Processing | 400.26 |
| Llama2 7B Q4_0 Generation | 54.61 |

A quick explanation of these numbers:

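- Processing speed is the rate, in tokens per second, at which the model reads and processes the input prompt before it starts responding.

- Generation speed is the rate, in tokens per second, at which the model produces the output text, one token at a time.

- F16, Q8_0, and Q4_0 are weight formats. F16 stores weights as 16-bit floating-point numbers, while Q8_0 and Q4_0 are 8-bit and 4-bit quantized formats. Quantization reduces model size and speeds up generation, with some trade-off in accuracy.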

These benchmarks show that the Apple M1 Max handles the Llama2 7B model effectively. Prompt processing runs at roughly 400 to 453 tokens per second across all three formats, while generation ranges from 22.55 tokens per second at F16 to 54.61 at Q4_0.
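To make these rates more concrete, here is a minimal sketch that turns the table's figures into a rough response-time estimate. It assumes total time is simply prompt tokens divided by processing speed plus output tokens divided by generation speed, and it ignores model load time and other overhead. The function name and the 512/256-token workload are hypothetical, chosen only for illustration.

```python
# Throughput figures (tokens/second) copied from the benchmark table above.
BENCHMARKS = {
    "F16": {"processing": 453.03, "generation": 22.55},
    "Q8_0": {"processing": 405.87, "generation": 37.81},
    "Q4_0": {"processing": 400.26, "generation": 54.61},
}


def estimate_latency_seconds(fmt, prompt_tokens, output_tokens):
    """Rough end-to-end time: prompt processing plus token-by-token generation."""
    speeds = BENCHMARKS[fmt]
    prompt_time = prompt_tokens / speeds["processing"]
    generation_time = output_tokens / speeds["generation"]
    return prompt_time + generation_time


if __name__ == "__main__":
    # Hypothetical workload: a 512-token prompt and a 256-token reply.
    for fmt in BENCHMARKS:
        seconds = estimate_latency_seconds(fmt, prompt_tokens=512, output_tokens=256)
        print(f"{fmt}: ~{seconds:.1f} s")
```

Under this simple model, generation time dominates the total for a workload like this one, which is why the quantized formats finish noticeably sooner despite their slightly lower processing speed.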

Analogy time!

Imagine your LLM is like a chef preparing a delicious meal. Processing is akin to chopping vegetables, sautéing ingredients, and preparing the base of the dish. This is where the M1 Max excels - it's like having a top-of-the-line food processor that quickly tackles the initial tasks.

Generation is the final plating, where the chef carefully arranges the components to create a beautiful and tasty final product. While the M1 Max isn't a culinary master here, it still delivers good performance, so you don't have to wait too long to enjoy your LLM's creations.

Performance Analysis: Model and Device Comparison

Comparing the M1 Max's Llama2 7B performance with other popular devices and LLMs would provide further context.

Note: This section intentionally omits data for other models and devices, because this article focuses specifically on the performance of the Apple M1 Max with Llama2 7B.

Practical Recommendations: Use Cases and Workarounds

Use Cases for M1 Max and Llama2 7B

Given its strong performance, the Apple M1 Max paired with Llama2 7B is well-suited for a variety of applications:

- Text generation: Create engaging blog posts, articles, marketing copy, and more.

- Chatbots: Design interactive conversational experiences with a human-like touch.

- Code completion: Speed up your coding workflow by leveraging intelligent code suggestions.

- Translation: Translate text between multiple languages effortlessly.

Important to note: While the M1 Max handles the Llama2 7B model well, some tasks might require more powerful hardware. For example, very long prompts or large volumes of input take proportionally longer to process, which can slow things down. Similarly, for latency-sensitive tasks like real-time translation, you might consider devices with more powerful GPUs.

Workarounds and Optimization Tips

If you encounter limitations with the M1 Max's performance, there are a few approaches to consider:

- Reduce model size: Use a quantized version of Llama2 7B (Q8_0 or Q4_0), which trades some accuracy for a smaller model and faster generation.

- Optimize your code: Use efficient libraries and methods designed for high-performance LLM execution, such as the llama.cpp library.

- Use a cloud service: If you need even more processing power, consider running the model on cloud compute such as Google Colab or AWS.

FAQ

What does "quantization" mean in the context of LLMs?

Think of quantization like simplifying a recipe. We take a complex LLM, which is like a long, intricate recipe, and reduce its size by using fewer ingredients, or bits, to represent the model's parameters. This results in a smaller, more lightweight recipe, making it cook faster (run quicker) on your device.
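As a rough illustration of the idea, the sketch below quantizes one block of weights to signed 8-bit integers that share a single scale factor, then restores them. This is a simplified example of block-wise symmetric quantization, not the actual implementation used by llama.cpp or any other library. The block size of 32 and the function names are assumptions made for this example.

```python
import random

BLOCK_SIZE = 32  # assumed block length, for illustration only


def quantize_block(values):
    """Map floats to integers in [-127, 127] plus one shared scale."""
    max_abs = max(abs(v) for v in values)
    # Guard against an all-zero block, where any scale works.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quants = [round(v / scale) for v in values]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate floats from the integers and the shared scale."""
    return [q * scale for q in quants]


if __name__ == "__main__":
    random.seed(0)
    weights = [random.uniform(-1, 1) for _ in range(BLOCK_SIZE)]
    scale, quants = quantize_block(weights)
    restored = dequantize_block(scale, quants)
    worst_error = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"scale={scale:.6f}, worst absolute error={worst_error:.6f}")
```

Each weight now takes 8 bits instead of 16, at the cost of a small rounding error that is bounded by half the scale. That is the accuracy trade-off in miniature.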

Is the Apple M1 Max the best choice for working locally with LLMs?

It depends on your specific LLM and workload. The M1 Max is a great choice for the Llama2 7B, especially for general text generation tasks. However, if you're working with larger models or require extreme performance for specific applications, you might consider a more powerful GPU setup.

Can I run larger LLMs like Llama2 70B on an M1 Max?

The M1 Max might struggle with larger LLMs like Llama2 70B, which demand considerably more memory and processing power. Consider a cloud service or a GPU-powered desktop computer for running these larger models.
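A back-of-the-envelope estimate helps show why model size matters here. The sketch below multiplies a nominal parameter count by an assumed number of bits per weight. The 8.5 and 4.5 figures assume each block of 32 quantized weights also stores one 16-bit scale, and the 7e9 and 70e9 parameter counts are round approximations taken from the model names. The estimate covers weights only and ignores the context cache and runtime overhead, so treat the results as lower bounds rather than measurements.

```python
# Assumed storage cost per weight, in bits (see the assumptions above).
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def weight_memory_gb(num_parameters, fmt):
    """Approximate memory for the weights alone, in gigabytes (10^9 bytes)."""
    total_bits = num_parameters * BITS_PER_WEIGHT[fmt]
    return total_bits / 8 / 1e9


if __name__ == "__main__":
    # Nominal parameter counts; the real models differ slightly.
    for name, params in [("Llama2 7B", 7e9), ("Llama2 70B", 70e9)]:
        for fmt in BITS_PER_WEIGHT:
            print(f"{name} {fmt}: ~{weight_memory_gb(params, fmt):.1f} GB")
```

Comparing these figures with the unified memory available in a given M1 Max configuration gives a first-order sense of which model and format combinations can fit at all.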

Keywords

Apple M1 Max, Llama2 7B, LLM, Token Generation Speed, Quantization, Processing Speed, Generation Speed, GPU, Performance, Use Cases, Workarounds, Cloud Services, Text Generation, Chatbots, Code Completion, Translation