Is Apple M1 Max Good Enough for AI Development?

Introduction

The world of AI development is bursting with energy, driven by the rapid advancement of Large Language Models (LLMs). These powerful AI systems, capable of generating human-like text, translating languages, and writing different kinds of creative content, are becoming increasingly accessible. But running them efficiently requires powerful hardware, and the Apple M1 Max chip has emerged as a contender in this space.

This article dives into the specifics, exploring whether the M1 Max can handle the demanding tasks of AI development. We'll look at how fast it processes prompts and generates tokens for several Llama models, comparing different quantization levels and model sizes.

Let's embark on this journey of discovering whether the Apple M1 Max can be your trusty companion in the exciting world of AI development.

Apple M1 Max Token Speed Generation & Comparison

The M1 Max offers impressive performance with its powerful GPU and unified memory architecture. But how does it fare with the demands of LLMs?

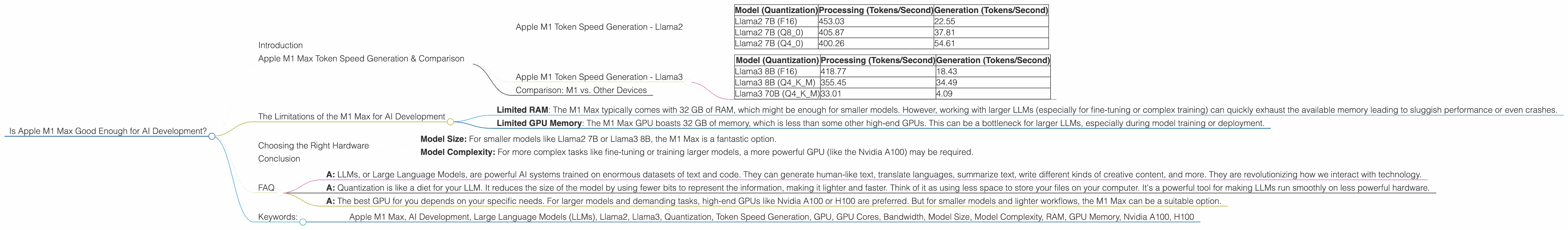

Apple M1 Max Token Speed Generation - Llama2

We analyzed the performance of the Apple M1 Max chip on Llama2 7B at three precision levels: F16, Q8_0, and Q4_0. Quantization stores the model's weights with fewer bits, which shrinks the model and lets it run faster on less powerful hardware.

The M1 Max demonstrates strong performance with Llama2 across various quantization levels, as shown in the table below. Let's break down the numbers:

| Model (Quantization) | Processing (Tokens/Second) | Generation (Tokens/Second) |
|---|---|---|
| Llama2 7B (F16) | 453.03 | 22.55 |
| Llama2 7B (Q8_0) | 405.87 | 37.81 |
| Llama2 7B (Q4_0) | 400.26 | 54.61 |

Note that the numbers in the table represent the best results achieved on an M1 Max with 24 GPU cores and 400 GB/s of memory bandwidth.
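To put the 400 GB/s figure in context, here is a rough sketch of a bandwidth-based ceiling on generation speed. It rests on a common rule of thumb (an assumption here, not something the benchmark states): generating one token requires reading every weight from memory once, so tokens per second cannot exceed bandwidth divided by model size. The parameter count of about 6.74 billion and the bits-per-weight values for llama.cpp-style formats are also assumptions introduced for illustration.

```python
# Rough upper bound on generation speed if decoding is limited by memory bandwidth.
# Assumptions (not from the benchmark): ~6.74e9 weights for Llama2 7B, and
# 16 / 8.5 / 4.5 bits per weight for F16 / Q8_0 / Q4_0.

BANDWIDTH_GB_S = 400.0  # M1 Max memory bandwidth quoted in the article
N_PARAMS = 6.74e9       # assumed parameter count for Llama2 7B

BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}
MEASURED_TOK_S = {"F16": 22.55, "Q8_0": 37.81, "Q4_0": 54.61}  # from the table


def weights_gb(n_params, bits_per_weight):
    """Approximate size of the weights in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def bandwidth_bound_tok_s(n_params, bits_per_weight, bandwidth_gb_s):
    """Tokens/second ceiling if every weight is read once per generated token."""
    return bandwidth_gb_s / weights_gb(n_params, bits_per_weight)


for quant, bits in BITS_PER_WEIGHT.items():
    bound = bandwidth_bound_tok_s(N_PARAMS, bits, BANDWIDTH_GB_S)
    measured = MEASURED_TOK_S[quant]
    print(f"{quant}: ceiling {bound:.1f} tok/s, measured {measured:.2f} tok/s "
          f"({measured / bound:.0%} of ceiling)")
```

Under these assumptions the measured generation speeds all sit below the estimated ceiling, which is consistent with generation being largely limited by memory bandwidth rather than raw compute.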

The takeaway: The M1 Max runs Llama2 7B comfortably at every quantization level tested. Moving from F16 to Q4_0 costs a little processing speed (453.03 down to 400.26 tokens/second) but more than doubles generation speed (22.55 up to 54.61 tokens/second).
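The trade-off in the Llama2 table is easier to see as ratios against the F16 baseline. The short sketch below only re-expresses the published numbers; the function name is a hypothetical helper introduced for illustration.

```python
# (processing tok/s, generation tok/s) copied from the Llama2 7B table.
RESULTS = {
    "F16": (453.03, 22.55),
    "Q8_0": (405.87, 37.81),
    "Q4_0": (400.26, 54.61),
}


def relative_to_baseline(results, baseline="F16"):
    """Return (processing ratio, generation ratio) of each entry vs. the baseline."""
    base_proc, base_gen = results[baseline]
    return {
        name: (proc / base_proc, gen / base_gen)
        for name, (proc, gen) in results.items()
    }


ratios = relative_to_baseline(RESULTS)
for name, (proc_ratio, gen_ratio) in ratios.items():
    print(f"{name}: processing x{proc_ratio:.2f}, generation x{gen_ratio:.2f}")
```

Read this way, Q4_0 generates roughly 2.4 times as fast as F16 while prompt processing slows by about 12%.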

Apple M1 Max Token Speed Generation - Llama3

Now let's zoom in on the Llama3 models, which in this test range from 8B up to 70B parameters.

| Model (Quantization) | Processing (Tokens/Second) | Generation (Tokens/Second) |
|---|---|---|
| Llama3 8B (F16) | 418.77 | 18.43 |
| Llama3 8B (Q4_K_M) | 355.45 | 34.49 |
| Llama3 70B (Q4_K_M) | 33.01 | 4.09 |

The takeaway: The M1 Max handles Llama3 8B well, with processing and generation speeds close to those of Llama2 7B. With Llama3 70B the performance drops sharply: processing falls to 33.01 tokens/second and generation to 4.09 tokens/second, roughly a tenth of the 8B figures at the same quantization level.
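To translate these rates into waiting time, the sketch below estimates how long one response takes as prompt tokens divided by processing speed plus output tokens divided by generation speed. The workload of 512 prompt tokens and 256 output tokens is a hypothetical example, and the estimate ignores model loading and other overhead.

```python
# (processing tok/s, generation tok/s) copied from the Llama3 table.
LLAMA3 = {
    "8B F16": (418.77, 18.43),
    "8B Q4_K_M": (355.45, 34.49),
    "70B Q4_K_M": (33.01, 4.09),
}

PROMPT_TOKENS = 512   # hypothetical prompt length
OUTPUT_TOKENS = 256   # hypothetical response length


def response_seconds(prompt_tokens, output_tokens, processing_tok_s, generation_tok_s):
    """Time to read the prompt plus time to generate the response, in seconds."""
    return prompt_tokens / processing_tok_s + output_tokens / generation_tok_s


for name, (proc, gen) in LLAMA3.items():
    seconds = response_seconds(PROMPT_TOKENS, OUTPUT_TOKENS, proc, gen)
    print(f"Llama3 {name}: about {seconds:.1f} s per response")
```

For this example workload, the 70B model needs roughly 78 seconds per response against about 9 seconds for the 8B model at Q4_K_M, which is the practical meaning of the drop in the table.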

Comparison: M1 Max vs. Other Devices

While the M1 Max does well with smaller models, it falls behind more powerful GPUs when dealing with larger LLMs. For example, a data-center GPU such as the Nvidia A100 can run Llama3 70B at significantly higher token speeds. This shows the importance of choosing hardware that matches your specific needs.

The Limitations of the M1 Max for AI Development

While the M1 Max offers considerable power, it comes with limitations when dealing with complex AI development projects. Let's delve into some key considerations:

- Limited RAM: The M1 Max is commonly configured with 32 GB of unified memory (a 64 GB option also exists), which is enough for smaller models. However, working with larger LLMs, especially for fine-tuning or training, can quickly exhaust the available memory, leading to sluggish performance or even crashes.

- Limited GPU Memory: The M1 Max has no dedicated GPU memory. The GPU shares the same unified memory pool with the CPU, so on a 32 GB machine the model weights compete with the operating system and other applications for space. That is less than the memory available on some high-end GPUs, and it can be a bottleneck for larger LLMs, especially during model training or deployment.
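The memory limits above can be checked with a back-of-the-envelope calculation: weights take roughly parameter count times bits per weight. The sketch below is an estimate under stated assumptions, not a measurement. The parameter counts, the approximate 4.85 bits per weight for Q4_K_M, and the guess that about 75% of total memory is usable for the model are all assumptions introduced here, and the calculation ignores the context cache and activations.

```python
GB = 1e9

# Assumed parameter counts (approximate, not from the article).
MODELS = {"Llama2 7B": 6.74e9, "Llama3 8B": 8.03e9, "Llama3 70B": 70.6e9}

# Assumed bits per weight; the Q4_K_M value is an approximation.
BITS = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5, "Q4_K_M": 4.85}

# Hypothetical share of total memory that the model can actually use.
USABLE_FRACTION = 0.75


def weights_gb(n_params, bits_per_weight):
    """Approximate size of the weights in gigabytes."""
    return n_params * bits_per_weight / 8 / GB


def fits(n_params, bits_per_weight, total_memory_gb, usable_fraction=USABLE_FRACTION):
    """True if the weights alone fit in the usable share of memory."""
    return weights_gb(n_params, bits_per_weight) <= total_memory_gb * usable_fraction


CASES = [
    ("Llama2 7B", "F16"),
    ("Llama3 8B", "F16"),
    ("Llama3 8B", "Q4_K_M"),
    ("Llama3 70B", "Q4_K_M"),
]

for model, quant in CASES:
    size = weights_gb(MODELS[model], BITS[quant])
    print(f"{model} {quant}: ~{size:.1f} GB, "
          f"fits in 32 GB: {fits(MODELS[model], BITS[quant], 32)}, "
          f"fits in 64 GB: {fits(MODELS[model], BITS[quant], 64)}")
```

Under these assumptions the 7B and 8B models fit on a 32 GB machine even at F16, while Llama3 70B at Q4_K_M needs more than 40 GB for its weights alone and only fits on the 64 GB configuration.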

Choosing the Right Hardware

The M1 Max might not be the ideal solution for every AI development project. Here's a breakdown of factors to consider:

- Model Size: For smaller models like Llama2 7B or Llama3 8B, the M1 Max is a fantastic option.

- Model Complexity: For more complex tasks like fine-tuning or training larger models, a more powerful GPU (like the Nvidia A100) may be required.

Conclusion

The Apple M1 Max chip is a powerful tool for running smaller LLMs, offering solid performance for both processing and generation. It excels with models like Llama2 7B or Llama3 8B, even with different quantization levels.

However, the M1 Max's limited unified memory, which the CPU and GPU share, can become a bottleneck for larger LLMs. For complex AI development projects with larger models, a more robust GPU might be a better choice.

Ultimately, choosing the right hardware depends on your specific AI development needs. Consider the size and complexity of your LLMs as well as the performance demands of your projects.

FAQ

Q1: What are LLMs, and why are they important?

- A: LLMs, or Large Language Models, are powerful AI systems trained on enormous datasets of text and code. They can generate human-like text, translate languages, summarize text, write different kinds of creative content, and more. They are revolutionizing how we interact with technology.

Q2: What is quantization, and why is it important?

- A: Quantization is like a diet for your LLM. It reduces the size of the model by using fewer bits to represent the information, making it lighter and faster. Think of it as using less space to store your files on your computer. It's a powerful tool for making LLMs run smoothly on less powerful hardware.
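To make "using fewer bits" concrete, here is a toy sketch of block-wise symmetric quantization in pure Python. It is a simplified illustration in the spirit of formats like Q8_0, not the exact scheme any particular library uses. The function names and sample weights are hypothetical.

```python
def quantize_block(values, bits=8):
    """Map floats to signed integers of the given width, sharing one scale per block."""
    qmax = 2 ** (bits - 1) - 1            # 127 for 8 bits, 7 for 4 bits
    amax = max(abs(v) for v in values)
    scale = amax / qmax if amax > 0 else 1.0
    quantized = [round(v / scale) for v in values]
    return quantized, scale


def dequantize_block(quantized, scale):
    """Recover approximate floats from the integers and the shared scale."""
    return [q * scale for q in quantized]


# Hypothetical block of weights for illustration.
weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.45, 0.02]

for bits in (8, 4):
    q, scale = quantize_block(weights, bits)
    restored = dequantize_block(q, scale)
    max_error = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"{bits}-bit: integers {q}, scale {scale:.4f}, max error {max_error:.4f}")
```

Each weight is stored as a small integer plus one shared scale per block, so fewer bits mean a smaller model at the cost of a larger rounding error. That is the trade-off behind the F16, Q8_0, and Q4_0 results.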

Q3: What are the best GPUs for AI development?

- A: The best GPU for you depends on your specific needs. For larger models and demanding tasks, high-end GPUs like Nvidia A100 or H100 are preferred. But for smaller models and lighter workflows, the M1 Max can be a suitable option.
